Run performance tests in a Kubernetes cluster with Kangal.

- Why Kangal?

- Key features

- How it works

- Architectural diagram

- Components

- To start using Kangal

- To start developing Kangal

- Support

In the Kangal project, the name stands for "Kubernetes and Go Automatic Loader". Originally, though, Kangal is a breed of shepherd dog. Let the smart and protective dog herd your load testing projects.

With Kangal, you can spin up an isolated environment in a Kubernetes cluster to run performance tests using different load generators.

- create an isolated Kubernetes environment with an opinionated load generator installation

- run load tests against any desired environment

- monitor load test metrics in Grafana

- save the report of a successful load test

- clean up after the test finishes

Kangal uses Kubernetes Custom Resources.

The LoadTest custom resource (CR) is the main working entity. The LoadTest custom resource definition (CRD) can be found in /kangal/crd.yaml.

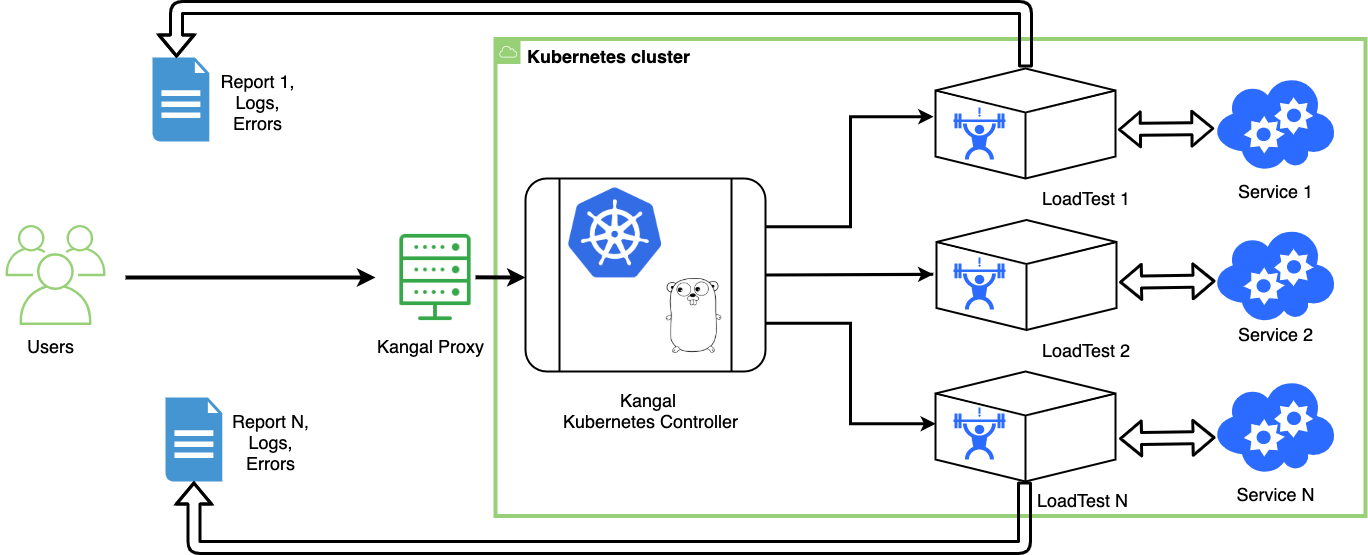

The Kangal application consists of two main parts:

- Proxy, which creates, deletes and checks load tests and reports via REST API requests

- Controller, which manages LoadTest CRs and other Kubernetes entities

Kangal also uses S3-compatible storage to save test reports.

The diagram below illustrates the workflow for Kangal in Kubernetes infrastructure.

The LoadTest custom resource is a new custom resource in the Kubernetes cluster that describes the requirements for a performance testing environment.

More information about custom resources is available in the official Kubernetes documentation.

The Kangal Proxy provides the following HTTP methods for the /load-test endpoint:

- POST - allows the user to create a new LoadTest

- GET - allows the user to see the current LoadTest status / logs / report / metrics

- DELETE - allows the user to stop and delete an existing LoadTest

The Kangal Proxy is documented using the OpenAPI Spec.

If you prefer to use Postman, you can also import the openapi.json file into Postman to create a new collection.
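The three methods above can also be exercised from a shell with `curl`. The following is a minimal sketch, not an official client: the helper names (`kangal_url`, `kangal_create`, `kangal_status`, `kangal_delete`), the `KANGAL_PROXY_URL` variable, and the assumption that a single test is addressed as `/load-test/<name>` are illustrative and are not taken from this README. The exact paths and the POST request body are defined in the OpenAPI spec.

```shell
#!/bin/sh
# Hypothetical helpers: names and path layout are assumptions, not Kangal's documented API.

# Base URL of the Kangal Proxy. The default matches WEB_HTTP_PORT=8080 from the local setup.
KANGAL_PROXY_URL="${KANGAL_PROXY_URL:-http://localhost:8080}"

# Print the endpoint URL: /load-test, optionally followed by a test name.
kangal_url() {
  if [ -n "${1:-}" ]; then
    printf '%s/load-test/%s\n' "${KANGAL_PROXY_URL%/}" "$1"
  else
    printf '%s/load-test\n' "${KANGAL_PROXY_URL%/}"
  fi
}

# POST: create a LoadTest. All arguments are passed to curl unchanged,
# so the caller supplies the request body described in the OpenAPI spec.
kangal_create() { curl -sS -X POST "$@" "$(kangal_url)"; }

# GET: fetch the state of an existing LoadTest (assumed path: /load-test/<name>).
kangal_status() { curl -sS -X GET "$(kangal_url "$1")"; }

# DELETE: stop and delete an existing LoadTest (assumed path: /load-test/<name>).
kangal_delete() { curl -sS -X DELETE "$(kangal_url "$1")"; }
```

After sourcing the file, `kangal_status my-test` would send a GET request for a test called `my-test` (a made-up name). Keeping the URL construction in one function means only `kangal_url` needs to change if the real paths differ from the assumed ones.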

Kangal Controller is the general name for several Kubernetes controllers that manage all aspects of the performance testing process:

- LoadTest controller

- Backend jobs controller

To run Kangal in your Kubernetes cluster, follow the docs.

Also check out our User Flow guide to start creating load tests with Kangal.

More detailed information can be found in the docs folder.

To start developing Kangal you need a local Kubernetes environment, e.g. minikube or Docker Desktop.

Note: Depending on the load generator type, load test environments created by Kangal may require a lot of resources. Make sure you have increased the resource limits of your local Kubernetes cluster. Read more about implemented load generators here.

1. Clone the repo locally

       git clone https://github.com/hellofresh/kangal.git

2. Create the required Kubernetes resource, the LoadTest CRD, in your cluster

       kubectl apply -f charts/kangal/crd.yaml

   or just use:

       make apply-crd

3. Download the dependencies

       go mod vendor

4. Build the Kangal binary

       make build

5. Set the environment variables

       export WEB_HTTP_PORT=8080                     # API port for Kangal Proxy
       export AWS_BUCKET_NAME=YOUR_BUCKET_NAME       # name of the bucket for saving reports
       export AWS_ENDPOINT_URL=YOUR_BUCKET_ENDPOINT  # storage connection parameter
       export AWS_DEFAULT_REGION=YOUR_AWS_REGION     # storage connection parameter

6. Run both the Kangal proxy and controller (each in its own terminal)

       ./kangal controller --kubeconfig=$KUBECONFIG
       ./kangal proxy --kubeconfig=$KUBECONFIG
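Before starting the proxy and the controller, it can help to confirm that the environment variables listed above are actually set. The following is a minimal sketch of such a pre-flight check; `check_kangal_env` is a hypothetical helper name and is not part of Kangal or its Makefile.

```shell
#!/bin/sh
# Hypothetical pre-flight check: the function name is illustrative, not part of Kangal.
# Reports every unset or empty variable from the list above on stderr and
# returns non-zero if at least one is missing.
check_kangal_env() {
  missing=""
  for var in WEB_HTTP_PORT AWS_BUCKET_NAME AWS_ENDPOINT_URL AWS_DEFAULT_REGION; do
    # Look up the variable whose name is stored in $var (POSIX-compatible indirection).
    eval "value=\${$var:-}"
    if [ -z "$value" ]; then
      missing="$missing $var"
    fi
  done
  if [ -n "$missing" ]; then
    echo "missing environment variables:$missing" >&2
    return 1
  fi
}
```

A possible use is to chain it with the start command, for example `check_kangal_env && ./kangal proxy --kubeconfig=$KUBECONFIG`, so the binary is only started once all four variables have values.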

To start contributing, please check CONTRIBUTING.

If you need support, start with the Troubleshooting guide, and work your way through the process that we've outlined.