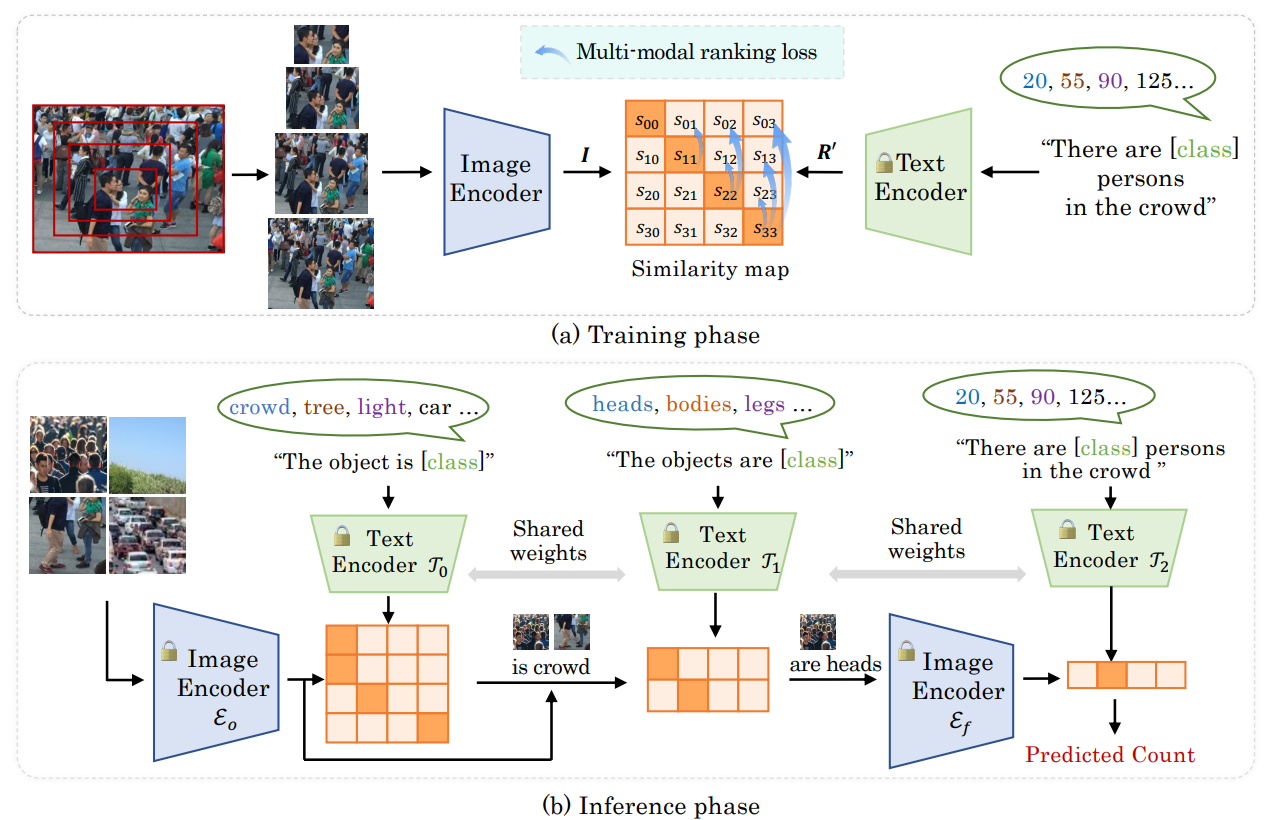

An official implementation of "CrowdCLIP: Unsupervised Crowd Counting via Vision-Language Model" (accepted by CVPR 2023).

Our experiments were run in the following environment: a single 3090 GPU, Python 3.8, PyTorch 1.10, CUDA 11.0.

conda create --name crowdclip python=3.8 -y

conda activate crowdclip

conda install pytorch==1.10.0 torchvision==0.11.0 torchaudio==0.10.0 cudatoolkit=11.3 -c pytorch -c conda-forge

git clone [this repo]

cd CrowdCLIP

pip install -r requirements.txt
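After installation, a quick check can confirm that the active interpreter matches the versions listed above. The following is a minimal sketch, not part of the repository: the function name `check_environment` and the report keys are illustrative, and it only uses `torch.__version__` and `torch.cuda.is_available()`.

```python
import sys

# Versions listed in this README; adjust if your setup differs.
EXPECTED_PYTHON = (3, 8)
EXPECTED_TORCH_PREFIX = "1.10"


def check_environment():
    """Return a dict comparing the current interpreter with the README's setup."""
    try:
        import torch  # imported lazily so the check still runs without PyTorch
    except ImportError:
        torch = None

    report = {
        "python": "{}.{}".format(*sys.version_info[:2]),
        "python_matches": sys.version_info[:2] == EXPECTED_PYTHON,
        "torch": None,
        "torch_matches": False,
        "cuda_available": False,
    }
    if torch is not None:
        report["torch"] = str(torch.__version__)
        report["torch_matches"] = report["torch"].startswith(EXPECTED_TORCH_PREFIX)
        report["cuda_available"] = bool(torch.cuda.is_available())
    return report


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```

Run it inside the `crowdclip` conda environment; a `False` in `cuda_available` usually points to a driver or `cudatoolkit` mismatch.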

- Download UCF-QNRF dataset from here

- Download ShanghaiTech dataset from [here]

- Download the datasets, then put them under the folder datasets. The folder structure should look like this:

CrowdCLIP
├──CLIP
├──configs
├──scripts
├──datasets
    ├──ShanghaiTech
        ├──part_A_final
        ├──part_B_final
    ├──UCF-QNRF
        ├──Train
        ├──Test
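Before preprocessing, it can help to confirm that the downloaded datasets sit where the tree above expects them. The sketch below is illustrative and not part of the repository. It assumes `part_A_final` and `part_B_final` are nested under `ShanghaiTech`, and `Train` and `Test` under `UCF-QNRF`; the helper name `missing_dataset_dirs` is hypothetical.

```python
from pathlib import Path

# Sub-folders expected under datasets/ (nesting assumed from the tree above).
EXPECTED_DIRS = [
    "ShanghaiTech/part_A_final",
    "ShanghaiTech/part_B_final",
    "UCF-QNRF/Train",
    "UCF-QNRF/Test",
]


def missing_dataset_dirs(datasets_root="datasets"):
    """Return the expected sub-folders that do not exist under datasets_root."""
    root = Path(datasets_root)
    return [rel for rel in EXPECTED_DIRS if not (root / rel).is_dir()]


if __name__ == "__main__":
    missing = missing_dataset_dirs()
    if missing:
        print("Missing folders:", ", ".join(missing))
    else:
        print("Dataset layout looks complete.")
```

Run it from the CrowdCLIP root so that the relative path `datasets` resolves correctly.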

- Generate the image patches

For UCF-QNRF dataset:

python configs/base_cfgs/data_cfg/datasets/qnrf/preprocess_qnrf.py

For ShanghaiTech dataset:

python configs/base_cfgs/data_cfg/datasets/sha/preprocess_sha.py

python configs/base_cfgs/data_cfg/datasets/shb/preprocess_shb.py

The folder structure should look like this:

CrowdCLIP
├──CLIP
├──configs
├──scripts
├──datasets
├──processed_datasets
    ├──SHA
        ├──test_data
        ├──train_data
    ├──SHB
        ├──test_data
        ├──train_data
    ├──UCF-QNRF
        ├──test_data
        ├──train_data
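As a sanity check on the preprocessing step, one can count the files produced for each split. This is a rough sketch, not part of the repository. It assumes `processed_datasets` sits at the repository root with the dataset and split folder names shown above, and it makes no assumption about the file types the preprocessing scripts write; `count_processed_files` is a hypothetical helper name.

```python
from pathlib import Path

# Folder names taken from the processed_datasets tree above.
DATASETS = ("SHA", "SHB", "UCF-QNRF")
SPLITS = ("train_data", "test_data")


def count_processed_files(processed_root="processed_datasets"):
    """Map 'DATASET/split' to the number of files below it (None if the folder is absent)."""
    root = Path(processed_root)
    counts = {}
    for dataset in DATASETS:
        for split in SPLITS:
            folder = root / dataset / split
            if folder.is_dir():
                # Count regular files at any depth, whatever their extension.
                counts[f"{dataset}/{split}"] = sum(
                    1 for path in folder.rglob("*") if path.is_file()
                )
            else:
                counts[f"{dataset}/{split}"] = None
    return counts


if __name__ == "__main__":
    for split, n_files in count_processed_files().items():
        print(f"{split}: {'missing' if n_files is None else n_files}")
```

A split reported as missing or with zero files suggests the corresponding preprocessing script did not finish or wrote to a different location.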

- Install CLIP

cd CrowdCLIP/CLIP

python setup.py develop

Download the pretrained models from Baidu-Disk (password: ale1) or OneDrive.

We are preparing the journal version. The code will be coming soon.

Download the pretrained models and put them in CrowdCLIP/save_model.

Example:

python scripts/run.py --config save_model/pretrained_qnrf/config.yaml --config configs/base_cfgs/data_cfg/datasets/qnrf/qnrf.yaml --test_only --gpu_id 0

python scripts/run.py --config save_model/pretrained_sha/config.yaml --config configs/base_cfgs/data_cfg/datasets/sha/sha.yaml --test_only --gpu_id 0

python scripts/run.py --config save_model/pretrained_shb/config.yaml --config configs/base_cfgs/data_cfg/datasets/shb/shb.yaml --test_only --gpu_id 0
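The three commands above differ only in the dataset name, so they can be generated in a loop. The sketch below is a convenience wrapper, not part of the repository. It assumes the `pretrained_<name>` folder naming shown in the examples and reuses only the flags that appear there; `build_test_command` and `run_all` are hypothetical helper names.

```python
import subprocess
import sys

# Short dataset names used in the example commands above.
DATASETS = ("qnrf", "sha", "shb")


def build_test_command(name, gpu_id=0):
    """Assemble the evaluation command for one dataset, mirroring the examples above."""
    return [
        sys.executable, "scripts/run.py",
        "--config", f"save_model/pretrained_{name}/config.yaml",
        "--config", f"configs/base_cfgs/data_cfg/datasets/{name}/{name}.yaml",
        "--test_only",
        "--gpu_id", str(gpu_id),
    ]


def run_all(gpu_id=0):
    """Run the three evaluations one after another; stops at the first failure."""
    for name in DATASETS:
        subprocess.run(build_test_command(name, gpu_id), check=True)


# Usage (from the CrowdCLIP root, inside the crowdclip environment):
# run_all(gpu_id=0)
```

Using `sys.executable` keeps the child processes in the same conda environment as the caller.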

Many thanks to the brilliant works (CLIP and OrdinalCLIP)!

If you find this codebase helpful, please consider citing:

@article{Liang2023CrowdCLIP,
  title={CrowdCLIP: Unsupervised Crowd Counting via Vision-Language Model},
  author={Dingkang Liang and Jiahao Xie and Zhikang Zou and Xiaoqing Ye and Wei Xu and Xiang Bai},
  journal={CVPR},
  year={2023}
}