A Chainer implementation of VQ-VAE (https://arxiv.org/abs/1711.00937).

Trained for about 63 hours on one 1080Ti (150000 iterations) on VCTK-Corpus. You can download the pretrained model from here.

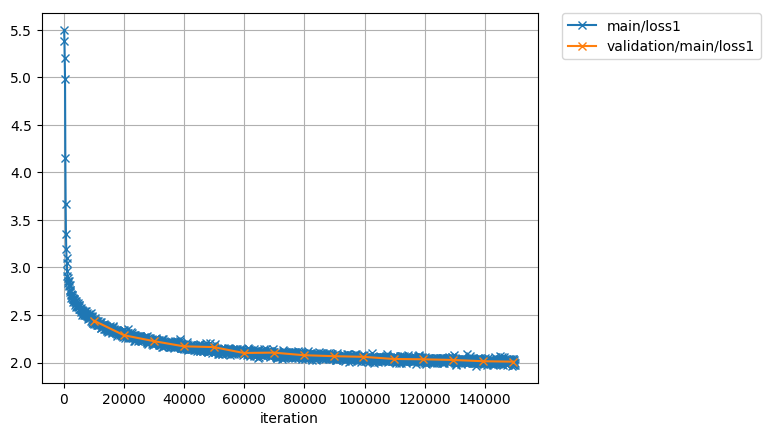

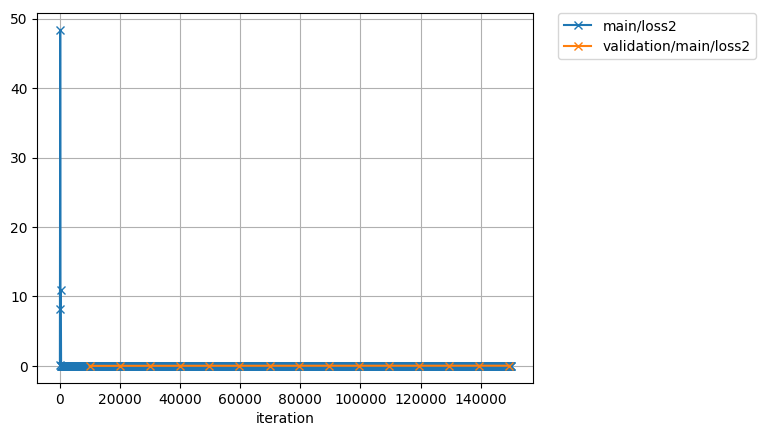

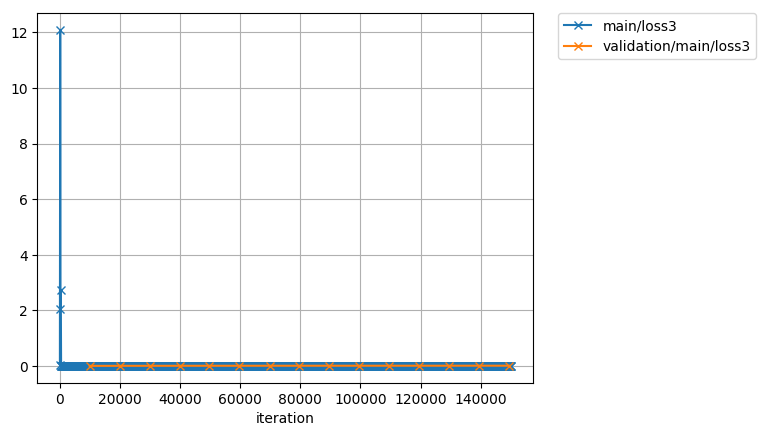

Losses:

Audios:

I trained and generated with

- python(3.5.2)

- chainer(4.0.0b3)

- librosa(0.5.1)

You can now also try it on Google Colaboratory. You don't need to install chainer/librosa locally or buy GPUs. Check this.

You can download VCTK-Corpus(en) from here. And you can download CMU-ARCTIC(en)/voice-statistics-corpus(ja) very easily via my repository.

- batchsize

- Batch size.

- lr

- Learning rate.

- ema_mu

- Rate of the exponential moving average. If this is greater than 1, the moving average is not applied.

- trigger

- How many times you update the model. You can set this parameter as `(<int>, 'iteration')` or `(<int>, 'epoch')`.

- evaluate_interval

- The interval at which the validation dataset is evaluated. You can set this parameter in the same way as `trigger`.

- snapshot_interval

- The interval at which a snapshot is saved. You can set this parameter in the same way as `trigger`.

- report_interval

- The interval at which the loss is written to the log. You can set this parameter in the same way as `trigger`.

- root

- The root directory of training dataset.

- dataset

- The directory structure of the training dataset. This parameter currently supports `VCTK`, `ARCTIC` and `vs`.

- split_seed

- A seed for splitting dataset into train and validation.

- sr

- Sampling rate. If it differs from that of the input file, the audio is resampled by librosa.

- res_type

- The resampling algorithm used in librosa.

- top_db

- The threshold in dB for trimming silence.

- input_dim

- The number of input channels of the waveform. If it is `1`, mu-law is not applied. Otherwise mu-law is applied.

- quantize

- The number of quantization levels.

- length

- How many samples are used for training.

- use_logistic

- Whether to use a mixture of logistics.

- d

- The parameter `d` in the paper.

- k

- The parameter `k` in the paper.

- n_loop

- If you want a network with dilations [1, 2, 4, 1, 2, 4], set `n_loop` to `2`.

- n_layer

- If you want a network with dilations [1, 2, 4, 1, 2, 4], set `n_layer` to `3`.

- filter_size

- The filter size of each dilated convolution.

- residual_channels

- The number of input/output channels of residual blocks.

- dilated_channels

- The number of output channels of the causal dilated convolution layers. The output is split into tanh and sigmoid halves, so the number of hidden units is half of this number.

- skip_channels

- The number of channels of the skip connections and the last projection layer.

- n_mixture

- The number of logistic distributions. It is used only when `use_logistic` is `True`.

- log_scale_min

- A lower bound used for numerical stability. It is used only when `use_logistic` is `True`.

- global_condition_dim

- The dimension of the speaker embedding vector.

- local_condition_dim

- The dimension of the local conditioning vectors.

- dropout_zero_rate

- The rate of `0` in dropout. If `0`, dropout is not applied.

- beta

- The parameter `beta` in the paper.

- use_ema

- If `True`, use the value of the exponential moving average.

- apply_dropout

- If `True`, apply dropout.
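
As a rough illustration of how a few of the parameters above are commonly interpreted, here is a minimal NumPy sketch. It is not taken from this repository's code: the function names `dilation_schedule`, `mu_law_quantize` and `vector_quantize` are hypothetical, and the formulas follow the standard WaveNet and VQ-VAE descriptions rather than the exact implementation here.

```python
import numpy as np


def dilation_schedule(n_loop, n_layer):
    """Dilations 1, 2, 4, ... (n_layer values), repeated n_loop times."""
    return [2 ** i for i in range(n_layer)] * n_loop


def mu_law_quantize(wave, quantize=256):
    """Map a waveform in [-1, 1] to integer classes in [0, quantize - 1].

    Assumption: standard mu-law companding with mu = quantize - 1.
    """
    mu = quantize - 1
    wave = np.clip(wave, -1.0, 1.0)
    # Compress the amplitude logarithmically; the result stays in [-1, 1].
    compressed = np.sign(wave) * np.log1p(mu * np.abs(wave)) / np.log1p(mu)
    # Shift to [0, mu] and round to the nearest integer class.
    return np.floor((compressed + 1) / 2 * mu + 0.5).astype(np.int32)


def vector_quantize(z, codebook):
    """Replace each row of z, shape (n, d), with its nearest codebook row.

    codebook has shape (k, d), where k and d play the roles of the
    parameters `k` and `d` above.
    """
    # Squared Euclidean distance between every input and every code: (n, k).
    dist = ((z[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
    indices = dist.argmin(axis=1)
    return codebook[indices], indices
```

With `n_loop=2` and `n_layer=3`, `dilation_schedule` returns `[1, 2, 4, 1, 2, 4]`, matching the example given for those two parameters. In the paper, `beta` weights the commitment term that pulls the encoder output toward the code selected by a lookup like `vector_quantize`.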

(without GPU)

python train.py

(with GPU #n)

python train.py -g n

If you want to use multiple GPUs, you can add their IDs as shown below.

python train.py -g 0 1 2

You can resume from a snapshot and restart training as shown below.

python train.py -r snapshot_iter_100000

The other arguments, -f and -p, control multiprocessing during preprocessing. -f is the number of batches to prefetch and -p is the number of processes.
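
For readers wondering how flags like these are typically parsed, the following is a minimal `argparse` sketch. It is an assumption for illustration, not the actual contents of `train.py`: the long option names, the default values and the `build_parser` helper are all hypothetical. Only the short flags `-g`, `-r`, `-f` and `-p` come from the text above.

```python
import argparse


def build_parser():
    parser = argparse.ArgumentParser(description='Hypothetical training CLI')
    # nargs='*' accepts zero or more GPU IDs, so `-g 0 1 2` yields [0, 1, 2].
    # An empty list stands for CPU-only training in this sketch.
    parser.add_argument('-g', '--gpus', type=int, nargs='*', default=[])
    # Path of a snapshot to resume from, e.g. snapshot_iter_100000.
    parser.add_argument('-r', '--resume', default=None)
    # Preprocessing options; the defaults here are placeholders.
    parser.add_argument('-f', '--prefetch', type=int, default=1)
    parser.add_argument('-p', '--process', type=int, default=1)
    return parser


if __name__ == '__main__':
    args = build_parser().parse_args()
    print(args)
```

Using `nargs='*'` is one simple way to let a single flag cover the CPU, single-GPU and multi-GPU cases.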

python generate.py -i <input file> -o <output file> -m <trained model> -s <speaker>

If you don't set -o, the default file name result.wav is used. If you don't set -s, the speaker is inferred from the input file's path, so the output uses the same speaker as the input.
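
One way the speaker could be derived from a file path is sketched below. This assumes a VCTK-style layout in which each speaker has a directory of their own (for example `wav48/p225/p225_001.wav`). The helper name `speaker_from_path` is hypothetical and the logic in `generate.py` may differ.

```python
from pathlib import PurePosixPath


def speaker_from_path(path):
    """Return the name of the directory that directly contains the file.

    Assumption: files are stored as <root>/<speaker>/<utterance>.wav.
    """
    return PurePosixPath(path).parent.name


if __name__ == '__main__':
    print(speaker_from_path('VCTK-Corpus/wav48/p225/p225_001.wav'))
```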

- upload generated sample

- using GPU for generating

- discretized mixture of logistics

- Parallel WaveNet