This project uses asdf to provision the required tooling, with a .tool-versions file pinning the exact versions.

asdf install

This installs kops, awscli and jq. jq is used to parse the responses from some of the aws commands, so your credentials file is assumed to be set up to output JSON:

cat ~/.aws/credentials

[your-profile]

output = json

region = us-west-2

aws_access_key_id = XXXXX

aws_secret_access_key = XXXXX

The script includes a set of variables that can be overridden on the command line. See below for the list and an example.

: "${AWS_PROFILE:=default}"

: "${KOPS_USER:=kops}"

...

And an example of overriding some variables:

AWS_PROFILE=galileo S3_BUCKET_PREFIX=galileo ./script/kops-aws.sh -a -c

kOps uses the Go AWS SDK to register security credentials. This AWS article describes how to configure settings for service clients.
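The overridable variables listed earlier rely on the shell's `: "${VAR:=default}"` idiom. As a minimal sketch of how that behaves (the wrapper function `load_defaults` is hypothetical and only there for illustration; the two variables are the ones shown in the list):

```shell
# ':' is the shell no-op. The ${VAR:=default} expansion assigns the default
# only when the variable is unset or empty, so a value passed in from the
# environment or the command line always wins.
load_defaults() {
  : "${AWS_PROFILE:=default}"
  : "${KOPS_USER:=kops}"
}

# Illustration: a value supplied by the caller survives, a missing one is filled in.
AWS_PROFILE=galileo
unset KOPS_USER
load_defaults
echo "AWS_PROFILE=${AWS_PROFILE} KOPS_USER=${KOPS_USER}"
# prints: AWS_PROFILE=galileo KOPS_USER=kops
```

This is why prefixing the script invocation with `AWS_PROFILE=...` is enough to override a default without editing the script.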

In order to use kOps to build clusters in AWS we will create a dedicated IAM user for kOps.

The kOps user will require the following IAM permissions to function properly:

AmazonEC2FullAccess

AmazonRoute53FullAccess

AmazonS3FullAccess

IAMFullAccess

AmazonVPCFullAccess

AmazonSQSFullAccess

AmazonEventBridgeFullAccess

These policies will be attached to the IAM group in the script.
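For reference, attaching these managed policies to a group with the AWS CLI looks roughly like the sketch below. It only prints the commands so they can be reviewed before anything is run. The group name `kops` and the helper name `print_attach_commands` are assumptions, since the script's actual values are not shown here.

```shell
# Hypothetical group name; override it to match your setup.
KOPS_GROUP="${KOPS_GROUP:-kops}"

# The AWS managed policies listed above, one per line.
KOPS_POLICIES="AmazonEC2FullAccess
AmazonRoute53FullAccess
AmazonS3FullAccess
IAMFullAccess
AmazonVPCFullAccess
AmazonSQSFullAccess
AmazonEventBridgeFullAccess"

# Print (rather than execute) one attach-group-policy command per policy.
print_attach_commands() {
  for policy in $KOPS_POLICIES; do
    echo "aws iam attach-group-policy --group-name ${KOPS_GROUP} --policy-arn arn:aws:iam::aws:policy/${policy}"
  done
}

print_attach_commands
```

Piping the output to `sh` would execute the commands against the active AWS profile, so review it first.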

kops-aws.sh - kOpS AWS Setup

Usage: kops-aws.sh -h

kops-aws.sh -a -c

-h show this help message

-v show Version

-a add the kOps user, group and bucket

-c create the cluster

-t create the terraform configuration

-k export admin kubecfg (from kops cluster to KUBECONFIG)

-o extract context from KUBECONFIG, write to file

-d delete the cluster

-r remove the kOps user, group and bucket
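The single-letter flags above map naturally onto the shell's `getopts` builtin. The following is a minimal sketch of that kind of dispatch, not the script's actual implementation; the function name `parse_actions` and the action names it emits are hypothetical.

```shell
# Translate option flags into an ordered, space-separated list of action names.
parse_actions() {
  OPTIND=1          # reset so the function can be called more than once
  actions=""
  while getopts "hvactkodr" opt "$@"; do
    case "$opt" in
      h) actions="$actions help" ;;
      v) actions="$actions version" ;;
      a) actions="$actions add" ;;
      c) actions="$actions create" ;;
      t) actions="$actions terraform" ;;
      k) actions="$actions kubecfg" ;;
      o) actions="$actions context" ;;
      d) actions="$actions delete" ;;
      r) actions="$actions remove" ;;
      *) return 1 ;;   # unknown flag
    esac
  done
  echo "${actions# }"  # strip the leading space
}

parse_actions -a -c   # prints "add create"
```

Because `getopts` processes flags in the order given, `-a -c` adds the user, group and bucket before creating the cluster, and combined forms such as `-ac` are handled the same way.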

The first time you run this project, you need to create the kOps IAM user and its group and define the state bucket. Run the following script, making sure that your bucket prefix is globally unique:

AWS_PROFILE=<your-profile> S3_BUCKET_PREFIX=<bucket-prefix-globally-unique> ./script/kops-aws.sh -a

For debugging purposes, the output from the commands is stored in the ./output folder, including the access key created for the IAM user.
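Since the bucket prefix ends up in an S3 bucket name, it can help to check it against the basic S3 naming rules before running the script. The sketch below does that locally. The suffix `kops-state-store` and the function names are assumptions made for illustration; how the script actually derives the bucket name from the prefix is not shown here.

```shell
# Hypothetical suffix appended to the prefix to form the bucket name.
STATE_SUFFIX="${STATE_SUFFIX:-kops-state-store}"

# Succeed when the name follows the basic S3 rules: 3-63 characters,
# lowercase letters, digits, dots and hyphens only, and a letter or digit
# at both ends.
valid_bucket_name() {
  printf '%s\n' "$1" | LC_ALL=C grep -Eq '^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$'
}

# Check the full name that a given prefix would produce.
check_prefix() {
  valid_bucket_name "$1-${STATE_SUFFIX}"
}

check_prefix galileo && echo "galileo: ok"
check_prefix Galileo || echo "Galileo: rejected (uppercase is not allowed)"
```

A local check like this only covers syntax. Whether the name is globally unique can only be confirmed against AWS.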

After the user, group and bucket are created, the cluster can be created.

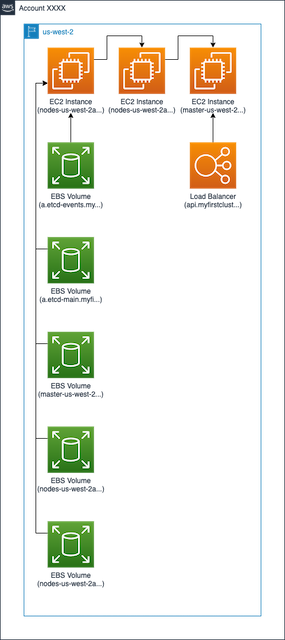

Note: In this example we will be deploying our cluster to the us-west-2 region.

AWS_PROFILE=<your-profile> S3_BUCKET_PREFIX=<bucket-prefix-globally-unique> ./scripts/kops-aws.sh -c

Let us deploy a simple Nginx workload and check that the website loads in a browser.

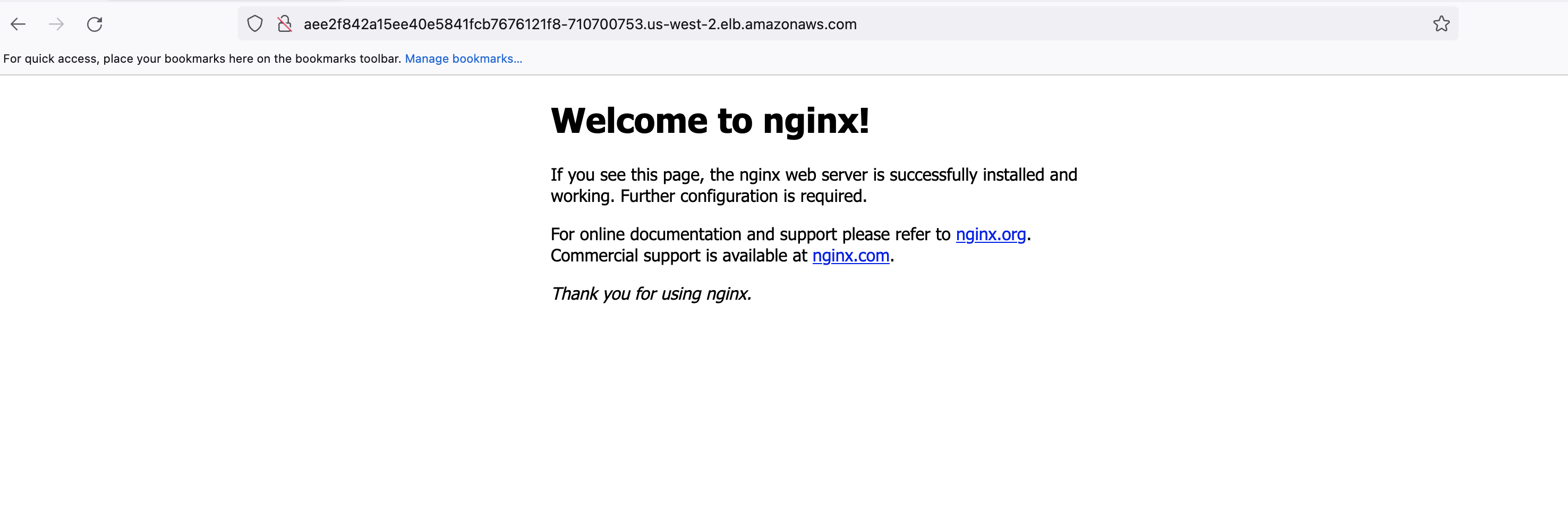

kubectl create deployment my-nginx --image=nginx --replicas=1 --port=80;

kubectl expose deployment my-nginx --port=80 --type=LoadBalancer;

Verify that the Nginx pods are running:

kubectl get pods

NAME READY STATUS RESTARTS AGE

my-nginx-7576957b7b-wm95d 1/1 Running 0 7m10s

Get the Load Balancer (LB) address. Note that it can take some time before the external IP is available:

kubectl get svc my-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

my-nginx LoadBalancer 100.71.164.21 aee2f842a15ee40e5841fcb7676121f8-710700753.us-west-2.elb.amazonaws.com 80:31828/TCP 7m33s

Here, aee2f842a15ee40e5841fcb7676121f8-710700753.us-west-2.elb.amazonaws.com is the DNS name (endpoint) of the LB. Copy and paste it into a browser. You should see the Nginx default page.

The Kubernetes cluster is working as expected.
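Because the external hostname can take a while to appear, the wait can also be scripted instead of re-running `kubectl get svc` by hand. The following is a minimal sketch under the assumption that the load balancer reports a hostname (as the ELB above does); the function name `wait_for_lb` and its default retry values are hypothetical.

```shell
# Poll the service until a load balancer hostname has been assigned.
# Usage: wait_for_lb <service> [attempts] [delay-seconds]
wait_for_lb() {
  svc="$1"
  attempts="${2:-30}"
  delay="${3:-10}"
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # The jsonpath expression yields an empty string until the hostname is set.
    host=$(kubectl get svc "$svc" \
      -o jsonpath='{.status.loadBalancer.ingress[0].hostname}' 2>/dev/null)
    if [ -n "$host" ]; then
      echo "$host"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "no external hostname for $svc after $attempts attempts" >&2
  return 1
}
```

For example, `curl -I "http://$(wait_for_lb my-nginx)"` would request the Nginx default page once the hostname is assigned, although DNS for a new load balancer may need a little longer to resolve.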

To clean up these resources:

kubectl delete svc my-nginx;

kubectl delete deploy my-nginx;

Note: In this example the cluster is deployed to the us-west-2 region.

To delete the cluster, run:

AWS_PROFILE=<your-profile> S3_BUCKET_PREFIX=<bucket-prefix-globally-unique> ./scripts/kops-aws.sh -d

When you no longer need kOps, you can delete the kOps IAM user, group and bucket by running the following script:

AWS_PROFILE=<your-profile> S3_BUCKET_PREFIX=<bucket-prefix-globally-unique> ./scripts/kops-aws.sh -r