This repository is the official implementation of "Joint Physical-Digital Facial Attack Detection Via Simulating Spoofing Clues".

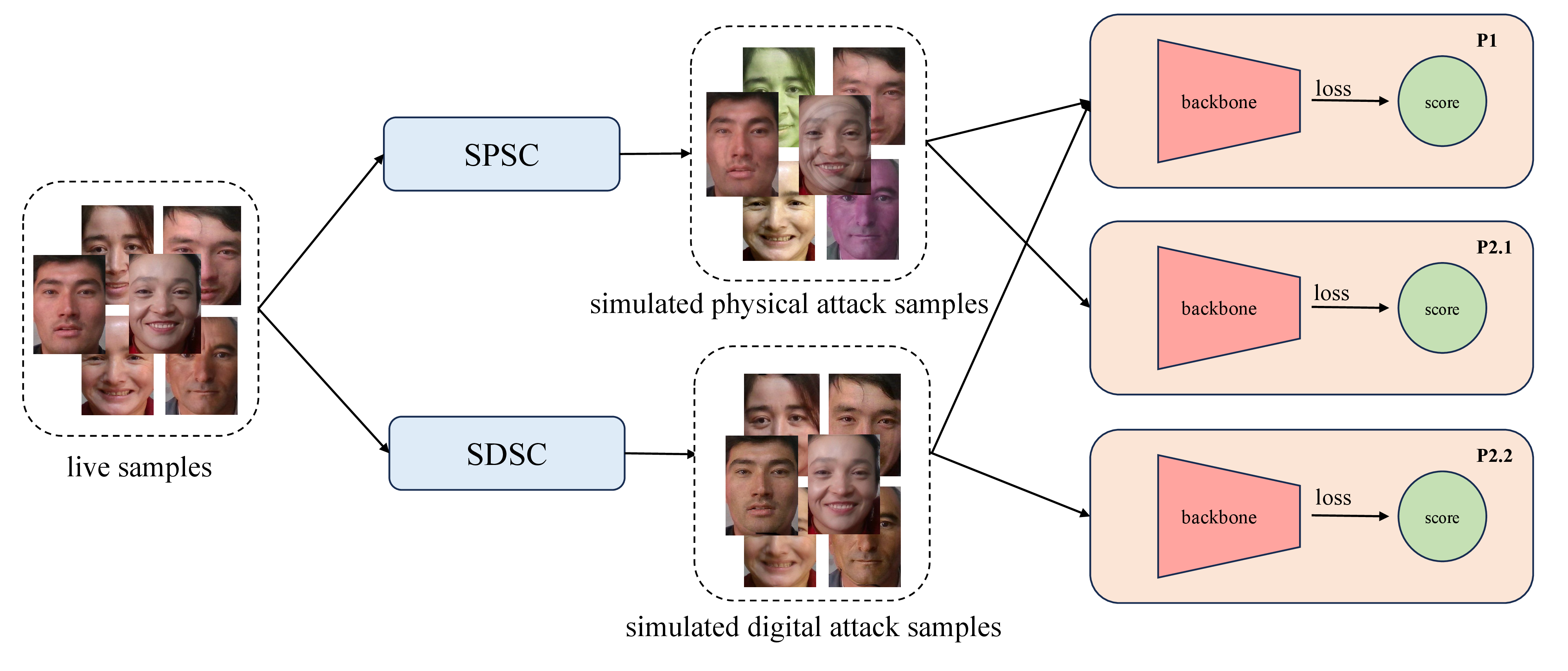

The overview pipeline of our method. We propose Simulated Physical Spoofing Clues augmentation (SPSC), which augments live samples into simulated physical attack samples for training within protocols 1 and 2.1. Concurrently, we present Simulated Digital Spoofing Clues augmentation (SDSC), converting live samples into simulated digital attack samples, tailored for training under protocols 1 and 2.2.

- employ ColorJitter to simulate the spoofing clues of print attacks

- use moire pattern augmentation to simulate the spoofing clues of replay attacks

- SPSC consists of ColorJitter and moire pattern augmentation

- introduce SDSC to simulate the spoofing clues of digital forgery

- tried GaussNoise and gradient-based noise to simulate the spoofing clues of adversarial attacks, but neither worked
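To make the two physical clues listed above more concrete, here is a minimal NumPy sketch that jitters brightness and contrast (print-like clue) and overlays a moire-like interference pattern (replay-like clue) on a live image. It is not the repository's implementation: the function names `color_jitter`, `add_moire`, and `simulate_physical_attack`, the parameter ranges, and the two-grating moire model are all assumptions chosen for brevity.

```python
import numpy as np


def color_jitter(image, rng, brightness=0.4, contrast=0.4):
    """Randomly scale brightness and contrast of an HxWx3 uint8 image."""
    img = image.astype(np.float32)
    img *= rng.uniform(1.0 - brightness, 1.0 + brightness)  # brightness factor
    mean = img.mean()
    factor = rng.uniform(1.0 - contrast, 1.0 + contrast)    # contrast factor
    img = (img - mean) * factor + mean
    return np.clip(img, 0, 255).astype(np.uint8)


def add_moire(image, rng, strength=0.15):
    """Overlay the beat pattern of two slightly rotated sinusoidal gratings."""
    h, w = image.shape[:2]
    ys, xs = np.mgrid[0:h, 0:w].astype(np.float32)
    freq = rng.uniform(0.3, 0.6)     # grating frequency in radians per pixel (assumed range)
    theta = rng.uniform(0.02, 0.10)  # small rotation between the two gratings (assumed range)
    grating_a = np.sin(freq * xs)
    grating_b = np.sin(freq * (xs * np.cos(theta) + ys * np.sin(theta)))
    pattern = grating_a * grating_b  # the product contains a low-frequency moire term
    out = image.astype(np.float32) * (1.0 + strength * pattern[..., None])
    return np.clip(out, 0, 255).astype(np.uint8)


def simulate_physical_attack(live_image, rng):
    """Turn a live sample into a simulated print or replay sample (hypothetical helper)."""
    if rng.random() < 0.5:
        return color_jitter(live_image, rng)  # print-like clue
    return add_moire(live_image, rng)         # replay-like clue
```

In a training loop, a helper like this would be applied to a fraction of the live samples, which are then relabeled as attacks; the fraction and the label convention are left open here because the text above does not state them.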

Please make a copy of the original data and place it in the following format:

- copy data and get txt files

cp -r xxx/phase1/* cvpr2024/data/

cp -r xxx/phase2/* cvpr2024/data/

cd cvpr2024/data

cat p1/dev.txt p1/test.txt > p1/dev_test.txt

cat p2.1/dev.txt p2.1/test.txt > p2.1/dev_test.txt

cat p2.2/dev.txt p2.2/test.txt > p2.2/dev_test.txt

- get train_dev_label.txt

python data_preprocess/merge_dev_train_data.py

Modify the base path in the script before running it. For p1, all dev data is merged into train; for p2.1 and p2.2, only the dev live data is merged into train.

- final data format:

base_path = "xxx/cvpr2024"

|----cvpr2024/data
    |----p1
        |----train
        |----dev
        |----test
        |----train_live_mask
        |----train_label.txt
        |----dev_label.txt
        |----train_dev_label.txt
        |----dev_test.txt
    |----p2.1
        |----train
        |----dev
        |----test
        |----train_live_mask
        |----train_label.txt
        |----dev_label.txt
        |----train_dev_label.txt
        |----dev_test.txt
    |----p2.2
        |----train
        |----dev
        |----test
        |----train_live_mask
        |----train_label.txt
        |----dev_label.txt
        |----train_dev_label.txt
        |----dev_test.txt

Install the dependencies:

pip install -r requirements.txt

If the image width and height are both >= 700, we detect the face in the image, expand the detected box by 20 pixels, and crop the face from the image.

If no face is detected, we center-crop a 500*500 box from the image instead; this logic is implemented in dataset.py, where the training data is loaded.
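The cropping rule described above can be sketched as a small pure-Python helper. This is an illustration under stated assumptions, not the code in detect_face.py or dataset.py: the name `crop_box`, leaving images smaller than 700 pixels untouched, and clamping the expanded box to the image bounds are all assumptions.

```python
def crop_box(width, height, face_bbox=None, min_side=700, margin=20, fallback=500):
    """Return (left, top, right, bottom) to crop, or None to keep the image as is."""
    if width < min_side or height < min_side:
        return None  # assumption: small images are left untouched
    if face_bbox is not None:
        x1, y1, x2, y2 = face_bbox
        # Expand the detected box by `margin` pixels and clamp it to the image.
        return (max(0, int(x1) - margin), max(0, int(y1) - margin),
                min(width, int(x2) + margin), min(height, int(y2) + margin))
    # No face found: fall back to a centered `fallback` x `fallback` square.
    left = (width - fallback) // 2
    top = (height - fallback) // 2
    return (left, top, left + fallback, top + fallback)
```

The returned tuple uses the (left, upper, right, lower) order that Pillow's `Image.crop` expects, so it could be passed to that method directly.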

Face detection relies on insightface, so install it with pip install insightface. By default, insightface downloads the face detection model automatically.

Please modify the base path and run detect_face.py. The cropped face overwrites the original image.

cd data_preprocess

python detect_face.py

The masks are generated with face parsing (see the face-parsing GitHub repository).

Download the face-parsing model: 79999_iter.pth

Please modify root_dir and model_path.

cd data_preprocess/face_parsing

bash generate_mask.sh

Download the pretrained model: resnet50

- Please train on a single A100 (80 GB) GPU. Modify only the dataset base path and leave all other training parameters unchanged.

- We fixed the random seed to ensure reproducible results. Modifying other training parameters will cause fluctuations in the final results.

- Training only takes 1 hour for each protocol.

- Inference only takes 1 minute for each protocol.
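For readers unfamiliar with seed fixing, the sketch below shows one common way to seed the random number generators a PyTorch training script typically touches. It is an assumption about how this could be done, not the repository's code: the helper name `seed_everything` and the default seed value are hypothetical, and the NumPy and PyTorch parts are skipped when those packages are not installed.

```python
import random


def seed_everything(seed=0):
    """Seed Python, NumPy, and PyTorch generators (hypothetical helper)."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed; nothing to seed
    try:
        import torch
        torch.manual_seed(seed)
        torch.cuda.manual_seed_all(seed)           # ignored when CUDA is unavailable
        torch.backends.cudnn.deterministic = True  # prefer deterministic cuDNN kernels
        torch.backends.cudnn.benchmark = False     # disable autotuning, which can vary between runs
    except ImportError:
        pass  # PyTorch not installed; nothing to seed
```

Even with fixed seeds, results can differ across GPU models and library versions, which is consistent with the advice above to keep the hardware and the training parameters unchanged.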

Train p1 protocol:

bash scripts/train_p1.sh

Test: select the 200th epoch model weight

bash scripts/test_p1.sh

Train p2.1 protocol:

bash scripts/train_p21.sh

Test: select the 200th epoch model weight

bash scripts/test_p21.sh

Train p2.2 protocol:

bash scripts/train_p22.sh

Test: select the 200th epoch model weight

bash scripts/test_p22.sh

| Protocol | APCER(%) | BPCER(%) | ACER(%) | model |
|---|---|---|---|---|
| P1 | 0.31 | 0.09 | 0.20 | p1_resnet50.pth |
| P2.1 | 2.55 | 0.09 | 1.32 | p21_resnet50.pth |
| P2.2 | 1.73 | 1.58 | 1.65 | p22_resnet50.pth |
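The ACER column is the mean of APCER and BPCER; for P1, (0.31 + 0.09) / 2 = 0.20. The sketch below computes the three metrics from hard predictions. It is a simplified illustration, not the challenge's evaluation script: the function name `acer_metrics` and the label convention (1 = live, 0 = attack) are assumptions, and all attack types are pooled into a single APCER.

```python
def acer_metrics(labels, predictions):
    """Return (APCER, BPCER, ACER) in percent; 1 = live, 0 = attack (assumed convention)."""
    attacks = [p for y, p in zip(labels, predictions) if y == 0]
    lives = [p for y, p in zip(labels, predictions) if y == 1]
    if not attacks or not lives:
        raise ValueError("need at least one attack and one live sample")
    apcer = 100.0 * sum(p == 1 for p in attacks) / len(attacks)  # attacks accepted as live
    bpcer = 100.0 * sum(p == 0 for p in lives) / len(lives)      # lives rejected as attacks
    return apcer, bpcer, (apcer + bpcer) / 2.0
```

For example, with four attack samples of which one is accepted and two live samples of which one is rejected, the function returns APCER 25.0, BPCER 50.0, and ACER 37.5.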