The Open Guide to Amazon Web Services

Credits ∙ Contributing guidelines

Table of Contents

Purpose

AWS in General

| Specific AWS Services | Basics | Tips | Gotchas |

|---|---|---|---|

| Security and IAM | 📗 | 📘 | 📙 |

| S3 | 📗 | 📘 | 📙 |

| EC2 | 📗 | 📘 | 📙 |

| AMIs | 📗 | 📘 | 📙 |

| Auto Scaling | 📗 | 📘 | 📙 |

| EBS | 📗 | 📘 | 📙 |

| EFS | 📗 | ||

| Load Balancers | 📗 | 📘 | 📙 |

| CLB (ELB) | 📗 | 📘 | 📙 |

| ALB | 📗 | 📘 | 📙 |

| Elastic IPs | 📗 | 📘 | 📙 |

| Glacier | 📗 | 📘 | 📙 |

| RDS | 📗 | 📘 | 📙 |

| RDS MySQL and MariaDB | 📗 | 📘 | 📙 |

| RDS Aurora | 📗 | 📘 | 📙 |

| RDS SQL Server | 📗 | 📘 | 📙 |

| DynamoDB | 📗 | 📘 | 📙 |

| ECS | 📗 | 📘 | |

| Lambda | 📗 | 📘 | 📙 |

| API Gateway | 📗 | 📙 | |

| Route 53 | 📗 | 📘 | |

| CloudFormation | 📗 | 📘 | 📙 |

| VPCs, Network Security, and Security Groups | 📗 | 📘 | 📙 |

| KMS | 📗 | 📘 | |

| CloudFront | 📗 | 📘 | 📙 |

| DirectConnect | 📗 | 📘 | |

| Redshift | 📗 | 📘 | 📙 |

| EMR | 📗 | 📘 | 📙 |

| Kinesis Streams | 📗 | 📘 | 📙 |

| Device Farm | 📗 | 📘 | 📙 |

| IoT | 📗 | 📘 | 📙 |

| SES | 📗 | 📘 | 📙 |

| Certificate Manager | 📗 |

Special Topics

Legal

Figures and Tables

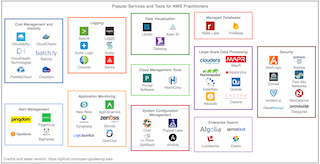

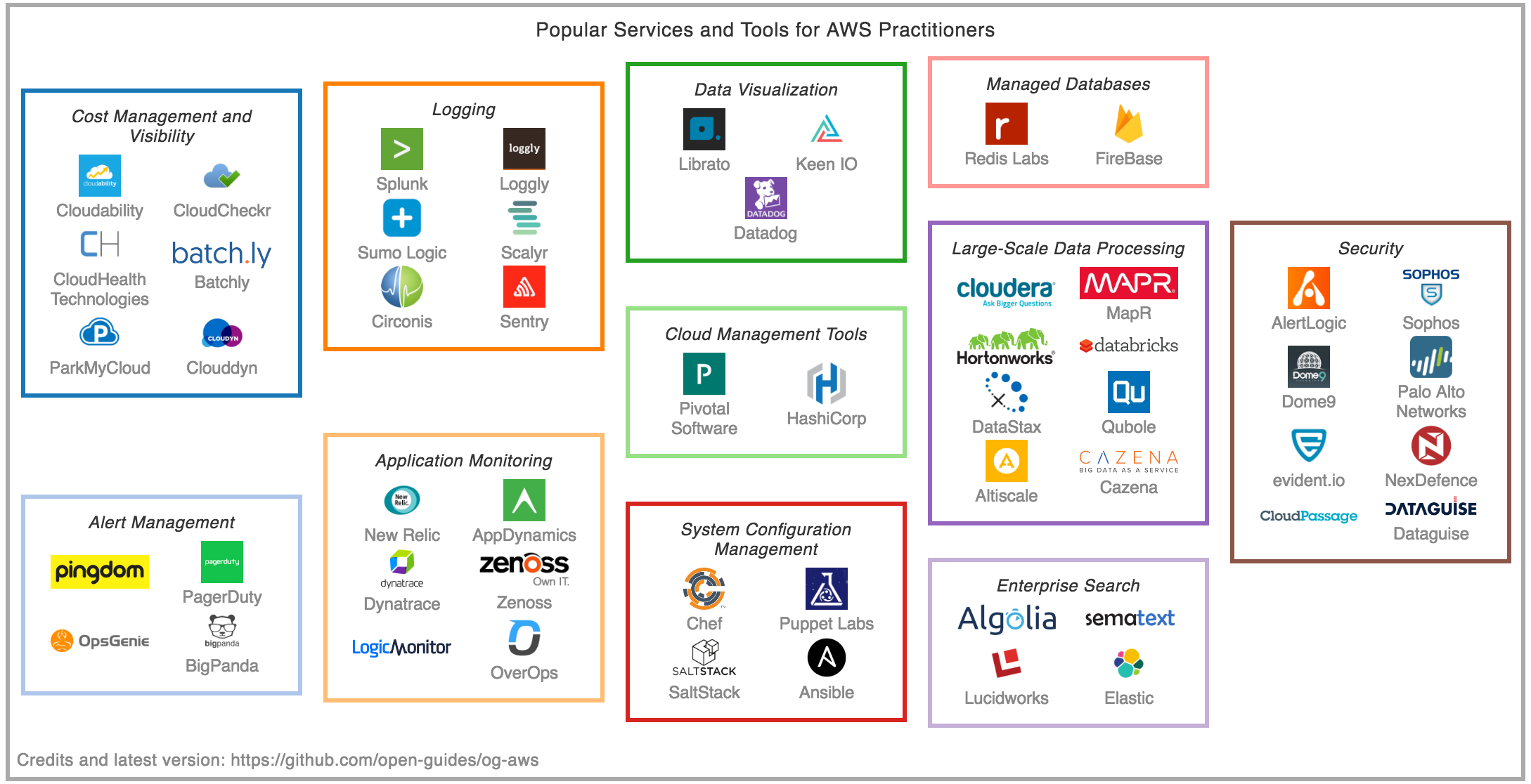

- Figure: Tools and Services Market Landscape: A selection of third-party companies/products

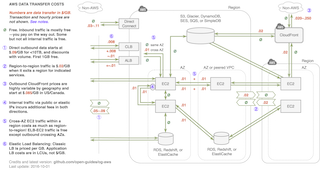

- Figure: AWS Data Transfer Costs: Visual overview of data transfer costs

- Table: Service Matrix: How AWS services compare to alternatives

- Table: AWS Product Maturity and Releases: AWS product releases

- Table: Storage Durability, Availability, and Price: A quantitative comparison

Why an Open Guide?

A lot of information on AWS is already written. Most people learn AWS by reading a blog or a “getting started guide” and referring to the standard AWS references. Nonetheless, trustworthy and practical information and recommendations aren’t easy to come by. AWS’s own documentation is a great but sprawling resource few have time to read fully, and it doesn’t include anything but official facts, so omits experiences of engineers. The information in blogs or Stack Overflow is also not consistently up to date.

This guide is by and for engineers who use AWS. It aims to be a useful, living reference that consolidates links, tips, gotchas, and best practices. It arose from discussion and editing over beers by several engineers who have used AWS extensively.

Before using the guide, please read the license and disclaimer.

Please help!

This is an early in-progress draft! It’s our first attempt at assembling this information, so is far from comprehensive still, and likely to have omissions or errors.

Please help by joining the Slack channel (we like to talk about AWS in general, even if you only have questions — discussion helps the community and guides improvements) and contributing to the guide. This guide is open to contributions, so unlike a blog, it can keep improving. Like any open source effort, we combine efforts but also review to ensure high quality.

Scope

- Currently, this guide covers selected “core” services, such as EC2, S3, Load Balancers, EBS, and IAM, and partial details and tips around other services. We expect it to expand.

- It is not a tutorial, but rather a collection of information you can read and return to. It is for both beginners and the experienced.

- The goal of this guide is to be:

- Brief: Keep it dense and use links

- Practical: Basic facts, concrete details, advice, gotchas, and other “folk knowledge”

- Current: We can keep updating it, and anyone can contribute improvements

- Thoughtful: The goal is to be helpful rather than present dry facts. Thoughtful opinion with rationale is welcome. Suggestions, notes, and opinions based on real experience can be extremely valuable. (We believe this is both possible with a guide of this format, unlike in some other venues.)

- This guide is not sponsored by AWS or AWS-affiliated vendors. It is written by and for engineers who use AWS.

Legend

- 📒 Marks standard/official AWS pages and docs

- 🔹 Important or often overlooked tip

- ❗ “Serious” gotcha (used where risks or time or resource costs are significant: critical security risks, mistakes with significant financial cost, or poor architectural choices that are fundamentally difficult to correct)

- 🔸 “Regular” gotcha, limitation, or quirk (used where where consequences are things not working, breaking, or not scaling gracefully)

- 📜 Undocumented feature (folklore)

- 🐥 Relatively new (and perhaps immature) services or features

- ⏱ Performance discussions

- ⛓ Lock-in: Products or decisions that are likely to tie you to AWS in a new or significant way — that is, later moving to a non-AWS alternative would be costly in terms of engineering effort

- 🚪 Alternative non-AWS options

- 💸 Cost issues, discussion, and gotchas

- 🕍 A mild warning attached to “full solution” or opinionated frameworks that may take significant time to understand and/or might not fit your needs exactly; the opposite of a point solution (the cathedral is a nod to Raymond’s metaphor)

- 📗📘📙 Colors indicate basics, tips, and gotchas, respectively.

- 🚧 Areas where correction or improvement are needed (possibly with link to an issue — do help!)

General Information

When to Use AWS

- AWS is the dominant public cloud computing provider.

- In general, “cloud computing” can refer to one of three types of cloud: “public,” “private,” and “hybrid.” AWS is a public cloud provider, since anyone can use it. Private clouds are within a single (usually large) organization. Many companies use a hybrid of private and public clouds.

- The core features of AWS are infrastructure-as-a-service (IaaS) — that is, virtual machines and supporting infrastructure. Other cloud service models include platform-as-a-service (PaaS), which typically are more fully managed services that deploy customers’ applications, or software-as-a-service (SaaS), which are cloud-based applications. AWS does offer a few products that fit into these other models, too.

- In business terms, with infrastructure-as-a-service you have a variable cost model — it is OpEx, not CapEx (though some pre-purchased contracts are still CapEx).

- AWS's annual revenue was $7.88 billion as of 2015 according to their SEC 10-K filing, or roughly 7% of Amazon.com’s total 2015 revenue.

- Main reasons to use AWS:

- If your company is building systems or products that may need to scale

- and you have technical know-how

- and you want the most flexible tools

- and you’re not significantly tied into different infrastructure already

- and you don’t have internal, regulatory, or compliance reasons you can’t use a public cloud-based solution

- and you’re not on a Microsoft-first tech stack

- and you don’t have a specific reason to use Google Cloud

- and you can afford, manage, or negotiate its somewhat higher costs

- ... then AWS is likely a good option for your company.

- Each of those reasons above might point to situations where other services are preferable. In practice, many, if not most, tech startups as well as a number of modern large companies can or already do benefit from using AWS. Many large enterprises are partly migrating internal infrastructure to Azure, Google Cloud, and AWS.

- Costs: Billing and cost management are such big topics that we have an entire section on this.

- 🔹EC2 vs. other services: Most users of AWS are most familiar with EC2, AWS’ flagship virtual server product, and possibly a few others like S3 and CLBs. But AWS products now extend far beyond basic IaaS, and often companies do not properly understand or appreciate all the many AWS services and how they can be applied, due to the sharply growing number of services, their novelty and complexity, branding confusion, and fear of ⛓lock-in to proprietary AWS technology. Although a bit daunting, it’s important for technical decision-makers in companies to understand the breadth of the AWS services and make informed decisions. (We hope this guide will help.)

- 🚪AWS vs. other cloud providers: While AWS is the dominant IaaS provider (31% market share in this 2016 estimate), there is significant competition and alternatives that are better suited to some companies. This Gartner report has a good overview of the major cloud players :

- Google Cloud. It arrived later to market than AWS, but has vast resources and is now used widely by many companies, including a few large ones. It is gaining market share. Not all AWS services have similar or analogous services in Google Cloud. And vice versa: In particular Google offers some more advanced machine learning-based services like the Vision, Speech, and Natural Language APIs. It’s not common to switch once you’re up and running, but it does happen: Spotify migrated from AWS to Google Cloud. There is more discussion on Quora about relative benefits.

- Microsoft Azure is the de facto choice for companies and teams that are focused on a Microsoft stack, and it has now placed significant emphasis on Linux as well

- In China, AWS’ footprint is relatively small. The market is dominated by Alibaba’s Aliyun.

- Companies at (very) large scale may want to reduce costs by managing their own infrastructure. For example, Dropbox migrated to their own infrastructure.

- Other cloud providers such as Digital Ocean offer similar services, sometimes with greater ease of use, more personalized support, or lower cost. However, none of these match the breadth of products, mind-share, and market domination AWS now enjoys.

- Traditional managed hosting providers such as Rackspace offer cloud solutions as well.

- 🚪AWS vs. PaaS: If your goal is just to put up a single service that does something relatively simple, and you’re trying to minimize time managing operations engineering, consider a platform-as-a-service such as Heroku. The AWS approach to PaaS, Elastic Beanstalk, is arguably more complex, especially for simple use cases.

- 🚪AWS vs. web hosting: If your main goal is to host a website or blog, and you don’t expect to be building an app or more complex service, you may wish consider one of the myriad of web hosting services.

- 🚪AWS vs. managed hosting: Traditionally, many companies pay managed hosting providers to maintain physical servers for them, then build and deploy their software on top of the rented hardware. This makes sense for businesses who want direct control over hardware, due to legacy, performance, or special compliance constraints, but is usually considered old fashioned or unnecessary by many developer-centric startups and younger tech companies.

- Complexity: AWS will let you build and scale systems to the size of the largest companies, but the complexity of the services when used at scale requires significant depth of knowledge and experience. Even very simple use cases often require more knowledge to do “right” in AWS than in a simpler environment like Heroku or Digital Ocean. (This guide may help!)

- Geographic locations: AWS has data centers in over a dozen geographic locations, known as regions, in Europe, East Asia, North and South America, and now Australia and India. It also has many more edge locations globally for reduced latency of services like CloudFront.

- See the current list of regions and edge locations, including upcoming ones.

- If your infrastructure needs to be in close physical proximity to another service for latency or throughput reasons (for example, latency to an ad exchange), viability of AWS may depend on the location.

- ⛓Lock-in: As you use AWS, it’s important to be aware when you are depending on AWS services that do not have equivalents elsewhere.

- Lock-in may be completely fine for your company, or a significant risk. It’s important from a business perspective to make this choice explicitly, and consider the cost, operational, business continuity, and competitive risks of being tied to AWS. AWS is such a dominant and reliable vendor, many companies are comfortable with using AWS to its full extent. Others can tell stories about the dangers of “cloud jail” when costs spiral.

- Generally, the more AWS services you use, the more lock-in you have to AWS — that is, the more engineering resources (time and money) it will take to change to other providers in the future.

- Basic services like virtual servers and standard databases are usually easy to migrate to other providers or on premises. Others like load balancers and IAM are specific to AWS but have close equivalents from other providers. The key thing to consider is whether engineers are architecting systems around specific AWS services that are not open source or relatively interchangeable. For example, Lambda, API Gateway, Kinesis, Redshift, and DynamoDB do not have substantially equivalent open source or commercial service equivalents, while EC2, RDS (MySQL or Postgres), EMR, and ElastiCache more or less do. (See more below, where these are noted with ⛓.)

- Combining AWS and other cloud providers: Many customers combine AWS with other non-AWS services. For example, legacy systems or secure data might be in a managed hosting provider, while other systems are AWS. Or a company might only use S3 with another provider doing everything else. However small startups or projects starting fresh will typically stick to AWS or Google Cloud only.

- Hybrid cloud: In larger enterprises, it is common to have hybrid deployments encompassing private cloud or on-premises servers and AWS — or other enterprise cloud providers like IBM/Bluemix, Microsoft/Azure, NetApp, or EMC.

- Major customers: Who uses AWS and Google Cloud?

- AWS’s list of customers includes large numbers of mainstream online properties and major brands, such as Netflix, Pinterest, Spotify (moving to Google Cloud), Airbnb, Expedia, Yelp, Zynga, Comcast, Nokia, and Bristol-Myers Squibb.

-

Azure's [list of customers](https://azure.microsoft.com/en-us/case-studies/) includes companies such as NBC Universal, 3M and Honeywell Inc.

-

- Google Cloud’s list of customers is large as well, and includes a few mainstream sites, such as Snapchat, Best Buy, Domino’s, and Sony Music.

- AWS’s list of customers includes large numbers of mainstream online properties and major brands, such as Netflix, Pinterest, Spotify (moving to Google Cloud), Airbnb, Expedia, Yelp, Zynga, Comcast, Nokia, and Bristol-Myers Squibb.

Which Services to Use

- AWS offers a lot of different services — about fifty at last count.

- Most customers use a few services heavily, a few services lightly, and the rest not at all. What services you’ll use depends on your use cases. Choices differ substantially from company to company.

- Immature and unpopular services: Just because AWS has a service that sounds promising, it doesn’t mean you should use it. Some services are very narrow in use case, not mature, are overly opinionated, or have limitations, so building your own solution may be better. We try to give a sense for this by breaking products into categories.

- Must-know infrastructure: Most typical small to medium-size users will focus on the following services first. If you manage use of AWS systems, you likely need to know at least a little about all of these. (Even if you don’t use them, you should learn enough to make that choice intelligently.)

- IAM: User accounts and identities (you need to think about accounts early on!)

- EC2: Virtual servers and associated components, including:

- AMIs: Machine Images

- Load Balancers: CLBs and ALBs

- Autoscaling: Capacity scaling (adding and removing servers based on load)

- EBS: Network-attached disks

- Elastic IPs: Assigned IP addresses

- S3: Storage of files

- Route 53: DNS and domain registration

- VPC: Virtual networking, network security, and co-location; you automatically use

- CloudFront: CDN for hosting content

- CloudWatch: Alerts, paging, monitoring

- Managed services: Existing software solutions you could run on your own, but with managed deployment:

- RDS: Managed relational databases (managed MySQL, Postgres, and Amazon’s own Aurora database)

- EMR: Managed Hadoop

- Elasticsearch: Managed Elasticsearch

- ElastiCache: Managed Redis and Memcached

- Optional but important infrastructure: These are key and useful infrastructure components that are less widely known and used. You may have legitimate reasons to prefer alternatives, so evaluate with care to be sure they fit your needs:

- ⛓Lambda: Running small, fully managed tasks “serverless”

- CloudTrail: AWS API logging and audit (often neglected but important)

- ⛓🕍CloudFormation: Templatized configuration of collections of AWS resources

- 🕍Elastic Beanstalk: Fully managed (PaaS) deployment of packaged Java, .NET, PHP, Node.js, Python, Ruby, Go, and Docker applications

- 🐥⛓EFS: Network filesystem

- ⛓🕍ECS: Docker container/cluster management (note Docker can also be used directly, without ECS)

- ⛓ECR: Hosted private Docker registry

- 🐥Config: AWS configuration inventory, history, change notifications

- Special-purpose infrastructure: These services are focused on specific use cases and should be evaluated if they apply to your situation. Many also are proprietary architectures, so tend to tie you to AWS.

- ⛓DynamoDB: Low-latency NoSQL key-value store

- ⛓Glacier: Slow and cheap alternative to S3

- ⛓Kinesis: Streaming (distributed log) service

- ⛓SQS: Message queueing service

- ⛓Redshift: Data warehouse

- 🐥QuickSight: Business intelligence service

- SES: Send and receive e-mail for marketing or transactions

- ⛓API Gateway: Proxy, manage, and secure API calls

- ⛓IoT: Manage bidirectional communication over HTTP, WebSockets, and MQTT between AWS and clients (often but not necessarily “things” like appliances or sensors)

- ⛓WAF: Web firewall for CloudFront to deflect attacks

- ⛓KMS: Store and manage encryption keys securely

- Inspector: Security audit

- Trusted Advisor: Automated tips on reducing cost or making improvements

- 🐥Certificate Manager: Manage SSL/TLS certificates for AWS services

- Compound services: These are similarly specific, but are full-blown services that tackle complex problems and may tie you in. Usefulness depends on your requirements. If you have large or significant need, you may have these already managed by in-house systems and engineering teams.

- Machine Learning: Machine learning model training and classification

- ⛓🕍Data Pipeline: Managed ETL service

- ⛓🕍SWF: Managed state tracker for distributed polyglot job workflow

- ⛓🕍Lumberyard: 3D game engine

- Mobile/app development:

- SNS: Manage app push notifications and other end-user notifications

- ⛓🕍Cognito: User authentication via Facebook, Twitter, etc.

- Device Farm: Cloud-based device testing

- Mobile Analytics: Analytics solution for app usage

- 🕍Mobile Hub: Comprehensive, managed mobile app framework

- Enterprise services: These are relevant if you have significant corporate cloud-based or hybrid needs. Many smaller companies and startups use other solutions, like Google Apps or Box. Larger companies may also have their own non-AWS IT solutions.

- AppStream: Windows apps in the cloud, with access from many devices

- Workspaces: Windows desktop in the cloud, with access from many devices

- WorkDocs (formerly Zocalo): Enterprise document sharing

- WorkMail: Enterprise managed e-mail and calendaring service

- Directory Service: Microsoft Active Directory in the cloud

- Direct Connect: Dedicated network connection between office or data center and AWS

- Storage Gateway: Bridge between on-premises IT and cloud storage

- Service Catalog: IT service approval and compliance

- Probably-don't-need-to-know services: Bottom line, our informal polling indicates these services are just not broadly used — and often for good reasons:

- Snowball: If you want to ship petabytes of data into or out of Amazon using a physical appliance, read on.

- CodeCommit: Git service. You’re probably already using GitHub or your own solution (Stackshare has informal stats).

- 🕍CodePipeline: Continuous integration. You likely have another solution already.

- 🕍CodeDeploy: Deployment of code to EC2 servers. Again, you likely have another solution.

- 🕍OpsWorks: Management of your deployments using Chef. While Chef is popular, it seems few people use OpsWorks, since it involves going in on a whole different code deployment framework.

- AWS in Plain English offers more friendly explanation of what all the other different services are.

Tools and Services Market Landscape

There are now enough cloud and “big data” enterprise companies and products that few can keep up with the market landscape. (See the Big Data Evolving Landscape – 2016 for one attempt at this.)

We’ve assembled a landscape of a few of the services. This is far from complete, but tries to emphasize services that are popular with AWS practitioners — services that specifically help with AWS, or a complementary, or tools almost anyone using AWS must learn.

🚧 Suggestions to improve this figure? Please file an issue.

Common Concepts

- 📒 The AWS General Reference covers a bunch of common concepts that are relevant for multiple services.

- AWS allows deployments in regions, which are isolated geographic locations that help you reduce latency or offer additional redundancy (though typically availability zones are the first tool of choice for high availability).

- Each service has API endpoints for each region. Endpoints differ from service to service and not all services are available in each region, as listed in these tables.

- Amazon Resource Names (ARNs) are specially formatted identifiers for identifying resources. They start with 'arn:' and are used in many services, and in particular for IAM policies.

Service Matrix

Many services within AWS can at least be compared with Google Cloud offerings or with internal Google services. And often times you could assemble the same thing yourself with open source software. This table is an effort at listing these rough correspondences. (Remember that this table is imperfect as in almost every case there are subtle differences of features!)

| Service | AWS | Google Cloud | Google Internal | Microsoft Azure | Other providers | Open source “build your own” |

|---|---|---|---|---|---|---|

| Virtual server | EC2 | Compute Engine (GCE) | Virtual Machine | DigitalOcean | OpenStack | |

| PaaS | Elastic Beanstalk | App Engine | App Engine | Web Apps | Heroku, AppFog, OpenShift | Meteor, AppScale, Cloud Foundry, Convox |

| Serverless, microservices | Lambda, API Gateway | Functions | Function Apps | PubNub Blocks, Auth0 Webtask | Kong, Tyk | |

| Container, cluster manager | ECS | Container Engine, Kubernetes | Borg or Omega | Container Service | Kubernetes, Mesos, Aurora | |

| File storage | S3 | Cloud Storage | GFS | Storage Account | Swift, HDFS | |

| Block storage | EBS | Persistent Disk | Storage Account | NFS | ||

| SQL datastore | RDS | Cloud SQL | SQL Database | MySQL, PostgreSQL | ||

| Sharded RDBMS | F1, Spanner | Crate.io, CockroachDB | ||||

| Bigtable | Cloud Bigtable | Bigtable | HBase | |||

| Key-value store, column store | DynamoDB | Cloud Datastore | Megastore | Tables, DocumentDB | Cassandra, CouchDB, RethinkDB, Redis | |

| Memory cache | ElastiCache | App Engine Memcache | Redis Cache | Memcached, Redis | ||

| Search | CloudSearch, Elasticsearch (managed) | Search | Algolia, QBox | Elasticsearch, Solr | ||

| Data warehouse | Redshift | BigQuery | Dremel | SQL Data Warehouse | Oracle, IBM, SAP, HP, many others | Greenplum |

| Business intelligence | QuickSight | Data Studio 360 | Power BI | Tableau | ||

| Lock manager | DynamoDB (weak) | Chubby | Lease blobs in Storage Account | ZooKeeper, Etcd, Consul | ||

| Message broker | SQS, SNS, IoT | Pub/Sub | PubSub2 | Service Bus | RabbitMQ, Kafka, 0MQ | |

| Streaming, distributed log | Kinesis | Dataflow | PubSub2 | Event Hubs | Kafka Streams, Apex, Flink, Spark Streaming, Storm | |

| MapReduce | EMR | Dataproc | MapReduce | HDInsight, DataLake Analytics | Qubole | Hadoop |

| Monitoring | CloudWatch | Monitoring | Borgmon | Monitor | Prometheus(?) | |

| Metric management | Borgmon, TSDB | Application Insights | Graphite, InfluxDB, OpenTSDB, Grafana, Riemann, Prometheus | |||

| CDN | CloudFront | Cloud CDN | CDN | Apache Traffic Server | ||

| Load balancer | CLB/ALB | Load Balancing | GFE | Load Balancer, Application Gateway | nginx, HAProxy, Apache Traffic Server | |

| DNS | Route53 | DNS | DNS | bind | ||

| SES | Sendgrid, Mandrill, Postmark | |||||

| Git hosting | CodeCommit | Cloud Source Repositories | Visual Studio Team Services | GitHub, BitBucket | GitLab | |

| User authentication | Cognito | Azure Active Directory | oauth.io | |||

| Mobile app analytics | Mobile Analytics | Firebase Analytics | HockeyApp | Mixpanel | ||

| Mobile app testing | Device Farm | Firebase Test Lab | Xamarin Test Cloud | BrowserStack, Sauce Labs, Testdroid | ||

| Managing SSL/TLS certificates | Certificate Manager | Let's Encrypt, Comodo, Symantec, GlobalSign |

🚧 Please help fill this table in.

Selected resources with more detail on this chart:

- Google internal: MapReduce, Bigtable, Spanner, F1 vs Spanner, Bigtable vs Megastore

AWS Product Maturity and Releases

It’s important to know the maturity of each AWS product. Here is a mostly complete list of first release date, with links to the release notes. Most recently released services are first. Not all services are available in all regions; see this table.

| Service | Original release | Availability | CLI Support |

|---|---|---|---|

| 🐥Database Migration Service | 2016-03 | General | |

| 🐥Certificate Manager | 2016-01 | General | ✓ |

| 🐥IoT | 2015-08 | General | ✓ |

| 🐥WAF | 2015-10 | General | ✓ |

| 🐥Data Pipeline | 2015-10 | General | ✓ |

| 🐥Elasticsearch | 2015-10 | General | ✓ |

| 🐥Service Catalog | 2015-07 | General | ✓ |

| 🐥Device Farm | 2015-07 | General | ✓ |

| 🐥CodePipeline | 2015-07 | General | ✓ |

| 🐥CodeCommit | 2015-07 | General | ✓ |

| 🐥API Gateway | 2015-07 | General | ✓ |

| 🐥Config | 2015-06 | General | ✓ |

| 🐥EFS | 2015-05 | General | ✓ |

| 🐥Machine Learning | 2015-04 | General | ✓ |

| Lambda | 2014-11 | General | ✓ |

| ECS | 2014-11 | General | ✓ |

| KMS | 2014-11 | General | ✓ |

| CodeDeploy | 2014-11 | General | ✓ |

| Kinesis | 2013-12 | General | ✓ |

| CloudTrail | 2013-11 | General | ✓ |

| AppStream | 2013-11 | Preview | |

| CloudHSM | 2013-03 | General | ✓ |

| Silk | 2013-03 | Obsolete? | |

| OpsWorks | 2013-02 | General | ✓ |

| Redshift | 2013-02 | General | ✓ |

| Elastic Transcoder | 2013-01 | General | ✓ |

| Glacier | 2012-08 | General | ✓ |

| CloudSearch | 2012-04 | General | ✓ |

| SWF | 2012-02 | General | ✓ |

| Storage Gateway | 2012-01 | General | ✓ |

| DynamoDB | 2012-01 | General | ✓ |

| DirectConnect | 2011-08 | General | ✓ |

| ElastiCache | 2011-08 | General | ✓ |

| CloudFormation | 2011-04 | General | ✓ |

| SES | 2011-01 | General | ✓ |

| Elastic Beanstalk | 2010-12 | General | ✓ |

| Route 53 | 2010-10 | General | ✓ |

| IAM | 2010-09 | General | ✓ |

| SNS | 2010-04 | General | ✓ |

| EMR | 2010-04 | General | ✓ |

| RDS | 2009-12 | General | ✓ |

| VPC | 2009-08 | General | ✓ |

| Snowball | 2009-05 | General | ✓ |

| CloudWatch | 2009-05 | General | ✓ |

| CloudFront | 2008-11 | General | ✓ |

| Fulfillment Web Service | 2008-03 | Obsolete? | |

| SimpleDB | 2007-12 | ❗Nearly obsolete | ✓ |

| DevPay | 2007-12 | General | |

| Flexible Payments Service | 2007-08 | Retired | |

| EC2 | 2006-08 | General | ✓ |

| SQS | 2006-07 | General | ✓ |

| S3 | 2006-03 | General | ✓ |

| Alexa Top Sites | 2006-01 | General ❗HTTP-only | |

| Alexa Web Information Service | 2005-10 | General ❗HTTP-only |

Compliance

- Many applications have strict requirements around reliability, security, or data privacy. The AWS Compliance page has details about AWS’s certifications, which include PCI DSS Level 1, SOC 3, and ISO 9001.

- Security in the cloud is a complex topic, based on a shared responsibility model, where some elements of compliance are provided by AWS, and some are provided by your company.

- Several third-party vendors offer assistance with compliance, security, and auditing on AWS. If you have substantial needs in these areas, assistance is a good idea.

- From inside China, AWS services outside China are generally accessible, though there are at times breakages in service. There are also AWS services inside China.

Getting Help and Support

- Forums: For many problems, it’s worth searching or asking for help in the discussion forums to see if it’s a known issue.

- Premium support: AWS offers several levels of premium support.

- The first tier, called "Developer support" lets you file support tickets with 12 to 24 hour turnaround time, it starts at $29 but once your monthly spend reaches around $1000 it changes to a 3% surcharge on your bill.

- The higher-level support services are quite expensive — and increase your bill by up to 10%. Many large and effective companies never pay for this level of support. They are usually more helpful for midsize or larger companies needing rapid turnaround on deeper or more perplexing problems.

- Keep in mind, a flexible architecture can reduce need for support. You shouldn’t be relying on AWS to solve your problems often. For example, if you can easily re-provision a new server, it may not be urgent to solve a rare kernel-level issue unique to one EC2 instance. If your EBS volumes have recent snapshots, you may be able to restore a volume before support can rectify the issue with the old volume. If your services have an issue in one availability zone, you should in any case be able to rely on a redundant zone or migrate services to another zone.

- Larger customers also get access to AWS Enterprise support, with dedicated technical account managers (TAMs) and shorter response time SLAs.

- There is definitely some controversy about how useful the paid support is. The support staff don’t always seem to have the information and authority to solve the problems that are brought to their attention. Often your ability to have a problem solved may depend on your relationship with your account rep.

- Account manager: If you are at significant levels of spend (thousands of US dollars plus per month), you may be assigned (or may wish to ask for) a dedicated account manager.

- These are a great resource, even if you’re not paying for premium support. Build a good relationship with them and make use of them, for questions, problems, and guidance.

- Assign a single point of contact on your company’s side, to avoid confusing or overwhelming them.

- Contact: The main web contact point for AWS is here. Many technical requests can be made via these channels.

- Consulting and managed services: For more hands-on assistance, AWS has established relationships with many consulting partners and managed service partners. The big consultants won’t be cheap, but depending on your needs, may save you costs long term by helping you set up your architecture more effectively, or offering specific expertise, e.g. security. Managed service providers provide longer-term full-service management of cloud resources.

- AWS Professional Services: AWS provides consulting services alone or in combination with partners.

Restrictions and Other Notes

- 🔸Lots of resources in Amazon have limits on them. This is actually helpful, so you don’t incur large costs accidentally. You have to request that quotas be increased by opening support tickets. Some limits are easy to raise, and some are not. (Some of these are noted in sections below.)

- 🔸AWS terms of service are extensive. Much is expected boilerplate, but it does contain important notes and restrictions on each service. In particular, there are restrictions against using many AWS services in safety-critical systems. (Those appreciative of legal humor may wish to review clause 57.10.)

Related Topics

- OpenStack is a private cloud alternative to AWS used by large companies that wish to avoid public cloud offerings.

Learning and Career Development

Certifications

- Certifications: AWS offers certifications for IT professionals who want to demonstrate their knowledge.

- Certified Solutions Architect Associate

- Certified Developer Associate

- Certified SysOps Administrator Associate

- Certified Solutions Architect Professional

- Certified DevOps Engineer Professional

- Getting certified: If you’re interested in studying for and getting certifications, this practical overview tells you a lot of what you need to know. The official page is here and there is an FAQ.

- Do you need a certification? Especially in consulting companies or when working in key tech roles in large non-tech companies, certifications are important credentials. In others, including in many tech companies and startups, certifications are not common or considered necessary. (In fact, fairly or not, some Silicon Valley hiring managers and engineers see them as a “negative” signal on a resume.)

Managing AWS

Managing Infrastructure State and Change

A great challenge in using AWS to build complex systems (and with DevOps in general) is to manage infrastructure state effectively over time. In general, this boils down to three broad goals for the state of your infrastructure:

- Visibility: Do you know the state of your infrastructure (what services you are using, and exactly how)? Do you also know when you — and anyone on your team — make changes? Can you detect misconfigurations, problems, and incidents with your service?

- Automation: Can you reconfigure your infrastructure to reproduce past configurations or scale up existing ones without a lot of extra manual work, or requiring knowledge that’s only in someone’s head? Can you respond to incidents easily or automatically?

- Flexibility: Can you improve your configurations and scale up in new ways without significant effort? Can you add more complexity using the same tools? Do you share, review, and improve your configurations within your team?

Much of what we discuss below is really about how to improve the answers to these questions.

There are several approaches to deploying infrastructure with AWS, from the console to complex automation tools, to third-party services, all of which attempt to help achieve visibility, automation, and flexibility.

AWS Configuration Management

The first way most people experiment with AWS is via its web interface, the AWS Console. But using the Console is a highly manual process, and often works against automation or flexibility.

So if you’re not going to manage your AWS configurations manually, what should you do? Sadly, there are no simple, universal answers — each approach has pros and cons, and the approaches taken by different companies vary widely, and include directly using APIs (and building tooling on top yourself), using command-line tools, and using third-party tools and services.

AWS Console

- The AWS Console lets you control much (but not all) functionality of AWS via a web interface.

- Ideally, you should only use the AWS Console in a few specific situations:

- It’s great for read-only usage. If you’re trying to understand the state of your system, logging in and browsing it is very helpful.

- It is also reasonably workable for very small systems and teams (for example, one engineer setting up one server that doesn’t change often).

- It can be useful for operations you’re only going to do rarely, like less than once a month (for example, a one-time VPC setup you probably won’t revisit for a year). In this case using the console can be the simplest approach.

- ❗Think before you use the console: The AWS Console is convenient, but also the enemy of automation, reproducibility, and team communication. If you’re likely to be making the same change multiple times, avoid the console. Favor some sort of automation, or at least have a path toward automation, as discussed next. Not only does using the console preclude automation, which wastes time later, but it prevents documentation, clarity, and standardization around processes for yourself and your team.

Command-Line tools

- The aws command-line interface (CLI), used via the aws command, is the most basic way to save and automate AWS operations.

- Don’t underestimate its power. It also has the advantage of being well-maintained — it covers a large proportion of all AWS services, and is up to date.

- In general, whenever you can, prefer the command line to the AWS Console for performing operations.

- 🔹Even in absence of fancier tools, you can write simple Bash scripts that invoke aws with specific arguments, and check these into Git. This is a primitive but effective way to document operations you’ve performed. It improves automation, allows code review and sharing on a team, and gives others a starting point for future work.

- 🔹For use that is primarily interactive, and not scripted, consider instead using the aws-shell tool from AWS. It is easier to use, with auto-completion and a colorful UI, but still works on the command line. If you’re using SAWS, a previous version of the program, you should migrate to aws-shell.

APIs and SDKs

- SDKs for using AWS APIs are available in most major languages, with Go, iOS, Java, JavaScript, Python, Ruby, and PHP being most heavily used. AWS maintains a short list, but the awesome-aws list is the most comprehensive and current. Note support for C++ is still new.

- Retry logic: An important aspect to consider whenever using SDKs is error handling; under heavy use, a wide variety of failures, from programming errors to throttling to AWS-related outages or failures, can be expected to occur. SDKs typically implement exponential backoff to address this, but this may need to be understood and adjusted over time for some applications. For example, it is often helpful to alert on some error codes and not on others.

- ❗Don’t use APIs directly. Although AWS documentation includes lots of API details, it’s better to use the SDKs for your preferred language to access APIs. SDKs are more mature, robust, and well-maintained than something you’d write yourself.

Boto

- A good way to automate operations in a custom way is Boto3, also known as the Amazon SDK for Python. Boto2, the previous version of this library, has been in wide use for years, but now there is a newer version with official support from Amazon, so prefer Boto3 for new projects.

- If you find yourself writing a Bash script with more than one or two CLI commands, you’re probably doing it wrong. Stop, and consider writing a Boto script instead. This has the advantages that you can:

- Check return codes easily so success of each step depends on success of past steps.

- Grab interesting bits of data from responses, like instance ids or DNS names.

- Add useful environment information (for example, tag your instances with git revisions, or inject the latest build identifier into your initialization script).

General Visibility

- 🔹Tagging resources is an essential practice, especially as organizations grow, to better understand your resource usage. For example, through automation or convention, you can add tags:

- For the org or developer that “owns” that resource

- For the product that resource supports

- To label lifecycles, such as temporary resources or one that should be deprovisioned in the future

- To distinguish production-critical infrastructure (e.g. serving systems vs backend pipelines)

- To distinguish resources with special security or compliance requirements

Managing Servers and Applications

AWS vs Server Configuration

This guide is about AWS, not DevOps or server configuration management in general. But before getting into AWS in detail, it’s worth noting that in addition to the configuration management for your AWS resources, there is the long-standing problem of configuration management for servers themselves.

Philosophy

- Heroku’s Twelve-Factor App principles list some established general best practices for deploying applications.

- Pets vs cattle: Treat servers like cattle, not pets. That is, design systems so infrastructure is disposable. It should be minimally worrisome if a server is unexpectedly destroyed.

- The concept of immutable infrastructure is an extension of this idea.

- Minimize application state on EC2 instances. In general, instances should be able to be killed or die unexpectedly with minimal impact. State that is in your application should quickly move to RDS, S3, DynamoDB, EFS, or other data stores not on that instance. EBS is also an option, though it generally should not be the bootable volume, and EBS will require manual or automated re-mounting.

Server Configuration Management

- There is a large set of open source tools for managing configuration of server instances.

- These are generally not dependent on any particular cloud infrastructure, and work with any variety of Linux (or in many cases, a variety of operating systems).

- Leading configuration management tools are Puppet, Chef, Ansible, and Saltstack. These aren’t the focus of this guide, but we may mention them as they relate to AWS.

Containers and AWS

- Docker and the containerization trend are changing the way many servers and services are deployed in general.

- Containers are designed as a way to package up your application(s) and all of their dependencies in a known way. When you build a container, you are including every library or binary your application needs, outside of the kernel. A big advantage of this approach is that it’s easy to test and validate a container locally without worrying about some difference between your computer and the servers you deploy on.

- A consequence of this is that you need fewer AMIs and boot scripts; for most deployments, the only boot script you need is a template that fetches an exported docker image and runs it.

- Companies that are embracing microservice architectures will often turn to container-based deployments.

- AWS launched ECS as a service to manage clusters via Docker in late 2014, though many people still deploy Docker directly themselves. See the ECS section for more details.

Visibility

- Store and track instance metadata (such as instance id, availability zone, etc.) and deployment info (application build id, Git revision, etc.) in your logs or reports. The instance metadata service can help collect some of the AWS data you’ll need.

- Use log management services: Be sure to set up a way to view and manage logs externally from servers.

- Cloud-based services such as Sumo Logic, Splunk Cloud, Scalyr, and Loggly are the easiest to set up and use (and also the most expensive, which may be a factor depending on how much log data you have).

- Major open source alternatives include Elasticsearch, Logstash, and Kibana (the “Elastic Stack”) and Graylog.

- If you can afford it (you have little data or lots of money) and don’t have special needs, it makes sense to use hosted services whenever possible, since setting up your own scalable log processing systems is notoriously time consuming.

- Track and graph metrics: The AWS Console can show you simple graphs from CloudWatch, you typically will want to track and graph many kinds of metrics, from CloudWatch and your applications. Collect and export helpful metrics everywhere you can (and as long as volume is manageable enough you can afford it).

- Services like Librato, KeenIO, and Datadog have fancier features or better user interfaces that can save a lot of time. (A more detailed comparison is here.)

- Use Prometheus or Graphite as timeseries databases for your metrics (both are open source).

- Grafana can visualize with dashboards the stored metrics of both timeseries databases (also open source).

Tips for Managing Servers

- ❗Timezone settings on servers: unless absolutely necessary, always set the timezone on servers to UTC (see instructions for your distribution, such as Ubuntu, CentOS or Amazon Linux). Numerous distributed systems rely on time for synchronization and coordination and UTC provides the universal reference plane: it is not subject to daylight savings changes and adjustments in local time. It will also save you a lot of headache debugging elusive timezone issues and provide coherent timeline of events in your logging and audit systems.

- NTP and accurate time: If you are not using Amazon Linux (which comes preconfigured), you should confirm your servers configure NTP correctly, to avoid insidious time drift (which can then cause all sorts of issues, from breaking API calls to misleading logs). This should be part of your automatic configuration for every server. If time has already drifted substantially (generally >1000 seconds), remember NTP won’t shift it back, so you may need to remediate manually (for example, like this on Ubuntu).

- Testing immutable infrastructure: If you want to be proactive about testing your service’s ability to cope with instance termination or failure, it can be helpful to introduce random instance termination during business hours, which will expose any such issues at a time when engineers are available to identify and fix them. Netflix’s Simian Army (specifically, Chaos Monkey) is a popular tool for this. Alternatively, chaos-lambda by the BBC is a lightweight option which runs on AWS Lambda.

Security and IAM

We cover security basics first, since configuring user accounts is something you usually have to do early on when setting up your system.

Security and IAM Basics

- 📒 IAM Homepage ∙ User guide ∙ FAQ

- The AWS Security Blog is one of the best sources of news and information on AWS security.

- IAM is the service you use to manage accounts and permissioning for AWS.

- Managing security and access control with AWS is critical, so every AWS administrator needs to use and understand IAM, at least at a basic level.

- IAM identities include users (people or services that are using AWS), groups (containers for sets of users and their permissions), and roles (containers for permissions assigned to AWS service instances). Permissions for these identities are governed by policies You can use AWS pre-defined policies or custom policies that you create.

- IAM manages various kinds of authentication, for both users and for software services that may need to authenticate with AWS, including:

- Passwords to log into the console. These are a username and password for real users.

- Access keys, which you may use with command-line tools. These are two strings, one the “id”, which is an upper-case alphabetic string of the form 'AXXXXXXXXXXXXXXXXXXX', and the other is the secret, which is a 40-character mixed-case base64-style string. These are often set up for services, not just users.

- 📜 Access keys that start with AKIA are normal keys. Access keys that start with ASIA are session/temporary keys from STS, and will require an additional "SessionToken" parameter to be sent along with the id and secret.

- Multi-factor authentication (MFA), which is the highly recommended practice of using a keychain fob or smartphone app as a second layer of protection for user authentication.

- IAM allows complex and fine-grained control of permissions, dividing users into groups, assigning permissions to roles, and so on. There is a policy language that can be used to customize security policies in a fine-grained way.

- 🔸The policy language has a complex and error-prone JSON syntax that’s quite confusing, so unless you are an expert, it is wise to base yours off trusted examples or AWS’ own pre-defined managed policies.

- At the beginning, IAM policy may be very simple, but for large systems, it will grow in complexity, and need to be managed with care.

- 🔹Make sure one person (perhaps with a backup) in your organization is formally assigned ownership of managing IAM policies, make sure every administrator works with that person to have changes reviewed. This goes a long way to avoiding accidental and serious misconfigurations.

- It is best to give each user or service the minimum privileges needed to perform their duties. This is the principle of least privilege, one of the foundations of good security. Organize all IAM users and groups according to levels of access they need.

- IAM has the permission hierarchy of:

- Explicit deny: The most restrictive policy wins.

- Explicit allow: Access permissions to any resource has to be explicitly given.

- Implicit deny: All permissions are implicitly denied by default.

- You can test policy permissions via the AWS IAM policy simulator tool tool. This is particularly useful if you write custom policies.

Security and IAM Tips

- 🔹Use IAM to create individual user accounts and use IAM accounts for all users from the beginning. This is slightly more work, but not that much.

- That way, you define different users, and groups with different levels of privilege (if you want, choose from Amazon’s default suggestions, of administrator, power user, etc.).

- This allows credential revocation, which is critical in some situations. If an employee leaves, or a key is compromised, you can revoke credentials with little effort.

- You can set up Active Directory federation to use organizational accounts in AWS.

- ❗Enable MFA on your account.

- You should always use MFA, and the sooner the better — enabling it when you already have many users is extra work.

- Unfortunately it can’t be enforced in software, so an administrative policy has to be established.

- Most users can use the Google Authenticator app (on iOS or Android) to support two-factor authentication. For the root account, consider a hardware fob.

- ❗Turn on CloudTrail: One of the first things you should do is enable CloudTrail. Even if you are not a security hawk, there is little reason not to do this from the beginning, so you have data on what has been happening in your AWS account should you need that information. You’ll likely also want to set up a log management service to search and access these logs.

- 🔹Use IAM roles for EC2: Rather than assign IAM users to applications like services and then sharing the sensitive credentials, define and assign roles to EC2 instances and have applications retrieve credentials from the instance metadata.

- Assign IAM roles by realm — for example, to development, staging, and production. If you’re setting up a role, it should be tied to a specific realm so you have clean separation. This prevents, for example, a development instance from connecting to a production database.

- Best practices: AWS’ list of best practices is worth reading in full up front.

- Multiple accounts: Decide on whether you want to use multiple AWS accounts and research how to organize access across them. Factors to consider:

- Number of users

- Importance of isolation

- Resource Limits

- Permission granularity

- Security

- API Limits

- Regulatory issues

- Workload

- Size of infrastructure

- Cost of multi-account “overhead”: Internal AWS service management tools may need to be custom built or adapted.

- 🔹It can help to use separate AWS accounts for independent parts of your infrastructure if you expect a high rate of AWS API calls, since AWS throttles calls at the AWS account level.

- Inspector is an automated security assessment service from AWS that helps identify common security risks. This allows validation that you adhere to certain security practices and may help with compliance.

- Trusted Advisor addresses a variety of best practices, but also offers some basic security checks around IAM usage, security group configurations, and MFA.

- Use KMS for managing keys: AWS offers KMS for securely managing encryption keys, which is usually a far better option than handling key security yourself. See below.

- AWS WAF is a web application firewall to help you protect your applications from common attack patterns.

- Security auditing:

- Security Monkey is an open source tool that is designed to assist with security audits.

- Scout2 is an open source tool that uses AWS APIs to assess an environment's security posture. Scout2 is stable and actively maintained.

- 🔹Export and audit security settings: You can audit security policies simply by exporting settings using AWS APIs, e.g. using a Boto script like SecConfig.py (from this 2013 talk) and then reviewing and monitoring changes manually or automatically.

Security and IAM Gotchas and Limitations

- ❗Don’t share user credentials: It’s remarkably common for first-time AWS users to create one account and one set of credentials (access key or password), and then use them for a while, sharing among engineers and others within a company. This is easy. But don’t do this. This is an insecure practice for many reasons, but in particular, if you do, you will have reduced ability to revoke credentials on a per-user or per-service basis (for example, if an employee leaves or a key is compromised), which can lead to serious complications.

- ❗Instance metadata throttling: The instance metadata service has rate limiting on API calls. If you deploy IAM roles widely (as you should!) and have lots of services, you may hit global account limits easily.

- One solution is to have code or scripts cache and reuse the credentials locally for a short period (say 2 minutes). For example, they can be put into the ~/.aws/credentials file but must also be refreshed automatically.

- But be careful not to cache credentials for too long, as they expire. (Note the other dynamic metadata also changes over time and should not be cached a long time, either.)

- 🔸Some IAM operations are slower than other API calls (many seconds), since AWS needs to propagate these globally across regions.

- ❗The uptime of IAM’s API has historically been lower than that of the instance metadata API. Be wary of incorporating a dependency on IAM’s API into critical paths or subsystems — for example, if you validate a user’s IAM group membership when they log into an instance and aren’t careful about precaching group membership or maintaining a back door, you might end up locking users out altogether when the API isn’t available.

- ❗Don't check in AWS credentials or secrets to a git repository. There are bots that scan GitHub looking for credentials. Use scripts or tools, such as git-secrets to prevent anyone on your team from checking in sensitive information to your git repositories.

S3

S3 Basics

- 📒 Homepage ∙ Developer guide ∙ FAQ ∙ Pricing

- S3 (Simple Storage Service) is AWS’ standard cloud storage service, offering file (opaque “blob”) storage of arbitrary numbers of files of almost any size, from 0 to 5 TB. (Prior to 2011 the maximum size was 5 GB; larger sizes are now well supported via multipart support.)

- Items, or objects, are placed into named buckets stored with names which are usually called keys. The main content is the value.

- Objects are created, deleted, or updated. Large objects can be streamed, but you cannot access or modify parts of a value; you need to update the whole object.

- Every object also has metadata, which includes arbitrary key-value pairs, and is used in a way similar to HTTP headers. Some metadata is system-defined, some are significant when serving HTTP content from buckets or CloudFront, and you can also define arbitrary metadata for your own use.

- S3 URIs: Although often bucket and key names are provided in APIs individually, it’s also common practice to write an S3 location in the form 's3://bucket-name/path/to/key' (where the key here is 'path/to/key'). (You’ll also see 's3n://' and 's3a://' prefixes in Hadoop systems.)

- S3 vs Glacier, EBS, and EFS: AWS offers many storage services, and several besides S3 offer file-type abstractions. Glacier is for cheaper and infrequently accessed archival storage. EBS, unlike S3, allows random access to file contents via a traditional filesystem, but can only be attached to one EC2 instance at a time. EFS is a network filesystem many instances can connect to, but at higher cost. See the comparison table.

S3 Tips

- For most practical purposes, you can consider S3 capacity unlimited, both in total size of files and number of objects.

- Bucket naming: Buckets are chosen from a global namespace (across all regions, even though S3 itself stores data in whichever S3 region you select), so you’ll find many bucket names are already taken. Creating a bucket means taking ownership of the name until you delete it. Bucket names have a few restrictions on them.

- Bucket names can be used as part of the hostname when accessing the bucket or its contents, like

<bucket_name>.s3-us-east-1.amazonaws.com, as long as the name is DNS compliant. - A common practice is to use the company name acronym or abbreviation to prefix (or suffix, if you prefer DNS-style hierarchy) all bucket names (but please, don’t use a check on this as a security measure — this is highly insecure and easily circumvented!).

- 🔸Bucket names with '.' (periods) in them can cause certificate mismatches when used with SSL. Use '-' instead, since this then conforms with both SSL expectations and is DNS compliant.

- Bucket names can be used as part of the hostname when accessing the bucket or its contents, like

- The number of objects in a bucket is essentially unlimited. Customers routinely have millions of objects.

- Versioning: S3 has optional versioning support, so that all versions of objects are preserved on a bucket. This is mostly useful if you want an archive of changes or the ability to back out mistakes (it has none of the features of full version control systems like Git).

- Durability: Durability of S3 is extremely high, since internally it keeps several replicas. If you don’t delete it by accident, you can count on S3 not losing your data. (AWS offers the seemingly improbable durability rate of 99.999999999%, but this is a mathematical calculation based on independent failure rates and levels of replication — not a true probability estimate. Either way, S3 has had a very good record of durability.) Note this is much higher durability than EBS! If durability is less important for your application, you can use S3 Reduced Redundancy Storage, which lowers the cost per GB, as well as the redundancy.

- 💸S3 pricing depends on storage, requests, and transfer.

- For transfer, putting data into AWS is free, but you’ll pay on the way out. Transfer from S3 to EC2 in the same region is free. Transfer to other regions or the Internet in general is not free.

- Deletes are free.

- S3 Reduced Redundancy and Infrequent Access: Most people use the Standard storage class in S3, but there are other storage classes with lower cost:

- Reduced Redundancy Storage (RRS) has lower durability (99.99%, so just four nines). That is, there’s a small chance you’ll lose data. For some data sets where data has value in a statistical way (losing say half a percent of your objects isn’t a big deal) this is a reasonable trade-off.

- Infrequent Access (IA) lets you get cheaper storage in exchange for more expensive access. This is great for archives like logs you already processed, but might want to look at later. To get an idea of the cost savings when using Infrequent Access (IA), you can use this S3 Infrequent Access Calculator.

- Glacier is a third alternative discussed as a separate product.

- See the comparison table.

- ⏱Performance: Maximizing S3 performance means improving overall throughput in terms of bandwidth and number of operations per second.

- S3 is highly scalable, so in principle you can get arbitrarily high throughput. (A good example of this is S3DistCp.)

- But usually you are constrained by the pipe between the source and S3 and/or the level of concurrency of operations.

- Throughput is of course highest from within AWS to S3, and between EC2 instances and S3 buckets that are in the same region.

- Bandwidth from EC2 depends on instance type. See the “Network Performance” column at ec2instances.info.

- Throughput of many objects is extremely high when data is accessed in a distributed way, from many EC2 instances. It’s possible to read or write objects from S3 from hundreds or thousands of instances at once.

- However, throughput is very limited when objects accessed sequentially from a single instance. Individual operations take many milliseconds, and bandwidth to and from instances is limited.

- Therefore, to perform large numbers of operations, it’s necessary to use multiple worker threads and connections on individual instances, and for larger jobs, multiple EC2 instances as well.

- Multi-part uploads: For large objects you want to take advantage of the multi-part uploading capabilities (starting with minimum chunk sizes of 5 MB).

- Large downloads: Also you can download chunks of a single large object in parallel by exploiting the HTTP GET range-header capability.

- 🔸List pagination: Listing contents happens at 1000 responses per request, so for buckets with many millions of objects listings will take time.

- ❗Key prefixes: In addition, latency on operations is highly dependent on prefix similarities among key names. If you have need for high volumes of operations, it is essential to consider naming schemes with more randomness early in the key name (first 6 or 8 characters) in order to avoid “hot spots”.

- We list this as a major gotcha since it’s often painful to do large-scale renames.

- 🔸Note that sadly, the advice about random key names goes against having a consistent layout with common prefixes to manage data lifecycles in an automated way.

- For data outside AWS, DirectConnect and S3 Transfer Acceleration can help. For S3 Transfer Acceleration, you pay about the equivalent of 1-2 months of storage for the transfer in either direction for using nearer endpoints.

- Command-line applications: There are a few ways to use S3 from the command line:

- Originally, s3cmd was the best tool for the job. It’s still used heavily by many.

- The regular aws command-line interface now supports S3 well, and is useful for most situations.

- s4cmd is a replacement, with greater emphasis on performance via multi-threading, which is helpful for large files and large sets of files, and also offers Unix-like globbing support.

- GUI applications: You may prefer a GUI, or wish to support GUI access for less technical users. Some options:

- The AWS Console does offer a graphical way to use S3. Use caution telling non-technical people to use it, however, since without tight permissions, it offers access to many other AWS features.

- Transmit is a good option on OS X for basic use cases. Uses legacy AWS2 signatures for authentication and is missing multipart upload support.

- Cyberduck is a good option on OS X and Windows with support for multipart uploads, ACLs, versioning, lifecycle configuration, storage classes and server side encryption (SSE-S3 and SSE-KMS).

- S3 and CloudFront: S3 is tightly integrated with the CloudFront CDN. See the CloudFront section for more information, as well as S3 transfer acceleration.

- Static website hosting:

- S3 has a static website hosting option that is simply a setting that enables configurable HTTP index and error pages and HTTP redirect support to public content in S3. It’s a simple way to host static assets or a fully static website.

- Consider using CloudFront in front of most or all assets:

- Like any CDN, CloudFront improves performance significantly.

- 🔸SSL is only supported on the built-in amazonaws.com domain for S3. S3 supports serving these sites through a custom domain, but not over SSL on a custom domain. However, CloudFront allows you to serve a custom domain over https. Amazon provides free SNI SSL/TLS certificates via Amazon Certificate Manager. SNI does not work on very outdated browsers/operating systems. Alternatively, you can provide your own certificate to use on CloudFront to support all browsers/operating systems.

- 🔸If you are including resources across domains, such as fonts inside CSS files, you may need to configure CORS for the bucket serving those resources.

- Since pretty much everything is moving to SSL nowadays, and you likely want control over the domain, you probably want to set up CloudFront with your own certificate in front of S3 (and to ignore the AWS example on this as it is non-SSL only).

- That said, if you do, you’ll need to think through invalidation or updates on CloudFront. You may wish to include versions or hashes in filenames so invalidation is not necessary.

- Permissions:

- 🔸It’s important to manage permissions sensibly on S3 if you have data sensitivities, as fixing this later can be a difficult task if you have a lot of assets and internal users.

- 🔹Do create new buckets if you have different data sensitivities, as this is much less error prone than complex permissions rules.

- 🔹If data is for administrators only, like log data, put it in a bucket that only administrators can access.

- 💸Limit individual user (or IAM role) access to S3 to the minimal required and catalog the “approved” locations. Otherwise, S3 tends to become the dumping ground where people put data to random locations that are not cleaned up for years, costing you big bucks.

- Data lifecycles:

- When managing data, the understanding the lifecycle of the data is as important as understanding the data itself. When putting data into a bucket, think about its lifecycle — its end of life, not just its beginning.

- 🔹In general, data with different expiration policies should be stored under separate prefixes at the top level. For example, some voluminous logs might need to be deleted automatically monthly, while other data is critical and should never be deleted. Having the former in a separate bucket or at least a separate folder is wise.

- 🔸Thinking about this up front will save you pain. It’s very hard to clean up large collections of files created by many engineers with varying lifecycles and no coherent organization.

- Alternatively you can set a lifecycle policy to archive old data to Glacier. Be careful with archiving large numbers of small objects to Glacier, since it may actually cost more.

- There is also a storage class called Infrequent Access that has the same durability as Standard S3, but is discounted per GB. It is suitable for objects that are infrequently accessed.

- Data consistency: Understanding data consistency is critical for any use of S3 where there are multiple producers and consumers of data.

- Creation of individual objects in S3 is atomic. You’ll never upload a file and have another client see only half the file.

- Also, if you create a new object, you’ll be able to read it instantly, which is called read-after-write consistency.

- Well, with the additional caveat that if you do a read on an object before it exists, then create it, you get eventual consistency (not read-after-write).

- If you overwrite or delete an object, you’re only guaranteed eventual consistency.

- 🔹Note that until 2015, 'us-standard' region had had a weaker eventual consistency model, and the other (newer) regions were read-after-write. This was finally corrected — but watch for many old blogs mentioning this!

- In practice, “eventual consistency” usually means within seconds, but expect rare cases of minutes or hours.

- S3 as a filesystem:

- In general S3’s APIs have inherent limitations that make S3 hard to use directly as a POSIX-style filesystem while still preserving S3’s own object format. For example, appending to a file requires rewriting, which cripples performance, and atomic rename of directories, mutual exclusion on opening files, and hardlinks are impossible.

- s3fs is a FUSE filesystem that goes ahead and tries anyway, but it has performance limitations and surprises for these reasons.

- Riofs (C) and Goofys (Go) are more recent efforts that attempt adopt a different data storage format to address those issues, and so are likely improvements on s3fs.

- S3QL (discussion) is a Python implementation that offers data de-duplication, snap-shotting, and encryption, but only one client at a time.

- ObjectiveFS (discussion) is a commercial solution that supports filesystem features and concurrent clients.

- If you are primarily using a VPC, consider setting up a VPC Endpoint for S3 in order to allow your VPC-hosted resources to easily access it without the need for extra network configuration or hops.

- Cross-region replication: S3 has a feature for replicating a bucket between one region and another. Note that S3 is already highly replicated within one region, so usually this isn’t necessary for durability, but it could be useful for compliance (geographically distributed data storage), lower latency, or as a strategy to reduce region-to-region bandwidth costs by mirroring heavily used data in a second region.

- IPv4 vs IPv6: For a long time S3 only supported IPv4 at the default endpoint

https://BUCKET.s3.amazonaws.com. However, as of Aug 11, 2016 it now supports both IPv4 & IPv6! To use both, you have to enable dualstack either in your preferred API client or by directly using this url schemehttps://BUCKET.s3.dualstack.REGION.amazonaws.com. - S3 event notifications: S3 can be configured to send an SNS notification, SQS message, or AWS Lambda function on bucket events.

S3 Gotchas and Limitations

- 🔸For many years, there was a notorious 100-bucket limit per account, which could not be raised and caused many companies significant pain. As of 2015, you can request increases. You can ask to increase the limit, but it will still be capped (generally below ~1000 per account).

- 🔸Be careful not to make implicit assumptions about transactionality or sequencing of updates to objects. Never assume that if you modify a sequence of objects, the clients will see the same modifications in the same sequence, or if you upload a whole bunch of files, that they will all appear at once to all clients.

- 🔸S3 has an SLA with 99.9% uptime. If you use S3 heavily, you’ll inevitably see occasional error accessing or storing data as disks or other infrastructure fail. Availability is usually restored in seconds or minutes. Although availability is not extremely high, as mentioned above, durability is excellent.

- 🔸After uploading, any change that you make to the object causes a full rewrite of the object, so avoid appending-like behavior with regular files.

- 🔸Eventual data consistency, as discussed above, can be surprising sometimes. If S3 suffers from internal replication issues, an object may be visible from a subset of the machines, depending on which S3 endpoint they hit. Those usually resolve within seconds; however, we’ve seen isolated cases when the issue lingered for 20-30 hours.

- 🔸MD5s and multi-part uploads: In S3, the ETag header in S3 is a hash on the object. And in many cases, it is the MD5 hash. However, this is not the case in general when you use multi-part uploads. One workaround is to compute MD5s yourself and put them in a custom header (such as is done by s4cmd).

- 🔸US Standard region: Previously, the us-east-1 region (also known as the US Standard region) was replicated across coasts, which led to greater variability of latency. Effective Jun 19, 2015 this is no longer the case. All Amazon S3 regions now support read-after-write consistency. Amazon S3 also renamed the US Standard region to the US East (N. Virginia) region to be consistent with AWS regional naming conventions.

- ❗When configuring ACLs on who can access the bucket and contents, a predefined group exists called Authenticated Users. This group is often used, incorrectly, to restrict S3 resource access to authenticated users of the owning account. If granted, the AuthenticatedUsers group will allow S3 resource access to all authenticated users, across all AWS accounts. A typical use case of this ACL is used in conjunction with the requester pays functionality of S3.

Storage Durability, Availability, and Price

As an illustration of comparative features and price, the table below gives S3 Standard, RRS, IA, in comparison with Glacier, EBS, and EFS, using Virginia region as of August 2016.

| Durability (per year) | Availability “designed” | Availability SLA | Storage (per TB per month) | GET or retrieve (per million) | Write or archive (per million) | |

|---|---|---|---|---|---|---|

| Glacier | Eleven 9s | Sloooow | – | $7 | $50 | $50 |

| S3 IA | Eleven 9s | 99.9% | 99% | $12.50 | $1 | $10 |

| S3 RRS | 99.99% | 99.99% | 99.9% | $24 | $0.40 | $5 |

| S3 Standard | Eleven 9s | 99.99% | 99.9% | $30 | $0.40 | $5 |

| EBS | 99.8% | Unstated | 99.95% | $25/$45/$100/$125+ (sc1/st1/gp2/io1) | ||

| EFS | “High” | “High” | – | $300 |

Especially notable items are in boldface. Sources: S3 pricing, S3 SLA, S3 FAQ, RRS info, Glacier pricing, EBS availability and durability, EBS pricing, EFS pricing, EC2 SLA

EC2

EC2 Basics

- 📒 Homepage ∙ Documentation ∙ FAQ ∙ Pricing (see also ec2instances.info)

- EC2 (Elastic Compute Cloud) is AWS’ offering of the most fundamental piece of cloud computing: A virtual private server. These “instances” can run most Linux, BSD, and Windows operating systems. Internally, they use Xen virtualization.

- The term “EC2” is sometimes used to refer to the servers themselves, but technically refers more broadly to a whole collection of supporting services, too, like load balancing (CLBs/ALBs), IP addresses (EIPs), bootable images (AMIs), security groups, and network drives (EBS) (which we discuss individually in this guide).

- 💸**EC2 pricing** and cost management is a complicated topic. It can range from free (on the AWS free tier) to a lot, depending on your usage. Pricing is by instance type, by hour and changes depending on AWS region and whether you are purchasing your instances On-Demand, on the Spot market or pre-purchasing (Reserved Instances).

EC2 Alternatives and Lock-In

- Running EC2 is akin to running a set of physical servers, as long as you don’t do automatic scaling or tooled cluster setup. If you just run a set of static instances, migrating to another VPS or dedicated server provider should not be too hard.

- 🚪Alternatives to EC2: The direct alternatives are Google Cloud, Microsoft Azure, Rackspace, DigitalOcean and other VPS providers, some of which offer similar API for setting up and removing instances. (See the comparisons above.)

- Should you use Amazon Linux? AWS encourages use of their own Amazon Linux, which is evolved from Red Hat Enterprise Linux (RHEL) and CentOS. It’s used by many, but others are skeptical. Whatever you do, think this decision through carefully. It’s true Amazon Linux is heavily tested and better supported in the unlikely event you have deeper issues with OS and virtualization on EC2. But in general, many companies do just fine using a standard, non-Amazon Linux distribution, such as Ubuntu or CentOS. Using a standard Linux distribution means you have an exactly replicable environment should you use another hosting provider instead of (or in addition to) AWS. It’s also helpful if you wish to test deployments on local developer machines running the same standard Linux distribution (a practice that’s getting more common with Docker, too, and not currently possible with Amazon Linux).

- EC2 costs: See the section on this.

EC2 Tips

- 🔹Picking regions: When you first set up, consider which regions you want to use first. Many people in North America just automatically set up in the us-east-1 (N. Virginia) region, which is the default, but it’s worth considering if this is best up front. For example, you might find it preferable to start in us-west-1 (N. California) or us-west-2 (Oregon) if you’re in California and latency matters. Some services are not available in all regions. Baseline costs also vary by region, up to 10-30% (generally lowest in us-east-1).

- Instance types: EC2 instances come in many types, corresponding to the capabilities of the virtual machine in CPU architecture and speed, RAM, disk sizes and types (SSD or magnetic), and network bandwidth.

- Selecting instance types is complex since there are so many types. Additionally, there are different generations, released over the years.

- 🔹Use the list at ec2instances.info to review costs and features. Amazon’s own list of instance types is hard to use, and doesn’t list features and price together, which makes it doubly difficult.

- Prices vary a lot, so use ec2instances.info to determine the set of machines that meet your needs and ec2price.com to find the cheapest type in the region you’re working in. Depending on the timing and region, it might be much cheaper to rent an instance with more memory or CPU than the bare minimum.