Microservices with Micronaut and Kubernetes

This project is a learning exercise and a deeper dive into the practicalities of developing and operating Kubernetes microservices with Micronaut and Kotlin on AWS.

The system is built as a learning tool and a testbed for various microservices concepts. It's not a production-ready design, and it is constantly evolving. Contributions are welcome.

The app is a very simple hotel booking website for the fictional Linton Travel Tavern where Alan Partridge spent 182 days in 1996. Its location is equidistant between Norwich and London.

There are two application roles, GUEST and STAFF, with different views and access levels.

A GUEST can manage their own bookings, while STAFF members can view and manage all bookings in the system.

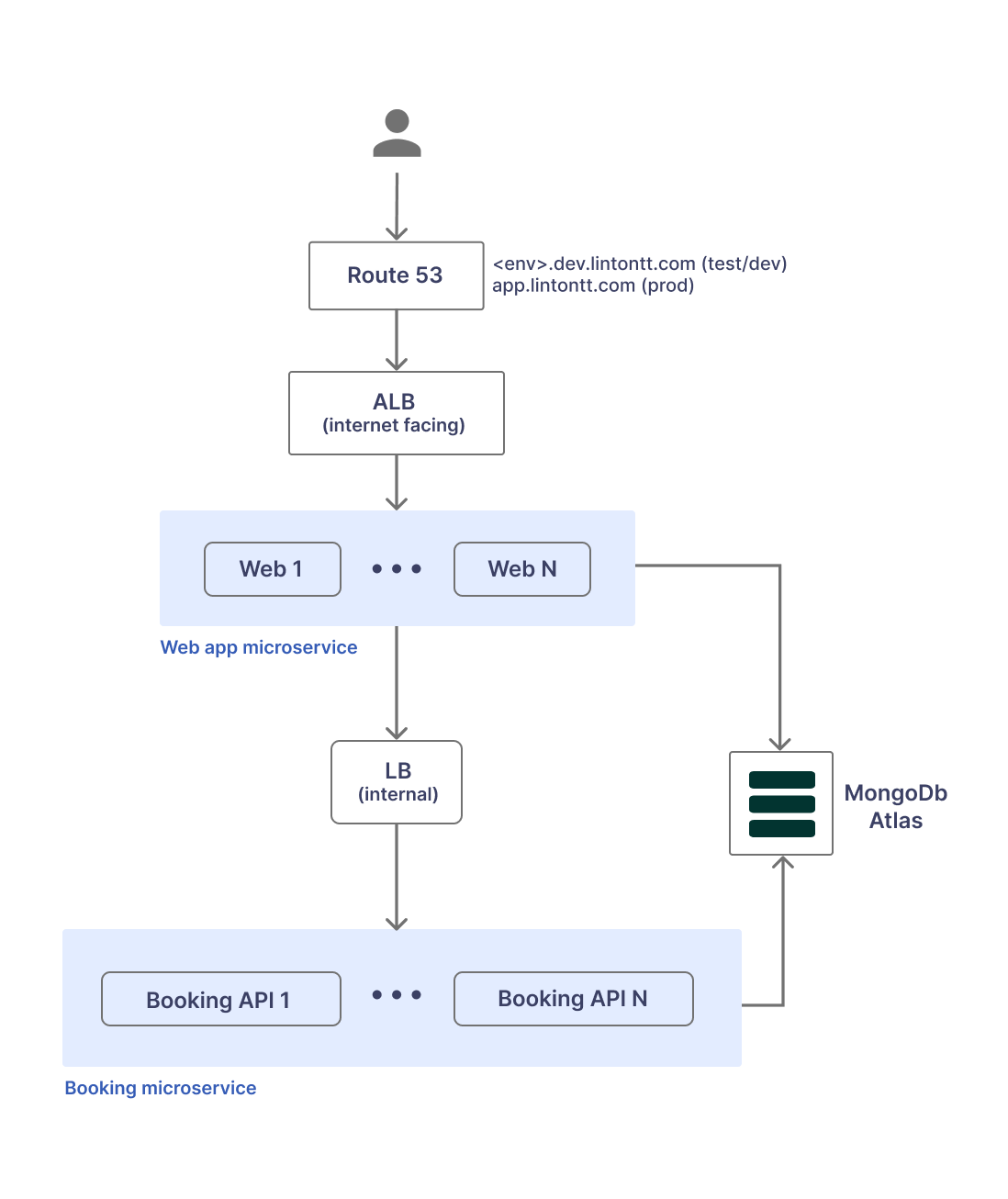

Architecture

The architecture is fairly basic, using two microservices (Web and Booking), and MongoDB Atlas for persistence, in order to reduce the operational complexity and cost for this exercise.

Objectives

Some guiding principles and things we wanted to get out of this exercise.

Kubernetes

- Deploy on AWS EKS

- Use AWS ECR for Docker images

- Use Kubernetes service discovery with Micronaut

- Inject Kubernetes secrets and config maps into Micronaut configuration

- Use Kustomize to manage Kubernetes configs

Architecture / NFRs

- Use load balancers for service traffic and materialise them as AWS ALBs/ELBs

- Use Route53 for target environments (qa.dev.lintontt.com, feature1274.dev.lintontt.com) to provide a fully working platform for development and testing

- HTTPS for web traffic

- Basic role-based security for platform users

Persistence

- Use MongoDB Atlas for persistence

- Secure MongoDB by default

Scaffolding & Devops

- Use basic shell scripts that can be re-used or easily integrated into CI/CD pipelines

- Deployment (Kubernetes) configs should be scope-aware but environment-agnostic (more on this in the Learnings section)

- Provide a basic ("good-enough") practice for not storing passwords and secrets in the code repository

- The devops pipeline should be service-agnostic. The services should be self-describing (or "self-configuring"), while the pipeline relies on naming conventions for packaging and deployment.

- Provide tooling for creating and deleting AWS EKS clusters

Development

- Design microservices with isolation in mind, and with the idea that they could be split into separate code repositories if necessary [Work in progress]

- Ability to deploy the system on a local Kubernetes cluster

- Explore the challenges around sharing a data model & concepts between services, and related (anti)patterns

Others

- Focus on architecture best practice, development and devops, and less on features or system capabilities.

- Keep things as vanilla as possible to minimise setup and use of 3rd party tooling. In the real world, a lot of this could be done with smarter tooling, but that's not the objective here.

- Describe solutions for some of the caveats we've found

What we didn't prioritise

Things that are deliberately simplified or irrelevant to the above objectives.

- There's no mature application design for the use case. The data model is simplified/denormalised in places, security is very basic, service and component design is often naff, etc.

- No robust MongoDB wiring. We used a very crude/vanilla approach for interacting with Mongo. Micronaut offers more advanced alternatives should you want to explore that.

- The web application is not state of the art. The web is built with Thymeleaf templates and the CSS styling is intentionally simplistic. UX & UI practices are often butchered.

Learnings

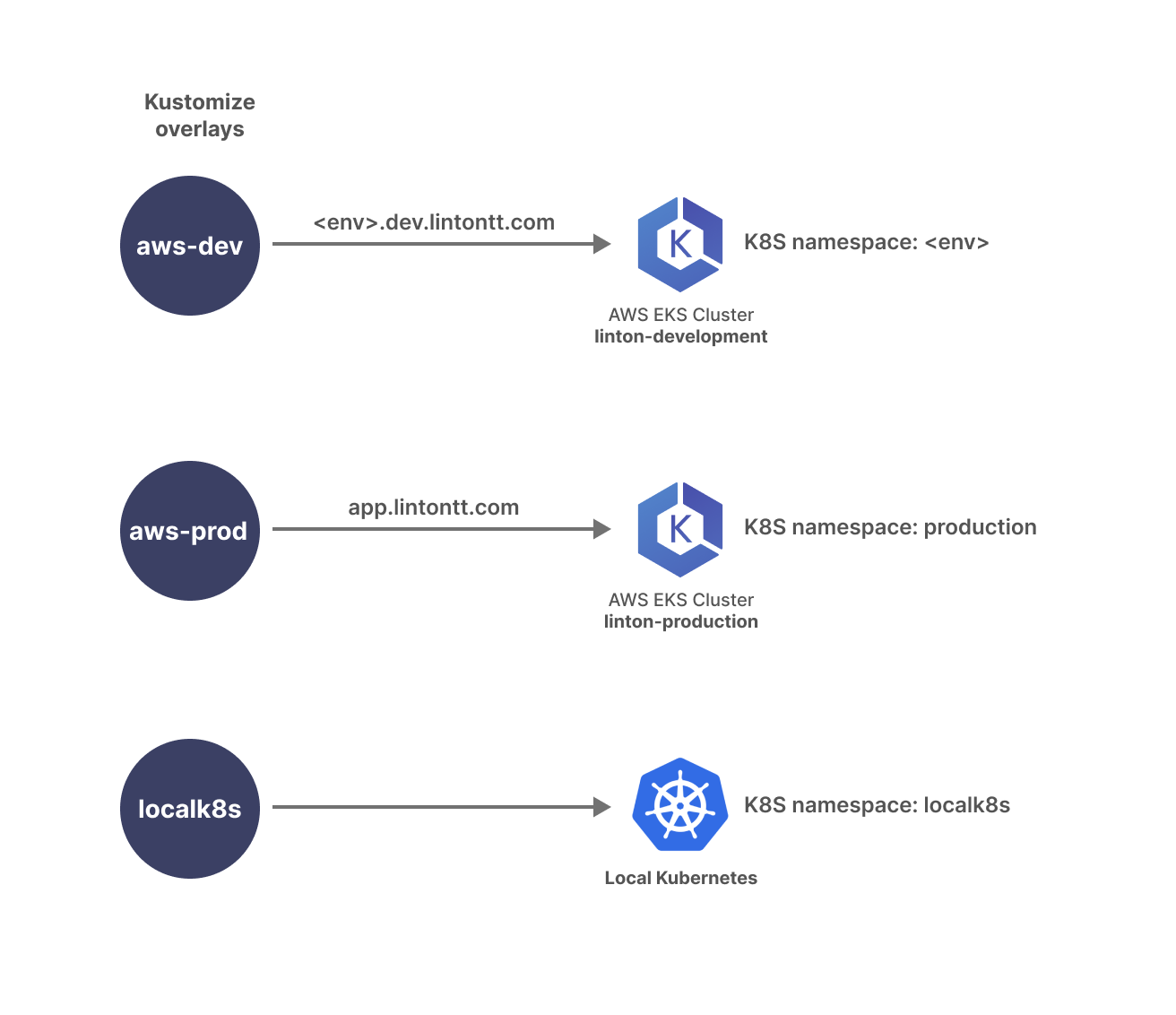

Scope-aware & environment-agnostic configs

We assume the application requires three types of environment, so each microservice comes with three Kustomize overlays:

- aws-dev: a development or test environment

- aws-prod: the production environment

- localk8s: an environment running on a local (dev machine) Kubernetes cluster

These configs are customised for the intended scope but are agnostic of the environment name.

For example, deploying qa.dev.lintontt.com or feature1274.dev.lintontt.com would use the aws-dev overlay (scope) in both cases, since they have similar deployment scaffolding.

The environment name (qa, feature1274, etc.) is then passed at deployment time to enable domain customisation, MongoDB data isolation, etc.

```shell
cd devops
./aws-dev-create.sh qa
./aws-dev-create.sh feature1274
```

This approach minimises (ideally eliminates) the configuration changes required to spin up a new environment, while still keeping deployments safely isolated from each other.
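Since qa and feature1274 share the aws-dev overlay, only values derived from the environment name differ between them. A minimal sketch of that derivation follows; only the aws-dev host pattern (`<env>.dev.lintontt.com`) and the local URL come from this README, while the production domain and the `linton-<env>` database naming are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: derive per-environment settings from (scope, environment name).
# Hypothetical: the aws-prod domain and the "linton-<env>" database naming.

env_host() {
  local scope="$1" env_name="$2"
  case "$scope" in
    aws-dev)  echo "${env_name}.dev.lintontt.com" ;;  # pattern used in this README
    aws-prod) echo "lintontt.com" ;;                  # hypothetical production domain
    localk8s) echo "localhost:8081" ;;                # local cluster URL from this README
    *)        echo "unknown scope: $scope" >&2; return 1 ;;
  esac
}

env_database() {
  # One MongoDB database per environment keeps data isolated (hypothetical naming)
  echo "linton-$1"
}
```

For example, `env_host aws-dev feature1274` prints `feature1274.dev.lintontt.com`. The point of the design is that adding a new environment means choosing a new name, not editing an overlay.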

For simplicity and speed, all aws-dev environments run on the same Kubernetes cluster, linton-development, while production (aws-prod) runs on its own cluster, linton-production. Within the linton-development cluster, deployments are further segregated using a separate namespace for each environment.

It should be easy to wire the devops pipeline to provision a new EKS cluster for each environment instead, but spinning up a new deployment would then take a lot longer.
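The scope-to-cluster mapping and the namespace-per-environment rule can be sketched as below. The cluster names come from this README; the overlay directory argument and the `docker-desktop` context (Docker Desktop's default Kubernetes context name) are assumptions, and this is not the repository's actual script.

```shell
#!/usr/bin/env bash
# Sketch: pick the EKS cluster for a scope and deploy each environment into
# its own namespace. Overlay path and local context name are assumptions.

target_cluster() {
  case "$1" in
    aws-dev)  echo "linton-development" ;;  # shared by qa, feature1274, ...
    aws-prod) echo "linton-production" ;;   # production gets its own cluster
    *)        echo "no EKS cluster for scope: $1" >&2; return 1 ;;
  esac
}

deploy_env() {
  local scope="$1" env_name="$2" overlay_dir="$3" cluster
  if [ "$scope" = "localk8s" ]; then
    kubectl config use-context docker-desktop || return 1
  else
    cluster="$(target_cluster "$scope")" || return 1
    # Adds/updates the kubeconfig entry and makes it the current context
    aws eks update-kubeconfig --name "$cluster" || return 1
  fi
  # Create the per-environment namespace only if it does not exist yet
  kubectl create namespace "$env_name" --dry-run=client -o yaml | kubectl apply -f -
  kubectl apply -k "$overlay_dir" --namespace "$env_name"
}
```

Usage might look like `deploy_env aws-dev qa web/kubernetes/overlays/aws-dev`, where the path is purely illustrative. Switching context inside the function also avoids accidentally deploying a dev environment to the production cluster.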

Deploying an AWS ALB with Kubernetes

- Install the AWS Load Balancer Controller (see Creating and destroying AWS EKS clusters)

- Configure the ALB through annotations when deploying the Kubernetes load balancer
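With the AWS Load Balancer Controller installed, an Ingress whose class is `alb` is materialised as an ALB, and annotations control its behaviour. A minimal sketch that renders such an Ingress for one environment, with HTTPS via an ACM certificate; the backend service name `web` and port 8080 are hypothetical, and the certificate ARN must be supplied by you.

```shell
#!/usr/bin/env bash
# Sketch: render an ALB-backed Ingress for one environment.
# Hypothetical: service "web" on port 8080; the cert ARN is passed in.

render_alb_ingress() {
  local env_name="$1" cert_arn="$2"
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: web
  namespace: ${env_name}
  annotations:
    alb.ingress.kubernetes.io/scheme: internet-facing
    alb.ingress.kubernetes.io/target-type: ip
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP": 80}, {"HTTPS": 443}]'
    alb.ingress.kubernetes.io/ssl-redirect: '443'
    alb.ingress.kubernetes.io/certificate-arn: ${cert_arn}
spec:
  ingressClassName: alb
  rules:
    - host: ${env_name}.dev.lintontt.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: web
                port:
                  number: 8080
EOF
}
```

It could be applied with `render_alb_ingress qa "$CERT_ARN" | kubectl apply -f -`. The `ssl-redirect` annotation sends plain HTTP requests to HTTPS, which matches the HTTPS objective above.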

Using Route53 with Kubernetes

To use Route53 with EKS:

- Set up an IAM policy that allows EKS to manage Route53 records (see Creating and destroying AWS EKS clusters)

- When creating the cluster, attach the policy using eksctl (see Creating and destroying AWS EKS clusters)

- Set up ExternalDNS for Kubernetes (see Creating and destroying AWS EKS clusters)

- Configure the host name when deploying a load balancer; ExternalDNS then creates the matching Route53 record
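One way to attach the EKS-Route53 policy at cluster creation is through an eksctl config file. The sketch below assumes that approach (create-cluster.sh may well pass flags instead); the region, instance type and node count are hypothetical. When `attachPolicyARNs` is set, eksctl expects the default node policies to be listed explicitly, so they are included alongside the Route53 one.

```shell
#!/usr/bin/env bash
# Sketch: render an eksctl ClusterConfig whose node role carries the
# EKS-Route53 policy. Region, instance type and sizes are hypothetical.

render_eksctl_config() {
  local cluster="$1" account_id="$2" region="$3"
  cat <<EOF
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: ${cluster}
  region: ${region}
iam:
  withOIDC: true   # OIDC provider, used for IAM roles for service accounts
managedNodeGroups:
  - name: ${cluster}-nodes
    instanceType: t3.medium
    desiredCapacity: 2
    iam:
      attachPolicyARNs:
        - arn:aws:iam::aws:policy/AmazonEKSWorkerNodePolicy
        - arn:aws:iam::aws:policy/AmazonEKS_CNI_Policy
        - arn:aws:iam::aws:policy/AmazonEC2ContainerRegistryReadOnly
        - arn:aws:iam::${account_id}:policy/EKS-Route53
EOF
}
```

For example: `render_eksctl_config linton-development "$(aws sts get-caller-identity --query Account --output text)" eu-west-2 > cluster.yaml`, followed by `eksctl create cluster -f cluster.yaml`. Attaching the policy to the node role is the simplest option; every pod on those nodes then inherits the Route53 permissions.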

Creating and destroying AWS EKS clusters

One-time setup

- Set up the ALB IAM policy with the contents of lb_iam_policy.json

```shell
aws iam create-policy \
  --policy-name AWSLoadBalancerControllerIAMPolicy \
  --policy-document file://lb_iam_policy.json
```

- Set up the Route53 policy with the contents of eks-route53.json

```shell
aws iam create-policy \
  --policy-name EKS-Route53 \
  --policy-document file://eks-route53.json
```

- Create the EKS cluster IAM role with the trust policy in cluster-trust-policy.json

```shell
aws iam create-role \
  --role-name EKS-ClusterRole \
  --assume-role-policy-document file://cluster-trust-policy.json
```

- Attach the AmazonEKSClusterPolicy managed policy to the role

```shell
aws iam attach-role-policy \
  --policy-arn arn:aws:iam::aws:policy/AmazonEKSClusterPolicy \
  --role-name EKS-ClusterRole
```

Create an EKS cluster

Run create-cluster.sh from the devops/kubernetes/aws directory:

```shell
cd devops/kubernetes/aws
./create-cluster.sh linton-development
```

Delete an EKS cluster

Run delete-cluster.sh from the devops/kubernetes/aws directory:

```shell
cd devops/kubernetes/aws
./delete-cluster.sh linton-development
```

Using envsubst to customise Kubernetes deployment
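envsubst (from GNU gettext) replaces `$VAR` and `${VAR}` references in its input with values from the environment, and an optional argument restricts which variables it touches. A minimal sketch of using it to inject the environment name into a rendered manifest; the placeholder names `ENV_NAME` and `IMAGE_TAG` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: fill environment-specific placeholders into a manifest read from stdin.
# ENV_NAME and IMAGE_TAG are hypothetical placeholder names.

render_manifest() {
  # Fail fast (the shell exits) if a required variable is unset or empty
  : "${ENV_NAME:?ENV_NAME must be set}"
  : "${IMAGE_TAG:?IMAGE_TAG must be set}"
  # The quoted list tells envsubst to replace ONLY these variables; any other
  # "$..." text in the YAML is passed through untouched.
  envsubst '${ENV_NAME} ${IMAGE_TAG}'
}
```

A pipeline could then be `export ENV_NAME=qa IMAGE_TAG=1.0.3` followed by `kubectl kustomize <overlay-dir> | render_manifest | kubectl apply -f -`. Restricting the variable list matters because manifests sometimes contain literal `$` strings that must not be blanked out.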

Setting up CloudWatch Container Insights

This is set up when the cluster is created: https://github.com/cosmin-marginean/linton/blob/main/devops/kubernetes/aws/create-cluster.sh#L64

Others

- Overriding Kubernetes configs using Kustomize

- Switching Kubernetes context automatically

- Providing AWS console access to EKS clusters

- Basic role-based security with Micronaut

- Loading the Thymeleaf Layout dialect with Micronaut

- Managing semantic versioning with Gradle

- Gradle plugin setup (full docs here)

- Increment patch version:

```shell
./gradlew incrementSemanticVersion --patch
```

- Using KMongo codec for Kotlin data classes: https://github.com/cosmin-marginean/linton/blob/main/linton-utils/src/main/kotlin/com/linton/bootstrap/KMongoFactory.kt
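For the Kustomize item in the list above, a minimal sketch of the base-plus-overlays layout that lets each scope override shared configs. The `base/` and `overlays/<scope>/` directory names are a common Kustomize convention assumed here, as is the per-scope replica count used as the example override; the base is assumed to contain a deployment.yaml.

```shell
#!/usr/bin/env bash
# Sketch: scaffold one base and the three scope overlays for a service.
# Directory layout and replica counts are assumptions for illustration.

scaffold_overlays() {
  local root="$1" service="$2" scope replicas
  mkdir -p "$root/base"
  # The base lists the shared manifests (deployment.yaml assumed to exist)
  cat > "$root/base/kustomization.yaml" <<EOF
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - deployment.yaml
EOF
  for scope in aws-dev aws-prod localk8s; do
    # Hypothetical override: production runs more replicas than other scopes
    if [ "$scope" = "aws-prod" ]; then replicas=2; else replicas=1; fi
    mkdir -p "$root/overlays/$scope"
    cat > "$root/overlays/$scope/kustomization.yaml" <<EOF
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
resources:
  - ../../base
replicas:
  - name: ${service}
    count: ${replicas}
EOF
  done
}
```

After adding a deployment.yaml to the base, `kubectl kustomize <root>/overlays/aws-prod` would show the merged result for that scope.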

Future work

Things we'd like to explore further.

- CI/CD pipeline integration, ideally using something off the shelf (AWS CodePipeline, CircleCI, etc.)

- Design a service that is more resource intensive and explore memory/CPU config for it

- Auto-scale

- AWS WAF

- Split the codebase into separate repositories for a true microservices approach

Local environment setup

Prerequisites are installed using SDKMAN and Homebrew where possible:

- JDK 17

- MongoDB Community Edition

- Docker with Kubernetes enabled
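Checking that the prerequisites above are on the PATH before deploying can save a confusing failure halfway through. A minimal sketch; the command names (java, docker, kubectl, mongod) are assumptions about what each prerequisite provides.

```shell
#!/usr/bin/env bash
# Sketch: verify local prerequisites. Command names are assumptions.

# Prints every missing command and returns non-zero if any are missing
require_commands() {
  local missing=0 cmd
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Reads `java -version` output on stdin and prints the major version,
# e.g. 'openjdk version "17.0.9"' gives 17
java_major_version() {
  sed -E -n 's/.*version "([0-9]+).*/\1/p' | head -n 1
}
```

For example: `require_commands java docker kubectl mongod && [ "$(java -version 2>&1 | java_major_version)" -ge 17 ]`. Running `kubectl config current-context` afterwards confirms that kubectl points at the local cluster rather than an EKS one.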

To run the app on your local Kubernetes cluster:

```shell
cd devops
./localk8s-run.sh
```

When the system is deployed, it should be accessible at http://localhost:8081, where you should see the screen below.

Log in with alan@apacheproductions.com for GUEST, or susan@lintontt.com for STAFF. The password for all users is Zepass123!

AWS Setup

To run this on AWS you'll obviously need an AWS account and a series of prerequisites.

TODO - elaborate on policies, roles etc to be bootstrapped