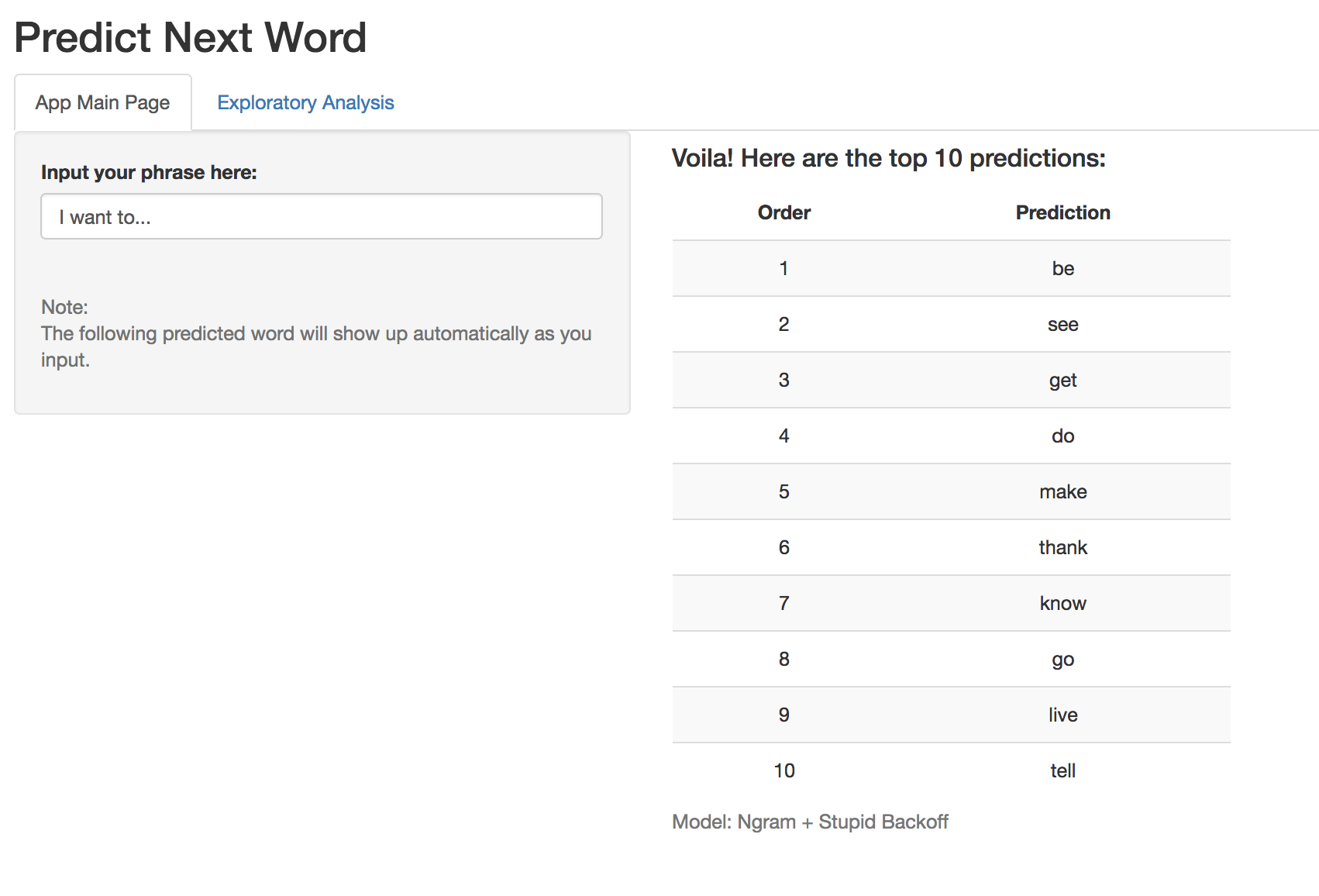

This is the capstone project for the Coursera JHU Data Science Specialization: a Shiny app that uses a natural language processing (NLP) model to predict the user's next word quickly and accurately.

The dataset comes from SwiftKey, an industry partner of JHU. The original text dataset can be downloaded here.

- N-Gram Tokenization. An n-gram is a sequence of n consecutive words, e.g. "I like to eat" is a quadgram (n = 4). This project uses n-grams up to pentagrams (n = 5).

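- Stupid Backoff. One of the most widely used scoring schemes for large n-gram language models. When the longest n-gram has not been seen, the model backs off to the next shorter n-gram and multiplies the score by a fixed discount factor. For more information about this model, click here.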
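The two ideas above can be illustrated with a minimal base R sketch. It is not the code from `preprocessing.R` or `global.R`: the function names (`tokenize`, `ngram_counts`, `stupid_backoff`, `predict_next`) and the toy sentence are hypothetical, the maximum order is 3 instead of 5 to keep the example small, and the discount `alpha = 0.4` is the value commonly used for Stupid Backoff, assumed here rather than taken from this project.

```r
# Hypothetical helper: lower-case the text and split it into word tokens.
tokenize <- function(text) {
  clean <- gsub("[^a-z' ]", " ", tolower(text))
  tokens <- strsplit(clean, "\\s+")[[1]]
  tokens[nzchar(tokens)]
}

# Count every n-gram of order n; returns a named integer vector.
ngram_counts <- function(tokens, n) {
  if (length(tokens) < n) return(setNames(integer(0), character(0)))
  starts <- seq_len(length(tokens) - n + 1)
  grams <- vapply(
    starts,
    function(i) paste(tokens[i:(i + n - 1)], collapse = " "),
    character(1)
  )
  tab <- table(grams)
  setNames(as.integer(tab), names(tab))
}

# Stupid Backoff score of `word` given the preceding `context` words.
# counts[[k]] holds the k-gram counts. alpha = 0.4 is an assumed default.
stupid_backoff <- function(word, context, counts, alpha = 0.4) {
  max_ctx <- length(counts) - 1
  if (length(context) > max_ctx) context <- tail(context, max_ctx)
  penalty <- 1
  while (length(context) > 0) {
    k <- length(context) + 1
    num <- counts[[k]][paste(c(context, word), collapse = " ")]
    if (!is.na(num)) {
      den <- counts[[k - 1]][paste(context, collapse = " ")]
      return(penalty * unname(num) / unname(den))
    }
    context <- context[-1]       # drop the oldest word and back off
    penalty <- penalty * alpha   # discount once per backoff step
  }
  uni <- counts[[1]][word]
  if (is.na(uni)) return(0)
  penalty * unname(uni) / sum(counts[[1]])
}

# Score every known word and keep the best candidates.
predict_next <- function(context, counts, top = 3) {
  vocab <- names(counts[[1]])
  scores <- vapply(vocab, stupid_backoff, numeric(1),
                   context = context, counts = counts)
  head(sort(scores, decreasing = TRUE), top)
}

tokens <- tokenize("I like to eat apples and I like to eat pears and I like to sleep")
counts <- lapply(1:3, function(n) ngram_counts(tokens, n))

stupid_backoff("eat", c("like", "to"), counts)    # 2/3: "like to eat" seen 2 times, "like to" 3 times
stupid_backoff("pears", c("like", "to"), counts)  # 0.4^2 * 1/16: backed off twice to the unigram
predict_next(c("i", "like", "to"), counts)
```

Note that Stupid Backoff returns scores rather than normalized probabilities, which is why ranking the candidates is all the sketch does with them. Scoring the whole vocabulary is only practical for a toy corpus; a real app would restrict the candidates to words observed after the given context.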

- preprocessing.R: Loads and samples the text dataset, cleans the data, filters profanity, and builds the n-grams.

- global.R: Stores all the functions and text analysis models used in the app.

- ui.R/server.R: Shiny app files.

- ngram.rds: Cleaned n-gram data produced by preprocessing.

- Milestone2: A folder containing a report on the exploratory analysis of the original text data.