This repository contains the source code for the CVPR 2021 oral paper Point2Skeleton: Learning Skeletal Representations from Point Clouds, where we introduce an unsupervised method to generate skeletal meshes from point clouds.

We introduce a generalized skeletal representation, called skeletal mesh. Several good properties of the skeletal mesh make it a useful representation for shape analysis:

-

Recoverability The skeletal mesh can be considered as a complete shape descriptor, which means it can reconstruct the shape of the original domain.

-

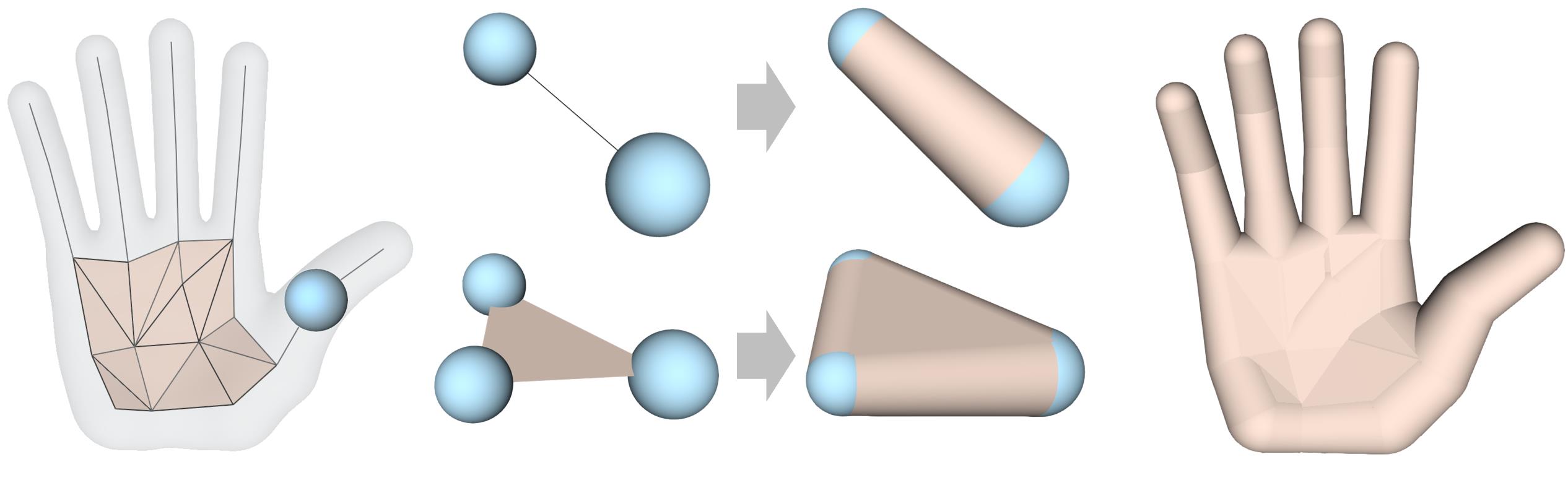

Abstraction The skeletal mesh captures the fundamental geometry of a 3D shape and extracts its global topology; the tubular parts are abstracted by simple 1D curve segments and the planar or bulky parts by 2D surface triangles.

-

Structure awareness The 1D curve segments and 2D surface sheets as well as the non-manifold branches on the skeletal mesh give a structural differentiation of a shape.

-

Volume-based closure The interpolation of the skeletal spheres gives solid cone-like or slab-like primitives; then a local geometry is represented by volumetric parts, which provides better integrity of shape context. The interpolation also forms a closed watertight surface.
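The properties above can be illustrated with a small, self-contained Python sketch. It is not the data structure used in this repository: the class `SkeletalMesh` and its methods are hypothetical. It stores skeletal spheres (centers and radii) plus 1D edges and 2D triangles, and labels each edge as a curve segment (no incident triangle), part of a sheet (one or two triangles) or non-manifold (more than two triangles). It also tests whether a point lies inside the cone-like (edge) and slab-like (triangle) primitives. We assume these primitives are built by linearly interpolating sphere centers and radii, which yields the convex hull of two or three spheres. The inside test approximates the interpolation by sampling rather than solving it in closed form.

```python
import numpy as np
from collections import defaultdict


class SkeletalMesh:
    """Hypothetical container: skeletal spheres plus 1D edges and 2D triangles."""

    def __init__(self, centers, radii, edges, faces):
        self.centers = np.asarray(centers, dtype=float)  # (V, 3) sphere centers
        self.radii = np.asarray(radii, dtype=float)      # (V,) sphere radii
        self.edges = [tuple(sorted(e)) for e in edges]   # explicit 1D edges
        self.faces = [tuple(f) for f in faces]           # 2D triangles

    def edge_face_counts(self):
        """Map every edge (explicit or from a triangle) to its triangle count."""
        counts = defaultdict(int)
        for e in self.edges:
            counts[e] += 0  # register curve edges that touch no triangle
        for a, b, c in self.faces:
            for e in ((a, b), (b, c), (a, c)):
                counts[tuple(sorted(e))] += 1
        return counts

    def classify_edges(self):
        """0 triangles -> curve, 1-2 -> sheet, more than 2 -> non-manifold."""
        labels = {}
        for e, n in self.edge_face_counts().items():
            if n == 0:
                labels[e] = "curve"
            elif n <= 2:
                labels[e] = "sheet"
            else:
                labels[e] = "non-manifold"
        return labels

    def _min_signed_distance(self, point, ids, weights):
        """Smallest |p - c| - r over spheres interpolated with convex weights.
        weights has shape (S, k) for the k sphere ids of one primitive."""
        idx = list(ids)
        c = weights @ self.centers[idx]  # (S, 3) interpolated centers
        r = weights @ self.radii[idx]    # (S,) interpolated radii
        return np.min(np.linalg.norm(c - point, axis=1) - r)

    def contains(self, point, samples=64):
        """Approximate inside test against all edge and triangle primitives."""
        point = np.asarray(point, dtype=float)
        t = np.linspace(0.0, 1.0, samples)
        edge_w = np.stack([1 - t, t], axis=1)
        # Barycentric grid (u, v, 1 - u - v) restricted to u + v <= 1.
        u, v = np.meshgrid(t, t)
        mask = (u + v) <= 1.0
        tri_w = np.stack([u[mask], v[mask], 1 - u[mask] - v[mask]], axis=1)
        for e in self.edge_face_counts():
            if self._min_signed_distance(point, e, edge_w) <= 0:
                return True
        for f in self.faces:
            if self._min_signed_distance(point, f, tri_w) <= 0:
                return True
        return False
```

For example, two spheres of radii 1.0 and 0.5 centered at the origin and at `(4, 0, 0)`, joined by one edge, form a single curve segment. The cone between them contains the point `(2, 0, 0)` but not `(2, 2, 0)`.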

You need to install PyTorch, NumPy, and TensorboardX (for visualization of training). This code has been tested with Python 3.7.3, PyTorch 1.1.0, and NumPy 1.16.4 on Ubuntu 18.04.
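To check an environment against these requirements before building anything, a short Python script can report what is installed. This is a convenience sketch, not part of the repository. The helper names `missing_packages` and `report` are hypothetical. The import names `torch`, `numpy` and `tensorboardX` correspond to the packages listed above.

```python
import importlib
import importlib.util
import sys

# Import names of the dependencies listed above (tensorboardX is used to
# visualize training).
DEPENDENCIES = ["torch", "numpy", "tensorboardX"]
TESTED = {"python": "3.7.3", "torch": "1.1.0", "numpy": "1.16.4"}


def missing_packages(names):
    """Return the module names that cannot be found in this environment."""
    return [n for n in names if importlib.util.find_spec(n) is None]


def report(names=DEPENDENCIES):
    """Print installed versions next to the versions the code was tested with."""
    print("python", sys.version.split()[0], "(tested: %s)" % TESTED["python"])
    for name in names:
        if missing_packages([name]):
            print(name, "NOT INSTALLED")
            continue
        version = getattr(importlib.import_module(name), "__version__", "unknown")
        print(name, version, "(tested: %s)" % TESTED.get(name, "n/a"))


if __name__ == "__main__":
    report()
```

Newer versions than the tested ones may still work, but a mismatch is a reasonable first thing to rule out if the build or training fails.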

To set up PointNet++, run:

pip install -r requirements.txt

cd src/pointnet2

python setup.py build_ext --inplace

- Example training command with required parameters:

cd src

python train.py --pc_list_file ../data/data-split/all-train.txt --data_root ../data/pointclouds/ --point_num 2000 --skelpoint_num 100 --gpu 0

- Alternatively, simply call `python train.py` once the data folder `data/` is prepared.

- See `python train.py --help` for all the training options. You can also change the settings by modifying the parameters in `src/config.py`.
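The `--pc_list_file` option points to a text file that lists the point clouds under `--data_root`. If you want to train on your own point clouds, a helper such as the hypothetical `write_pc_list` below can generate that file. It assumes, without confirmation from this repository, that the list holds one point-cloud name per line and that the point clouds are stored as `.xyz` files. Compare its output with the provided files in `data/data-split/` and with the data loader in `src/` before relying on it.

```python
from pathlib import Path


def write_pc_list(data_root, out_file, suffix=".xyz", keep_suffix=False):
    """Write one entry per line for every file in data_root ending with suffix.

    ASSUMED format: one point-cloud name per line, without the suffix unless
    keep_suffix is True. Check the files in data/data-split/ for the real one.
    """
    names = sorted(
        p.name for p in Path(data_root).iterdir()
        if p.is_file() and p.name.endswith(suffix)
    )
    if not keep_suffix:
        names = [n[: len(n) - len(suffix)] for n in names]
    out = Path(out_file)
    out.parent.mkdir(parents=True, exist_ok=True)
    out.write_text("".join(n + "\n" for n in names))
    return names
```

For example, `write_pc_list("../data/pointclouds/", "../data/data-split/my-train.txt")` would list every `.xyz` file. You could then pass the resulting path to `--pc_list_file`.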

- Example testing command with required parameters:

cd src

python test.py --pc_list_file ../data/data-split/all-test.txt --data_root ../data/pointclouds/ --point_num 2000 --skelpoint_num 100 --gpu 0 --load_skelnet_path ../weights/weights-skelpoint.pth --load_gae_path ../weights/weights-gae.pth --save_result_path ../results/

- Alternatively, simply call `python test.py` once the data folder `data/` and the network weight folder `weights/` are prepared.

- See `python test.py --help` for all the testing options.
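If you launch runs from Python, you can build the argument list from a dictionary instead of retyping the long command. Multiple experiments are one case where this helps. The sketch below is hypothetical. Only the flags and example values are taken from the testing command above. The helpers `build_command` and `run` are our own, and `run` assumes it is called from the repository root, so that `src/` is the working directory, as in the example.

```python
import shlex
import subprocess
import sys

# Flags and values copied from the example testing command above.
TEST_ARGS = {
    "pc_list_file": "../data/data-split/all-test.txt",
    "data_root": "../data/pointclouds/",
    "point_num": 2000,
    "skelpoint_num": 100,
    "gpu": 0,
    "load_skelnet_path": "../weights/weights-skelpoint.pth",
    "load_gae_path": "../weights/weights-gae.pth",
    "save_result_path": "../results/",
}


def build_command(script, args):
    """Turn {flag: value} into [python, script, --flag, value, ...]."""
    cmd = [sys.executable, script]
    for flag, value in args.items():
        cmd += ["--" + flag, str(value)]
    return cmd


def run(script, args, cwd="src"):
    """Print and run the command from cwd; raise if the script fails."""
    cmd = build_command(script, args)
    print("Running:", " ".join(shlex.quote(c) for c in cmd))
    subprocess.run(cmd, cwd=cwd, check=True)
```

For example, `run("test.py", dict(TEST_ARGS, save_result_path="../results-run2/"))` repeats the example command with a different output folder. Using `sys.executable` keeps the same interpreter that has PyTorch installed.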

- Train/test data: data.zip.

- Pre-trained model: weights.zip.

- Unzip the downloaded files to replace the `data/` and `weights/` folders; then you can run the code by simply calling `python train.py` and `python test.py`.

- Dense point clouds (data_dense.zip) and simplified medial axis transforms (MAT.zip) for evaluation.
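The unzip-and-replace step can be scripted. The sketch below assumes that each archive contains either a single top-level folder with the target's name (for example `data/` inside `data.zip`) or the folder's contents directly; the layout of the actual archives is not documented here. The helper `install_archive` is hypothetical. Note that it deletes an existing target folder before copying, matching the "replace" step above.

```python
import shutil
import tempfile
import zipfile
from pathlib import Path


def install_archive(zip_path, target_dir):
    """Extract zip_path so that its contents replace target_dir.

    ASSUMPTION: the archive holds either one top-level folder named like
    target_dir or the folder's contents directly.
    WARNING: an existing target_dir is deleted first.
    """
    target = Path(target_dir)
    with tempfile.TemporaryDirectory() as tmp:
        with zipfile.ZipFile(zip_path) as zf:
            zf.extractall(tmp)
        entries = [p for p in Path(tmp).iterdir() if p.name != "__MACOSX"]
        # Unwrap a single top-level folder that has the target's name.
        if len(entries) == 1 and entries[0].is_dir() and entries[0].name == target.name:
            src = entries[0]
        else:
            src = Path(tmp)
        if target.exists():
            shutil.rmtree(target)
        shutil.copytree(src, target, ignore=shutil.ignore_patterns("__MACOSX"))
    return target
```

From the repository root, `install_archive("data.zip", "data")` and `install_archive("weights.zip", "weights")` would cover the first two downloads. This README does not say where data_dense.zip and MAT.zip should go, so they are left out.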

We would like to acknowledge the following projects:

- Unsupervised Learning of Intrinsic Structural Representation Points

If you find our work useful in your research, please consider citing:

@InProceedings{Lin_2021_CVPR,

author = {Lin, Cheng and Li, Changjian and Liu, Yuan and Chen, Nenglun and Choi, Yi-King and Wang, Wenping},

title = {Point2Skeleton: Learning Skeletal Representations from Point Clouds},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2021},

pages = {4277-4286}

}

If you have any questions, please email Cheng Lin at chlin@connect.hku.hk.