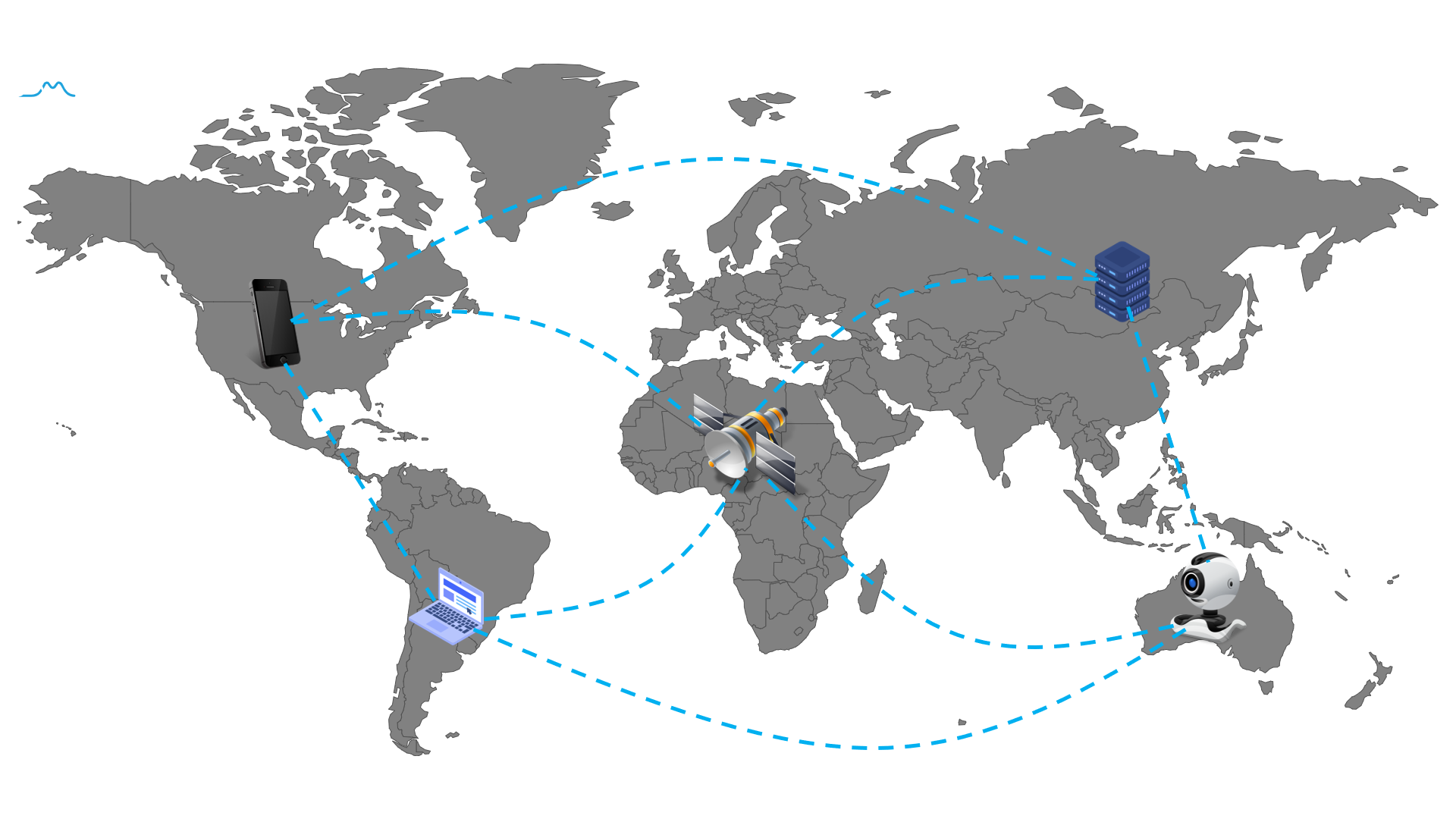

Federated Learning (FL) is an emerging machine learning framework that enables multiple devices to collaboratively train a shared model while keeping their training data local, which helps preserve data privacy and security.
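To make the definition concrete, here is a minimal sketch of Federated Averaging (FedAvg), the algorithm introduced in *Communication-Efficient Learning of Deep Networks from Decentralized Data* (AISTATS 2017). It assumes a toy 1-D linear regression model, synthetic client data, and hypothetical helper names (`local_update`, `fedavg_round`, `make_client`). For simplicity every client participates in every round, whereas FedAvg as originally described samples a fraction of clients per round.

```python
import random
from typing import List, Tuple

Model = Tuple[float, float]          # (w, b) of a toy 1-D linear model y = w*x + b
Dataset = List[Tuple[float, float]]  # (x, y) pairs that never leave the client


def local_update(model: Model, data: Dataset, lr: float = 0.05, epochs: int = 5) -> Model:
    """Client side: run plain SGD on the squared loss, starting from the global model."""
    w, b = model
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y  # residual of the current prediction
            w -= lr * err * x      # d/dw of 0.5 * err**2
            b -= lr * err          # d/db of 0.5 * err**2
    return w, b


def fedavg_round(global_model: Model, clients: List[Dataset]) -> Model:
    """Server side: collect locally trained models and average them, weighting each
    client by its number of examples. Only parameters are exchanged."""
    results = [(local_update(global_model, data), len(data)) for data in clients]
    total = sum(n for _, n in results)
    w = sum(model[0] * n for model, n in results) / total
    b = sum(model[1] * n for model, n in results) / total
    return w, b


def make_client(rng: random.Random, n: int, lo: float, hi: float) -> Dataset:
    """Hypothetical toy data: y = 2x + 1 plus noise, with x drawn from a client-specific range."""
    return [(x, 2.0 * x + 1.0 + rng.gauss(0.0, 0.05))
            for x in (rng.uniform(lo, hi) for _ in range(n))]


if __name__ == "__main__":
    rng = random.Random(0)
    clients = [make_client(rng, 20, -1.0, 0.0),
               make_client(rng, 30, 0.0, 1.0),
               make_client(rng, 10, -0.5, 0.5)]
    model: Model = (0.0, 0.0)
    for _ in range(20):
        model = fedavg_round(model, clients)
    print(f"w = {model[0]:.2f}, b = {model[1]:.2f}")
```

Only the `(w, b)` parameters travel between clients and server; each client's `(x, y)` pairs stay inside its own list, yet the averaged model approaches the shared relationship y = 2x + 1.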

This repository collects, and will keep updating, materials on federated learning, including research papers, conferences, blogs, and more.

- Top Machine Learning Conferences

- Books

- Talks and Tutorials

- Conferences and Workshops

- Blogs

- Papers

- Open-Source Projects

This section summarizes federated learning papers accepted at top machine learning conferences, including NeurIPS, ICML, and ICLR.

| Conference | Title | Affiliation | Slides & Code |
| --- | --- | --- | --- |
| AISTATS 2020 | FedPAQ: A Communication-Efficient Federated Learning Method with Periodic Averaging and Quantization | UC Santa Barbara; UT Austin | Supplementary |
| AISTATS 2020 | How To Backdoor Federated Learning | Cornell Tech | Supplementary |
| AISTATS 2020 | Federated Heavy Hitters Discovery with Differential Privacy | RPI; | Supplementary |

- 联邦学习 (Federated Learning)

- 联邦学习实战 (Practicing Federated Learning)

- TensorFlow Federated (TFF): Machine Learning on Decentralized Data - Google, TF Dev Summit 2019

- Federated Learning: Machine Learning on Decentralized Data - Google, Google I/O 2019

- Federated Learning - Cloudera Fast Forward Labs, DataWorks Summit 2019

- GDPR, Data Shortage and AI - Qiang Yang, AAAI 2019 Invited Talk

- Making every phone smarter with Federated Learning - Google, 2018

- FL-ICML 2020 - Organized by IBM Watson Research.

- FL-IBM 2020 - Organized by IBM Watson Research and WeBank.

- FL-NeurIPS 2019 - Organized by Google, WeBank, NTU, CMU.

- FL-IJCAI 2019 - Organized by WeBank.

- Google Federated Learning Workshop - Organized by Google.

- What Is Federated Learning? - NVIDIA 2019

- Online Federated Learning Comic - Google 2019

- Federated Learning: Collaborative Machine Learning without Centralized Training Data - Google AI Blog 2017

Personalized federated learning refers to training a model for each client, based on both the client's own dataset and the datasets of other clients. There are two major motivations for personalized federated learning:

- Because data is statistically heterogeneous (non-IID) across clients, a single global model may not suit every client. In some cases, local models trained solely on each client's private data outperform the shared global model.

- Different clients need models customized to their own environments. For example, given the sentence "I live in .....", a next-word prediction model should predict a different word for each user. In other words, different clients may assign different labels to the same input.
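One simple way to act on both motivations is model interpolation: each client blends its own local model with the shared global model and tunes the blend on its own held-out data. The sketch below is a deliberately simplified version of that idea, in the spirit of the local/global mixing studied in papers such as *Adaptive Personalized Federated Learning*, and is not the algorithm of any paper listed here. It assumes each "model" is just a constant predictor (the mean label), a fixed grid of mixing weights, and hypothetical names (`global_model`, `personalize`, `ALPHAS`).

```python
from statistics import fmean
from typing import List, Sequence, Tuple

ALPHAS = tuple(i / 10 for i in range(11))  # candidate mixing weights 0.0, 0.1, ..., 1.0


def global_model(client_stats: List[Tuple[float, int]]) -> float:
    """Server side: size-weighted average of per-client models (here, client means)."""
    total = sum(n for _, n in client_stats)
    return sum(mean * n for mean, n in client_stats) / total


def mse(pred: float, ys: Sequence[float]) -> float:
    """Mean squared error of a constant prediction."""
    return fmean((pred - y) ** 2 for y in ys)


def personalize(global_pred: float, train: Sequence[float], val: Sequence[float],
                alphas: Sequence[float] = ALPHAS) -> Tuple[float, float]:
    """Client side: interpolate between the purely local model and the shared global
    model, choosing the weight alpha that minimizes error on held-out local data."""
    local_pred = fmean(train)
    alpha = min(alphas, key=lambda a: mse(a * local_pred + (1 - a) * global_pred, val))
    return alpha, alpha * local_pred + (1 - alpha) * global_pred


if __name__ == "__main__":
    # Two clients whose labels follow very different (non-IID) distributions.
    clients = {
        "A": ([0.1, -0.2, 0.0, 0.3], [0.05, -0.1]),   # (train, validation)
        "B": ([9.8, 10.1, 10.3, 9.9], [10.0, 10.2]),
    }
    g = global_model([(fmean(train), len(train)) for train, _ in clients.values()])
    for name, (train, val) in clients.items():
        alpha, pred = personalize(g, train, val)
        print(f"client {name}: global={g:.2f}, alpha={alpha:.1f}, personalized={pred:.2f}")
```

With these toy numbers the size-weighted global prediction (about 5.04) fits neither client, so both clients pick alpha = 1.0 and keep their local model, which is the first motivation above. A client with very little or unrepresentative local data would tend toward a small alpha and lean on the global model instead.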

Personalized federated learning survey papers:

- Three Approaches for Personalization with Applications to Federated Learning

- Personalized Federated Learning for Intelligent IoT Applications: A Cloud-Edge based Framework

- Advances and Open Problems in Federated Learning - arXiv Dec 2019

- A Survey on Federated Learning Systems: Vision, Hype and Reality for Data Privacy and Protection - arXiv Apr 2020

- Federated Learning in Mobile Edge Networks: A Comprehensive Survey - arXiv Sep 2019

- Federated Learning for Wireless Communications: Motivation, Opportunities and Challenges - arXiv Sep 2019

- Federated Learning: Challenges, Methods, and Future Directions - arXiv Aug 2019

- Federated Machine Learning: Concept and Applications - ACM TIST 2018

- Towards Federated Learning at Scale: System Design - SysML 2019

- A generic framework for privacy preserving deep learning - arXiv 2018

- Communication-Efficient Learning of Deep Networks from Decentralized Data - AISTATS 2017 (the first paper to propose the federated learning concept)

- DBA: Distributed Backdoor Attacks against Federated Learning - ICLR 2020

- Can You Really Backdoor Federated Learning? - arXiv Dec 2019

- Analyzing Federated Learning through an Adversarial Lens - ICML 2019

- How To Backdoor Federated Learning - arXiv 2018

- Federated Learning with Differential Privacy: Algorithms and Performance Analysis - IEEE 2020

- Differentially Private Federated Learning: A Client Level Perspective - arXiv 2018

- Learning Differentially Private Recurrent Language Models - ICLR 2018

- Private federated learning on vertically partitioned data via entity resolution and additively homomorphic encryption - arXiv 2017

- RPN: A Residual Pooling Network for Efficient Federated Learning - ECAI 2020

- Federated Learning: Strategies for Improving Communication Efficiency - arXiv 2017

- Federated Learning with Matched Averaging - ICLR 2020

- One-Shot Federated Learning - arXiv 2019

- cpSGD: Communication-efficient and differentially-private distributed SGD - NeurIPS 2018

- Federated Optimization: Distributed Machine Learning for On-Device Intelligence - arXiv 2016

- Fair Resource Allocation in Federated Learning - arXiv 2019

- Agnostic Federated Learning - ICML 2019

- Adaptive Personalized Federated Learning - arXiv 2020

- Three Approaches for Personalization with Applications to Federated Learning - arXiv 2020

- Survey of Personalization Techniques for Federated Learning - arXiv 2020


- Federated Topic Modeling - CIKM 2019

- Federated Learning for Mobile Keyboard Prediction - arXiv 2019

- Applied federated learning: Improving Google keyboard query suggestions - arXiv 2018

- Federated Learning Of Out-Of-Vocabulary Words - arXiv 2018

- Privacy-preserving Federated Brain Tumour Segmentation - MICCAI MLMI 2019

- FedHealth: A Federated Transfer Learning Framework for Wearable Healthcare - arXiv 2019

- Secure Federated Matrix Factorization - arXiv 2019

- Federated Collaborative Filtering for Privacy-Preserving Personalized Recommendation System - arXiv 2019

- Federated Meta-Learning with Fast Convergence and Efficient Communication - arXiv 2018

- FedCoin: A Peer-to-Peer Payment System for Federated Learning - arXiv Feb 2020

- Blockchained On-Device Federated Learning - IEEE Communications Letters 2019