This is just for educational purpose, I didn't use it in any malicious or commercial way

- The copyright of the training images belongs to Nexon game - Maplestory

- So I can't share the training images I've used, please understand

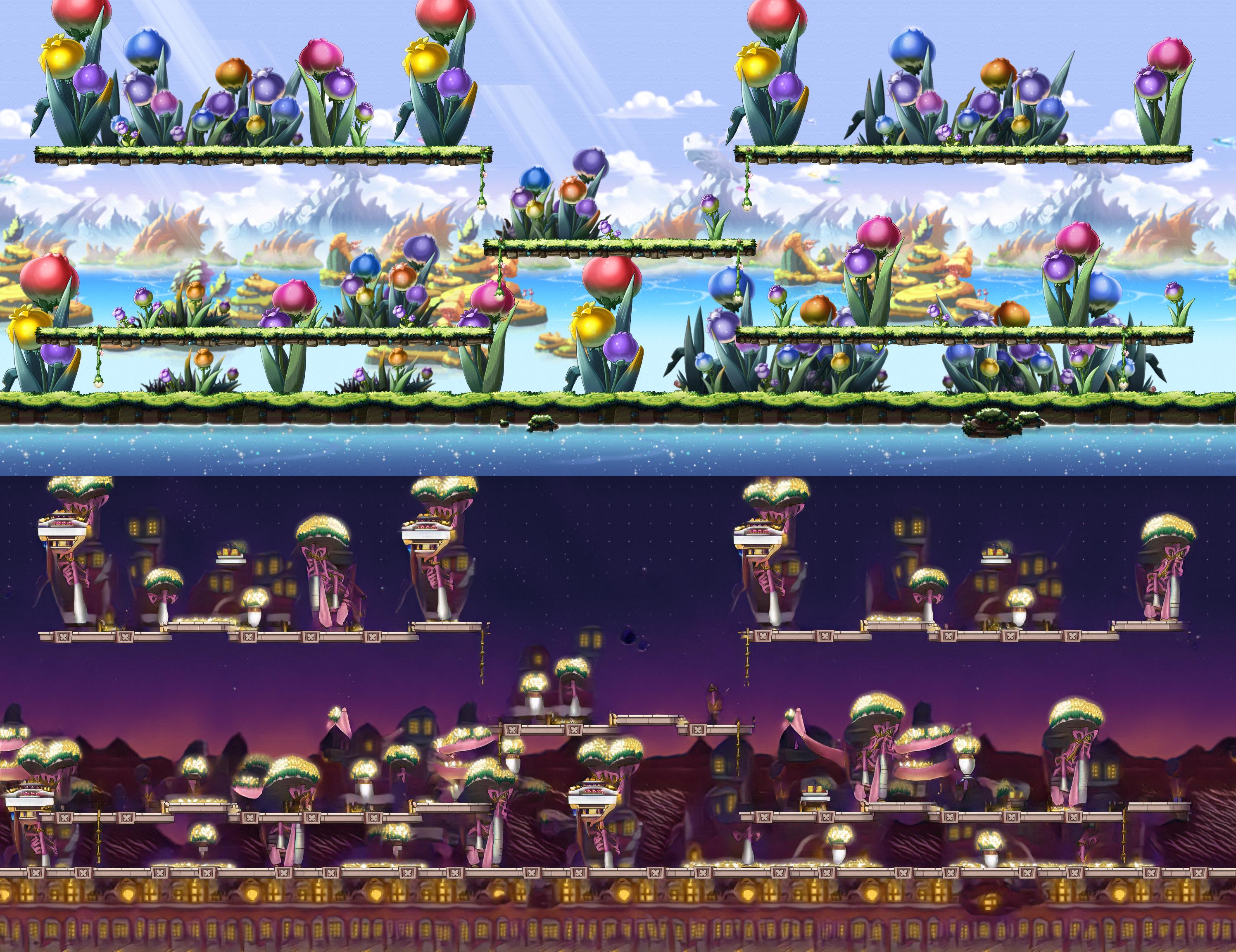

For those who like Maplestory or those who have played it, I hope this would be a little bit of fun for them.

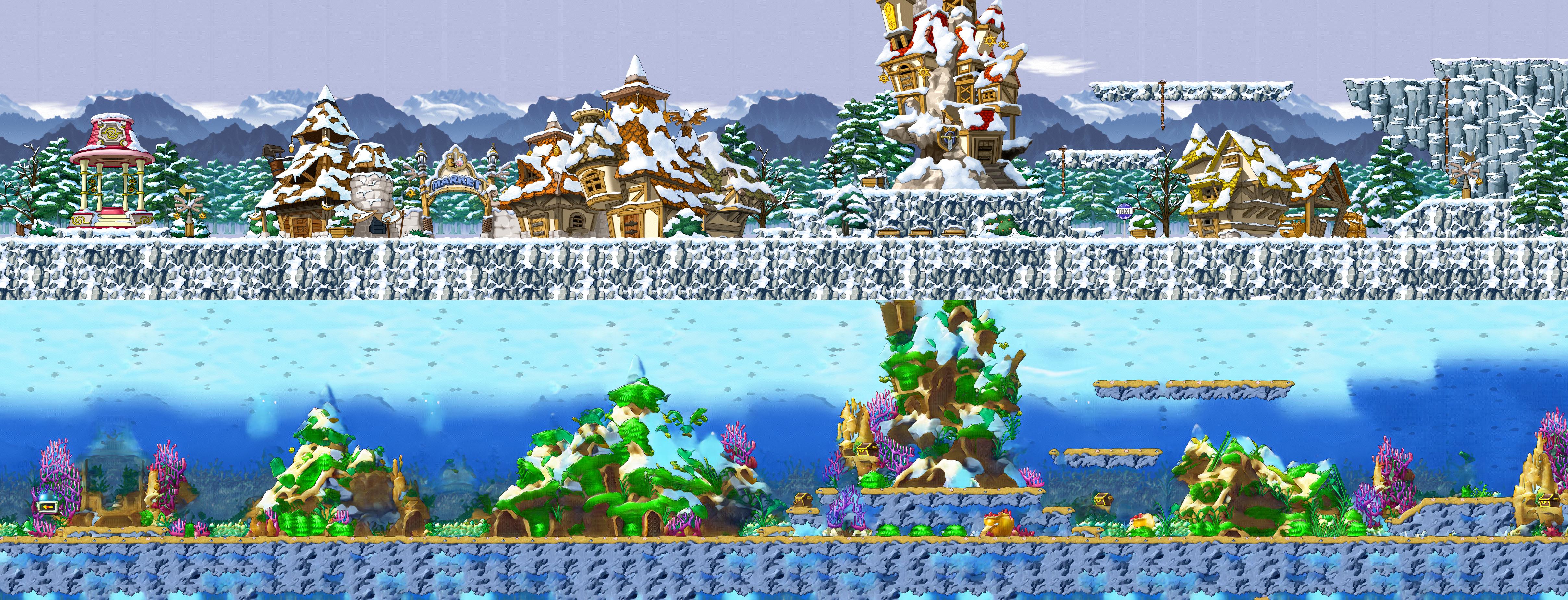

- (23/04/24) elnath2aquarium update

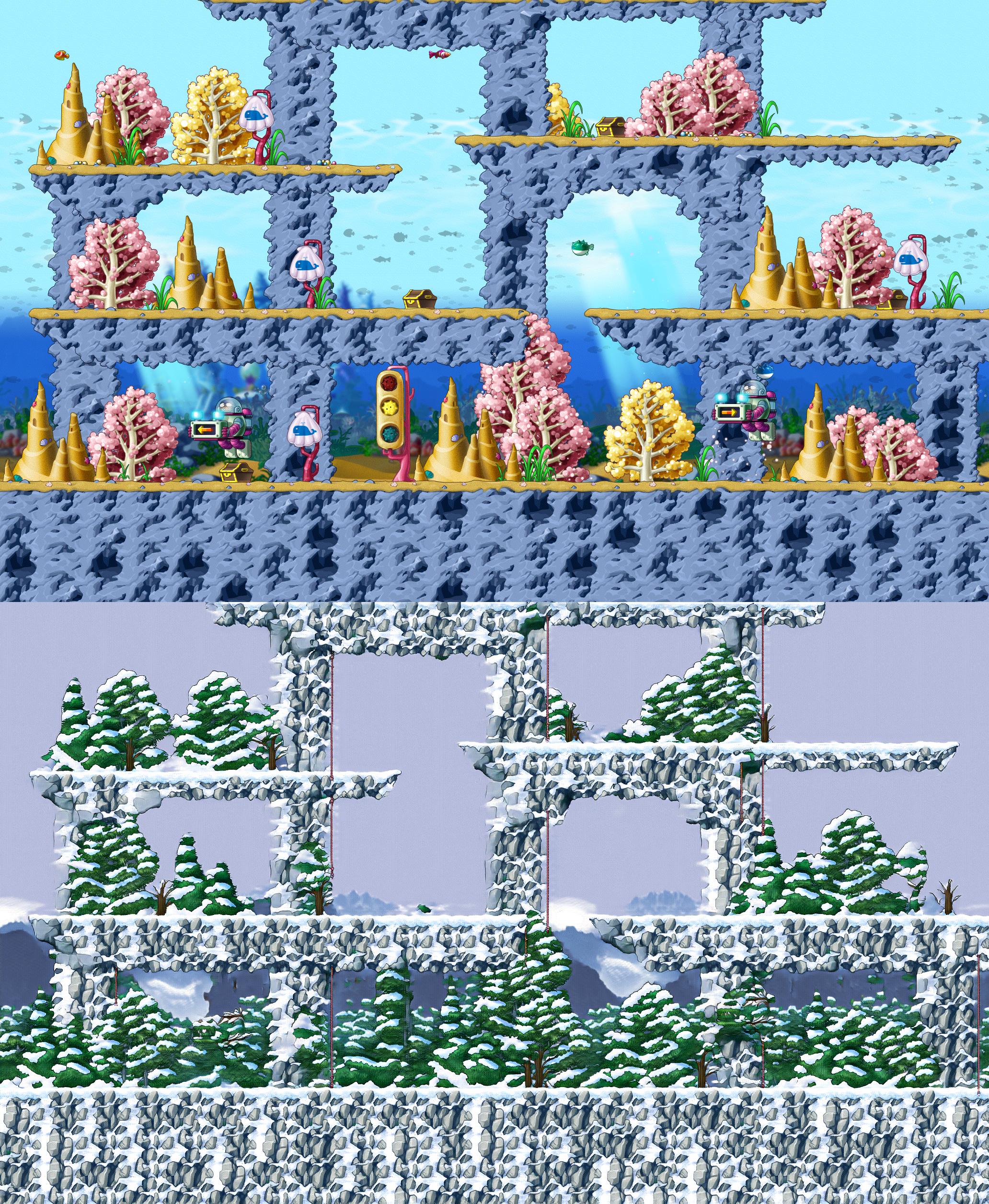

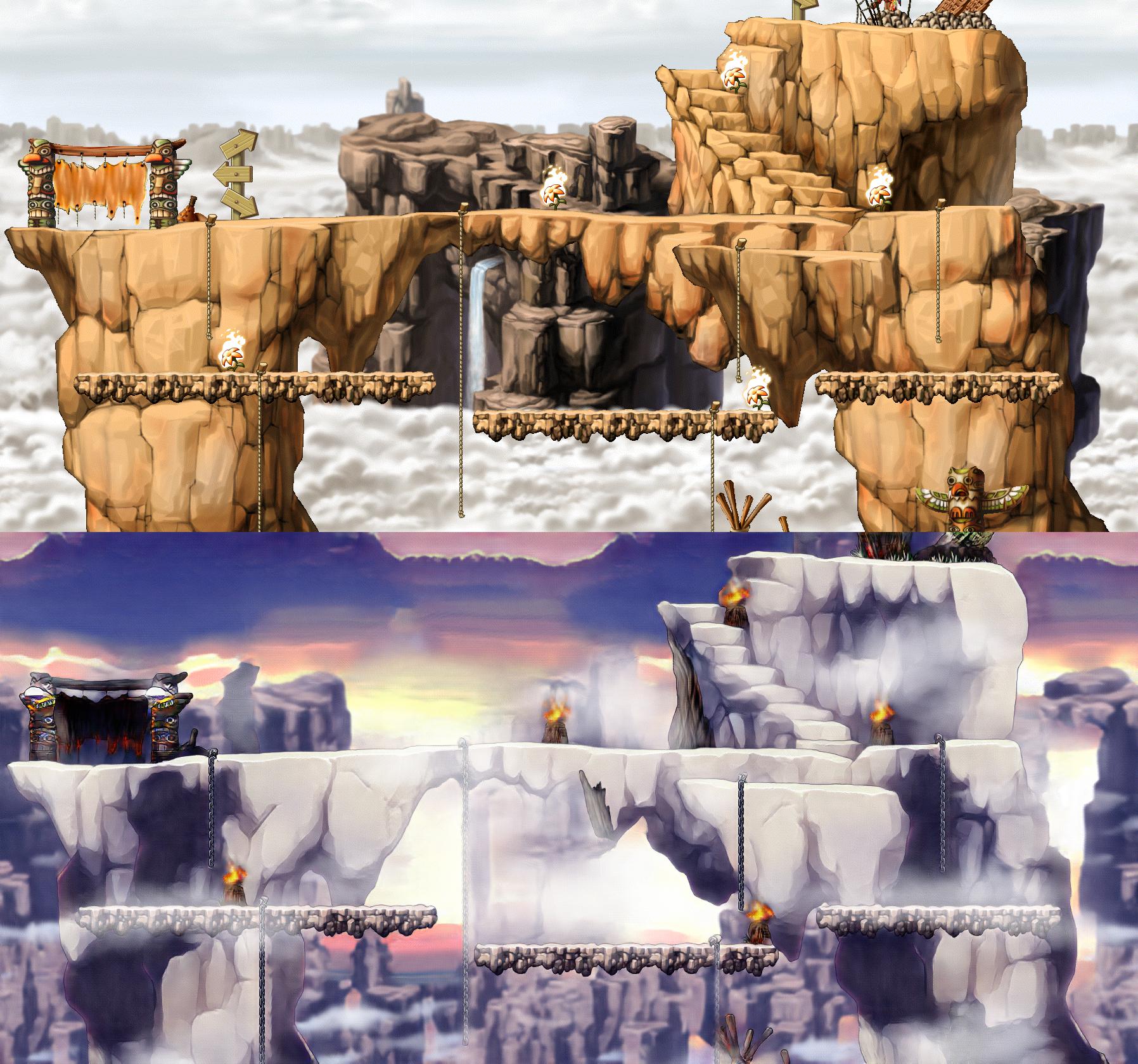

- (23/04/29) perion2twilight_perion update

- (23/05/05) chewchew_island2lachelein update

Note : Current version of my implementation uses separate data augmentation layer. One might slightly reduce the training time by letting the tf.data.dataset object to do that. Currently all the data preprocessing steps(except augmentation) make use of it to parallelize the I/O & GPU works.

by. 배고픈뿔버섯/엘리시움

chk70821@naver.com

Henesys and ellinia are two of the most popular cities in maple world. And both cities have their own styles.

Think about particular henesys map. What would this map look like if it were in ellinia? (or in the opposite direction)

My goal is image-to-image translation between cities in maple world.

But here's the problem, there's no (henesys_city, ellinia_city) pair which have same structure. (i.e we don't have paired dataset)

CycleGAN will be used to deal with this unpaired image-to-image translation task.

You could see the details at the following links

| CycleGAN paper | Official homepage |

- First, some result images & videos are provided

- And then, training details

Recommend you to check the images & videos at result images & result videos directory

Since the scale(in pixels) in the original game is preserved, feel free to open the files & zoom in to see the details.

- From now on, only the training performance will be considered.

WzComparerR2 was used to extract images, by following the instruction.

The npc & monster & portal are excluded.

Note : Map can be decomposed into two parts, foreground & background. When extracting images, foreground part is invariant to the position you're looking at, but background part isn't.

- Again, Background part might be composed of multiple layers. Where each layers have different moving ratio w.r.t the position we're looking at.

By using this property, one could extract some large maps multiple times at different positions. By doing this, we could collect sufficient number of images for each distribution. And extra maps were also considered if those resembles the distribution of our targets. (such as, maple island ~ henesys, some event maps)

All the images are acquired only from WzComparerR2, just to maintain the original & consistent scale of pixels.

CycleGAN is a generative adversarial network for unpaired image-to-image translation task. It's composed of 2 generators & 2 discriminators. Where generator's job is to map one distribution image to another, and discriminator's job is to discriminate whether the given image is real or fake.

Adversarial loss makes the result realistic(indistinguishable from real distribution). Among those, least square loss from LSGAN was used as author did.

Cycle consistency loss was introduced in this paper. Basically it means, if we transfrom the image from A to another distribution by using one generator, and then transform it back by using the other generator(which forms a cycle), we want those two to be equal. So cycle consistency loss is defined as the L1 distance between those two images in pixel space.

The optional identity loss (described in paper) was omitted.

-

generator_loss = lsgan_adversarial_loss + lambda * cycle_consistency_loss

- lambda = 10 was used.

-

discriminator_loss = lsgan_adversarial_loss / 2

- discriminator objective was divided by 2, just to slow down the rate at which it learns.

Each generator is composed of 2 contracting blocks & 9 residual blocks & 2 expanding blocks.

- c7s1-64, d128, d256, R256 * 9, u128, u64, c7s1-3 (following the notation of paper in Section 7.2)

Each discriminator is PatchGAN discriminator(introduced in pix2pix) of receptive field : 70 (in pixels)

- C64 - C128 - C256 - C512 - output (also following the notation of paper in Section 7.2)

For more details, refer | CycleGAN paper | official homepage | my implementation |

All the models were trained from scratch with Adam optimizer (learning_rate=0.0002, beta_1=0.5, beta_2=0.999). All weights were initialized from a gaussian distribution with mean=0 and standard deviation=0.02.

Since we used the generator with 4x downsampling & 4x upsampling, in order to compute cycle consistency loss, height and width should be multiple of 4(otherwise, we need extra modification).

The network is fully convolutional. We don't use any fully connected layer. Since convolution can be applied to arbitrary size image, we trained the network with randomly cropped image of size (height, width) = (480, 480) (which was horizontally flipped with 50% chance), and at inference time, we applied it to the original images.

Video could be interpreted as the sequence of images(frames). So we mimicked horse2zebra video by applying the generator for frames.

- recording of the original video was done in WzComparerR2 by windows xbox game bar.

- to reduce memory we choose low fps

Note : We didn't impose explicit temporal consistency to the model, but the result is quite good (at least I think). It seems that the combination of continuous change of background & foreground and cycle consistency loss makes it continuous.

- training dataset : 173 images from henesys & 135 images from ellinia (Batch_size = 1, 1 epoch = 135 update)

- trained number of epoch : 2000

- after 1000 epoch, we linearly decayed the learning rate to 0

- training time : roughly 100 hours with NVIDIA Tesla T4

- training dataset : 159 images from elnath & 147 images from aquarium (Batch_size = 1, 1 epoch = 147 update)

- trained number of epoch : 2000

- after 1000 epoch, we linearly decayed the learning rate to 0

- training time : roughly 100 hours with NVIDIA Tesla T4

- training dataset : 189 images from perion & 220 images from twilight_perion (Batch_size = 1, 1 epoch = 189 update)

- trained number of epoch : 1200

- after 600 epoch, we linearly decayed the learning rate to 0

- training time : roughly 80 hours with NVIDIA Tesla T4

- training dataset : 250 images from chewchew_island & 156 images from lachelein (Batch_size = 1, 1 epoch = 156 update)

- trained number of epoch : 2000

- after 1000 epoch, we linearly decayed the learning rate to 0

- training time : roughly 100 hours with NVIDIA Tesla T4