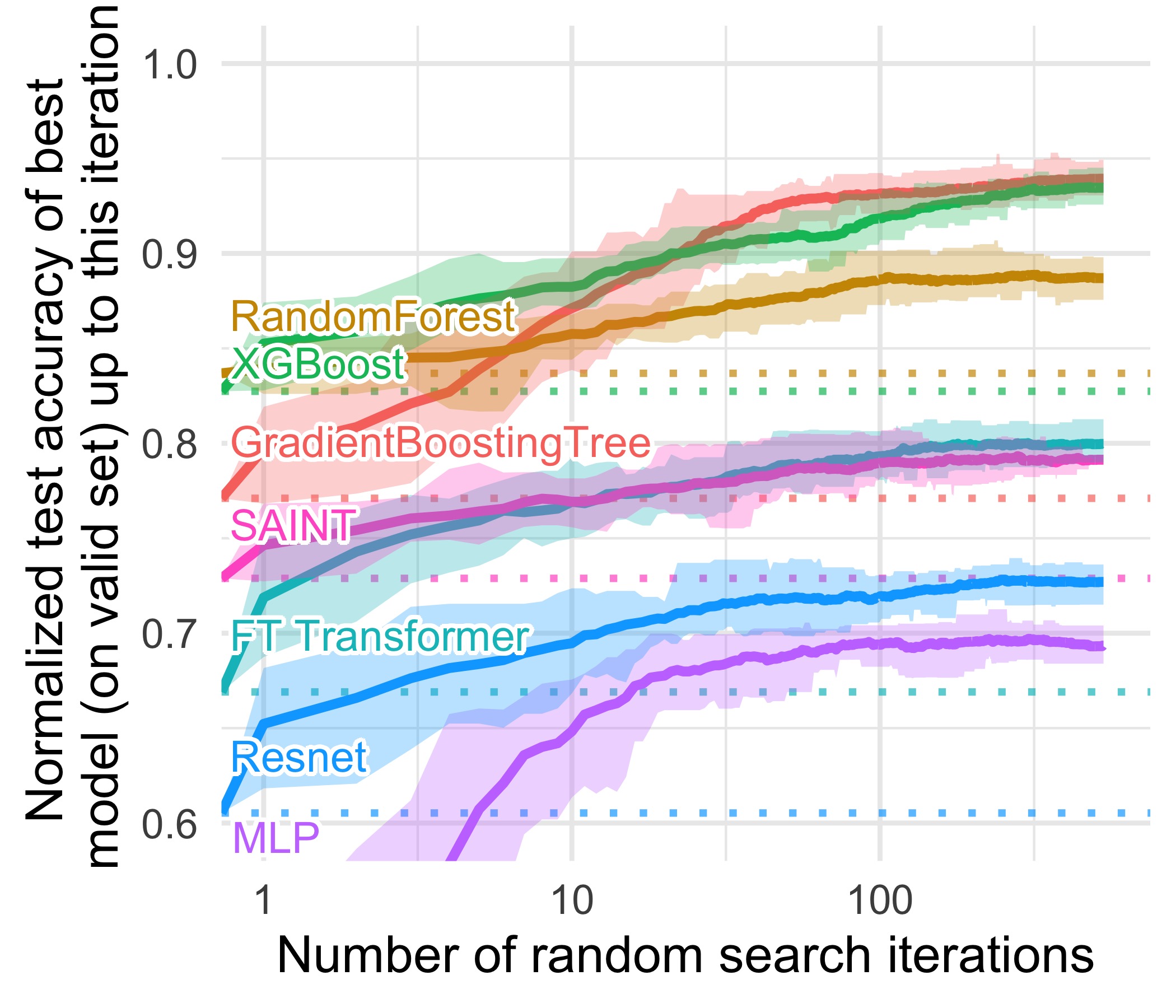

Accompanying repository for the paper "Why do tree-based models still outperform deep learning on tabular data?"

To download the benchmark datasets, run `python data/download_data.py`.

You can also find these datasets on Hugging Face Hub.

You can re-run the training using WandB sweeps.

- Copy / clone this repo on the different machines / clusters you want to use.

- Log in to WandB and create new projects.

- Enter your project names in `launch_config/launch_benchmarks.py` (or `launch_config/launch_xps.py`).

- Run `python launch_config/launch_benchmarks.py`.

- Run the generated sweeps using `wandb agent <USERNAME/PROJECTNAME/SWEEPID>` on the machine of your choice. More information is available in the WandB documentation.

- After you've stopped the runs, enter your WandB login in `launch_config/download_data.py`, then download the results with `python launch_config/download_data.py`.
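As a rough illustration of what a random-search sweep looks like on the WandB side, here is a minimal sketch that builds a sweep configuration dictionary and the matching agent command. The helper names, the metric name, and the search space are all hypothetical and are not taken from this repository; `launch_config/launch_benchmarks.py` generates the real sweeps.

```python
# Hypothetical sketch: none of these names come from the repository.

def make_sweep_config(model_name, search_space, metric="mean_test_score"):
    """Build a random-search sweep configuration in WandB's dictionary format."""
    return {
        "name": f"{model_name}_random_search",
        "method": "random",  # sample hyperparameters at random from the space
        "metric": {"name": metric, "goal": "maximize"},
        "parameters": search_space,
    }


def agent_command(username, project, sweep_id):
    """Return the shell command that starts an agent for a given sweep."""
    return f"wandb agent {username}/{project}/{sweep_id}"


# Illustrative search space; parameter names and ranges are assumptions.
search_space = {
    "learning_rate": {"distribution": "log_uniform_values", "min": 1e-5, "max": 1e-2},
    "n_layers": {"values": [2, 4, 8]},
}

config = make_sweep_config("my_model", search_space)
print(config["method"])  # random
print(agent_command("my-user", "my-project", "abc123"))
```

A dictionary of this shape is what `wandb.sweep` accepts when registering a sweep; the sketch itself stays offline and does not import `wandb`.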

We're planning to release a version that uses Benchopt instead of WandB, to make the benchmark easier to run.

All the R code used to generate the analyses and figures is available in the `analyses` folder.

The datasets used in the benchmark have been uploaded as OpenML benchmarks, with the same transformations that are used in the paper.

```python
import openml

# openml.config.apikey = 'FILL_IN_OPENML_API_KEY'  # set the OpenML API key

SUITE_ID = 297  # Regression on numerical features
# SUITE_ID = 298  # Classification on numerical features
# SUITE_ID = 299  # Regression on numerical and categorical features
# SUITE_ID = 304  # Classification on numerical and categorical features

benchmark_suite = openml.study.get_suite(SUITE_ID)  # obtain the benchmark suite

for task_id in benchmark_suite.tasks:  # iterate over all tasks
    task = openml.tasks.get_task(task_id)  # download the OpenML task
    dataset = task.get_dataset()
    X, y, categorical_indicator, attribute_names = dataset.get_data(
        dataset_format="dataframe", target=dataset.default_target_attribute
    )
```
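For the suites that mix numerical and categorical features, `categorical_indicator` can be used to separate the two kinds of columns. The helper below is a small sketch of that idea; the function name and the sample values are made up for illustration and stand in for what `dataset.get_data` returns.

```python
def split_feature_names(attribute_names, categorical_indicator):
    """Partition feature names into (numerical, categorical) lists."""
    if len(attribute_names) != len(categorical_indicator):
        raise ValueError("attribute_names and categorical_indicator must align")
    pairs = list(zip(attribute_names, categorical_indicator))
    numerical = [name for name, is_cat in pairs if not is_cat]
    categorical = [name for name, is_cat in pairs if is_cat]
    return numerical, categorical


# Made-up values standing in for the output of dataset.get_data().
attribute_names = ["age", "income", "city"]
categorical_indicator = [False, False, True]

numerical, categorical = split_feature_names(attribute_names, categorical_indicator)
print(numerical)    # ['age', 'income']
print(categorical)  # ['city']
```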

If you want to compare your own algorithms with the models used in this benchmark for a given number of random search iterations, you can use the results from our random searches, which we share as two CSV files located in the `analyses/results` folder.
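One way to make such a comparison is to estimate the test score a model reaches after `n` random search iterations: shuffle the order of the runs, keep the first `n`, select the run with the best validation score, and average its test score over many shuffles. The sketch below works on in-memory `(validation_score, test_score)` pairs because the column names of the CSV files are not documented here; the function name and the sample scores are hypothetical, and this is not necessarily the exact procedure used for the paper's figures.

```python
import random
from statistics import mean


def score_after_n_iterations(runs, n_iterations, n_shuffles=100, seed=0):
    """Estimate the test score reached after n random-search iterations.

    runs: list of (validation_score, test_score) pairs, one per iteration.
    For each shuffle, keep the first n_iterations runs, select the one with
    the best validation score, and record its test score; return the mean.
    """
    if not 1 <= n_iterations <= len(runs):
        raise ValueError("n_iterations must be between 1 and len(runs)")
    rng = random.Random(seed)
    selected = []
    for _ in range(n_shuffles):
        order = rng.sample(runs, len(runs))  # shuffled copy of the runs
        best = max(order[:n_iterations], key=lambda run: run[0])
        selected.append(best[1])
    return mean(selected)


# Made-up scores for illustration only.
runs = [(0.80, 0.78), (0.85, 0.83), (0.82, 0.84), (0.90, 0.88)]
for n in (1, 2, 4):
    print(n, round(score_after_n_iterations(runs, n), 3))
```

Selecting on the validation score rather than the test score keeps the estimate honest: the test score is only read off after the choice has been made.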

To benchmark your own algorithm using our code, you'll need:

- a model which uses sklearn's API, i.e. has `fit` and `predict` methods. We recommend using Skorch to use sklearn's API with a PyTorch model.

- to add your model's hyperparameter search space to `launch_config/model_config`.

- to add your model name in `launch_config/launch_benchmarks` and `utils/keyword_to_function_conversion.py`.

- to run the benchmarks as explained in Training the models.
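To make the first requirement concrete, here is a minimal sketch of a model exposing `fit` and `predict` in the sklearn style. `MeanRegressor` and its `shrinkage` hyperparameter are hypothetical and exist only for illustration. `get_params` and `set_params` are included because sklearn utilities expect them; whether the benchmark code calls them is an assumption.

```python
class MeanRegressor:
    """Toy regressor exposing the fit/predict interface (hypothetical example)."""

    def __init__(self, shrinkage=0.0):
        self.shrinkage = shrinkage  # example hyperparameter, expected in [0, 1]

    def get_params(self, deep=True):
        return {"shrinkage": self.shrinkage}

    def set_params(self, **params):
        for name, value in params.items():
            setattr(self, name, value)
        return self

    def fit(self, X, y):
        # Learn a single constant: the (optionally shrunk) mean of the targets.
        self.mean_ = (1.0 - self.shrinkage) * sum(y) / len(y)
        return self

    def predict(self, X):
        # Predict the learned constant for every input row.
        return [self.mean_ for _ in X]


model = MeanRegressor().fit([[0], [1], [2]], [1.0, 2.0, 3.0])
print(model.predict([[5], [6]]))  # [2.0, 2.0]
```

Returning `self` from `fit` follows the sklearn convention and allows calls to be chained as in the usage line above.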
