Face Detection and Tweets on the GCP Serverless Architecture

Topics

- Getting a Twitter home timeline through the Twitter API (using Tweepy)

- Face detection using OpenCV (DNN)

- GCP serverless architecture
  - Cloud Scheduler, Pub/Sub, Cloud Functions, GCS, Datastore, Cloud SDK

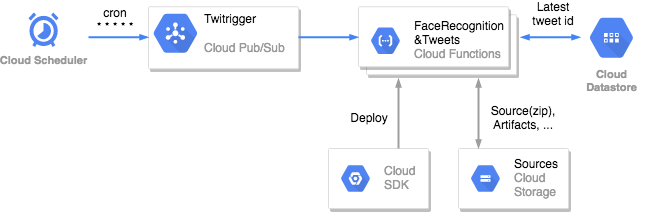

Architecture

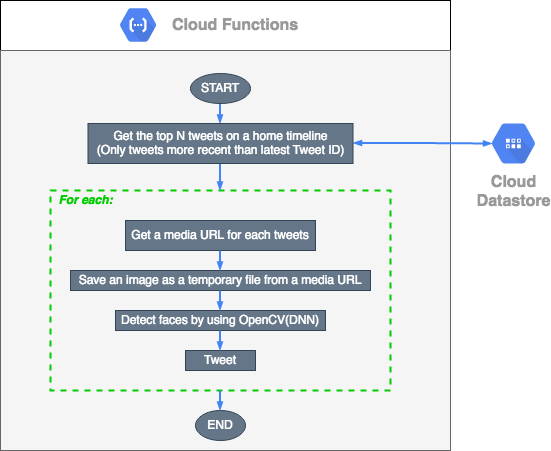

Processing flow

Usage (Run on GCP)

- Make a config.py

      CONSUMER_KEY = "<your-twitter-consumer-key>"
      CONSUMER_SECRET = "<your-twitter-consumer-secret>"
      ACCESS_TOKEN = "<your-twitter-access-token>"
      ACCESS_TOKEN_SECRET = "<your-twitter-access-token-secret>"

- Install the latest Cloud SDK

- Make a zip file

      $ zip -r [FILE_NAME].zip *

- Make a bucket in GCS

Example:

      $ gsutil mb -p [GCP_PROJECT_ID] -l US-CENTRAL1 gs://[BUCKET_NAME]

- Upload a zip to GCS

Example:

      $ gsutil cp [FILE_NAME].zip gs://[BUCKET_NAME]

Ref: Uploading objects

- Deploy to Cloud Functions

Example:

      $ gcloud functions deploy [FUNCTION_NAME] \
          --source=gs://[BUCKET_NAME]/[FILE_NAME].zip \
          --stage-bucket=[BUCKET_NAME] \
          --trigger-topic=[Pub/Sub_TOPIC_NAME] \
          --memory=256MB \
          --runtime=python37 \
          --region=us-central1 \
          --project=[GCP_PROJECT_ID] \
          --entry-point=main

If the topic [Pub/Sub_TOPIC_NAME] doesn't exist, it is created during deployment.

Ref: Google Cloud Pub/Sub Triggers, Deploying from Your Local Machine

-

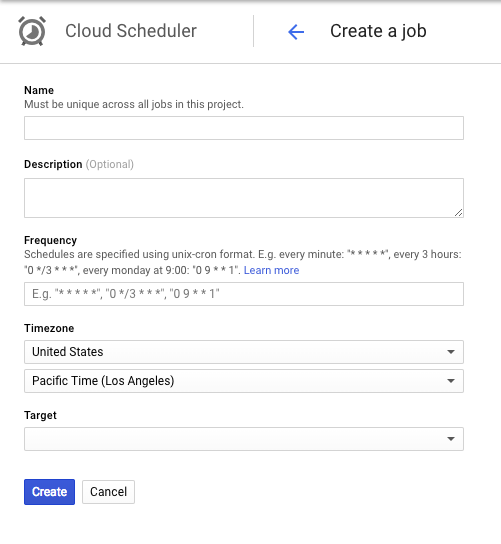

Create a Cloud Scheduler job

Ref:

Using Pub/Sub to trigger a Cloud Function - Create a Cloud Scheduler job

- Done

Once the Cloud Scheduler job triggers the function, it gets tweets, detects faces, and posts tweets every minute (cron * * * * *).
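Because the function is deployed with `--trigger-topic` and `--entry-point=main`, the entry point receives the two background-function arguments `event` and `context`, with the Pub/Sub payload base64-encoded in `event["data"]`. The following is a minimal sketch of how the three steps could be wired together, not the project's actual code: `get_tweets`, `detect_faces`, `post_tweet`, and `decode_message` are hypothetical names, and skipping tweets without faces is an assumption.

```python
import base64


def get_tweets():
    """Hypothetical placeholder: fetch the home timeline (e.g. via Tweepy)."""
    return []


def detect_faces(tweet):
    """Hypothetical placeholder: return the faces found in a tweet's images."""
    return []


def post_tweet(tweet, faces):
    """Hypothetical placeholder: tweet the detection result."""


def decode_message(event):
    """Return the Pub/Sub message body as text ('' if no data was sent)."""
    data = event.get("data")
    return base64.b64decode(data).decode("utf-8") if data else ""


def main(event, context):
    """Background-function entry point: runs once per Pub/Sub message."""
    print(f"Triggered by message: {decode_message(event)!r}")
    posted = 0
    for tweet in get_tweets():
        faces = detect_faces(tweet)
        if faces:  # assumption: only tweet when at least one face was found
            post_tweet(tweet, faces)
            posted += 1
    # Cloud Functions ignores this return value; it is here for local testing.
    return posted
```

Keeping the three steps as separate functions means the same `main` can be exercised locally with a hand-built `event` dictionary before deploying.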

Usage (Run locally)

- Make a config.py

      CONSUMER_KEY = "<your-twitter-consumer-key>"
      CONSUMER_SECRET = "<your-twitter-consumer-secret>"
      ACCESS_TOKEN = "<your-twitter-access-token>"
      ACCESS_TOKEN_SECRET = "<your-twitter-access-token-secret>"

- Install Python packages

      $ pip install -r requirements.txt

- Set up authentication for GCP

Create a service account for Cloud Datastore.

Ref: Datastore mode Client Libraries - Setting up authentication

- Run

      $ python main.py

This gets tweets, detects faces, and posts tweets.
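Before a local run, it can help to confirm that `config.py` no longer holds the template placeholders and that the Datastore credentials can be found. The sketch below is not part of the project: `missing_settings` is a hypothetical helper, and it assumes the service-account key is supplied through the standard `GOOGLE_APPLICATION_CREDENTIALS` environment variable.

```python
import os

REQUIRED_KEYS = (
    "CONSUMER_KEY",
    "CONSUMER_SECRET",
    "ACCESS_TOKEN",
    "ACCESS_TOKEN_SECRET",
)


def missing_settings(config, environ=os.environ):
    """Return the names of settings that are unset or still placeholders.

    `config` is any object exposing the four Twitter keys as attributes,
    for example the imported `config` module.
    """
    problems = []
    for key in REQUIRED_KEYS:
        value = getattr(config, key, "")
        # Placeholders in the template look like "<your-twitter-...>".
        if not value or (value.startswith("<") and value.endswith(">")):
            problems.append(key)
    # Assumption: Datastore credentials come from a service-account key file.
    if not environ.get("GOOGLE_APPLICATION_CREDENTIALS"):
        problems.append("GOOGLE_APPLICATION_CREDENTIALS")
    return problems
```

For example, calling `missing_settings(config)` right after `import config` returns an empty list when everything is in place, and otherwise lists the names that still need attention.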