Real-time transcription using faster-whisper

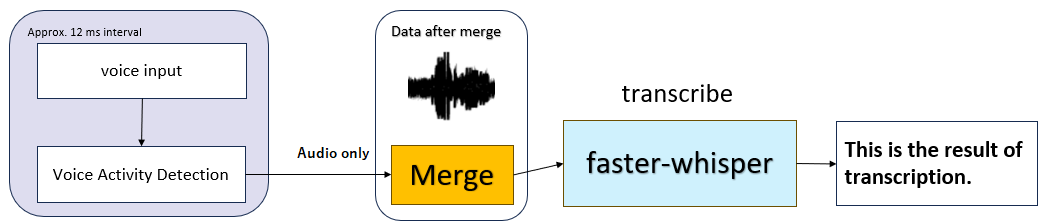

Audio is captured from a microphone using sounddevice. Silero VAD (Voice Activity Detection) detects the silent parts, and the speech between them is treated as a single chunk of audio data. This audio data is then converted to text using faster-whisper.
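The grouping step can be illustrated with a minimal sketch. It is not the project's actual code: it assumes each audio frame already carries a speech/non-speech flag (in the real application this decision would come from Silero VAD), and the function name `split_utterances` and the parameter `max_silence_frames` are hypothetical.

```python
from typing import Iterable, Iterator, List, TypeVar

Frame = TypeVar("Frame")


def split_utterances(
    frames: Iterable[Frame],
    speech_flags: Iterable[bool],
    max_silence_frames: int = 3,
) -> Iterator[List[Frame]]:
    """Group frames into utterances separated by long enough silences.

    `speech_flags` is an assumed per-frame VAD decision (True = speech).
    An utterance is closed once `max_silence_frames` silent frames in a
    row follow it. Shorter pauses stay inside the utterance.
    """
    current: List[Frame] = []   # frames of the utterance being built
    pending: List[Frame] = []   # silent frames seen since the last speech frame
    for frame, is_speech in zip(frames, speech_flags):
        if is_speech:
            # The pause was short, so keep it as part of the utterance.
            current.extend(pending)
            pending = []
            current.append(frame)
        elif current:
            pending.append(frame)
            if len(pending) >= max_silence_frames:
                # Long silence: emit the utterance without trailing silence.
                yield current
                current, pending = [], []
        # Silence before any speech is simply skipped.
    if current:
        yield current
```

Each yielded list would then be concatenated and passed to the transcription step as one piece of audio. Dropping the trailing silence is a design choice of this sketch, not something the original project is known to do.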

The HTML-based GUI lets you check the transcription results and configure detailed settings for faster-whisper.
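As a rough sketch of how settings chosen in a GUI might reach faster-whisper, the snippet below merges a settings dictionary into the keyword arguments of `model.transcribe`. The keys `language`, `beam_size`, and `temperature` are existing `transcribe` parameters in faster-whisper. The helper `transcribe_chunk` and the defaults dictionary are hypothetical and are not taken from this project.

```python
from typing import Any, Dict, Optional

# Hypothetical defaults; a GUI would override these with the user's choices.
DEFAULT_TRANSCRIBE_SETTINGS: Dict[str, Any] = {
    "language": "en",
    "beam_size": 5,
    "temperature": 0.0,
}


def transcribe_chunk(
    model: Any,
    audio: Any,
    settings: Optional[Dict[str, Any]] = None,
) -> str:
    """Transcribe one audio chunk and return the joined text.

    `model` is expected to behave like faster_whisper.WhisperModel, e.g.
    WhisperModel("large-v2", device="cuda", compute_type="float16").
    """
    merged = {**DEFAULT_TRANSCRIBE_SETTINGS, **(settings or {})}
    # transcribe() returns an iterable of segments plus an info object.
    segments, _info = model.transcribe(audio, **merged)
    # Each segment exposes its text; join them into a single string.
    return "".join(segment.text for segment in segments).strip()
```

Because the model is passed in as an argument, the same function can be exercised with a stub object in tests, without loading any weights.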

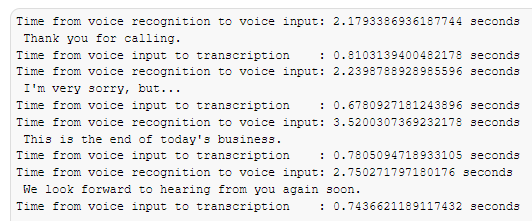

If the sentences are well separated, the transcription takes less than a second.

Large-v2 model

Executed with CUDA 11.7 on an NVIDIA GeForce RTX 3060 12GB.

- `pip install .`

- `python -m speech_to_text`

- Select "App Settings" and configure the settings.

- Select "Model Settings" and configure the settings.

- Select "Transcribe Settings" and configure the settings.

- Select "VAD Settings" and configure the settings.

- Start Transcription

- If you select local_model in "Model size or path", the model with the same name in the local folder will be referenced.

- Implemented a feature to generate audio files from the input sound.

- Implemented a feature to synchronize audio files with the transcription. Audio playback and text highlighting are linked.

- Implemented a feature to transcribe from audio files.

- Save and load previous settings.

- Use Silero VAD.

- Allow local parameters to be set from the GUI.