This project is currently being restructured. Don't use it right now; hold on for a bit.

In the next couple of weeks it will be ready. 😎 🚀

Some of the future new features and changes:

- Upgrade to the latest FastAPI.

- Migration from SQLAlchemy to SQLModel.

- Upgrade to Pydantic v2.

- Refactor and simplification of most of the code, a lot of the complexity won't be necessary anymore.

- Automatic TypeScript frontend client generated from the FastAPI API (OpenAPI).

- Migrate from Vue.js 2 to React with hooks and TypeScript.

- Make the project work as is, so you can clone and use it directly (without having to generate a project with Cookiecutter or Copier).

- Migrate from Cookiecutter to Copier

- Move from Docker Swarm Mode to Docker Compose for a simple deployment.

- GitHub Actions for CI.

- ⚡ FastAPI for the Python backend API.

- 🧰 SQLModel for the Python SQL database interactions (ORM).

- 🔍 Pydantic, used by FastAPI, for the data validation and settings management.

- 💾 PostgreSQL as the SQL database.

- 🚀 React for the frontend.

- 💃 Using TypeScript, hooks, Vite, and other parts of a modern frontend stack.

- 🎨 Chakra UI for the frontend components.

- 🤖 An automatically generated frontend client.

- 🐋 Docker Compose for development and production.

- 🔒 Secure password hashing by default.

- 🔑 JWT token authentication.

- 📫 Email based password recovery.

- ✅ Tests with Pytest.

- 📞 Traefik as a reverse proxy / load balancer.

- 🚢 Deployment instructions using Docker Compose, including how to set up a frontend Traefik proxy to handle automatic HTTPS certificates.

- 🏭 CI (continuous integration) and CD (continuous deployment) based on GitHub Actions.

You can just fork or clone this repository and use it as is.

✨ It just works. ✨

You can then update configs in the .env files to customize your configurations.

Make sure you at least change the value for SECRET_KEY in the main .env file before deploying to production.

You will be asked to provide passwords and secret keys for several components.

They have a default value of changethis. You can also update them later in the .env files after generating the project.

You could generate those secrets with:

python -c "import secrets; print(secrets.token_urlsafe(32))"

Copy the contents and use that as password / secret key. And run that again to generate another secure key.
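If you need several secrets at once, the same call can be wrapped in a short script. This is a minimal sketch and not part of the template: the helper name `generate_secrets` is hypothetical, and the key names passed to it are only examples.

```python
import secrets


def generate_secrets(names, nbytes=32):
    """Return a dict mapping each name to a fresh URL-safe random token."""
    # secrets.token_urlsafe draws from the OS's cryptographically secure source.
    return {name: secrets.token_urlsafe(nbytes) for name in names}


# Example names only; use whichever keys appear in your own .env file.
for key, value in generate_secrets(["SECRET_KEY", "POSTGRES_PASSWORD"]).items():
    print(f"{key}={value}")
```

Each call produces a different value, so every component gets its own secret.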

This project template also supports generating a new project using Copier.

It will copy all the files, ask you configuration questions, and update the .env files with your answers.

You can install Copier with:

pip install copier

Or better, if you have pipx, you can install it with:

pipx install copier

Note: If you have pipx, installing copier is optional; you could run it directly.

Decide on a name for your new project's directory; you will use it below. For example, my-awesome-project.

Go to the directory that will be the parent of your project, and run the command with your project's name:

copier copy https://github.com/tiangolo/full-stack-fastapi-postgresql my-awesome-project --trust

If you have pipx and you didn't install copier, you can run it directly:

pipx run copier copy https://github.com/tiangolo/full-stack-fastapi-postgresql my-awesome-project --trust

Note the --trust option is necessary to be able to execute a post-creation script that updates your .env files.

Copier will ask you for some data that you might want to have at hand before generating the project.

But don't worry, you can just update any of that in the .env files afterwards.

The input variables, with their default values (some auto generated) are:

- domain: (default: "localhost") Which domain name to use for the project, by default, localhost, but you should change it later (in .env).

- project_name: (default: "FastAPI Project") The name of the project, shown to API users (in .env).

- stack_name: (default: "fastapi-project") The name of the stack used for Docker Compose labels (no spaces) (in .env).

- secret_key: (default: "changethis") The secret key for the project, used for security, stored in .env, you can generate one with the method above.

- first_superuser: (default: "admin@example.com") The email of the first superuser (in .env).

- first_superuser_password: (default: "changethis") The password of the first superuser (in .env).

- smtp_host: (default: "") The SMTP server host to send emails, you can set it later in .env.

- smtp_user: (default: "") The SMTP server user to send emails, you can set it later in .env.

- smtp_password: (default: "") The SMTP server password to send emails, you can set it later in .env.

- emails_from_email: (default: "info@example.com") The email account to send emails from, you can set it later in .env.

- postgres_password: (default: "changethis") The password for the PostgreSQL database, stored in .env, you can generate one with the method above.

- pgadmin_default_user: (default: "admin") The default user for pgAdmin, you can set it later in .env.

- pgadmin_default_password: (default: "changethis") The default user password for pgAdmin, stored in .env.

- sentry_dsn: (default: "") The DSN for Sentry, if you are using it, you can set it later in .env.
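Since several of these values default to changethis, it can help to check for leftovers before deploying. The following is a rough sketch rather than a tool shipped with the template: the function name `find_default_secrets` is hypothetical, and the upper-case key names in the sample (other than SECRET_KEY and DOMAIN) are assumptions about how the answers end up in .env.

```python
def find_default_secrets(env_text, placeholder="changethis"):
    """Return the keys in .env-style text whose value is still the placeholder."""
    leftover = []
    for line in env_text.splitlines():
        line = line.strip()
        # Skip blank lines, comments, and anything that is not KEY=VALUE.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Tolerate optional surrounding quotes around the value.
        if value.strip().strip("'\"") == placeholder:
            leftover.append(key.strip())
    return leftover


# Sample content; the real key names in your .env may differ.
sample = """\
DOMAIN=localhost
SECRET_KEY=changethis
FIRST_SUPERUSER=admin@example.com
FIRST_SUPERUSER_PASSWORD="changethis"
"""
print(find_default_secrets(sample))  # ['SECRET_KEY', 'FIRST_SUPERUSER_PASSWORD']
```

To check a real file, you could pass it the result of reading your .env file as text.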

Deploy using Docker Compose and Traefik as a reverse proxy / load balancer handling automatic HTTPS certificates.

Check the file release-notes.md.

The FastAPI Project Template is licensed under the terms of the MIT license.

The documentation below is for your own project, not the Project Template. 👇

- Node.js (with npm).

- Start the stack with Docker Compose:

docker compose up -d

- Now you can open your browser and interact with these URLs:

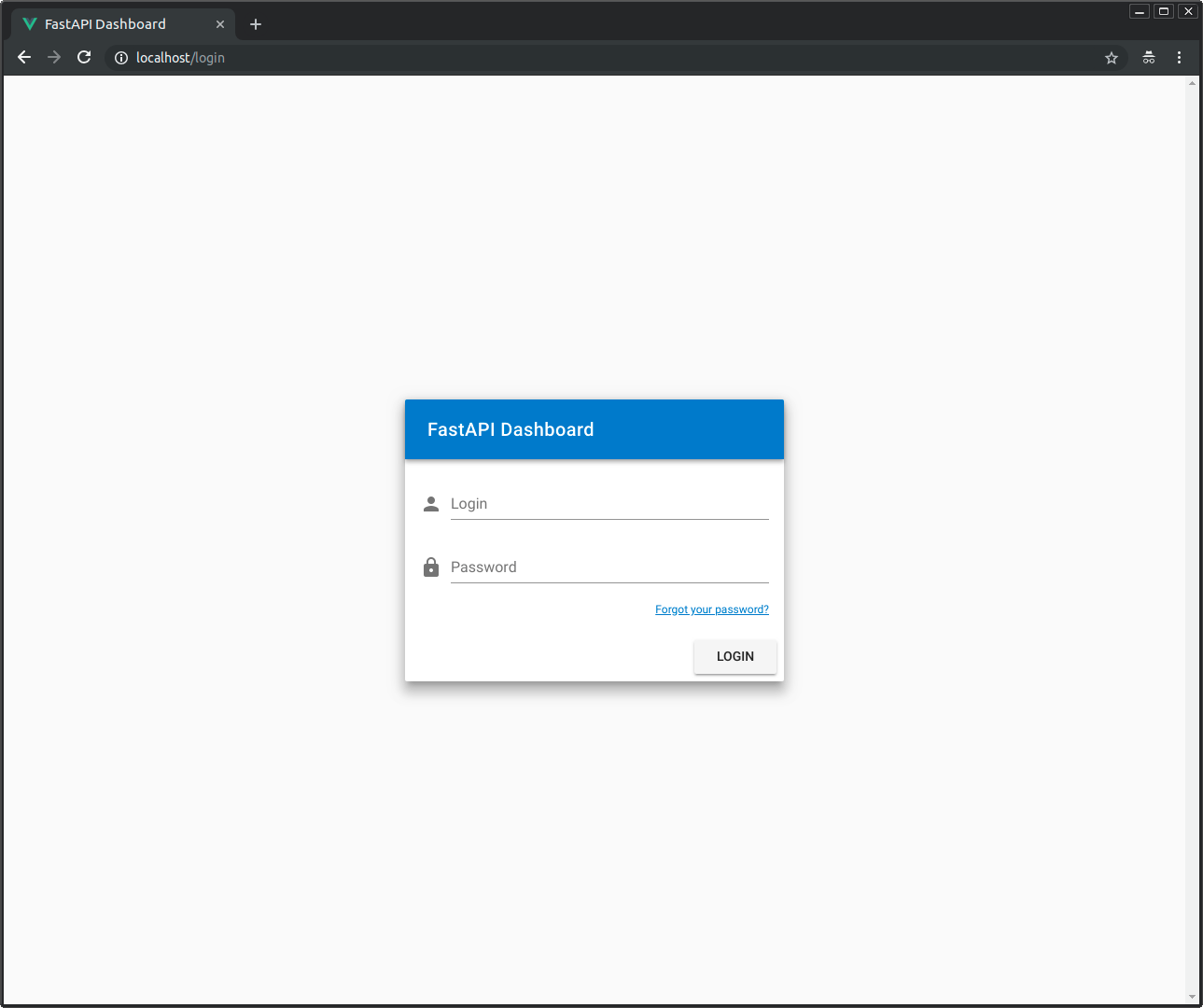

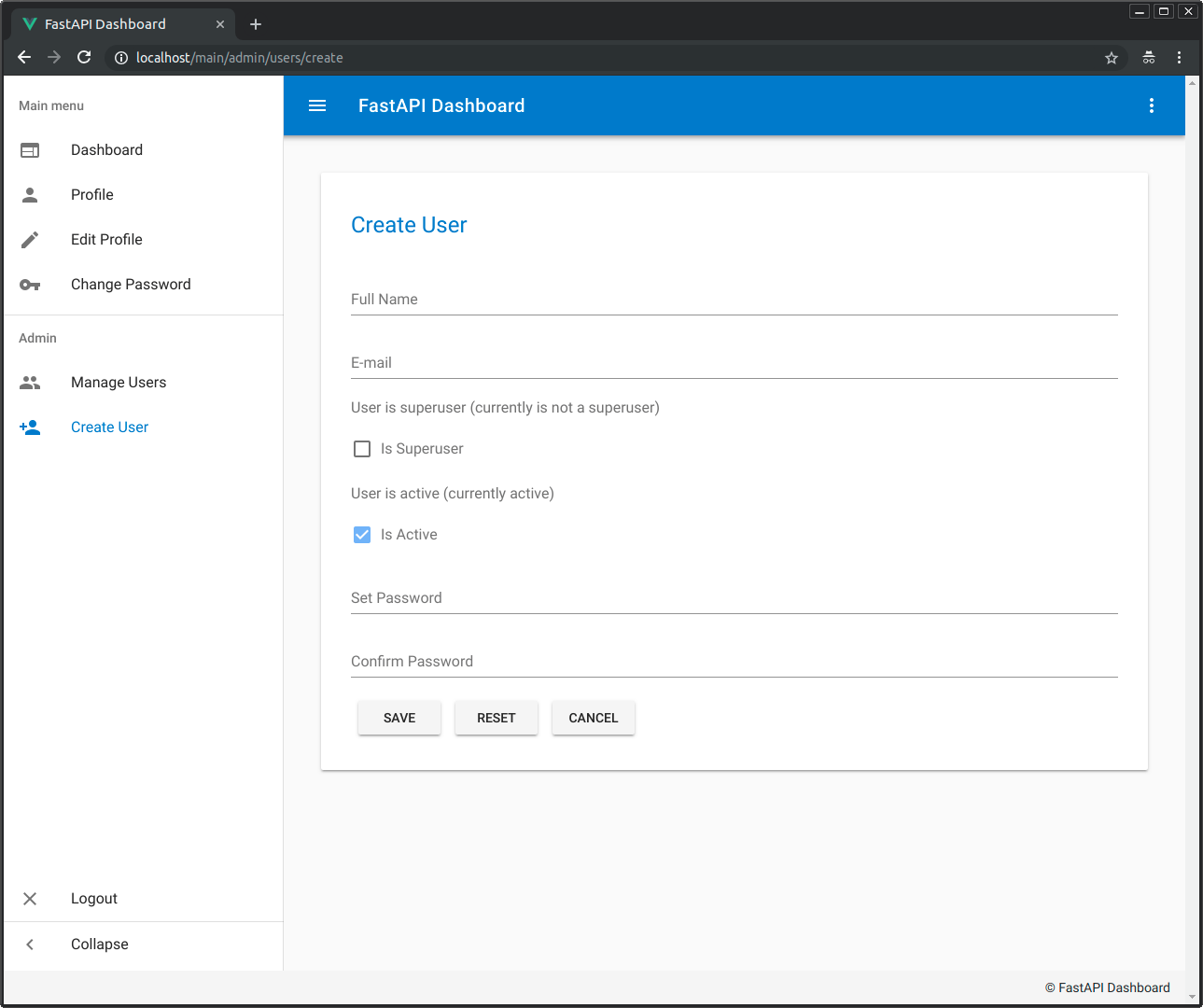

Frontend, built with Docker, with routes handled based on the path: http://localhost

Backend, JSON based web API based on OpenAPI: http://localhost/api/

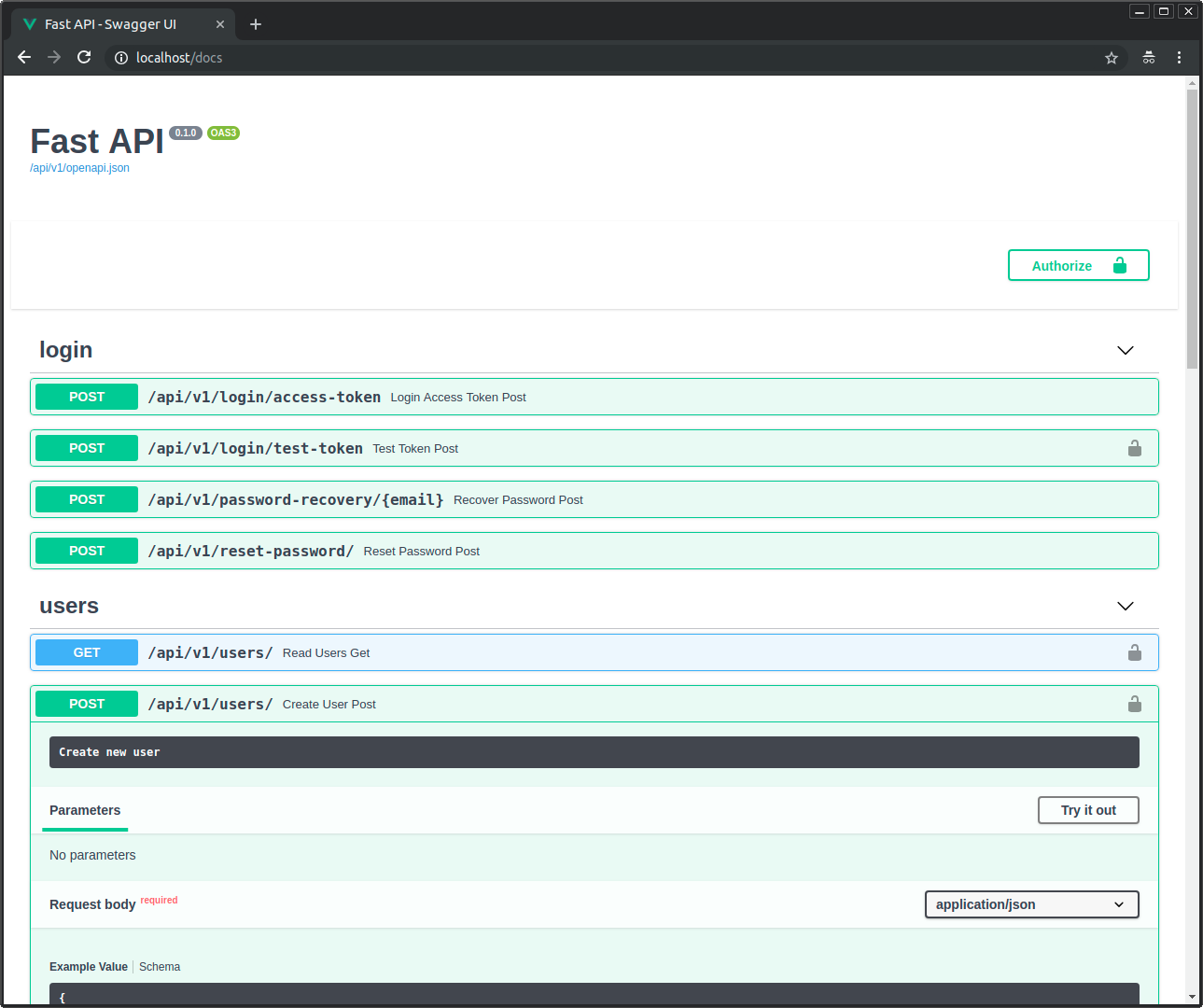

Automatic interactive documentation with Swagger UI (from the OpenAPI backend): http://localhost/docs

PGAdmin, PostgreSQL web administration: http://localhost:5050

Flower, administration of Celery tasks: http://localhost:5555

Traefik UI, to see how the routes are being handled by the proxy: http://localhost:8090

Note: The first time you start your stack, it might take a minute for it to be ready, while the backend waits for the database to be ready and configures everything. You can check the logs to monitor it.

To check the logs, run:

docker compose logs

To check the logs of a specific service, add the name of the service, e.g.:

docker compose logs backend

If your Docker is not running in localhost (the URLs above wouldn't work) you would need to use the IP or domain where your Docker is running.
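As an alternative to watching the logs, you could poll one of the URLs until it answers. This is only a sketch using the Python standard library; the helper names `http_ok` and `wait_until_ready` are hypothetical, and the retry count and delay are arbitrary choices.

```python
import http.client
import time
import urllib.error
import urllib.request


def http_ok(url, timeout=2.0):
    """Return True if the URL answers with a 2xx or 3xx status."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 400
    except (urllib.error.URLError, OSError, http.client.HTTPException):
        # Connection refused, timeout, or an HTTP error status: not ready yet.
        return False


def wait_until_ready(check, attempts=30, delay=2.0):
    """Call check() until it returns True; give up after `attempts` tries."""
    for attempt in range(attempts):
        if check():
            return True
        if attempt < attempts - 1:
            time.sleep(delay)
    return False


# Usage (not executed here), with the Swagger UI URL listed above:
#   wait_until_ready(lambda: http_ok("http://localhost/docs"))
```

If your Docker is not running in localhost, replace the host in the URL with the IP or domain where it is running.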

By default, the dependencies are managed with Poetry. Install it before continuing.

From ./backend/ you can install all the dependencies with:

$ poetry install

Then you can start a shell session with the new environment with:

$ poetry shell

Next, open your editor at ./backend/ (instead of the project root: ./), so that you see an ./app/ directory with your code inside. That way, your editor will be able to find all the imports, etc. Make sure your editor uses the environment you just created with Poetry.

Modify or add SQLModel models for data and SQL tables in ./backend/app/models.py, API endpoints in ./backend/app/api/, CRUD (Create, Read, Update, Delete) utils in ./backend/app/crud.py.

Add and modify tasks to the Celery worker in ./backend/app/worker.py.

During development, you can change Docker Compose settings that will only affect the local development environment in the file docker-compose.override.yml.

The changes to that file only affect the local development environment, not the production environment. So, you can add "temporary" changes that help the development workflow.

For example, the directory with the backend code is mounted as a Docker "host volume", mapping the code you change live to the directory inside the container. That allows you to test your changes right away, without having to build the Docker image again. It should only be done during development, for production, you should build the Docker image with a recent version of the backend code. But during development, it allows you to iterate very fast.

There is also a command override that runs /start-reload.sh (included in the base image) instead of the default /start.sh (also included in the base image). It starts a single server process (instead of multiple, as would be the case in production) and reloads the process whenever the code changes. Keep in mind that if you have a syntax error and save the Python file, it will break and exit, and the container will stop. After that, you can restart the container by fixing the error and running again:

$ docker compose up -d

There is also a commented out command override, you can uncomment it and comment the default one. It makes the backend container run a process that does "nothing", but keeps the container alive. That allows you to get inside your running container and execute commands inside, for example a Python interpreter to test installed dependencies, or start the development server that reloads when it detects changes.

To get inside the container with a bash session you can start the stack with:

$ docker compose up -d

and then exec inside the running container:

$ docker compose exec backend bash

You should see an output like:

root@7f2607af31c3:/app#

That means that you are in a bash session inside your container, as a root user, under the /app directory. This directory has another directory called "app" inside; that's where your code lives inside the container: /app/app.

There you can use the script /start-reload.sh to run the debug live reloading server. You can run that script from inside the container with:

$ bash /start-reload.sh

...it will look like:

root@7f2607af31c3:/app# bash /start-reload.sh

and then hit enter. That runs the live reloading server that auto reloads when it detects code changes.

Nevertheless, if it doesn't detect a change but a syntax error, it will just stop with an error. But as the container is still alive and you are in a Bash session, you can quickly restart it after fixing the error, running the same command ("up arrow" and "Enter").

...this previous detail is what makes it useful to have the container alive doing nothing and then, in a Bash session, make it run the live reload server.

To test the backend run:

$ bash ./scripts/test.sh

The tests run with Pytest; modify and add tests in ./backend/app/tests/.

If you use GitHub Actions the tests will run automatically.

If your stack is already up and you just want to run the tests, you can use:

docker compose exec backend /app/tests-start.sh

That /app/tests-start.sh script just calls pytest after making sure that the rest of the stack is running. If you need to pass extra arguments to pytest, you can pass them to that command and they will be forwarded.

For example, to stop on first error:

docker compose exec backend bash /app/tests-start.sh -x

Because the test scripts forward arguments to pytest, you can enable test coverage HTML report generation by passing --cov-report=html.

To run the local tests with coverage HTML reports:

DOMAIN=backend sh ./scripts/test-local.sh --cov-report=html

To run the tests in a running stack with coverage HTML reports:

docker compose exec backend bash /app/tests-start.sh --cov-report=html

As during local development your app directory is mounted as a volume inside the container, you can also run the migrations with alembic commands inside the container and the migration code will be in your app directory (instead of being only inside the container). So you can add it to your git repository.

Make sure you create a "revision" of your models and that you "upgrade" your database with that revision every time you change them, as this is what will update the tables in your database. Otherwise, your application will have errors.
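To see why a model change without a matching migration leads to errors, here is a small self-contained illustration. It uses the standard library's sqlite3 module with an in-memory database instead of PostgreSQL and Alembic, purely so that it runs anywhere. The table and column names are made up for the example, and the ALTER TABLE statement stands in for what an applied revision would do.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# The table as it exists before any migration is applied.
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.execute("INSERT INTO users (email) VALUES ('admin@example.com')")


def read_last_names(connection):
    """Query a column that the updated model expects to exist."""
    return connection.execute("SELECT last_name FROM users").fetchall()


# The code already expects last_name, but the table does not have it yet.
try:
    read_last_names(conn)
    failed_before_migration = False
except sqlite3.OperationalError:
    failed_before_migration = True

# Stand-in for applying the revision: the schema change reaches the database.
conn.execute("ALTER TABLE users ADD COLUMN last_name TEXT")
rows_after_migration = read_last_names(conn)

print(failed_before_migration)  # True
print(rows_after_migration)     # [(None,)]
```

The query fails until the schema change is applied, which is the kind of error you would see when the models and the database tables are out of sync.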

- Start an interactive session in the backend container:

$ docker compose exec backend bash

- Alembic is already configured to import your SQLModel models from ./backend/app/models.py.

- After changing a model (for example, adding a column), inside the container, create a revision, e.g.:

$ alembic revision --autogenerate -m "Add column last_name to User model"

- Commit to the git repository the files generated in the alembic directory.

- After creating the revision, run the migration in the database (this is what will actually change the database):

$ alembic upgrade head

If you don't want to use migrations at all, uncomment the lines in the file at ./backend/app/db/init_db.py that end in:

SQLModel.metadata.create_all(engine)

and comment the line in the file prestart.sh that contains:

$ alembic upgrade head

If you don't want to start with the default models and want to remove them / modify them, from the beginning, without having any previous revision, you can remove the revision files (.py Python files) under ./backend/app/alembic/versions/. And then create a first migration as described above.

You might want to use something different than localhost as the domain. For example, if you are having problems with cookies that need a subdomain, and Chrome is not allowing you to use localhost.

In that case, you have two options: you could modify your system hosts file following the instructions below in Development with a custom IP, or you can just use localhost.tiangolo.com, which is set up to point to localhost (to the IP 127.0.0.1), along with all its subdomains. And as it is an actual domain, the browsers will store the cookies you set during development, etc.

If you used the default CORS enabled domains while generating the project, localhost.tiangolo.com was configured to be allowed. If you didn't, you will need to add it to the list in the variable BACKEND_CORS_ORIGINS in the .env file.

To configure it in your stack, follow the section Change the development "domain" below, using the domain localhost.tiangolo.com.

After performing those steps you should be able to open: http://localhost.tiangolo.com and it will be served by your stack in localhost.

Check all the corresponding available URLs in the section at the end.

If you are running Docker in an IP address different than 127.0.0.1 (localhost), you will need to perform some additional steps. That will be the case if you are running a custom Virtual Machine or your Docker is located in a different machine in your network.

In that case, you will need to use a fake local domain (dev.example.com) and make your computer think that the domain is served by the custom IP (e.g. 192.168.99.150).

If you have a custom domain like that, you need to add it to the list in the variable BACKEND_CORS_ORIGINS in the .env file.

- Open your hosts file with administrative privileges using a text editor:

  - Note for Windows: If you are in Windows, open the main Windows menu, search for "notepad", right click on it, and select the option "open as Administrator" or similar. Then click the "File" menu, "Open file", go to the directory c:\Windows\System32\Drivers\etc\, select the option to show "All files" instead of only "Text (.txt) files", and open the hosts file.

  - Note for Mac and Linux: Your hosts file is probably located at /etc/hosts; you can edit it in a terminal by running sudo nano /etc/hosts.

- In addition to the contents it might have, add a new line with the custom IP (e.g. 192.168.99.150), a space character, and your fake local domain: dev.example.com.

The new line might look like:

192.168.99.150 dev.example.com

- Save the file.

  - Note for Windows: Make sure you save the file as "All files", without an extension of .txt. By default, Windows tries to add the extension. Make sure the file is saved as is, without extension.

...that will make your computer think that the fake local domain is served by that custom IP, and when you open that URL in your browser, it will talk directly to your locally running server when it is asked to go to dev.example.com and think that it is a remote server while it is actually running in your computer.
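To double-check that the entry is in place, you could look the domain up in the file's contents. The sketch below works on hosts-file text passed as a string, so it runs without touching your system; the function name `lookup_in_hosts` is hypothetical.

```python
def lookup_in_hosts(hosts_text, domain):
    """Return the IP mapped to `domain` in hosts-file text, or None."""
    for line in hosts_text.splitlines():
        # Drop trailing comments, then split into IP followed by host names.
        fields = line.split("#", 1)[0].split()
        if len(fields) >= 2 and domain in fields[1:]:
            return fields[0]
    return None


sample_hosts = """\
127.0.0.1 localhost
# development stack on a custom IP
192.168.99.150 dev.example.com
"""
print(lookup_in_hosts(sample_hosts, "dev.example.com"))  # 192.168.99.150
```

To check your real configuration, pass it the text read from your hosts file (for example /etc/hosts on Mac and Linux).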

To configure it in your stack, follow the section Change the development "domain" below, using the domain dev.example.com.

After performing those steps you should be able to open: http://dev.example.com and it will be served by your stack in 192.168.99.150.

Check all the corresponding available URLs in the section at the end.

If you need to use your local stack with a different domain than localhost, you need to make sure the domain you use points to the IP where your stack is set up.

To simplify your Docker Compose setup, for example, so that the API docs (Swagger UI) know where your API is, you should let it know you are using that domain for development.

- Open the file located at ./.env. It would have a line like:

DOMAIN=localhost

- Change it to the domain you are going to use, e.g.:

DOMAIN=localhost.tiangolo.com

That variable will be used by the Docker Compose files.
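If you switch domains often, the edit can be scripted. This is a minimal sketch that works on .env-style text; the function name `set_env_value` is hypothetical, and STACK_NAME in the sample is an assumed key included only to show that other lines are left untouched.

```python
def set_env_value(env_text, key, value):
    """Return .env-style text with `key` set to `value`, adding it if absent."""
    lines = env_text.splitlines()
    replaced = False
    for index, line in enumerate(lines):
        # Match only an exact KEY= prefix so DOMAIN does not match DOMAIN_X.
        if line.startswith(f"{key}="):
            lines[index] = f"{key}={value}"
            replaced = True
    if not replaced:
        lines.append(f"{key}={value}")
    return "\n".join(lines) + "\n"


before = "DOMAIN=localhost\nSTACK_NAME=fastapi-project\n"
after = set_env_value(before, "DOMAIN", "localhost.tiangolo.com")
print(after, end="")
```

The function returns new text instead of writing to disk, so you can review the result before saving it to your .env file.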

After that, you can restart your stack with:

docker compose up -d

and check all the corresponding available URLs in the section at the end.

- Enter the frontend directory, install the NPM packages and start the live server using the npm scripts:

cd frontend

npm install

npm run dev

Then open your browser at http://localhost:5173/.

Notice that this live server is not running inside Docker, it is for local development, and that is the recommended workflow. Once you are happy with your frontend, you can build the frontend Docker image and start it, to test it in a production-like environment. But compiling the image at every change will not be as productive as running the local development server with live reload.

Check the file package.json to see other available options.

If you are developing an API-only app and want to remove the frontend, you can do it easily:

- Remove the ./frontend directory.

- In the docker-compose.yml file, remove the whole service / section frontend.

- In the docker-compose.override.yml file, remove the whole service / section frontend.

Done, you have a frontend-less (api-only) app. 🤓

If you want, you can also remove the FRONTEND environment variables from:

- .env

- ./scripts/*.sh

But that would only be to clean them up; leaving them won't really have any effect either way.

You can deploy the stack using Docker Compose with a main Traefik proxy outside handling communication to the outside world and HTTPS certificates.

And you can use CI (continuous integration) systems to do it automatically.

But you have to configure a couple of things first.

This stack expects the public Traefik network to be named traefik-public.

If you need to use a different Traefik public network name, update it in the docker-compose.yml files, in the section:

    networks:
      traefik-public:
        external: true

Change traefik-public to the name of the used Traefik network. And then update it in the file .env:

TRAEFIK_PUBLIC_NETWORK=traefik-public

There is a main docker-compose.yml file with all the configurations that apply to the whole stack; it is used automatically by docker compose.

And there's also a docker-compose.override.yml with overrides for development, for example to mount the source code as a volume. It is used automatically by docker compose to apply overrides on top of docker-compose.yml.

These Docker Compose files use the .env file containing configurations to be injected as environment variables in the containers.

They also use some additional configurations taken from environment variables set in the scripts before calling the docker compose command.

It is all designed to support several "stages", like development, building, testing, and deployment. Also, allowing the deployment to different environments like staging and production (and you can add more environments very easily).

They are designed to have the minimum repetition of code and configurations, so that if you need to change something, you have to change it in as few places as possible. That's why the files use environment variables that get auto-expanded. That way, if, for example, you want to use a different domain, you can call the docker compose command with a different DOMAIN environment variable instead of having to change the domain in several places inside the Docker Compose files.

Also, if you want to have another deployment environment, say preprod, you just have to change environment variables, but you can keep using the same Docker Compose files.
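The idea of variable expansion can be illustrated with Python's string.Template, which uses the same ${NAME} placeholder style. This is only an analogy, not how Docker Compose is implemented, and Compose's own interpolation supports more forms than this sketch. The BACKEND_URL line is a hypothetical example, not copied from the project's Compose files.

```python
from string import Template

# A hypothetical configuration line that refers to DOMAIN in one place.
compose_line = Template("BACKEND_URL=http://${DOMAIN}/api/")


def expand(template, environment):
    """Substitute ${NAME} placeholders, raising KeyError if one is missing."""
    return template.substitute(environment)


# The same line serves different environments by changing only the variable.
print(expand(compose_line, {"DOMAIN": "localhost"}))
print(expand(compose_line, {"DOMAIN": "preprod.example.com"}))
```

Because the domain appears only as a placeholder, switching environments means changing one value rather than editing every line that mentions it.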

The .env file is the one that contains all your configurations, generated keys and passwords, etc.

Depending on your workflow, you might want to exclude it from Git, for example if your project is public. In that case, you would have to make sure to set up a way for your CI tools to obtain it while building or deploying your project.

One way to do it could be to add each environment variable to your CI/CD system, and update the docker-compose.yml file to read that specific env var instead of reading the .env file.

The production or staging URLs would use these same paths, but with your own domain.

Development URLs, for local development.

Frontend: http://localhost

Backend: http://localhost/api/

Automatic Interactive Docs (Swagger UI): http://localhost/docs

Automatic Alternative Docs (ReDoc): http://localhost/redoc

PGAdmin: http://localhost:5050

Flower: http://localhost:5555

Traefik UI: http://localhost:8090

Development URLs, for local development with the localhost.tiangolo.com domain.

Frontend: http://localhost.tiangolo.com

Backend: http://localhost.tiangolo.com/api/

Automatic Interactive Docs (Swagger UI): http://localhost.tiangolo.com/docs

Automatic Alternative Docs (ReDoc): http://localhost.tiangolo.com/redoc

PGAdmin: http://localhost.tiangolo.com:5050

Flower: http://localhost.tiangolo.com:5555

Traefik UI: http://localhost.tiangolo.com:8090
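Since the URLs follow the same pattern for every domain, they can be generated rather than listed by hand. The sketch below is only an illustration: the function name `dev_urls` is hypothetical, and using a single scheme for every URL (http by default) is an assumption you may need to adjust.

```python
def dev_urls(domain="localhost", scheme="http"):
    """Build the development URLs listed above for a given domain."""
    base = f"{scheme}://{domain}"
    return {
        "Frontend": base,
        "Backend": f"{base}/api/",
        "Swagger UI": f"{base}/docs",
        "ReDoc": f"{base}/redoc",
        "PGAdmin": f"{base}:5050",
        "Flower": f"{base}:5555",
        "Traefik UI": f"{base}:8090",
    }


for name, url in dev_urls("localhost.tiangolo.com").items():
    print(f"{name}: {url}")
```

Calling it with dev.example.com, or with your own staging domain, gives the corresponding list for that environment.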