Labelmm is the next generation of annotation tools, bringing state-of-the-art (SOTA) computer vision models from mmdetection to Labelme in a seamless experience with a modern user interface and an intuitive workflow.


1. Install PyTorch, preferably using Anaconda:

conda create --name labelmm python=3.8 -y

conda activate labelmm

conda install pytorch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 pytorch-cuda=11.7 -c pytorch -c nvidia

pip install -r requirements.txt

mim install mmcv-full==1.7.0
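Before running the tool, it can help to confirm that the pinned versions from the commands above actually ended up in the active environment. The following is a minimal sketch, not part of Labelmm; it only reads installed package metadata, and the function name `check_versions` is hypothetical.

```python
"""Check that the packages pinned in the install commands are present."""
from importlib.metadata import PackageNotFoundError, version

# Versions copied from the installation commands; adjust if they change.
PINNED = {
    "torch": "1.13.1",
    "torchvision": "0.14.1",
    "torchaudio": "0.13.1",
    "mmcv-full": "1.7.0",
}


def check_versions(pinned):
    """Return {package: status}: 'ok', 'missing', or the mismatched installed version."""
    report = {}
    for name, wanted in pinned.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            report[name] = "missing"
            continue
        # Some torch builds report a local suffix such as "+cu117"; ignore it.
        report[name] = "ok" if installed.split("+")[0] == wanted else installed
    return report


if __name__ == "__main__":
    for pkg, status in check_versions(PINNED).items():
        print(f"{pkg:12} {status}")
```

Any line other than `ok` points at a package worth reinstalling before launching the tool.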

Run the tool from the labelme-master directory:

cd labelme-master

python __main__.py


Some Linux machines may show the following error:

Could not load the Qt platform plugin "xcb" in "/home/<username>/miniconda3/envs/test/lib/python3.8/site-packages/cv2/qt/plugins" even though it was found.

This application failed to start because no Qt platform plugin could be initialized. Reinstalling the application may fix this problem.

Available platform plugins are: xcb, eglfs, linuxfb, minimal, minimalegl, offscreen, vnc, wayland-egl, wayland, wayland-xcomposite-egl, wayland-xcomposite-glx, webgl.

It can be solved by installing the headless OpenCV build:

pip3 install opencv-python-headless
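If the xcb error persists after installing the headless build, one possible cause worth checking (an assumption, not stated in this guide) is that several OpenCV wheels, such as `opencv-python` and `opencv-python-headless`, are installed side by side. This hedged diagnostic sketch only lists the OpenCV distributions in the active environment; it changes nothing, and the function name is hypothetical.

```python
"""List the OpenCV distributions installed in the active environment."""
from importlib.metadata import distributions


def installed_opencv_packages():
    """Return sorted names of installed distributions whose name starts with 'opencv'."""
    names = set()
    for dist in distributions():
        name = dist.metadata.get("Name")
        # Skip broken installs that carry no Name field.
        if name and name.lower().startswith("opencv"):
            names.add(name)
    return sorted(names)


if __name__ == "__main__":
    print(installed_opencv_packages())
```

If more than one OpenCV package shows up, uninstalling the extras and keeping only the headless build is a reasonable next step to try.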

Some Windows machines may show the following error when installing mmdet:

Building wheel for pycocotools (setup.py) ... error

...

error: Microsoft Visual C++ 14.0 or greater is required. Get it with "Microsoft C++ Build Tools": https://visualstudio.microsoft.com/visual-cpp-build-tools/

You can try installing pycocotools from conda-forge:

conda install -c conda-forge pycocotools

Alternatively, use the Visual Studio Installer to install MSVC v143 - VS 2022 C++ x64/x86 build tools (Latest).

Labelmm provides 3 input modes:

- Image: for annotating a single image

- Directory: for annotating the images in a directory

- Video: for annotating videos
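To make the Directory mode concrete, here is a minimal sketch of collecting the images a directory session would step through. The function name and the extension list are hypothetical; Labelmm's actual file handling and supported formats may differ.

```python
from pathlib import Path

# Hypothetical extension list; the formats Labelmm accepts may differ.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".bmp", ".tif", ".tiff"}


def list_images(directory):
    """Return image files directly inside `directory` (non-recursive), sorted by path."""
    folder = Path(directory)
    return sorted(
        p for p in folder.iterdir()
        # Skip sub-directories and files with non-image extensions (case-insensitive).
        if p.is_file() and p.suffix.lower() in IMAGE_EXTENSIONS
    )
```

Sorting gives a stable order, so "next" and "previous" image navigation stays predictable between sessions.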

For model selection, Labelmm provides the Model Explorer, which exposes the many models available in mmdetection and Ultralytics YOLOv8 and lets users compare, download, and select the models for their own library.

For object tracking, Labelmm offers 5 different tracking models and lets you choose between them.


For object detection on videos, Labelmm provides a seamless experience for video navigation, tracking settings, and visualization options, and it can export the tracking results to a video file.

Besides this, Labelmm provides a new way to modify tracking results, including propagating edits and deletions across frames and several interpolation methods.
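The interpolation methods Labelmm offers are not detailed here; linear interpolation between two keyframes is one common choice, sketched below as an illustration only. The function name and the `(x, y, w, h)` box layout are assumptions, not Labelmm's internals.

```python
def interpolate_boxes(frame_a, box_a, frame_b, box_b):
    """Linearly interpolate boxes for the frames strictly between two keyframes.

    Boxes are (x, y, w, h) tuples. Returns {frame_index: box}.
    """
    if frame_b <= frame_a:
        raise ValueError("frame_b must come after frame_a")
    result = {}
    span = frame_b - frame_a
    for f in range(frame_a + 1, frame_b):
        t = (f - frame_a) / span  # fraction of the way from frame_a to frame_b
        # Move each coordinate the same fraction of the way between the keyframes.
        result[f] = tuple(a + t * (b - a) for a, b in zip(box_a, box_b))
    return result
```

For example, `interpolate_boxes(10, (0, 0, 10, 10), 14, (40, 20, 10, 10))` fills frames 11, 12, and 13 with boxes at x = 10, 20, and 30. Non-linear schemes (for example, spline-based) would replace only the computation of `t`-weighted coordinates.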

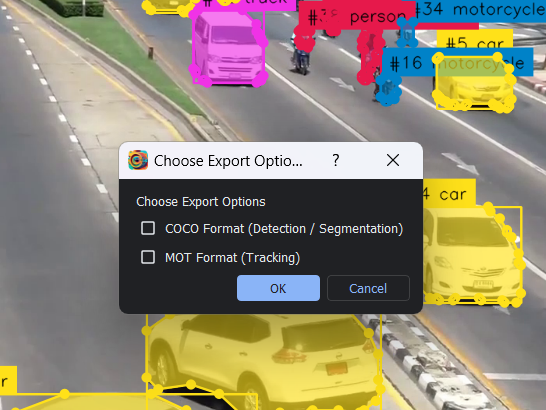

For instance segmentation, Labelmm can export annotations from all input modes to COCO format, while for object detection on videos it can export the tracking results to MOT format.
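For reference, MOTChallenge-style result files contain one comma-separated line per box: `frame, id, bb_left, bb_top, bb_width, bb_height, conf, x, y, z`, with frames counted from 1 and the 3D `x, y, z` columns set to -1 for 2D tracking. The sketch below writes that layout from a hypothetical in-memory list of dicts; Labelmm's own exporter and exact column choices may differ.

```python
import csv
import io


def to_mot_lines(tracks):
    """Convert tracking results to MOTChallenge-style text.

    `tracks` is a list of dicts with keys frame (1-based), id, x, y, w, h, score.
    This dict layout is hypothetical, not Labelmm's internal format.
    """
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    # Sort by frame, then track id, so the file reads frame by frame.
    for t in sorted(tracks, key=lambda t: (t["frame"], t["id"])):
        writer.writerow(
            [t["frame"], t["id"], t["x"], t["y"], t["w"], t["h"], t["score"], -1, -1, -1]
        )
    return buf.getvalue()
```

Writing the returned string to a `.txt` file produces a file in the shape MOT evaluation tools generally expect.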

- Threshold Selection

- Select Classes (from the 80 COCO classes)

- Track assigned objects only

- Merge Models (run both models and merge their results)

- Show Runtime Type (CPU/GPU)

- Show GPU Memory Usage

- Video Navigation (Frame by Frame, Fast Forward, Fast Backward, Play/Pause)

- Light / Dark Theme Support (syncs with OS theme)

- Customizable UI Elements (Hide/Show and Change Position)
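The Threshold Selection and Select Classes options can be pictured as a post-filter on model outputs. This is a minimal sketch assuming a hypothetical `Detection` record; Labelmm may apply these filters differently, for example inside the model call itself.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical detection record; not Labelmm's internal type."""
    class_name: str
    score: float
    box: tuple  # (x1, y1, x2, y2)


def filter_detections(detections, threshold, selected_classes=None):
    """Keep detections with score >= threshold whose class is selected.

    selected_classes=None keeps every class.
    """
    return [
        d for d in detections
        if d.score >= threshold
        and (selected_classes is None or d.class_name in selected_classes)
    ]
```

Passing a set such as `{"person", "car"}` for `selected_classes` keeps membership checks cheap even when all 80 COCO classes are listed.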

Labelmm is an open-source project and contributions are very welcome, especially in this early stage of development.

You can contribute by:

- Creating an issue to report bugs 🐞, suggest new features 🌟, or just give your feedback 📝

- Creating a pull request to fix bugs, add new features, improve code quality, optimize performance, improve documentation, or even just fix typos

- Reviewing pull requests and helping with the code review process

- Spreading the word about Labelmm to help grow the community 🌎, by sharing the project on social media or just telling your friends about it

Labelmm is released under the GPLv3 license.