Official implementation of "Text2Model: Model Induction for Zero-shot Generalization Using Task Descriptions".

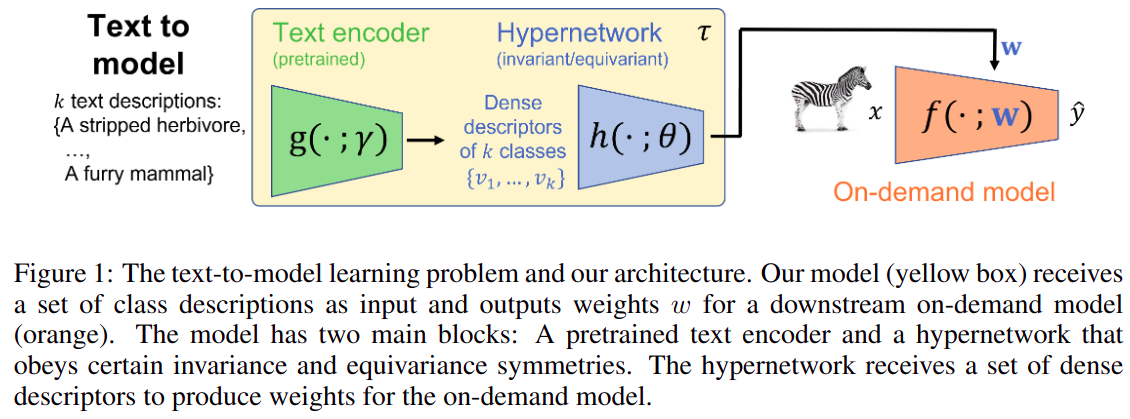

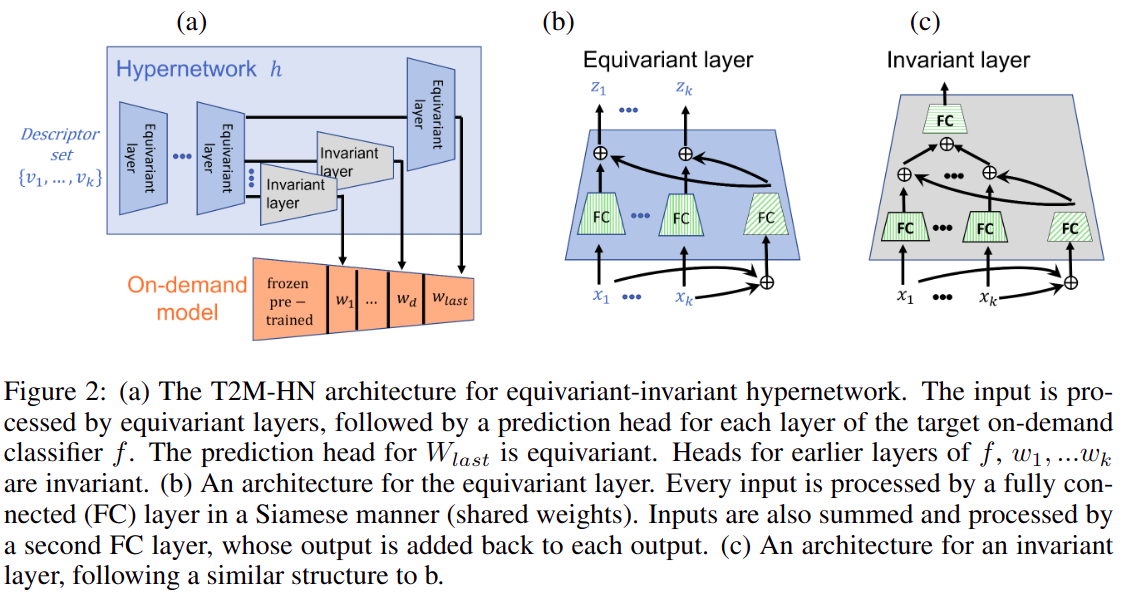

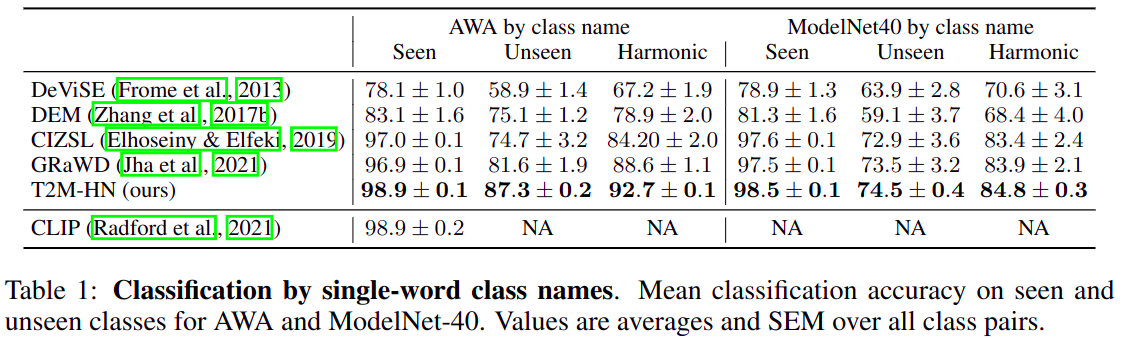

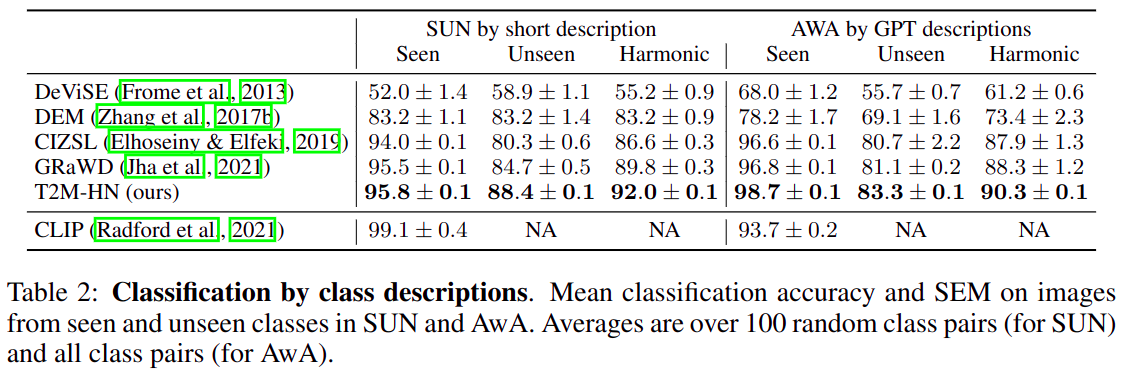

We study the problem of generating a training-free, task-dependent visual classifier from text descriptions alone, without visual samples. We analyze the symmetries of this Text2Model (T2M) setup and characterize the equivariance and invariance properties of the corresponding models. In light of these properties, we design a hypernetwork-based architecture that, given a set of new class descriptions, predicts the weights of an object recognition model that classifies images from those zero-shot classes. We demonstrate the benefits of our approach compared to zero-shot learning from text descriptions in image and point-cloud classification, using various types of text descriptions, from single words to rich text descriptions.
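The equivariance property mentioned in the abstract can be illustrated with a toy sketch: if the class descriptions are given in a different order, the predicted per-class classifier weights should come out reordered in the same way. The code below is not the architecture in this repository. It is a minimal DeepSets-style layer in plain Python, and the names `equivariant_layer` and `classify`, the scalar parameters `w_self` and `w_mean`, and the hand-written embeddings are all assumptions made for illustration.

```python
def equivariant_layer(descriptions, w_self, w_mean):
    """Toy permutation-equivariant layer (illustrative, not the repo's model).

    Each class output mixes its own description embedding with the mean
    embedding over all classes. Reordering the inputs reorders the outputs
    in the same way, because the mean does not depend on the order.
    """
    n = len(descriptions)
    dim = len(descriptions[0])
    mean = [sum(d[k] for d in descriptions) / n for k in range(dim)]
    return [
        [w_self * d[k] + w_mean * mean[k] for k in range(dim)]
        for d in descriptions
    ]


def classify(image_features, class_weights):
    """Linear classifier: return the index of the highest-scoring class."""
    scores = [
        sum(f * w for f, w in zip(image_features, weights))
        for weights in class_weights
    ]
    return max(range(len(scores)), key=scores.__getitem__)


# Hypothetical 2-d description embeddings for three unseen classes.
descriptions = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# "Hypernetwork" output: one weight vector per class description.
weights = equivariant_layer(descriptions, w_self=2.0, w_mean=-1.0)

# Permuting the descriptions permutes the predicted weights identically.
perm = [2, 0, 1]
weights_perm = equivariant_layer(
    [descriptions[i] for i in perm], w_self=2.0, w_mean=-1.0
)

# Classify a hypothetical image feature vector with the predicted weights.
predicted_class = classify([1.0, -0.5], weights)
```

In this sketch the layer's parameters are shared across classes, which is what makes the output order follow the input order. A real hypernetwork would use learned weight matrices and several such layers rather than two scalars.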

    sudo apt install docker.io
    sudo groupadd docker
    sudo usermod -aG docker $USER
    newgrp docker

    docker pull amosy3/t2m:latest
    docker run --rm -it -v $(pwd):/data:rw --name text2model amosy3/t2m:latest

    git clone https://github.com/amosy3/Text2Model.git
    cd Text2Model
    chmod +x download.sh
    ./download.sh

    git config --global --add safe.directory /data
    python main.py --batch_size=64 --hn_train_epochs=100 --hnet_hidden_size=120 --inner_train_epochs=3 --lr=0.005 --momentum=0.9 --weight_decay=0.0001 --text_encoder SBERT --hn_type EV