Project 2: Reacher Continuous Control

Project description

In this environment, called Reacher, a double-jointed arm can move to target locations. A reward of +0.1 is provided for each step that the agent's hand is in the goal location. Thus, the goal of the agent is to maintain its position at the target location for as many time steps as possible. Additional information can be found here: link

- State space is a 33-dimensional continuous vector, consisting of the position, rotation, velocity, and angular velocities of the arm.

- Action space is a 4-dimensional continuous vector, corresponding to the torque applicable to two joints. Every entry in the action vector should be a number between -1 and 1.

- Solution criteria: the environment is considered solved when the agent gets an average score of +30 over 100 consecutive episodes (averaged over all agents in the case of the multi-agent environment).
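The action bound and the solution criterion above can be sketched in a few lines of Python. This is only an illustration, not the code from the notebook: the helper names (`clip_actions`, `episode_score`, `is_solved`) and the constants are assumptions introduced here, and the numbers in the usage part are made up.

```python
from collections import deque

import numpy as np

SOLVED_SCORE = 30.0  # target average score from the solution criteria
WINDOW = 100         # number of consecutive episodes to average over


def clip_actions(actions):
    """Clamp every entry of the action array to the valid range [-1, 1]."""
    return np.clip(actions, -1.0, 1.0)


def episode_score(per_agent_returns):
    """Average one episode's return over all agents (20 in the multi-agent version)."""
    return float(np.mean(per_agent_returns))


def is_solved(scores_window):
    """Return True once a full window of episodes averages at least SOLVED_SCORE."""
    return len(scores_window) == WINDOW and bool(np.mean(scores_window) >= SOLVED_SCORE)


# Usage with made-up returns (not results from this project).
scores_window = deque(maxlen=WINDOW)
for _ in range(WINDOW):
    scores_window.append(episode_score(np.full(20, 31.0)))
```

Using a `deque` with `maxlen=WINDOW` keeps only the most recent 100 episode scores, so the check always looks at consecutive episodes.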

Dependencies

To set up your python environment to run the code in this repository, please follow the instructions below.

- Create (and activate) a new environment with Python 3.6.

  - Linux or Mac:

    conda create --name drlnd python=3.6
    source activate drlnd

  - Windows:

    conda create --name drlnd python=3.6
    activate drlnd

- Follow the instructions in this repository to perform a minimal install of OpenAI gym.

- Clone the repository (if you haven't already!), and navigate to the python/ folder. Then, install several dependencies.

  git clone https://github.com/udacity/deep-reinforcement-learning.git
  cd deep-reinforcement-learning/python
  pip install .

- Create an IPython kernel for the drlnd environment.

  python -m ipykernel install --user --name drlnd --display-name "drlnd"

- Before running code in a notebook, change the kernel to match the drlnd environment by using the drop-down Kernel menu.

Getting Started

- Download the environment from one of the links below. You need only select the environment that matches your operating system (note: the links relate to the second version of the environment):

- Twenty (20) Agents

- Linux: click here

- Mac OSX: click here

- Windows (32-bit): click here

- Windows (64-bit): click here

(For Windows users) Check out this link if you need help with determining if your computer is running a 32-bit version or 64-bit version of the Windows operating system.

(For AWS) If you'd like to train the agent on AWS (and have not enabled a virtual screen), then please use this link (version 1) or this link (version 2) to obtain the "headless" version of the environment. You will not be able to watch the agent without enabling a virtual screen, but you will be able to train the agent. (To watch the agent, you should follow the instructions to enable a virtual screen, and then download the environment for the Linux operating system above.)


- Place the file in this folder, unzip (or decompress) the file, and then write the correct path in the argument for creating the environment in the notebook Continuous_Control_DDPG.ipynb:

  env = UnityEnvironment(file_name="Reacher.app")

Description

.

├── images # Supporting images

├── checkpoint # Contains the saved models

│ ├── checkpoint_actor.pth # Saved model weights for Actor network

│ ├── checkpoint_critic.pth # Saved model weights for Critic Network

├── results # Contains images of result

│ ├── ddpg_result.png # Result plot for the DDPG model

├── Continuous_Control_DDPG.ipynb # Notebook with solution using DDPG model

Instructions

Follow the instructions in Continuous_Control_DDPG.ipynb to get started with training your own agent!

To watch a trained agent, run the Model in action section of the notebook after loading the environment. It loads the saved model and starts playing the game.
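As a rough illustration of what such a section does, the sketch below runs a single episode and returns the score averaged over all agents. It is not the notebook's code: `run_episode` and `policy` are hypothetical names, and `env` is assumed to expose the same interface as the `UnityEnvironment` object created above (`brain_names`, `reset`, `step`, and result objects with `vector_observations`, `rewards`, `local_done`, and `agents`).

```python
import numpy as np


def run_episode(env, policy, train_mode=False):
    """Run one episode and return the score averaged over all agents.

    `env` is assumed to behave like unityagents.UnityEnvironment.
    `policy` is a hypothetical callable mapping a (num_agents, 33) state
    array to a (num_agents, 4) action array.
    """
    brain_name = env.brain_names[0]
    env_info = env.reset(train_mode=train_mode)[brain_name]
    states = env_info.vector_observations
    scores = np.zeros(len(env_info.agents))  # one running return per agent

    while True:
        # Keep every torque value inside the valid range [-1, 1].
        actions = np.clip(policy(states), -1.0, 1.0)
        env_info = env.step(actions)[brain_name]
        scores += env_info.rewards
        states = env_info.vector_observations
        if np.any(env_info.local_done):  # stop when any agent's episode ends
            break

    return float(np.mean(scores))
```

In the notebook, `policy` would presumably wrap the actor network restored from checkpoint/checkpoint_actor.pth; a random policy such as `lambda s: np.random.randn(len(s), 4)` is enough to check that the environment loads and steps correctly.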

Paper implemented

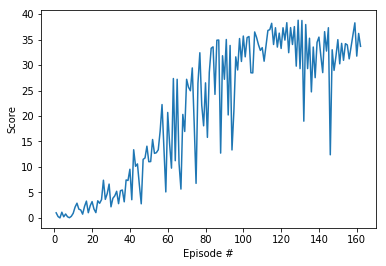

Results

Plot showing the score per episode over all the episodes. The environment was solved in 162 episodes.