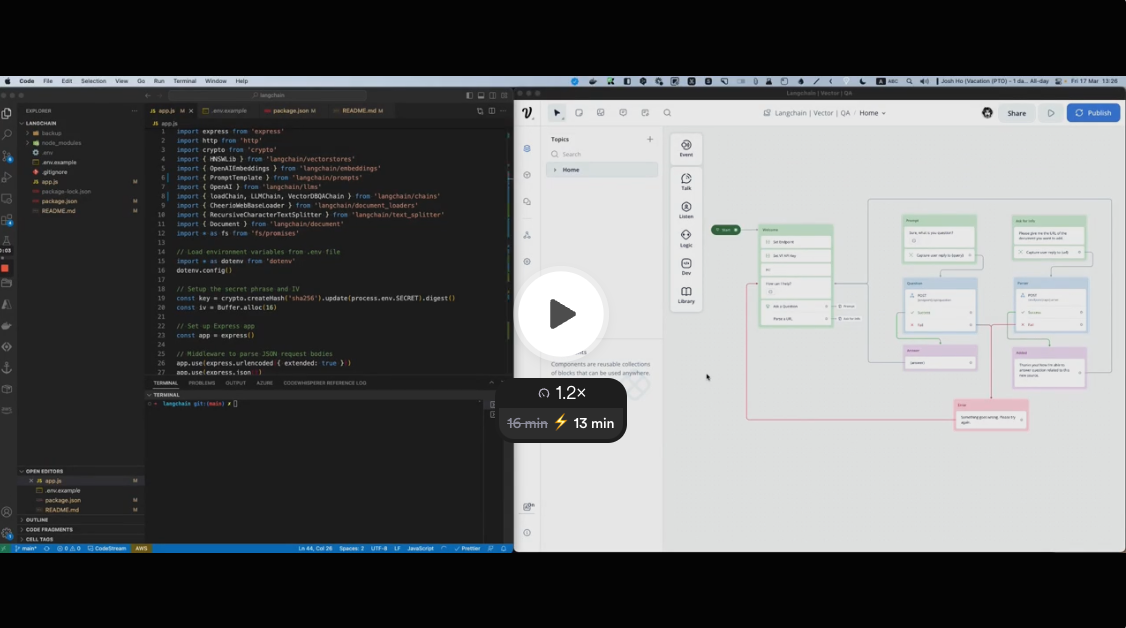

This code uses OpenAI GPT, LangChain, HNSWLib, and Cheerio to fetch web content from URLs, create embeddings (vectors), and save them in a local database. The resulting knowledge base can then be used with GPT to answer questions.
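As a rough illustration of the idea, and not the app's actual code, the sketch below builds a tiny in-memory knowledge base and retrieves the chunk closest to a question. It makes loud simplifications: a toy word-count vector stands in for OpenAI embeddings, and a linear scan stands in for HNSWLib. All function names (`embed`, `cosine`, `chunk`, `buildIndex`, `search`) are hypothetical.

```javascript
// Toy stand-in for an embedding model: counts word occurrences.
// ASSUMPTION: the real app uses OpenAI embeddings; this is for illustration only.
function embed(text) {
  const counts = new Map();
  for (const word of text.toLowerCase().match(/[a-z0-9]+/g) ?? []) {
    counts.set(word, (counts.get(word) ?? 0) + 1);
  }
  return counts;
}

// Cosine similarity between two sparse word-count vectors.
function cosine(a, b) {
  let dot = 0;
  for (const [word, n] of a) dot += n * (b.get(word) ?? 0);
  const norm = (v) => Math.sqrt([...v.values()].reduce((s, n) => s + n * n, 0));
  const denom = norm(a) * norm(b);
  return denom === 0 ? 0 : dot / denom;
}

// Split text into chunks of at most `size` words.
function chunk(text, size) {
  const words = text.split(/\s+/).filter(Boolean);
  const chunks = [];
  for (let i = 0; i < words.length; i += size) {
    chunks.push(words.slice(i, i + size).join(" "));
  }
  return chunks;
}

// In-memory "knowledge base": each entry keeps a chunk and its vector.
// ASSUMPTION: a plain array replaces the HNSWLib store used by the app.
function buildIndex(text, size = 20) {
  return chunk(text, size).map((c) => ({ text: c, vector: embed(c) }));
}

// Return the k chunks most similar to the question (linear scan).
function search(index, question, k = 1) {
  const q = embed(question);
  return index
    .map((entry) => ({ text: entry.text, score: cosine(q, entry.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k);
}
```

In the real pipeline, the retrieved chunks would be passed to GPT as context for the answer; here `search(index, question)` only returns them with a similarity score.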

If you are running this on Node.js 16, either:

- run your application with `NODE_OPTIONS='--experimental-fetch' node ...`, or

- install `node-fetch` and follow its setup instructions

If you are running this on Node.js 18 or 19, you do not need to do anything.
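If you are unsure which case applies, a quick check like the one below can tell you whether the runtime exposes a global `fetch`. This is a minimal sketch; the helper name `hasGlobalFetch` is hypothetical and not part of the app.

```javascript
// Returns true when the given scope exposes a fetch function.
// Defaults to the global scope of the current runtime.
function hasGlobalFetch(scope = globalThis) {
  return typeof scope.fetch === "function";
}

if (!hasGlobalFetch()) {
  console.warn(
    "Global fetch is not available: enable --experimental-fetch or install node-fetch."
  );
}
```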

Setup is simple. Run:

    npm i
    npm start

This app requires a `.env` file with `PORT`, `OPENAI_API_KEY`, and `SECRET` (used for API key encoding).

You can rename the `.env.example` file to `.env` and fill in the values.
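A missing value is easy to overlook, so a small startup check can help. The sketch below assumes the values from `.env` have already been loaded into `process.env` by whatever loader the app uses; the names `REQUIRED_VARS` and `missingEnvVars` are hypothetical.

```javascript
// The three variables this README lists as required.
const REQUIRED_VARS = ["PORT", "OPENAI_API_KEY", "SECRET"];

// Returns the names of required variables that are unset or blank.
function missingEnvVars(env = process.env) {
  return REQUIRED_VARS.filter((name) => !env[name] || env[name].trim() === "");
}

const missing = missingEnvVars();
if (missing.length > 0) {
  console.error(`Missing .env values: ${missing.join(", ")}`);
}
```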

There are 2 endpoints available.

The first endpoint accepts a POST request with the URL of the page to parse. You need to pass the Voiceflow Assistant API key in the body.

    {
      "url": "https://www.voiceflow.com/blog/prompt-chaining-conversational-ai",
      "apikey": "VF.DM.XXX"
    }

The second endpoint accepts a POST request with the question. You need to pass the Voiceflow Assistant API key in the body.

    {
      "question": "What is prompt chaining?",
      "apikey": "VF.DM.XXX"
    }

To allow access to the app externally using the port set in the `.env` file, you can use ngrok. Follow the steps below:

- Install ngrok: https://ngrok.com/download

- Run `ngrok http <port>` in your terminal (replace `<port>` with the port set in your `.env` file)

- Copy the ngrok URL generated by the command and use it in your Voiceflow Assistant API step.

This is handy for quickly testing the app from an API step within your Voiceflow Assistant.
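Outside Voiceflow, the same requests can be sent from a script. The sketch below is one way to do it, under stated assumptions: the endpoint path is passed in as a placeholder because this README does not name the paths, the response is assumed to be JSON, and the helper names `jsonPost` and `callApp` are hypothetical.

```javascript
// Build fetch() options for a JSON POST request.
function jsonPost(body) {
  return {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(body),
  };
}

// Send a JSON body to the app and return the parsed response.
// `baseUrl` is the ngrok URL or http://localhost:<port>.
// `path` is the endpoint path (placeholder: not specified in this README).
// ASSUMPTION: the app replies with JSON.
async function callApp(baseUrl, path, body) {
  const response = await fetch(new URL(path, baseUrl), jsonPost(body));
  if (!response.ok) {
    throw new Error(`Request failed with status ${response.status}`);
  }
  return response.json();
}
```

For example, `callApp(ngrokUrl, questionPath, { question: "What is prompt chaining?", apikey: "VF.DM.XXX" })` would send the question body shown earlier, where `ngrokUrl` and `questionPath` are values you supply.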