Can Wang¹, Menglei Chai², Mingming He³, Dongdong Chen⁴, Jing Liao¹

¹City University of Hong Kong, ²Creative Vision, Snap Inc., ³USC Institute for Creative Technologies, ⁴Microsoft Cloud AI

- Python 3.8

- Torch 1.7.1 or Torch 1.8.0

- PyTorch3D for rendering images

Note: The related checkpoints and 3DMM bases can be downloaded from here; alternatively, follow the steps below to prepare them yourself.

- Download the StyleGAN2 checkpoint from here and place it into the 'stylegan2-pytorch/checkpoint' directory.

- To try our method quickly, I recommend generating 4K latent (StyleSpace) and image training pairs:

cd stylegan2-pytorch

python generate_data.py --pics 4000 --ckpt checkpoint/stylegan2-ffhq-config-f.pt

Once finished, you will have an 'Images' directory and a 'latents.pkl' file.
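Before moving on, it can help to confirm that the generation step produced what the later steps expect. The following is a minimal sketch, not part of the repository: the function name `summarize_outputs` is hypothetical, and the internal structure of 'latents.pkl' is not documented here, so the code only reports its top-level type and length.

```python
import pickle
from pathlib import Path


def summarize_outputs(root="stylegan2-pytorch"):
    """Count generated images and report what latents.pkl contains.

    Hypothetical helper: it assumes only the two output names mentioned
    above ('Images' and 'latents.pkl') and nothing about their contents.
    """
    root = Path(root)
    image_dir = root / "Images"
    latent_file = root / "latents.pkl"

    # Count regular files only; the image file extension is not assumed.
    n_images = sum(1 for p in image_dir.iterdir() if p.is_file())

    # Unpickling may require torch to be importable if tensors were saved.
    with open(latent_file, "rb") as f:
        latents = pickle.load(f)

    # len() is only meaningful for sized containers (dict, list, array, ...).
    n_latents = len(latents) if hasattr(latents, "__len__") else None
    return {
        "n_images": n_images,
        "latents_type": type(latents).__name__,
        "n_latents": n_latents,
    }
```

Calling `summarize_outputs()` from the repository root after generation should report about 4000 images if `--pics 4000` was used; whether `n_latents` matches that count depends on how the latents are stored.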

- Download the checkpoint from here and place it into the 'Deep3DFaceReconstruction-pytorch/network' directory.

- Download the 3DMM bases from here and place these files into the 'Deep3DFaceReconstruction-pytorch/BFM' directory.

- Estimate 3DMM parameters and facial landmarks:

cd Deep3DFaceReconstruction-pytorch

python extract_gt.py ../stylegan2-pytorch/Images

Once finished, you will have the 'params.pkl' file. Next, estimate the landmarks using dlib and split the data into training and testing sets. Please download the dlib landmark predictor from here and place it into the 'sample_dataset' directory.

mv stylegan2-pytorch/Images sample_dataset/

mv stylegan2-pytorch/latents.pkl sample_dataset/

mv Deep3DFaceReconstruction-pytorch/params.pkl sample_dataset/

cd sample_dataset/

python extract_landmarks.py

python split_train_test.py
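As a rough illustration of what a reproducible hold-out split looks like, the sketch below shuffles sample names with a fixed seed and sets aside 200 of them for testing, which mirrors the 3800/200 split of the 4K samples. It is not the code of `split_train_test.py`: the function signature, the seed, and the file-name pattern are assumptions made for the example.

```python
import random


def split_train_test(names, n_test=200, seed=0):
    """Shuffle sample names reproducibly and hold out n_test of them."""
    names = sorted(names)  # fix the starting order before shuffling
    if not 0 <= n_test <= len(names):
        raise ValueError("n_test must be between 0 and the number of samples")
    rng = random.Random(seed)  # local RNG, leaves the global state untouched
    rng.shuffle(names)
    return names[n_test:], names[:n_test]


# Hypothetical file names; the real naming scheme in 'Images' may differ.
all_names = [f"{i:06d}.png" for i in range(4000)]
train, test = split_train_test(all_names)
print(len(train), len(test))  # 3800 200
```

Sorting before shuffling makes the result independent of the order in which the file system lists the images, so the same seed always yields the same split.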

Copy the BFM directory from Deep3DFaceReconstruction-pytorch:

cp -r Deep3DFaceReconstruction-pytorch/BFM/ ./bfm/

Then train the Attribute Prediction Network:

python train.py --name apnet_wpdc --model APModel --train_wpdc

Test the Attribute Prediction Network; the results (the rendered image and the mesh) are written to the 'results' directory:

python evaluate.py --name apnet_wpdc --model APModel

Some testing results of APNet (model pre-trained on only 4K images: 3800 for training and 200 for testing):

Some important parameters for training or testing:

| Parameter | Default | Description |
|---|---|---|
| --name | 'test' | name of the experiment |
| --model | 'APModel' | which model to train |
| --train_wpdc | False | whether to use the WPDC loss |
| --w_wpdc | 1.0 | weight of the WPDC loss |
| --data_dir | 'sample_dataset' | path to the dataset |
| --total_epoch | 200 | total number of training epochs |
| --save_interval | 20 | interval at which the model is saved |
| --batch_size | 128 | batch size for training |
| --load_epoch | -1 (the final saved model) | which checkpoint to load for testing |
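For readers who want to see how these options fit together, here is a minimal `argparse` sketch that reproduces the flags and defaults from the table. It is an illustration only and may differ from the option parsing in the repository; in particular, treating `--train_wpdc` as a `store_true` switch and the helper `saved_epochs` (which assumes the save interval is counted in epochs and that a save happens whenever the epoch number is a multiple of it) are assumptions.

```python
import argparse


def build_parser():
    """Build a parser mirroring the parameters and defaults listed in the table."""
    p = argparse.ArgumentParser(description="APNet options (illustrative sketch)")
    p.add_argument("--name", type=str, default="test", help="name of the experiment")
    p.add_argument("--model", type=str, default="APModel", help="which model to train")
    # Assumed to be a switch: absent means False, present means True.
    p.add_argument("--train_wpdc", action="store_true", help="use the WPDC loss")
    p.add_argument("--w_wpdc", type=float, default=1.0, help="weight of the WPDC loss")
    p.add_argument("--data_dir", type=str, default="sample_dataset", help="path to the dataset")
    p.add_argument("--total_epoch", type=int, default=200, help="total training epochs")
    p.add_argument("--save_interval", type=int, default=20, help="model save interval")
    p.add_argument("--batch_size", type=int, default=128, help="training batch size")
    p.add_argument("--load_epoch", type=int, default=-1,
                   help="checkpoint to load for testing (-1: final saved model)")
    return p


def saved_epochs(total_epoch, save_interval):
    """Hypothetical helper: epochs at which a checkpoint would be saved,
    assuming a save whenever epoch % save_interval == 0."""
    return list(range(save_interval, total_epoch + 1, save_interval))


# Same arguments as the training command shown earlier.
opts = build_parser().parse_args(
    ["--name", "apnet_wpdc", "--model", "APModel", "--train_wpdc"]
)
print(opts.train_wpdc, opts.batch_size)
print(saved_epochs(opts.total_epoch, opts.save_interval))
```

Under these assumptions the defaults would yield ten checkpoints, the last at epoch 200, which is the one `--load_epoch -1` would select.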

- Code for generating latent&image training pairs;

- Code for estimating 3DMM parameters and landmarks;

- Code and pre-trained models for the Attribute Prediction Network;

- Code and pre-trained models for the Latent Manipulation Network

- Code, Data and pre-trained models for Latent-Consistent Finetuning

- Remains ...

- StyleGAN2-pytorch: https://github.com/rosinality/stylegan2-pytorch

- Deep3DFaceReconstruction-pytorch: https://github.com/changhongjian/Deep3DFaceReconstruction-pytorch

If you find our work useful for your research, please consider citing the following paper :)

@article{wang2021cross,

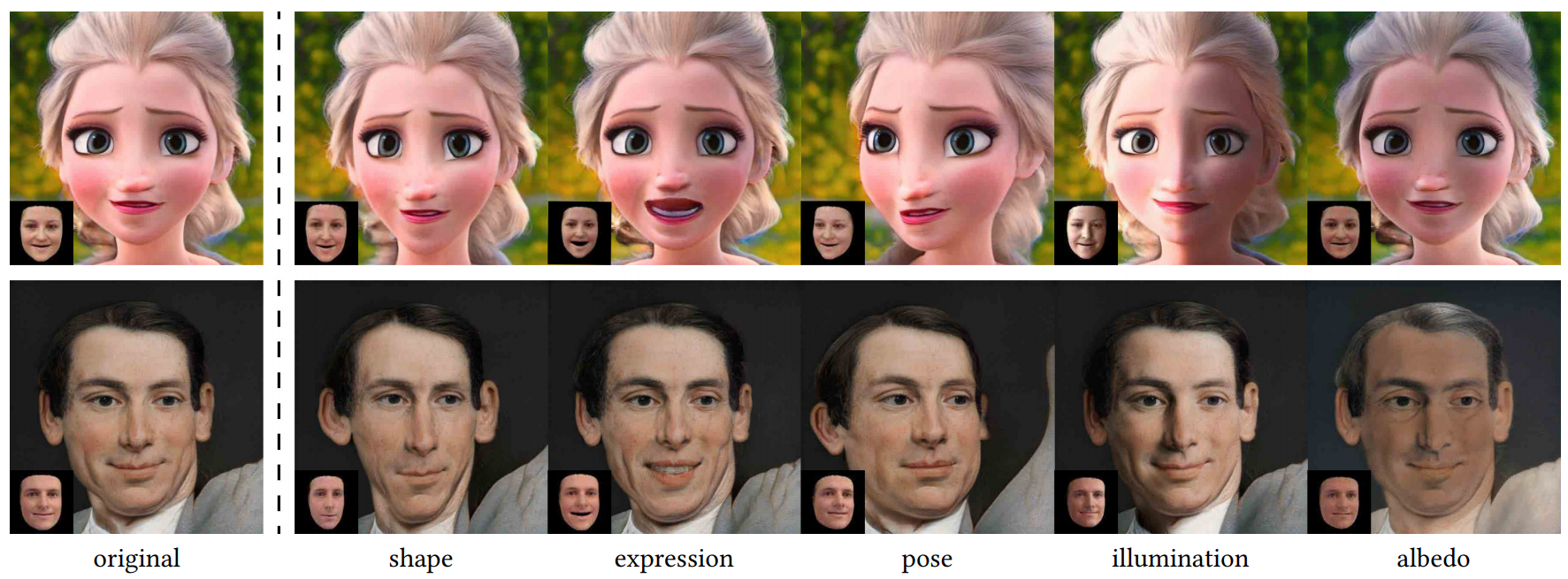

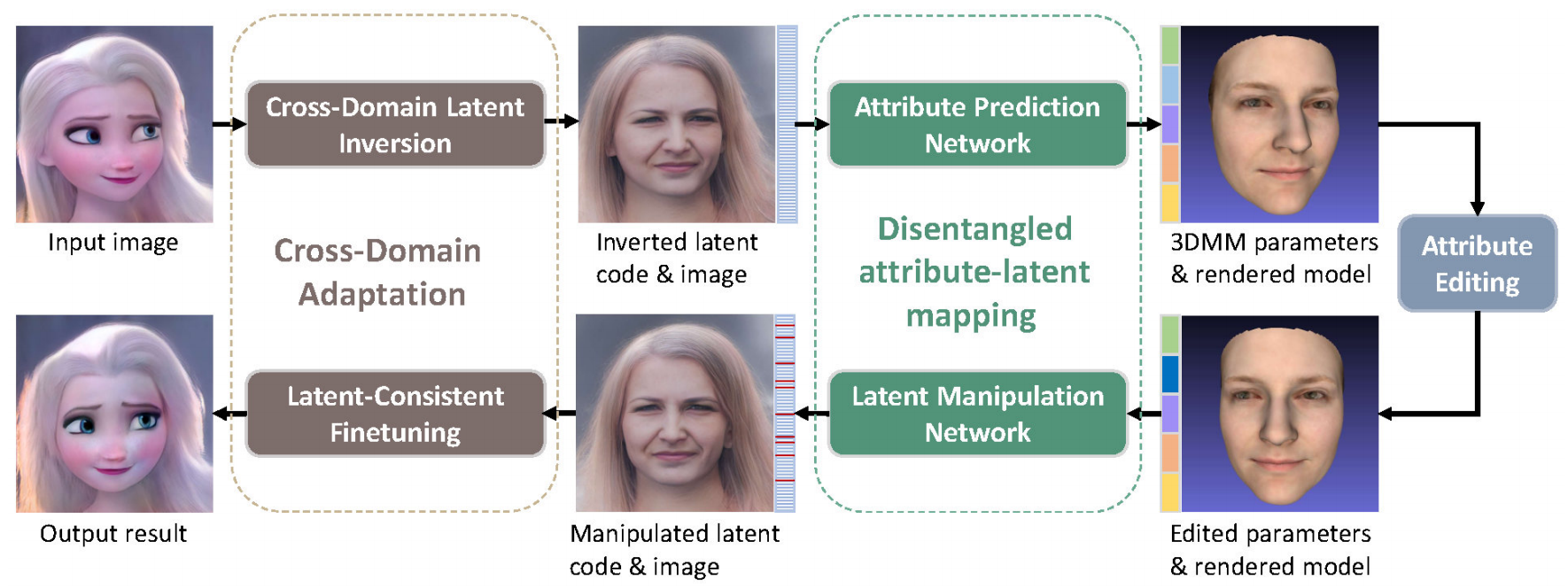

title={Cross-Domain and Disentangled Face Manipulation with 3D Guidance},

author={Wang, Can and Chai, Menglei and He, Mingming and Chen, Dongdong and Liao, Jing},

journal={arXiv preprint arXiv:2104.11228},

year={2021}

}