The goal of this project is to train an open-source 3D printed quadruped robot exploring

Reinforcement Learning and OpenAI Gym. The aim is to let the robot learns domestic and generic tasks in the simulations and then

successfully transfer the knowledge (Control Policies) on the real robot without any other manual tuning.

This project is mostly inspired by the incredible works done by Boston Dynamics.

This repository contains different OpenAI Gym Environments used to train Rex, the Rex URDF model,

the learning agent and some scripts to start the training session and visualise the learned Control Polices.

Create a Python 3.7 virtual environment, e.g. using Anaconda

conda create -n rex python=3.7 anaconda

conda activate rex

Install the public rex-gym package:

pip install rex_gym

Alternately, clone this repository and run from the root of the project:

pip install .

To start a pre-trained agent:

python -m rex_gym.playground.policy_player --env ENV-NAME-HERE

| Environment | Flag |

|---|---|

| Run | rex_galloping |

| Walk | rex_walk |

| Turn | rex_turn |

| Stand up | rex_standup |

To start a training simulation test (agents=1, render=True):

python -m rex_gym.playground.training_player --config rex_reactive --logdir YOUR_LOG_DIR_PATH

Where YOUR_LOG_DIR_PATH is the output path.

Set the Environment with the --config flag:

| Environment | Flag |

|---|---|

| Run | rex_galloping |

| Walk | rex_walk |

| Turn | rex_turn |

| Stand up | rex_standup |

To start a new batch training session:

python -m rex_gym.agents.scripts.train --config rex_reactive --logdir YOUR_LOG_DIR_PATH

Where YOUR_LOG_DIR_PATH is the output path.

Set the Environment with the --config flag:

| Environment | Flag |

|---|---|

| Run | rex_galloping |

| Walk | rex_walk |

| Turn | rex_turn |

| Stand up | rex_standup |

You may want to edit the PPO agent's default configuration, especially the number of parallel agents launched during the simulation.

Edit the num_agents variable in the agents/scripts/configs.py script:

def default():

"""Default configuration for PPO."""

# General

...

num_agents = 20

Install rex_gym from source. This configuration will launch 20 agents (threads) in parallel to train your model.

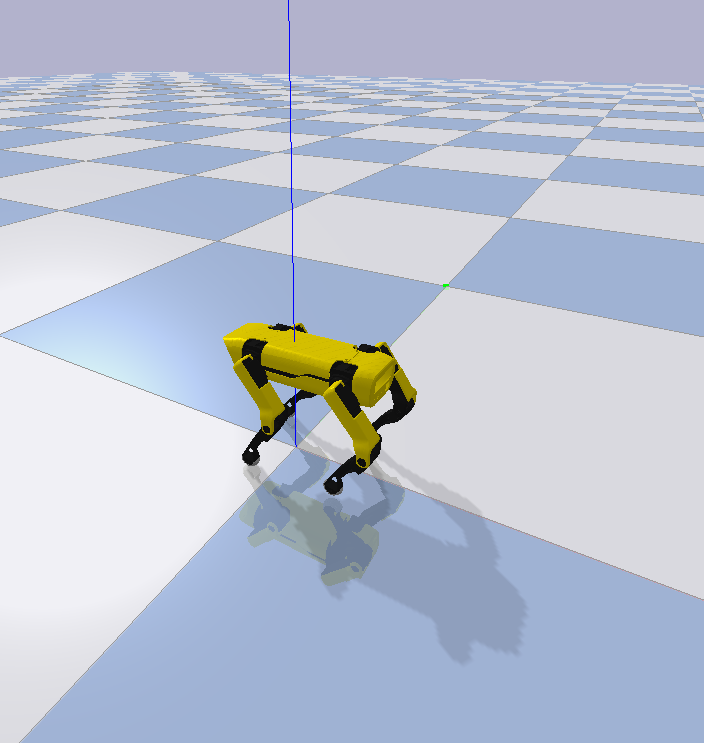

The robot used for this experiment is the Spotmicro made by Deok-yeon Kim.

I've printed the components using a Creality Ender3 3D printer, with PLA and TPU+.

The idea is to extend the robot adding components like a robotic arm on the top of the rack and a LiDAR sensor.

Rex is a 12 joints robot with 3 motors (Shoulder, Leg and Foot) for each leg.

The poses signals (see /model/rex.py) set the 12 motor angles and allow Rex to stand up.

The robot model is imported in pyBullet using an URDF file.

This is the list of tasks this experiment will cover:

- Basic controls

- Run/Walk straight on - forward/backward

- Turn left/right on the spot

- Stand up/Sit down

- Steer - Run/Walk

- Side swipe

- Fall recovery

- Reach a specific point in a map

- Grab an object

Goal: how to run straight on.

There is a good number of papers on quadrupeds locomotion, some of them with sample code. Probably, the most complete collection

of examples is the Minitaur folder in the Bullet3 repository.

For this task, the Minitaur Reactive Environment explained in the paper Sim-to-Real: Learning Agile Locomotion For Quadruped Robots

is a great example.

In this very first experiment, I let the system learn from scratch: giving the feedback component large output bounds [−0.6,0.6] radians.

The leg model (see galloping_env.py) forces legs and foots movements (positive or negative direction, depending on the leg) influencing the learning

score and time. In this first version, the leg model holds the Shoulder motors in the start position (0 degrees).

As in the Minitaur example, I'm using the Proximal Policy Optimization (PPO).

The emerged galloping gait shows the chassis tilled up and some unusual positions/movements (especially starting from the initial pose) during the locomotion. The leg model needs improvements.

To improve the gait, in this second simulation, I've worked on the leg model:

I set bounds for both Leg and Foot angles, keeping the Shoulder in the initial position.

The emerged gait now looks more clear.

Another test was made using a balanced feedback:

The Action Space dimension is equals to 4, the same angle is assigned to both the front legs and a different one to the rear ones. The very same was done for the foot angles.

The simulation score is massively improved (about 10x) as the learning time while the emerged gait is very similar to the bounded feedback model.

The Tensorflow score with this model, after ~500k attempts, is the same after ~4M attempts using any other models.

Goal: how to walk straight on.

Starting from Minitaur Alternating Leg

environment, I've used a sinusoidal signal as leg_model alternating the Rex legs during the locomotion. The feedback component has small

bounds [-0.1,0.1] as in the original script.

Goal: How to reach a certain orientation turning on the spot.

In this environment the leg_model applies a 'steer-on-the-spot' gait, allowing Rex to moving towards a specific orientation.

The reward function takes the chassis position/orientation and compares it with a fixed target position/orientation.

When this difference is less than 0.1 radiant, the leg_model is set to the stand up. In order to make the learning more robust,

the Rex starting orientation is randomly chosen (every 'Reset' step).

Goal: Reach the base standing position starting from the rest position

This environment introduces the rest_postion, ideally the position assumed when Rex is in stand-by.

The leg_model is the stand_low position, while the signal function applies a 'brake' forcing Rex to assume an halfway position

before completing the movement.

Sim-to-Real: Learning Agile Locomotion For Quadruped Robots and all the related papers. Google Brain, Google X, Google DeepMind - Minitaur Ghost Robotics.

Deok-yeon Kim creator of SpotMini.

The great work in rendering the robot platform done by the SpotMicroAI community.