pg-osc

pg-online-schema-change (pg-osc) is a tool for making schema changes (any ALTER statements) in Postgres tables with minimal locks, thus helping achieve zero downtime schema changes against production workloads.

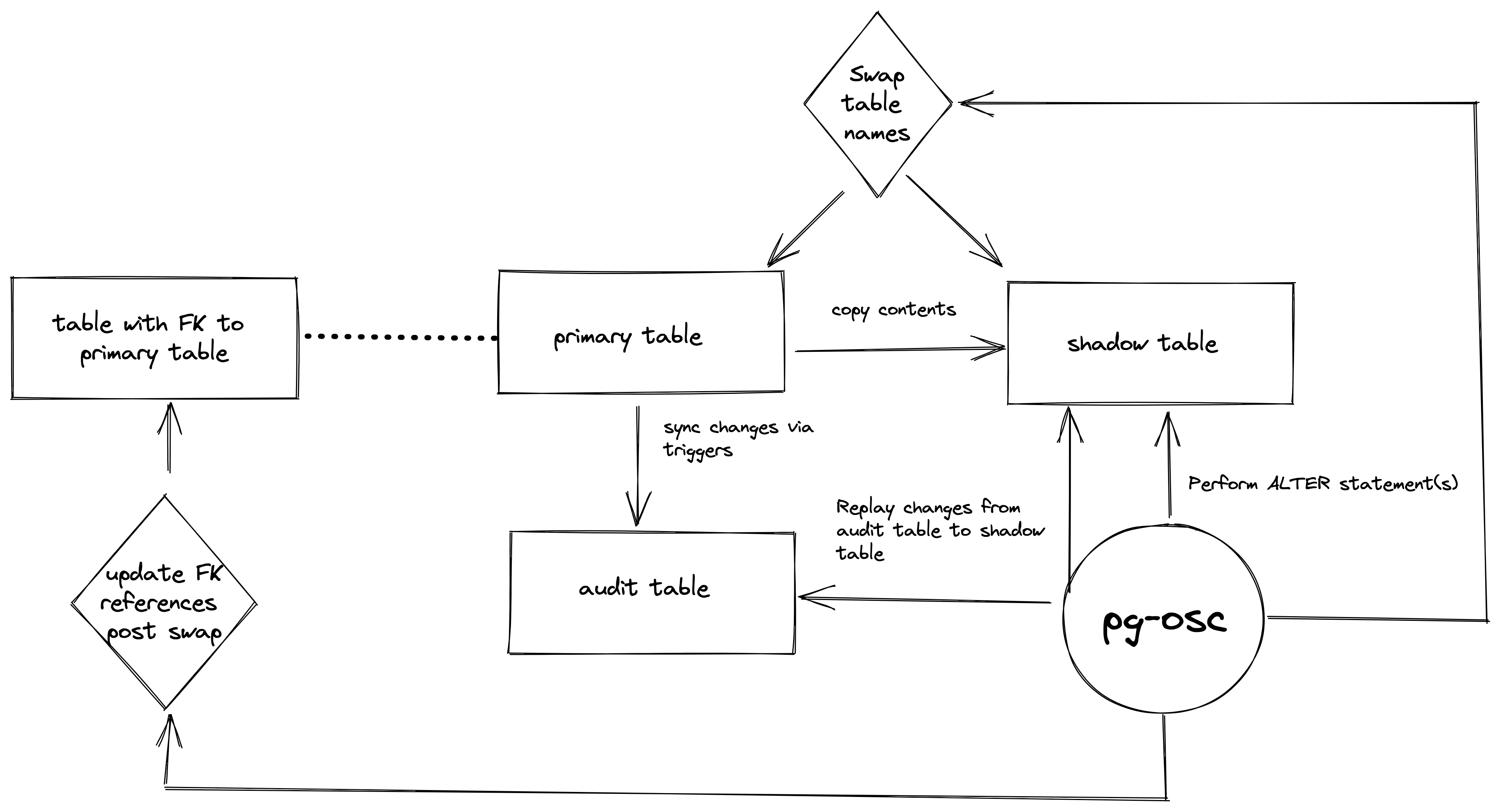

pg-osc uses the concept of shadow table to perform schema changes. At a high level, it creates a shadow table that looks structurally the same as the primary table, performs the schema change on the shadow table, copies contents from the primary table to the shadow table and swaps the table names in the end while preserving all changes to the primary table using triggers (via audit table).

pg-osc is inspired by the design and workings of tools like pg_repack and pt-online-schema-change (MySQL). Read more below on how does it work, prominent features, the caveats and examples

Table of Contents

- Installation

- Requirements

- Usage

- Prominent features

- Load test

- Examples

- Caveats

- How does it work

- Development

- Releasing

- Contributing

- License

- Code of Conduct

Installation

Add this line to your application's Gemfile:

gem "pg_online_schema_change"And then execute:

$ bundle install

Or install it yourself as:

$ gem install pg_online_schema_change

This will include all dependencies accordingly as well. Make sure the following requirements are satisfied.

Or via Docker:

docker pull shayonj/pg-osc:latest

https://hub.docker.com/r/shayonj/pg-osc

Requirements

- PostgreSQL 9.6 and later

- Ruby 2.6 and later

- Database user should have permissions for

TRIGGERand/or aSUPERUSER

Usage

pg-online-schema-change help perform

Usage:

pg-online-schema-change perform -a, --alter-statement=ALTER_STATEMENT -d, --dbname=DBNAME -h, --host=HOST -p, --port=N -s, --schema=SCHEMA -u, --username=USERNAME -w, --password=PASSWORD

Options:

-a, --alter-statement=ALTER_STATEMENT # The ALTER statement to perform the schema change

-s, --schema=SCHEMA # The schema in which the table is

# Default: public

-d, --dbname=DBNAME # Name of the database

-h, --host=HOST # Server host where the Database is located

-u, --username=USERNAME # Username for the Database

-p, --port=N # Port for the Database

# Default: 5432

-w, --password=PASSWORD # DEPRECATED: Password for the Database. Please pass PGPASSWORD environment variable instead.

-v, [--verbose], [--no-verbose] # Emit logs in debug mode

-f, [--drop], [--no-drop] # Drop the original table in the end after the swap

-k, [--kill-backends], [--no-kill-backends] # Kill other competing queries/backends when trying to acquire lock for the shadow table creation and swap. It will wait for --wait-time-for-lock duration before killing backends and try upto 3 times.

-w, [--wait-time-for-lock=N] # Time to wait before killing backends to acquire lock and/or retrying upto 3 times. It will kill backends if --kill-backends is true, otherwise try upto 3 times and exit if it cannot acquire a lock.

# Default: 10

-c, [--copy-statement=COPY_STATEMENT] # Takes a .sql file location where you can provide a custom query to be played (ex: backfills) when pgosc copies data from the primary to the shadow table. More examples in README.

-b, [--pull-batch-count=N] # Number of rows to be replayed on each iteration after copy. This can be tuned for faster catch up and swap. Best used with delta-count.

# Default: 1000

-e, [--delta-count=N] # Indicates how many rows should be remaining before a swap should be performed. This can be tuned for faster catch up and swap, especially on highly volume tables. Best used with pull-batch-count.

# Default: 20

Usage:

pg-online-schema-change --version, -v

print the version

Prominent features

pg-oscsupports when a column is being added, dropped or renamed with no data loss.pg-oscacquires minimal locks throughout the process (read more below on the caveats).- Copies over indexes and Foreign keys.

- Optionally drop or retain old tables in the end.

- Tune how slow or fast should replays be from the audit/log table (Replaying larger workloads).

- Backfill old/new columns as data is copied from primary table to shadow table, and then perform the swap. Example

- TBD: Ability to reverse the change with no data loss. tracking issue

Load test

More about the preliminary load test figures here

Examples

Renaming a column

export PGPASSWORD=""

pg-online-schema-change perform \

--alter-statement 'ALTER TABLE books RENAME COLUMN email TO new_email' \

--dbname "postgres" \

--host "localhost" \

--username "jamesbond" \

Multiple ALTER statements

export PGPASSWORD=""

pg-online-schema-change perform \

--alter-statement 'ALTER TABLE books ADD COLUMN "purchased" BOOLEAN DEFAULT FALSE; ALTER TABLE books RENAME COLUMN email TO new_email;' \

--dbname "postgres" \

--host "localhost" \

--username "jamesbond" \

--drop

Kill other backends after 5s

If the operation is being performed on a busy table, you can use pg-osc's kill-backend functionality to kill other backends that may be competing with the pg-osc operation to acquire a lock for a brief while. The ACCESS EXCLUSIVE lock acquired by pg-osc is only held for a brief while and released after. You can tune how long pg-osc should wait before killing other backends (or if at all pg-osc should kill backends in the first place).

export PGPASSWORD=""

pg-online-schema-change perform \

--alter-statement 'ALTER TABLE books ADD COLUMN "purchased" BOOLEAN DEFAULT FALSE;' \

--dbname "postgres" \

--host "localhost" \

--username "jamesbond" \

--wait-time-for-lock 5 \

--kill-backends \

--drop

Replaying larger workloads

If you have a table with high write volume, the default replay iteration may not suffice. That is - you may see that pg-osc is replaying 1000 rows (pull-batch-count) in one go from the audit table. pg-osc also waits until the remaining row count (delta-count) in audit table is 20 before making the swap. You can tune these values to be higher for faster catch up on these kind of workloads.

export PGPASSWORD=""

pg-online-schema-change perform \

--alter-statement 'ALTER TABLE books ADD COLUMN "purchased" BOOLEAN DEFAULT FALSE;' \

--dbname "postgres" \

--host "localhost" \

--username "jamesbond" \

--pull-batch-count 2000

--delta-count 500

--wait-time-for-lock 5 \

--kill-backends \

--drop

Backfill data

When inserting data into the shadow table, instead of just copying all columns and rows from the primary table, you can pass in a custom sql file to perform the copy and do any additional work. For instance - backfilling certain columns. By providing the copy-statement, pg-osc will instead play the query to perform the copy operation.

IMPORTANT NOTES:

- It is possible to violate a constraint accidentally or not copy data, so proceed with caution.

- You must use OUTER JOINs when joining in the custom SQL, or you will lose rows which do not match the joined table.

- The

ALTERstatement can change the table's structure, so proceed with caution. - Preserve

%{shadow_table}as that will be replaced with the destination of the shadow table. - Users are STRONGLY URGED to test and validate results before using in production!

-- file: /src/query.sql

INSERT INTO %{shadow_table}(foo, bar, baz, rental_id, tenant_id)

SELECT a.foo,a.bar,a.baz,a.rental_id,r.tenant_id AS tenant_id

FROM ONLY examples a

LEFT OUTER JOIN rentals r

ON a.rental_id = r.idpg-online-schema-change perform \

--alter-statement 'ALTER TABLE books ADD COLUMN "tenant_id" VARCHAR;' \

--dbname "postgres" \

--host "localhost" \

--username "jamesbond" \

--copy-statement "/src/query.sql" \

--drop

Running using Docker

docker run --network host -it --rm shayonj/pg-osc:latest \

pg-online-schema-change perform \

--alter-statement 'ALTER TABLE books ADD COLUMN "purchased" BOOLEAN DEFAULT FALSE; ALTER TABLE books RENAME COLUMN email TO new_email;' \

--dbname "postgres" \

--host "localhost" \

--username "jamesbond" \

--drop

Caveats

- Partitioned tables are not supported as of yet. Pull requests and ideas welcome.

- A primary key should exist on the table; without it,

pg-oscwill raise an exception- This is because - currently there is no other way to uniquely identify rows during replay.

pg-oscwill acquireACCESS EXCLUSIVElock on the parent table twice during the operation.- First, when setting up the triggers and the shadow table.

- Next, when performing the swap and updating FK references.

- Note: If

kill-backendsis passed, it will attempt to terminate any competing operations during both times.

- By design,

pg-oscdoesn't kill any other DDLs being performed. It's best to not run any DDLs against the parent table during the operation. - Due to the nature of duplicating a table, there needs to be enough space on the disk to support the operation.

- Index, constraints and sequence names will be altered and lose their original naming.

- Can be fixed in future releases. Feel free to open a feature req.

- Triggers are not carried over.

- Can be fixed in future releases. Feel free to open a feature req.

- Foreign keys are dropped & re-added to referencing tables with a

NOT VALID. A follow onVALIDATE CONSTRAINTis run.- Ensures that integrity is maintained and re-introducing FKs doesn't acquire additional locks, hence the

NOT VALID.

- Ensures that integrity is maintained and re-introducing FKs doesn't acquire additional locks, hence the

How does it work

- Primary table: A table against which a potential schema change is to be run

- Shadow table: A copy of an existing primary table

- Audit table: A table to store any updates/inserts/delete on a primary table

- Create an audit table to record changes made to the parent table.

- Acquire a brief

ACCESS EXCLUSIVElock to add a trigger on the parent table (for inserts, updates, deletes) to the audit table. - Create a new shadow table and run ALTER/migration on the shadow table.

- Copy all rows from the old table.

- Build indexes on the new table.

- Replay all changes accumulated in the audit table against the shadow table.

- Delete rows in the audit table as they are replayed.

- Once the delta (remaining rows) is ~20 rows, acquire an

ACCESS EXCLUSIVElock against the parent table within a transaction and:- swap table names (shadow table <> parent table).

- update references in other tables (FKs) by dropping and re-creating the FKs with a

NOT VALID.

- Runs

ANALYZEon the new table. - Validates all FKs that were added with

NOT VALID. - Drop parent (now old) table (OPTIONAL).

Development

- Install ruby 3.1.3

\curl -sSL https://get.rvm.io | bash

rvm install 3.1.3

rvm use 3.1.3

- Spin up postgres via Docker Compose -

docker compose up bundle exec rspecto run the tests.- You can also run

bin/consolefor an interactive prompt that will allow you to experiment.

To install this gem onto your local machine, run bundle exec rake install.

Local testing

docker compose up

pgbench --initialize -s 10 --foreign-keys --host localhost -U jamesbond -d postgres

pgbench -T 60000 -c 5 --host localhost -U jamesbond -d postgres

bundle exec bin/pg-online-schema-change perform -a 'ALTER TABLE pgbench_accounts ALTER COLUMN aid TYPE BIGINT' -d "postgres" -h "localhost" -u "jamesbond" -w "password"

Releasing

- Bump version in

version.rb - Commit

./scripts/release.sh 0.2.0- Update

CHANGELOG.md - Create a new release - https://github.com/shayonj/pg-osc/releases/new

Contributing

Bug reports and pull requests are welcome on GitHub at https://github.com/shayonj/pg-osc.

License

The gem is available as open source under the terms of the MIT License.

Code of Conduct

Everyone interacting in the PgOnlineSchemaChange project's codebases, issue trackers, chat rooms and mailing lists is expected to follow the code of conduct.