XGBoost for probabilistic prediction. Like NGBoost, but faster, and in the XGBoost scikit-learn API.

    $ pip install xgboost-distribution

Requires Python >=3.8, scikit-learn, and xgboost>=1.7.0.

XGBDistribution follows the XGBoost scikit-learn API, with an additional keyword argument specifying the distribution (see the documentation for a full list of available distributions):

    from sklearn.datasets import fetch_california_housing
    from sklearn.model_selection import train_test_split

    from xgboost_distribution import XGBDistribution

    # load_boston was removed in scikit-learn 1.2, so a different
    # regression dataset is used here
    data = fetch_california_housing()
    X, y = data.data, data.target
    X_train, X_test, y_train, y_test = train_test_split(X, y)

    model = XGBDistribution(
        distribution="normal",
        n_estimators=500,
        early_stopping_rounds=10,
    )
    model.fit(X_train, y_train, eval_set=[(X_test, y_test)])

After fitting, we can predict the parameters of the distribution:

    preds = model.predict(X_test)
    mean, std = preds.loc, preds.scale

Note that this returns a namedtuple of numpy arrays, one for each parameter of the distribution (we use the scipy.stats naming conventions for the parameters; see e.g. scipy.stats.norm for the normal distribution).
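As an illustration of what the predicted parameters can be used for, the following minimal sketch turns a mean and standard deviation into a central prediction interval. It uses only the Python standard library; the `Predictions` namedtuple and its values are made-up stand-ins for the output of `model.predict`, and `prediction_interval` is a hypothetical helper, not part of this package.

```python
from collections import namedtuple
from statistics import NormalDist

# Stand-in for the namedtuple returned by model.predict(X_test) with
# distribution="normal"; the values are invented for illustration.
Predictions = namedtuple("Predictions", ["loc", "scale"])
preds = Predictions(loc=[21.5, 30.2, 14.8], scale=[2.0, 3.5, 1.2])


def prediction_interval(loc, scale, coverage=0.95):
    """Central interval of a normal distribution with mean loc and std scale."""
    tail = (1.0 - coverage) / 2.0
    dist = NormalDist(mu=loc, sigma=scale)
    return dist.inv_cdf(tail), dist.inv_cdf(1.0 - tail)


# One interval per predicted (mean, std) pair
intervals = [prediction_interval(m, s) for m, s in zip(preds.loc, preds.scale)]
for m, (lower, upper) in zip(preds.loc, intervals):
    print(f"mean={m:.1f}, 95% interval=({lower:.2f}, {upper:.2f})")
```

With real numpy arrays, the same quantiles could presumably be computed in one vectorized call via `scipy.stats.norm.ppf`, given the shared naming conventions.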

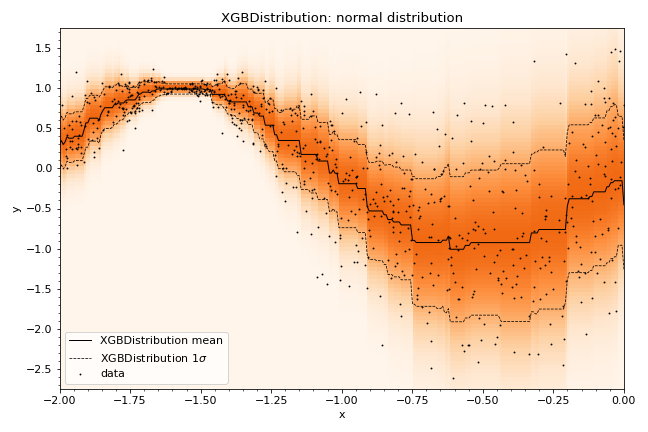

XGBDistribution follows the method shown in the NGBoost library, using natural gradients to estimate the parameters of the distribution.
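To make the idea of a natural gradient concrete, here is a minimal sketch for a single observation under a normal distribution. It assumes the distribution is parameterized by the mean and the log of the standard deviation (the parameterization NGBoost uses for its normal distribution); the function names are hypothetical and this is not the package's actual code. For this parameterization the Fisher information is diagonal, diag(1/σ², 2), so the natural gradient is the ordinary gradient rescaled per parameter.

```python
import math


def normal_nll_gradient(y, loc, log_scale):
    """Ordinary gradient of the normal negative log-likelihood
    with respect to (loc, log_scale)."""
    var = math.exp(2.0 * log_scale)
    return (loc - y) / var, 1.0 - (y - loc) ** 2 / var


def normal_natural_gradient(y, loc, log_scale):
    """Ordinary gradient multiplied by the inverse Fisher information,
    which for (loc, log_scale) is diag(var, 1/2)."""
    var = math.exp(2.0 * log_scale)
    grad_loc, grad_log_scale = normal_nll_gradient(y, loc, log_scale)
    return var * grad_loc, grad_log_scale / 2.0


# With a large scale the ordinary gradient for loc shrinks by 1/var,
# while the natural gradient for loc stays equal to (loc - y).
print(normal_nll_gradient(2.0, 0.0, math.log(10.0)))
print(normal_natural_gradient(2.0, 0.0, math.log(10.0)))
```

The rescaling removes the dependence of the mean's gradient on the current variance, which is one way to see why natural gradients can make fitting the parameters jointly more stable.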

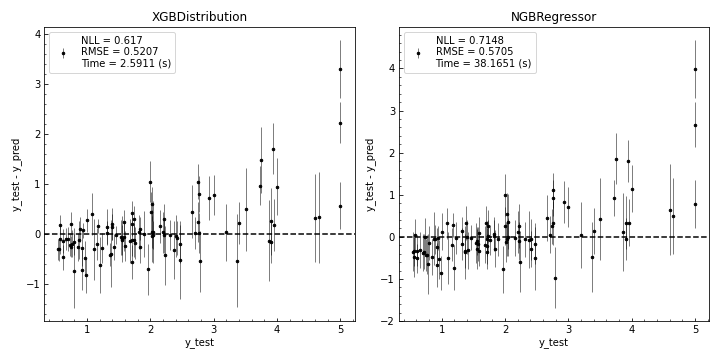

Below, we show a performance comparison of XGBDistribution with the NGBoost NGBRegressor, using the Boston Housing dataset, estimating normal distributions. While the performance of the two models is essentially identical (measured by the negative log-likelihood of a normal distribution and the RMSE), XGBDistribution is 30x faster (timed on both fit and predict steps).

Please see the experiments page in the documentation for detailed results across various datasets.
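For reference, the two metrics named in the comparison can be sketched as follows. This is a plain-Python illustration under the assumption that each test point has a predicted mean (`loc`) and standard deviation (`scale`); the helper names are hypothetical and this is not the benchmark code behind the reported results.

```python
import math


def normal_nll(y_true, loc, scale):
    """Mean negative log-likelihood of y_true under per-sample normals."""
    total = 0.0
    for y, m, s in zip(y_true, loc, scale):
        total += 0.5 * math.log(2.0 * math.pi * s**2) + (y - m) ** 2 / (2.0 * s**2)
    return total / len(y_true)


def rmse(y_true, loc):
    """Root mean squared error of the predicted means."""
    squared_errors = [(y - m) ** 2 for y, m in zip(y_true, loc)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Invented values, for illustration only
y_true = [22.0, 28.5, 15.1]
loc = [21.5, 30.2, 14.8]
scale = [2.0, 3.5, 1.2]
print(f"NLL:  {normal_nll(y_true, loc, scale):.3f}")
print(f"RMSE: {rmse(y_true, loc):.3f}")
```

The RMSE only evaluates the predicted means, whereas the negative log-likelihood also penalizes predicted standard deviations that are too small or too large.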

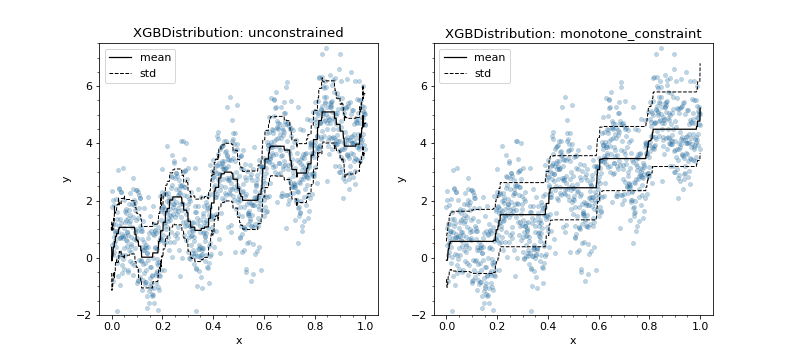

XGBDistribution offers the full set of XGBoost features available in the XGBoost scikit-learn API, allowing, for example, probabilistic regression with monotonic constraints.
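A minimal sketch of how such a constraint might be specified is given below. It assumes that XGBDistribution forwards XGBoost's `monotone_constraints` parameter unchanged to the underlying booster, and that the data has two features; `build_model` and `is_monotone` are hypothetical helpers introduced here for illustration.

```python
# Constructor arguments for a model constrained to increase with feature 0
# and decrease with feature 1 (a hypothetical two-feature dataset).
params = {
    "distribution": "normal",
    "n_estimators": 100,
    "monotone_constraints": "(1,-1)",  # XGBoost string form, one entry per feature
}


def build_model():
    """Create the constrained model; requires xgboost-distribution to be installed."""
    from xgboost_distribution import XGBDistribution

    return XGBDistribution(**params)


def is_monotone(values, increasing=True):
    """Check that a sequence never decreases (or, if increasing=False, never increases)."""
    pairs = list(zip(values, values[1:]))
    if increasing:
        return all(b >= a for a, b in pairs)
    return all(b <= a for a, b in pairs)
```

After fitting, `is_monotone` can be applied to the predicted `loc` values along a grid that varies a single feature, to check whether the constraint behaves as intended.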

This package would not exist without the excellent work from:

- NGBoost - Which demonstrated how gradient boosting with natural gradients can be used to estimate parameters of distributions. Much of the gradient calculation code was adapted from there.

- XGBoost - Which provides the gradient boosting algorithms used here; in particular, the sklearn APIs were taken as a blueprint.

This project has been set up using PyScaffold 4.0.1. For details and usage information on PyScaffold see https://pyscaffold.org/.