This repository contains a reimplementation of the Deep Recurrent Attentive Writer (DRAW) network architecture introduced by K. Gregor, I. Danihelka, A. Graves and D. Wierstra. The original paper can be found at

http://arxiv.org/pdf/1502.04623

- Blocks: follow the install instructions. This will install all the other dependencies for you (Theano, Fuel, etc.).

- Theano

- Fuel

- picklable_itertools

- Bokeh 0.8.1+

- ipdb

You need to set the location of your data directory:

echo "data_path: /home/user/data" >> ~/.fuelrc
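Because `>>` appends, running the command above more than once leaves several `data_path` lines in `~/.fuelrc`. The following is a minimal sketch for checking what the file currently contains. The helper name `find_data_paths` is hypothetical and not part of Fuel, and the sketch only does a naive line scan rather than full YAML parsing.

```python
import os


def find_data_paths(fuelrc_path="~/.fuelrc"):
    """Return every data_path value found in a .fuelrc-style file, in order.

    Hypothetical helper: a plain line scan, not a YAML parser.
    """
    path = os.path.expanduser(fuelrc_path)
    if not os.path.exists(path):
        return []
    values = []
    with open(path) as handle:
        for line in handle:
            if line.lstrip().startswith("#"):
                continue  # skip comment lines
            key, sep, rest = line.partition(":")
            if sep and key.strip() == "data_path":
                # drop surrounding whitespace and optional quotes
                values.append(rest.strip().strip("\"'"))
    return values
```

Calling `find_data_paths()` with no argument inspects `~/.fuelrc`. More than one entry in the result suggests the file should be cleaned up by hand.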

You need to download binarized MNIST data:

export PYLEARN2_DATA_PATH=/home/user/data

wget https://raw.githubusercontent.com/lisa-lab/pylearn2/master/pylearn2/scripts/datasets/download_binarized_mnist.py

python download_binarized_mnist.py
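If the downloaded script fails with a syntax error, one likely cause is that a github.com `blob` URL returns the HTML viewer page rather than the file itself. The sketch below rewrites such a URL into its `raw.githubusercontent.com` form. The function name is hypothetical, and it assumes the branch name contains no slashes.

```python
def github_blob_to_raw(url):
    """Convert a github.com 'blob' page URL to the matching raw file URL.

    Assumes the layout owner/repo/blob/branch/path and a branch name
    without slashes.
    """
    prefix = "https://github.com/"
    if not url.startswith(prefix):
        raise ValueError("not a github.com URL: %r" % url)
    parts = url[len(prefix):].split("/")
    if len(parts) < 5 or parts[2] != "blob":
        raise ValueError("not a blob URL: %r" % url)
    owner, repo = parts[0], parts[1]
    branch_and_path = parts[3:]
    return "https://raw.githubusercontent.com/" + "/".join(
        [owner, repo] + branch_and_path
    )
```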

The datasets/README.md file has instructions for additional data-sets.

Before training you need to start the bokeh-server

bokeh-server

or

bokeh-server --ip 0.0.0.0

To train a model with a 2x2 read and a 5x5 write attention window run

./train-draw --attention=2,5 --niter=64 --lr=3e-4 --epochs=100
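To illustrate how a value such as `--attention=2,5` maps to a read and a write window size, here is a rough sketch of an argparse setup that accepts the same flags. It is not the actual `train-draw` source: the function names are hypothetical and no defaults are assumed.

```python
import argparse


def parse_attention(value):
    """Parse 'READ,WRITE' (e.g. '2,5') into a (read_n, write_n) tuple."""
    parts = value.split(",")
    if len(parts) != 2:
        raise argparse.ArgumentTypeError("expected READ,WRITE, got %r" % value)
    try:
        read_n, write_n = (int(p) for p in parts)
    except ValueError:
        raise argparse.ArgumentTypeError("window sizes must be integers")
    if read_n < 1 or write_n < 1:
        raise argparse.ArgumentTypeError("window sizes must be positive")
    return read_n, write_n


def build_parser():
    # Hypothetical mirror of the flags used in the command above.
    parser = argparse.ArgumentParser(description="sketch of train-draw flags")
    parser.add_argument("--attention", type=parse_attention, default=None)
    parser.add_argument("--niter", type=int, default=None)
    parser.add_argument("--lr", type=float, default=None)
    parser.add_argument("--epochs", type=int, default=None)
    return parser
```

With the arguments from the command above, `build_parser().parse_args([...]).attention` would be the tuple `(2, 5)`, i.e. a 2x2 read window and a 5x5 write window.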

On an Amazon g2xlarge instance, Theano's compilation takes more than 40 minutes before training starts. Once training starts, you can track its progress through the live plots.

It will take about 2 days to train the model. After each epoch it will save 3 pkl files:

- a pickle of the entire main loop,

- a pickle of the model, and

- a pickle of the log.
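The saved files can be opened with Python's standard `pickle` module. The following is a minimal sketch: the helper name is hypothetical, and it assumes the classes stored in the file (presumably from Blocks and this repository) are importable in the current environment. Only unpickle files you trust, since unpickling can execute arbitrary code.

```python
import pickle


def load_pickle(path):
    """Load and return the object stored in a pickle file.

    Hypothetical helper; the classes referenced by the pickle must be
    importable, and the file must come from a trusted source.
    """
    with open(path, "rb") as handle:
        return pickle.load(handle)
```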

With

python sample.py [pickle-of-model]

# this requires ImageMagick to be installed

convert -delay 5 -loop 0 samples-*.png animation.gif

you can create samples similar to

Run

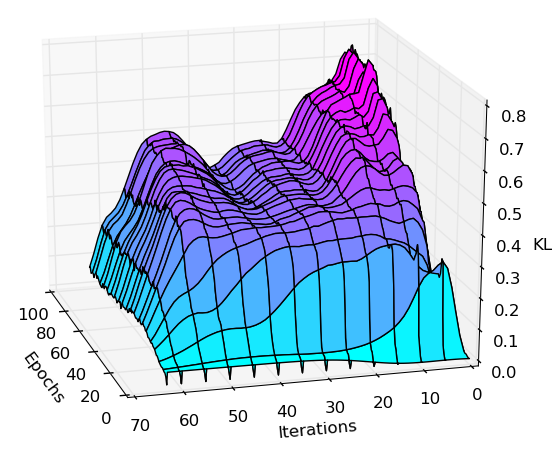

pyhthon plot-kl.py [pickle-of-log]

to create a visualization of the KL divergence plotted over inference iterations and epochs.
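For reference, the DRAW paper uses a standard Gaussian prior over the latents, so the KL term at each iteration has a closed form. The sketch below computes it in plain Python. Whether `plot-kl.py` reads exactly this quantity from the log is an assumption, and the function names are illustrative.

```python
import math


def gaussian_kl_to_standard_normal(mu, sigma):
    """KL( N(mu, diag(sigma^2)) || N(0, I) ), summed over latent dimensions."""
    total = 0.0
    for m, s in zip(mu, sigma):
        total += 0.5 * (m * m + s * s - math.log(s * s) - 1.0)
    return total


def kl_per_iteration(mus, sigmas):
    """Return one KL value per iteration, given per-iteration mu and sigma."""
    return [
        gaussian_kl_to_standard_normal(mu, sigma)
        for mu, sigma in zip(mus, sigmas)
    ]
```

A posterior equal to the prior (`mu = 0`, `sigma = 1`) gives a KL of zero, so larger values at a given iteration indicate that more information is passed through the latents at that step.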

Run

./attention.py

to test the attention windowing code. It will open three windows: one displaying the original input image, one displaying the extracted, downsampled content (testing the read operation), and one showing the upsampled content (matching the input size) after the write operation.
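The read and write operations described above can be sketched with the Gaussian filterbanks from the DRAW paper. This is a simplified plain-Python illustration, not the code in `attention.py`: it omits the intensity scaling, assumes 0-based pixel indices, and all function names are illustrative.

```python
import math


def filterbank(n, size, center, delta, sigma):
    """Return an n x size matrix of row-normalised 1-D Gaussian filters."""
    rows = []
    for i in range(1, n + 1):
        # Filter centres are spaced `delta` apart around `center`.
        mu = center + (i - n / 2.0 - 0.5) * delta
        row = [math.exp(-((a - mu) ** 2) / (2.0 * sigma ** 2))
               for a in range(size)]
        norm = sum(row) or 1.0  # guard against an all-zero row
        rows.append([v / norm for v in row])
    return rows


def transpose(m):
    return [list(col) for col in zip(*m)]


def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def read(image, f_y, f_x):
    """Extract an N x N glimpse: F_Y . image . F_X^T (downsampling)."""
    return matmul(matmul(f_y, image), transpose(f_x))


def write(patch, f_y, f_x):
    """Map an N x N patch back to image size: F_Y^T . patch . F_X."""
    return matmul(matmul(transpose(f_y), patch), f_x)
```

For a 6x6 image and `n = 2`, `read` yields a 2x2 glimpse and `write` maps a 2x2 patch back to a 6x6 canvas, matching the downsample and upsample steps the test script displays.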

Work in progress