This project is a PyTorch version of NCC (Neural Causation Coefficient), from the paper "Discovering Causal Signals in Images".

!!THIS IS AN UNFINISHED PROJECT!!

- Use NCC-datasetGen.py to produce the trainX and trainY data (in ./data) used to train the NCC causal model.

- Use NCC-NN-training-torch.py to train the NCC causal model.

- Use NCCTest.py to test the NCC causal model; the test data is ./data/tubehengenDataFormat.json.

- In ResNetNCC.py, ResNet50 is fine-tuned on the VOC2012 classification task, with its fc layer replaced by 512-512-20 dense layers.

- After training NCC-ResNet50, use it in GenResNetNCCVector.py to generate the feature-class vectors.

- Use NCCtest.py to process the feature-class vectors produced by NCC-ResNet50 and get the causal/anticausal scores.

- Use codeForIntervention.py to get the result.

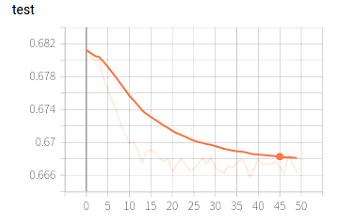

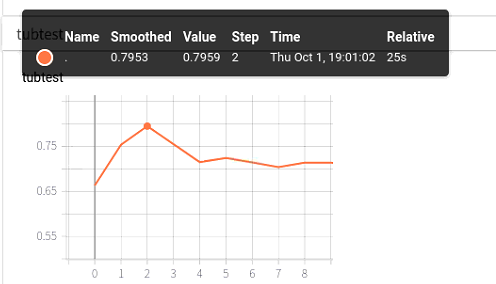

My NCC model reaches 74% accuracy on the Tuebingen dataset, compared with 79% for the official TensorFlow version.

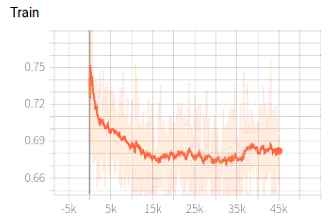

I implemented it this way: the NCC has 4 layers; the first 2 are the embedding layers and the other 2 are the classifier layers.

Every layer consists of: Linear => Normalization => ReLU => Dropout

The whole NCC model is: input => embedding layers => reduce_mean => classifier layers => sigmoid => output, where the input has shape [B, data_size, 2] and the output has shape [B, 1].
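The description above can be sketched roughly as follows. This is a minimal illustration, not the code in NCC-NN-training-torch.py: the class name `NCCSketch`, the hidden width of 100, the dropout rate of 0.25, the choice of `BatchNorm1d` for "Normalization", and the final `Linear` that maps to a single logit before the sigmoid are all assumptions.

```python
import torch
from torch import nn


def block(in_dim: int, out_dim: int, p_drop: float) -> nn.Sequential:
    # Linear => Normalization => ReLU => Dropout.
    # BatchNorm1d is an assumption; the README only says "Normalization".
    return nn.Sequential(
        nn.Linear(in_dim, out_dim),
        nn.BatchNorm1d(out_dim),
        nn.ReLU(),
        nn.Dropout(p_drop),
    )


class NCCSketch(nn.Module):
    """Hypothetical sketch: 2 embedding layers, mean over points, 2 classifier layers."""

    def __init__(self, hidden: int = 100, p_drop: float = 0.25):
        super().__init__()
        self.embed = nn.Sequential(block(2, hidden, p_drop), block(hidden, hidden, p_drop))
        self.classify = nn.Sequential(block(hidden, hidden, p_drop), block(hidden, hidden, p_drop))
        # Assumed projection to one logit so the output is [B, 1].
        self.out = nn.Linear(hidden, 1)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        b, n, d = x.shape                      # [B, data_size, 2]
        h = self.embed(x.reshape(b * n, d))    # embed every (x, y) point: [B*data_size, hidden]
        h = h.reshape(b, n, -1).mean(dim=1)    # reduce_mean over points: [B, hidden]
        h = self.classify(h)                   # [B, hidden]
        return torch.sigmoid(self.out(h))      # [B, 1], values in (0, 1)


model = NCCSketch().eval()                     # eval mode: dropout off, BatchNorm uses running stats
x = torch.randn(4, 50, 2)                      # 4 samples, each a set of 50 (x, y) points
scores = model(x)
```

Flattening to [B*data_size, 2] before the embedding layers lets `BatchNorm1d` treat each point as one row; the reshape and mean then collapse each sample's points into a single vector.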

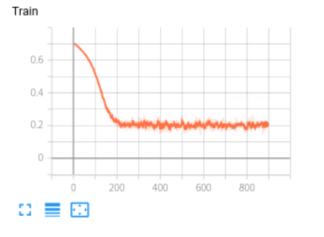

My NCC-ResNet50 reaches 93% accuracy on the VOC classification task, compared with 97% for the official TensorFlow version.

I implemented it this way:

imgs => ResNet50 (without the last fc layers) => features => 512-512-20

This is a multi-label learning task; the ResNet50 weights are frozen and only the 3-layer network is trained.
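A minimal sketch of this setup, under stated assumptions: the names `MultiLabelHead` and `freeze` are hypothetical, the ReLU activations between the dense layers are assumed, and `BCEWithLogitsLoss` with Adam is one common choice for multi-label training rather than necessarily what ResNetNCC.py uses. To keep the example self-contained, a tiny stand-in module plays the role of the backbone; it only mimics ResNet50's 2048-dimensional feature output.

```python
import torch
from torch import nn


class MultiLabelHead(nn.Module):
    """Hypothetical 512-512-20 dense head applied to backbone features."""

    def __init__(self, in_features: int = 2048, hidden: int = 512, num_classes: int = 20):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(in_features, hidden), nn.ReLU(),   # ReLU is an assumption
            nn.Linear(hidden, hidden), nn.ReLU(),
            nn.Linear(hidden, num_classes),              # raw logits, one per VOC class
        )

    def forward(self, feats: torch.Tensor) -> torch.Tensor:
        return self.net(feats)


def freeze(backbone: nn.Module) -> nn.Module:
    # Disable gradients so only the head is updated during training.
    for p in backbone.parameters():
        p.requires_grad_(False)
    return backbone.eval()


# Stand-in backbone for illustration only; a real run would use ResNet50
# with its fc layer removed, which also yields 2048-dimensional features.
backbone = freeze(nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 2048)))
head = MultiLabelHead()

optimizer = torch.optim.Adam(head.parameters(), lr=1e-3)  # optimizes head parameters only
criterion = nn.BCEWithLogitsLoss()                        # independent sigmoid per class

imgs = torch.randn(4, 3, 8, 8)                            # dummy image batch
targets = torch.randint(0, 2, (4, 20)).float()            # multi-hot labels

with torch.no_grad():
    feats = backbone(imgs)                                # [4, 2048], no graph kept
logits = head(feats)                                      # [4, 20]
loss = criterion(logits, targets)

optimizer.zero_grad()
loss.backward()
optimizer.step()
```

With torchvision, one way to obtain the real feature extractor would be to load `resnet50` and replace its `fc` attribute with `nn.Identity()` before passing it to `freeze`.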

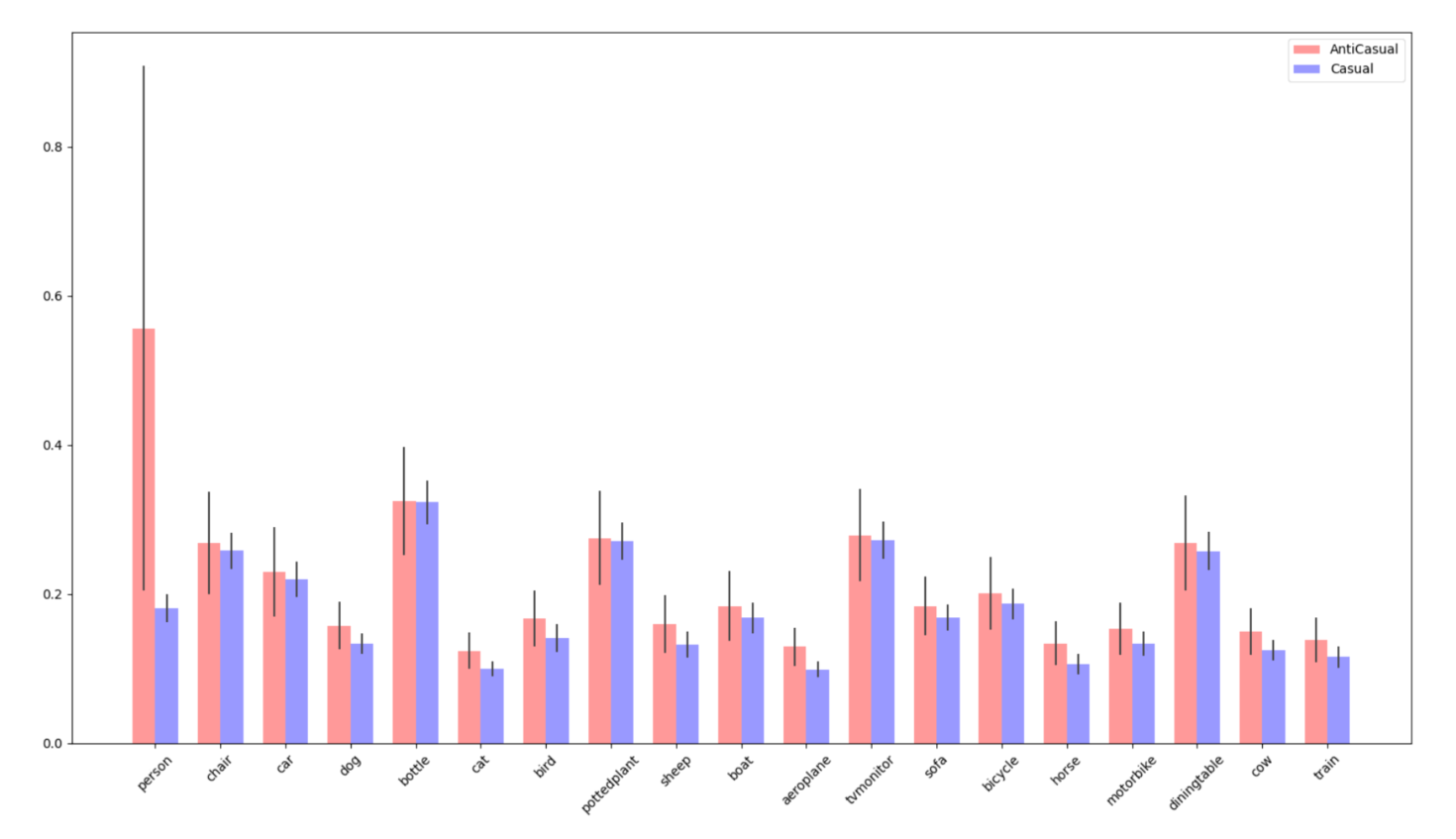

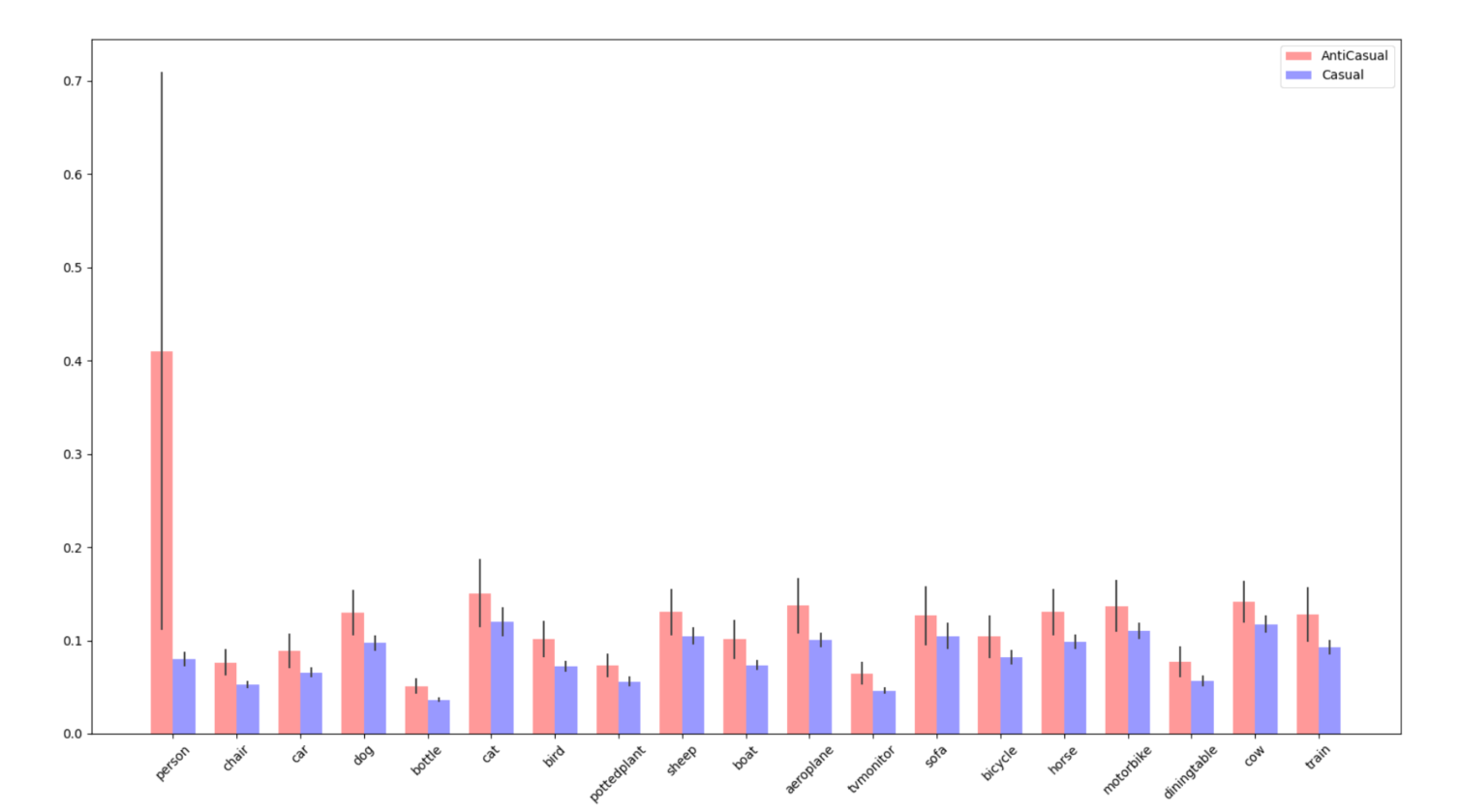

But when I connected the two models, I did not get the paper's results for the context features: