SignNet: Recognize Alphabets in the American Sign Language in Real Time

Zeqiang Lai, Zhiyuan Liang, Kexiang Huang

Beijing Institute of Technology

Note that this is a simple course project rather than a serious research project.

- Clone the repository

      git clone https://github.com/Zeqiang-Lai/SignNet.git

- Install the requirements; see the requirements listed below for instructions.

- Download the pretrained model

  - BaiduNetDisk, Code: 40hi

  - Google Drive

- Unzip the pretrained model and put it into the `signnet/pretrained` directory

- Run the live demo

      python live_demo.py

Requirements:

- OpenCV

- QT

- Python3

Install the Python requirement using the following command.

cd signnet

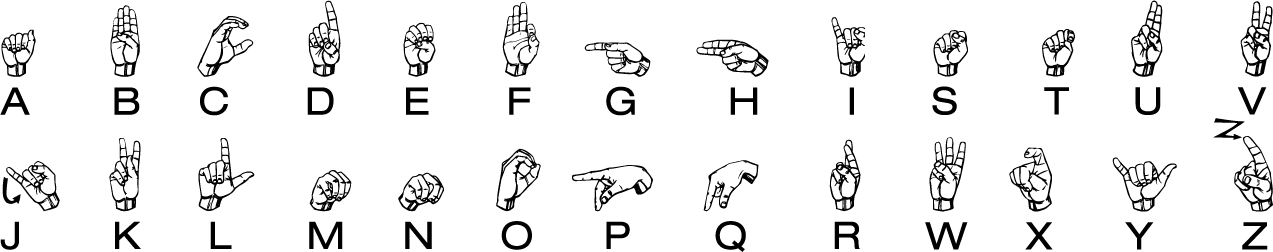

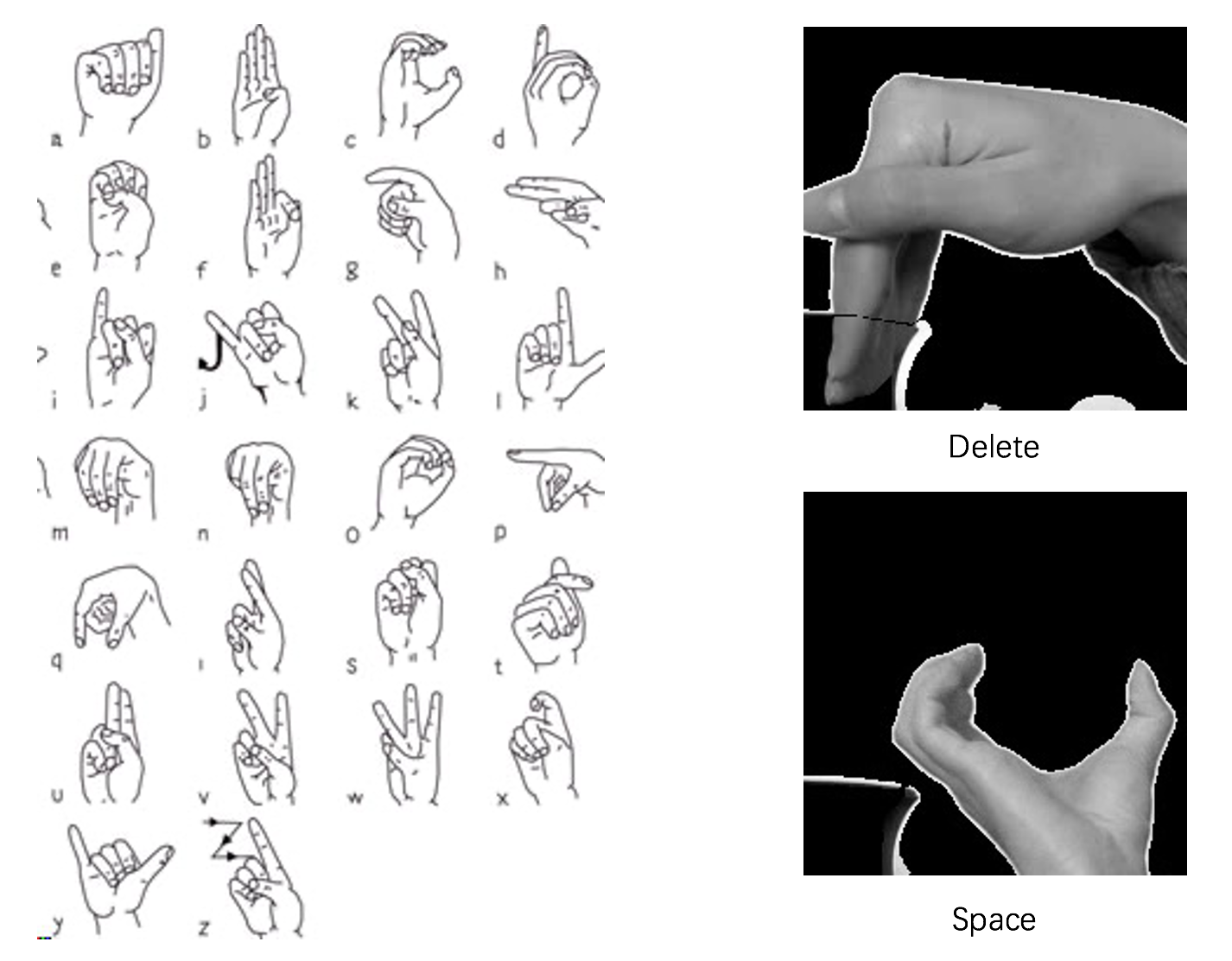

conda env create -f env.yamlThe gestures for the sign alphabets we used are a little differnt from what it is shown in the header image. Our version can be shown in the following pictures.

Our model can recognize the 26 letters plus space and delete (control operators).
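As a rough illustration of how the 26 letters and the two control operators could drive a text window, here is a minimal sketch. The label names (`"space"`, `"delete"`, uppercase letters) and the functions `apply_prediction` and `decode` are hypothetical and are not taken from the SignNet code; only the set of recognized signs comes from the description above.

```python
import string

# Assumed label names: the README only states that the model recognizes
# 26 letters plus space and delete. The exact strings are hypothetical.
LETTERS = list(string.ascii_uppercase)
CONTROL = ["space", "delete"]
LABELS = LETTERS + CONTROL


def apply_prediction(text: str, label: str) -> str:
    """Return the text after applying one recognized sign."""
    if label == "space":
        return text + " "
    if label == "delete":
        return text[:-1]  # leaves an empty string unchanged
    if label in LETTERS:
        return text + label
    raise ValueError(f"unknown label: {label!r}")


def decode(labels):
    """Apply a sequence of recognized signs to an initially empty text."""
    text = ""
    for label in labels:
        text = apply_prediction(text, label)
    return text


print(decode(["H", "I", "X", "delete", "space", "A"]))  # prints "HI A"
```

Treating delete as a backspace keeps the decoder a pure function of the label sequence, which makes it easy to test independently of the camera and the model.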

To be done

We collected the training and test datasets ourselves.

- The training set contains 29 videos (26 letters, space, delete and nothing), each lasting 30 s.

- The test set also contains 29 videos, but each lasts only 10 s.
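To make the dataset size concrete, the sketch below works out the total footage from the figures above. The class label strings and the 30 fps frame rate are assumptions made for illustration only; the README does not state the recording frame rate.

```python
import string

# 29 classes as described above; the label strings are hypothetical.
CLASSES = list(string.ascii_uppercase) + ["space", "delete", "nothing"]

TRAIN_SECONDS_PER_VIDEO = 30  # from the README
TEST_SECONDS_PER_VIDEO = 10   # from the README
ASSUMED_FPS = 30              # assumption, not stated in the README


def total_seconds(num_videos: int, seconds_per_video: int) -> int:
    """Total footage in seconds for one video per class."""
    return num_videos * seconds_per_video


train_total = total_seconds(len(CLASSES), TRAIN_SECONDS_PER_VIDEO)
test_total = total_seconds(len(CLASSES), TEST_SECONDS_PER_VIDEO)

print(len(CLASSES))   # 29
print(train_total)    # 870 seconds of training video
print(test_total)     # 290 seconds of test video

# Frames per class would depend on the (assumed) frame rate.
print(TRAIN_SECONDS_PER_VIDEO * ASSUMED_FPS)  # 900 under the 30 fps assumption
```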

We will release our dataset soon.

We also attempted to train a model on the ASL Alphabet dataset and test it on ASL Alphabet Test, but the results were poor.

- Record video demo

- Upload training and test data

- Finish report

- Integrate camera and text windows into a single one

- Complete testing code (test.py)

- Complete demo with static image (demo.py)

- Tutorial for training

This project is inspired by these related projects.