PyTorch implementation of CycleGAN [1].
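For orientation, the Reconstruction column in the results below corresponds to the cycle-consistency term of [1]. The following is a sketch of that term in the paper's notation. The generator names $G: X \to Y$ and $F: Y \to X$ come from [1], not from this repository, and it is an assumption that this implementation uses the loss unchanged.

```latex
\mathcal{L}_{\text{cyc}}(G, F) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\lVert F(G(x)) - x \rVert_1\big]
  + \mathbb{E}_{y \sim p_{\text{data}}(y)}\big[\lVert G(F(y)) - y \rVert_1\big]
```

Under this reading, an input apple image $x$ gives the output $G(x)$ (an orange) and the reconstruction $F(G(x))$, which the loss pulls back toward $x$.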

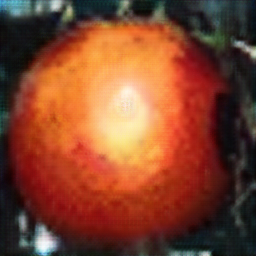

- apple2orange
  - apple training images: 995, orange training images: 1,019, apple test images: 266, orange test images: 248
- horse2zebra
  - horse training images: 1,067, zebra training images: 1,334, horse test images: 120, zebra test images: 140
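The image counts above can be checked against a local copy of a dataset with a short script. This is a minimal sketch, assuming each dataset is stored with the subfolders `trainA`, `trainB`, `testA`, and `testB`. That layout, the extension list, and the function name `count_images` are illustrative assumptions, not something this README confirms.

```python
import os

# Assumed image extensions; adjust if the dataset uses other formats.
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png")


def count_images(root, subfolders=("trainA", "trainB", "testA", "testB")):
    """Return {subfolder: number of image files}; missing folders count as 0.

    The subfolder names are a hypothetical layout, not taken from this README.
    """
    counts = {}
    for name in subfolders:
        folder = os.path.join(root, name)
        if not os.path.isdir(folder):
            counts[name] = 0
            continue
        # Count only files whose extension looks like an image.
        counts[name] = sum(
            1 for filename in os.listdir(folder)
            if filename.lower().endswith(IMAGE_EXTENSIONS)
        )
    return counts
```

If the layout matches, calling `count_images` on the apple2orange folder should reproduce the four numbers listed for that dataset (995, 1,019, 266, and 248), although which subfolder holds which class is again an assumption.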

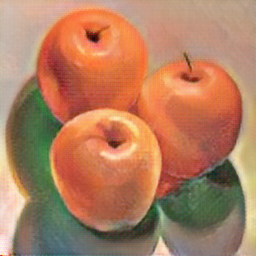

apple2orange (after 200 epochs)

| Input | Output | Reconstruction |
| --- | --- | --- |

| Input | Output | Reconstruction |
| --- | --- | --- |

- Learning Time
  - apple2orange - Avg. per epoch: 299.38 sec; Total 200 epochs: 62,225.33 sec

horse2zebra (after 200 epochs)

| Input | Output | Reconstruction |
| --- | --- | --- |

| Input | Output | Reconstruction |
| --- | --- | --- |

- Learning Time
  - horse2zebra - Avg. per epoch: 299.25 sec; Total 200 epochs: 61,221.27 sec
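The reported learning times are easier to compare in hours. The sketch below converts the two totals and also computes how much of each total falls outside 200 times the reported per-epoch average. The function name `summarize_training_time` is illustrative. The README does not say what the remaining time covers; treating it as work outside the timed epoch loop is an assumption.

```python
def summarize_training_time(total_sec, epochs, avg_epoch_sec):
    """Return (total hours, effective sec per epoch, sec not covered by avg * epochs)."""
    hours = total_sec / 3600.0
    effective_epoch_sec = total_sec / float(epochs)
    # Time not accounted for by the reported per-epoch average.
    unaccounted_sec = total_sec - avg_epoch_sec * epochs
    return hours, effective_epoch_sec, unaccounted_sec


# Figures copied from the Learning Time entries: (total sec, epochs, avg sec per epoch).
runs = {
    "apple2orange": (62225.33, 200, 299.38),
    "horse2zebra": (61221.27, 200, 299.25),
}

for name in sorted(runs):
    total_sec, epochs, avg_epoch_sec = runs[name]
    hours, effective, unaccounted = summarize_training_time(total_sec, epochs, avg_epoch_sec)
    print("%s: %.2f h total, %.2f sec/epoch effective, %.2f sec unaccounted"
          % (name, hours, effective, unaccounted))
```

Both runs come out at roughly 17 hours of total training time on the hardware listed below.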

- Ubuntu 14.04 LTS

- NVIDIA GTX 1080 Ti

- CUDA 8.0

- Python 2.7.6

- PyTorch 0.1.12

- matplotlib 1.3.1

- scipy 0.19.1

[1] Zhu, Jun-Yan, et al. "Unpaired image-to-image translation using cycle-consistent adversarial networks." arXiv preprint arXiv:1703.10593 (2017).

(Full paper: https://arxiv.org/pdf/1703.10593.pdf)