To use this conda environment, install Miniconda, then run this command:

conda env create -f environment.yml

If you would like to use OpenVINO for inference, please check the official OpenVINO documentation for installation instructions. This code uses OpenVINO 2020.1.023.

We can easily download the VCTK dataset using torchaudio. Please use Part 0 - Download VCTK Dataset.ipynb if you are stuck.

Simply run the code in the Part 1 - Training.ipynb notebook and you are good to go.

Here is the training process if you want to learn more:

- Dataset Preparation

  If you would like to use your own dataset, use the same folder structure as VCTK:

  - vctk_dataset
    - txt
      - speaker_1
        - sentence_1.txt
        - sentence_2.txt
      - speaker_2
        - sentence_1.txt
        - sentence_2.txt
    - wav48
      - speaker_1
        - sentence_1.wav
        - sentence_2.wav
      - speaker_2
        - sentence_1.wav
        - sentence_2.wav
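Before training on your own data, it can help to check that the folder really follows this layout. Here is a minimal sketch using only the standard library. The helper name find_unpaired_files is hypothetical (it is not part of this repo), and it assumes every .wav under wav48 is expected to have a .txt with the same speaker folder and file name under txt.

```python
from pathlib import Path


def find_unpaired_files(root):
    """Return (wavs_without_txt, txts_without_wav) as sorted 'speaker/sentence' keys.

    Hypothetical helper: assumes the VCTK-style layout root/wav48/<speaker>/<name>.wav
    and root/txt/<speaker>/<name>.txt.
    """
    root = Path(root)
    # Build keys such as "speaker_1/sentence_1" so both trees can be compared directly.
    wav_keys = {f"{p.parent.name}/{p.stem}" for p in (root / "wav48").glob("*/*.wav")}
    txt_keys = {f"{p.parent.name}/{p.stem}" for p in (root / "txt").glob("*/*.txt")}
    return sorted(wav_keys - txt_keys), sorted(txt_keys - wav_keys)
```

Calling find_unpaired_files("vctk_dataset") returns two lists. Both are empty when every recording has a matching transcript.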

- Dataset & Dataloader

  I have created VCTKTripletDataset and VCTKTripletDataloader, which prepare triplet data to train the speaker embedding. Here is a quick look:

  dataset = VCTKTripletDataset(wav_path, txt_path, n_data=3000, min_dur=1.5)

  dataloader = VCTKTripletDataloader(dataset, batch_size=32)

  In a nutshell, here is what it does:

  - Only audio longer than min_dur seconds is considered as data.
  - Randomly choose 2 speakers, A and B, from the dataset folder.
  - Randomly choose 2 audios from A and 1 from B, and mark them as anchor, positive, and negative.
  - Repeat n_data times. Now you have a dataset.
  - The dataset is loaded in minibatches of size batch_size.
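The sampling steps above can be sketched in plain Python. This is only an illustration of the idea, not the actual VCTKTripletDataset code. The function name sample_triplets and its durations input (a dict of speaker to {audio path: length in seconds}) are hypothetical. As a simplification, speakers with fewer than two usable recordings are skipped entirely.

```python
import random


def sample_triplets(durations, n_data, min_dur, seed=None):
    """Return n_data (anchor, positive, negative) path triplets.

    durations: {speaker: {audio_path: seconds}} (hypothetical input format).
    """
    rng = random.Random(seed)
    # Only audio longer than min_dur seconds is considered as data.
    pool = {
        spk: [path for path, dur in files.items() if dur > min_dur]
        for spk, files in durations.items()
    }
    # Simplification: a speaker needs 2 usable files to supply anchor and positive.
    eligible = sorted(spk for spk, files in pool.items() if len(files) >= 2)
    if len(eligible) < 2:
        raise ValueError("need at least 2 speakers with 2 or more usable recordings")

    triplets = []
    for _ in range(n_data):
        # Randomly choose 2 different speakers, A and B.
        spk_a, spk_b = rng.sample(eligible, 2)
        # 2 different audios from A (anchor, positive) and 1 from B (negative).
        anchor, positive = rng.sample(pool[spk_a], 2)
        negative = rng.choice(pool[spk_b])
        triplets.append((anchor, positive, negative))
    return triplets
```

Batching is then just a matter of slicing the returned list into chunks of batch_size, which the dataloader handles for you.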

- Architecture & Config

  Simply create the model architecture you would like to use. I have made one sample for you in src/model.py. You can use it directly by simply changing the hyperparameters. Please follow the notebook if you are confused.

  ❗ Note: If you would like to use a custom architecture in OpenVINO, make sure it is compatible with the OpenVINO Model Optimizer and Inference Engine.

- Training Preparation

  Set up the model, criterion, optimizer, and callback here.
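The criterion is not spelled out here. Since the data comes as anchor, positive, and negative triplets, a triplet margin loss is a natural candidate (PyTorch ships one as torch.nn.TripletMarginLoss). The pure-Python sketch below shows what such a loss computes for a single triplet. Treat it as an assumption about the criterion, not as the notebook's actual code. The function names are hypothetical.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_margin_loss(anchor, positive, negative, margin=1.0):
    """max(d(anchor, positive) - d(anchor, negative) + margin, 0) for one triplet."""
    gap = euclidean(anchor, positive) - euclidean(anchor, negative)
    # The loss reaches zero once the negative is at least `margin` farther away
    # from the anchor than the positive is.
    return max(gap + margin, 0.0)
```

Minimizing this pulls recordings of the same speaker together in the embedding space and pushes different speakers apart, which is what the embedding visualization is meant to confirm.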

- Training

  As the name says, running the code will train the model.

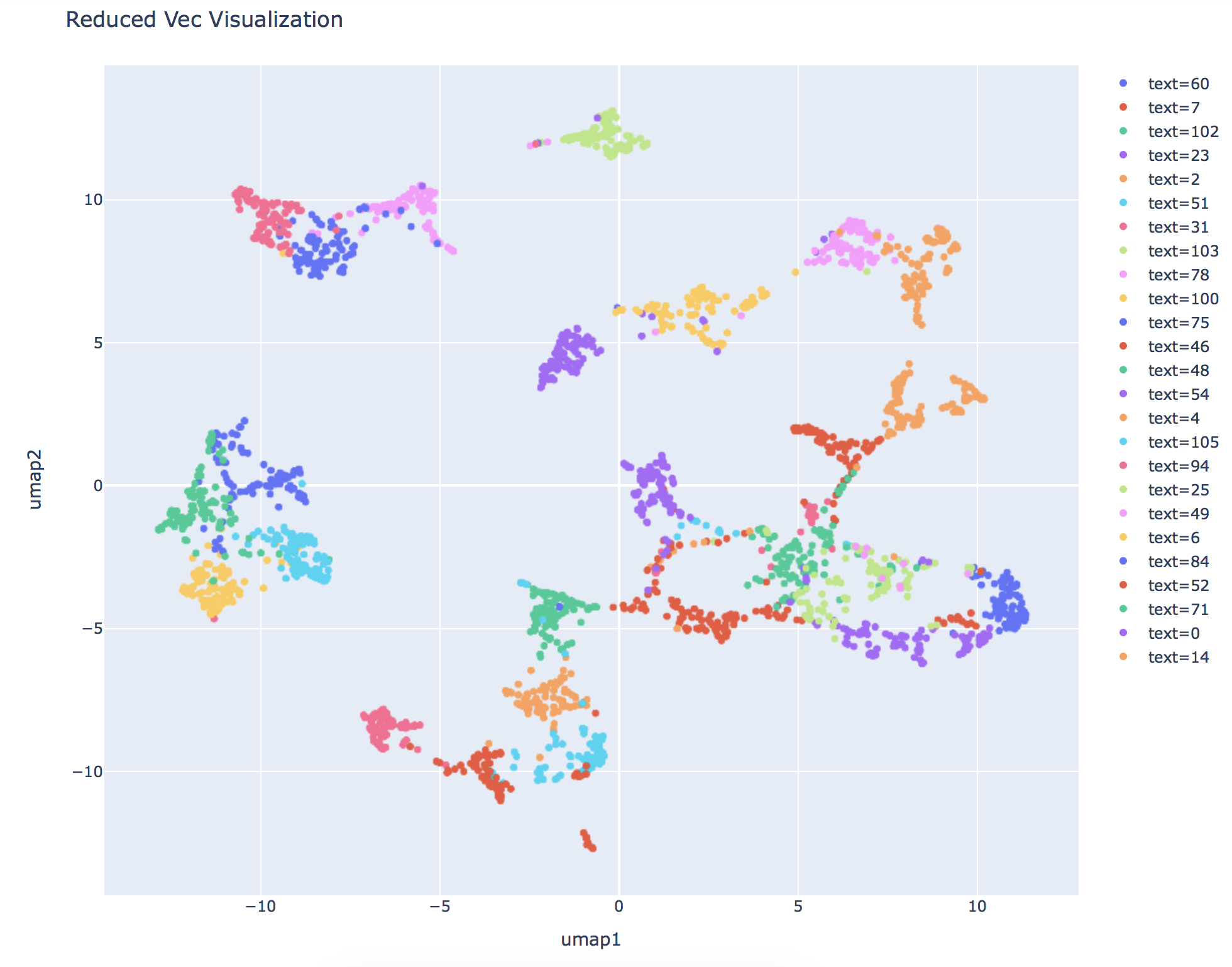

While training, you can visualize the embedding to confirm that the model is actually learning. I have prepared VCTKSpeakerDataset and VCTKSpeakerDataloader for this purpose. Here is a sample visualization using Uniform Manifold Approximation and Projection (UMAP).

I have prepared PyTorchPredictor and OpenVINOPredictor objects for speaker diarization. Here is a sneak peek:

p = PyTorchPredictor(config_path, model_path, max_frame=45, hop=3)

p = OpenVINOPredictor(model_xml, model_bin, config_path, max_frame=45, hop=3)

To use it, simply pass in the arguments and call .predict(wav_path). It returns the diarization timestamps and speakers. The timestamps are in seconds.
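The predictor's internals are not shown here, but the max_frame and hop arguments suggest that the audio is processed in overlapping windows. As a purely hypothetical illustration of how window-level speaker labels are commonly turned into timestamps, here is a small sketch. The function merge_windows and its inputs are assumptions for illustration and are not part of this repo's API.

```python
def merge_windows(window_starts, window_labels, window_dur):
    """Collapse runs of identical labels into (start_sec, end_sec, speaker) segments.

    window_starts: start time of each window in seconds, in increasing order.
    window_labels: predicted speaker label for each window.
    window_dur: duration of one window in seconds.
    """
    segments = []
    for start, label in zip(window_starts, window_labels):
        if segments and segments[-1][2] == label:
            continue  # same speaker keeps talking, so the open segment continues
        segments.append([start, None, label])
    # Each segment ends where the next one begins.
    for seg, nxt in zip(segments, segments[1:]):
        seg[1] = nxt[0]
    # The last segment ends when the final window ends.
    if segments:
        segments[-1][1] = window_starts[-1] + window_dur
    return [tuple(seg) for seg in segments]
```

For example, four windows starting at 0.0, 0.5, 1.0, and 1.5 seconds with labels 0, 0, 1, 1 and a window length of 1.0 second collapse into two segments, one per speaker.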

You can use this sample dataset in Kaggle to test the speaker diarization. For example:

p = PyTorchPredictor("model/weights_best.pth", "model/configs.pth")

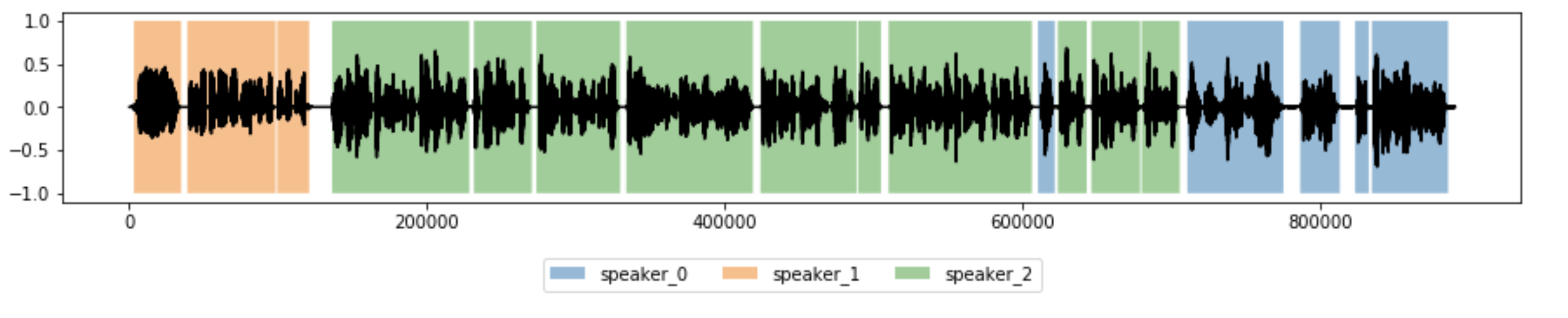

timestamps, speakers = p.predict("dataset/test/test_1.wav", plot=True)

setting plot=True provides you with the diarization visualization like this

If you would like to use OpenVINO, use .to_onnx(fname, outdir) to convert the model into ONNX format.

p.to_onnx("speaker_diarization.onnx", "model/openvino")

I hope you like the project. I made this repo for educational purposes, so it might need further tweaking to reach production level. Feel free to create an issue if you need help, and I hope I'll have the time to help you. Thank you.