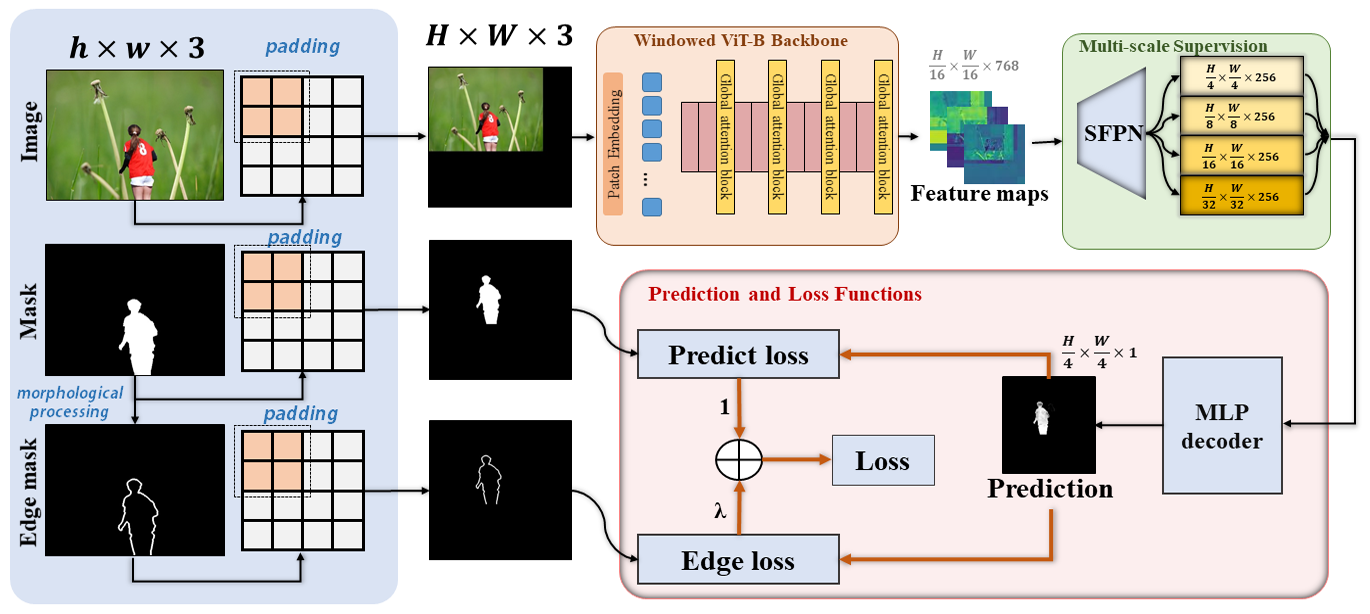

This repo contains an official PyTorch implementation of our paper: IML-ViT: Benchmarking Image Manipulation Localization by Vision Transformer.

- [2023/12/24] Training code released! You are welcome to discuss, report bugs, and share interesting findings. We will do our best to improve this work.

- [2023/10/03] 🎉🎉 Our work NCL-IML, which applies contrastive learning to the image manipulation localization task to address the data insufficiency problem, has been accepted by ICCV 2023! 🎉🎉

- Ubuntu LTS 20.04.1
- CUDA 11.7 + cuDNN 8.4.0
- Python 3.8
- PyTorch 1.11
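To compare your machine against the tested setup above, a small script like the following may help. It is a minimal sketch of our own (`report_environment` is a hypothetical helper, not part of the repository); it only reads version information and still runs if PyTorch is not installed.

```python
import platform


def report_environment() -> dict:
    """Collect version info to compare against the tested setup (hypothetical helper)."""
    info = {"python": platform.python_version(), "os": platform.platform()}
    try:
        import torch
    except ImportError:
        # PyTorch is missing; report only the interpreter and OS.
        info["torch"] = None
        return info
    info["torch"] = torch.__version__
    info["cuda"] = torch.version.cuda  # None for CPU-only builds
    # Integer encoding such as 8400 for cuDNN 8.4.0; None if cuDNN is unavailable.
    info["cudnn"] = torch.backends.cudnn.version()
    info["cuda_available"] = torch.cuda.is_available()
    return info


if __name__ == "__main__":
    for key, value in report_environment().items():
        print(f"{key}: {value}")
```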

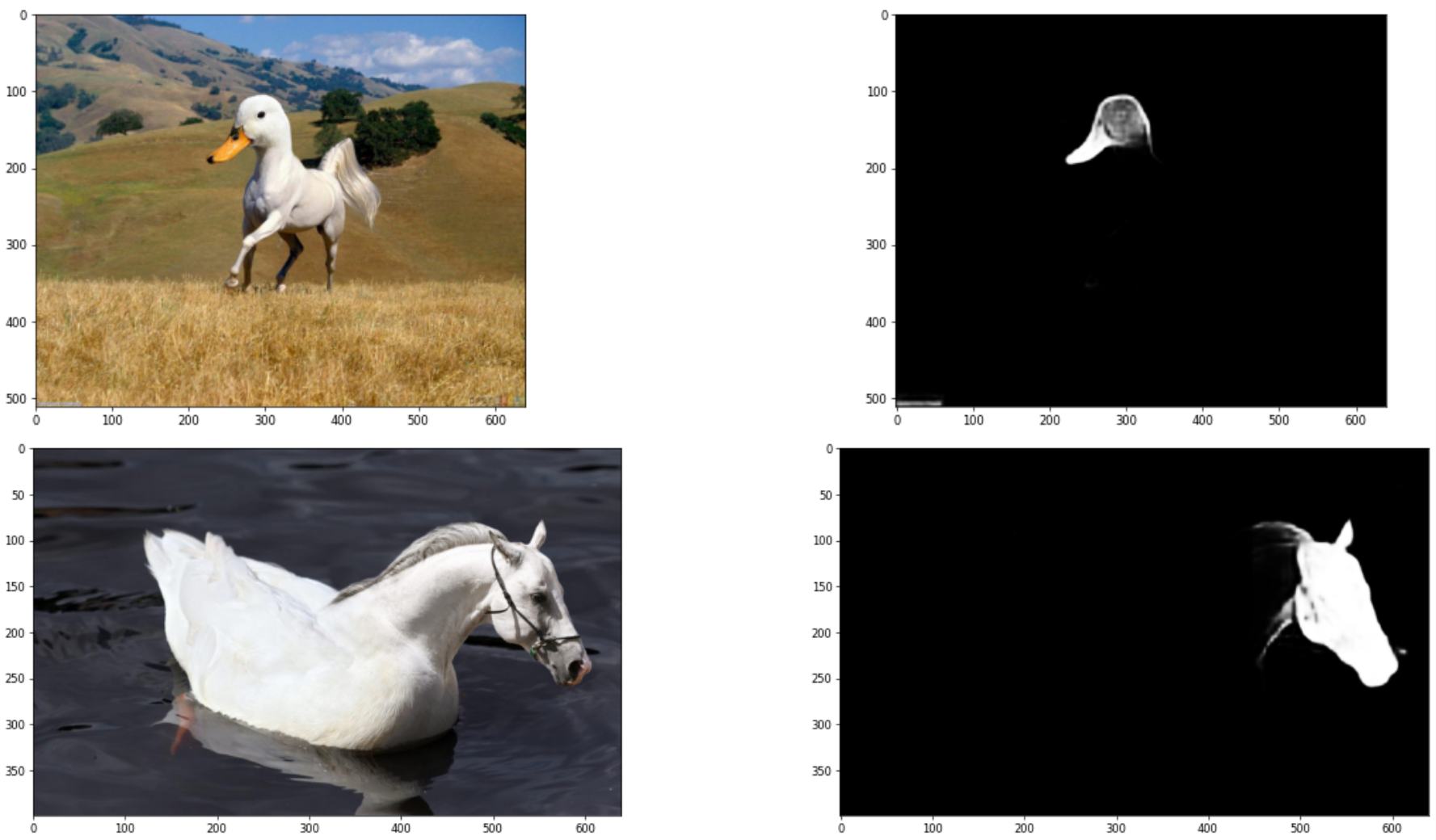

- We highly recommend you try out our IML-ViT model on Colab!

- We also prepared a playground where you can conveniently test our model on various images from the Internet.

You can also follow the steps below to run the IML-ViT pipeline offline. The only difference from the Colab version is the lack of a playground for testing online images.

- Step 1: Install the packages in `requirements.txt` first with `pip install -r requirements.txt`.

- Step 2: Download the pre-trained IML-ViT weights from Google Drive or Baidu NetDisk and place them at `./checkpoints/iml-vit_checkpoint.pth`.
  - Note that this checkpoint was trained for 144 epochs on both the authentic and manipulated images of CASIAv2, using NVIDIA 3090 GPUs with batch size 1.

- Step 3: Follow the instructions in `Demo.ipynb` to see how we pad the images and run inference with IML-ViT.
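The padding mentioned in Step 3 can be illustrated with the NumPy sketch below. It assumes a fixed 1024×1024 input canvas and zero-padding at the bottom and right edges; both are our assumptions for illustration, so please check `Demo.ipynb` for the exact procedure. The helper names `pad_to_square` and `crop_to_original` are hypothetical.

```python
import numpy as np


def pad_to_square(image: np.ndarray, size: int = 1024):
    """Zero-pad an (H, W[, C]) image to (size, size[, C]) at the bottom/right.

    Returns the padded array and the original (H, W) so predictions can be cropped back.
    """
    h, w = image.shape[:2]
    if h > size or w > size:
        raise ValueError(f"image of shape {(h, w)} exceeds the {size}x{size} canvas")
    padded = np.zeros((size, size) + image.shape[2:], dtype=image.dtype)
    padded[:h, :w] = image  # original pixels stay in the top-left corner
    return padded, (h, w)


def crop_to_original(mask: np.ndarray, shape):
    """Discard the padded region of a predicted (size, size) mask."""
    h, w = shape
    return mask[:h, :w]


image = np.full((300, 500, 3), 255, dtype=np.uint8)
padded, shape = pad_to_square(image)
prediction = padded[..., 0] / 255.0  # stand-in for the model's output mask
print(padded.shape, crop_to_original(prediction, shape).shape)  # (1024, 1024, 3) (300, 500)
```

Keeping the original shape is what makes it possible to drop the padded region from the prediction before computing metrics or saving the mask.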

The training code for IML-ViT is now released!

For a quick start, first prepare your dataset to fit the protocol of our dataloader. Alternatively, you can design your own dataloader and modify the corresponding interfaces.

- We defined two types of Dataset classes:
  - `json_dataset`, which reads input images and their corresponding ground truths from a JSON file with a protocol like this:
    ```
    [
        [
          "/Dataset/CASIAv2/Tp/Tp_D_NRN_S_N_arc00013_sec00045_11700.jpg",
          "/Dataset/CASIAv2/Gt/Tp_D_NRN_S_N_arc00013_sec00045_11700_gt.png"
        ],
        ......
        [
          "/Dataset/CASIAv2/Au/Au_nat_30198.jpg",
          "Negative"
        ],
        ......
    ]
    ```
    where "Negative" represents an all-black ground truth (a fully authentic image) that does not need a path.
  - `mani_dataset`, which automatically loads image and ground-truth pairs from a directory with sub-directories named `Tp` (for input images) and `Gt` (for ground truths). This class generates the pairs from the sorted output of `os.listdir()`. You can take `images\sample_iml_dataset` as an example.

- These datasets apply zero-padding automatically. Standard transforms such as ImageNet normalization are also applied.

- Both datasets can generate an `edge_mask` when the `edge_width` parameter is specified. In that case, the dataset returns 3 objects (image, GT, edge mask); with `edge_width=None`, it returns only 2.

- For inference, the actual shape of the original image is crucial. You can set `if_return_shape=True` to get this value.
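To illustrate what an edge mask with a given `edge_width` might look like, here is a NumPy sketch that marks every pixel whose (2·`edge_width`+1)-square neighbourhood contains both manipulated and authentic pixels (morphological dilation minus erosion). This is our own illustration, not the repository's edge mask generator, whose exact definition may differ; `make_edge_mask` is a hypothetical name, and `sliding_window_view` requires NumPy 1.20 or later.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def make_edge_mask(gt: np.ndarray, edge_width: int) -> np.ndarray:
    """Return a uint8 mask marking pixels near the boundary of a binary (H, W) ground truth."""
    gt = (gt > 0).astype(np.uint8)
    k = 2 * edge_width + 1
    # Replicate border pixels so the image border itself is not treated as an edge.
    padded = np.pad(gt, edge_width, mode="edge")
    windows = sliding_window_view(padded, (k, k))  # shape (H, W, k, k)
    dilated = windows.max(axis=(-2, -1))
    eroded = windows.min(axis=(-2, -1))
    # A pixel lies in the edge band if its neighbourhood mixes 0s and 1s.
    return (dilated != eroded).astype(np.uint8)


gt = np.zeros((20, 20), dtype=np.uint8)
gt[5:15, 5:15] = 255  # a square "manipulated" region
edge = make_edge_mask(gt, edge_width=1)
print(edge[10, 10], edge[5, 5], edge[0, 0])  # 0 1 0
```

A larger `edge_width` widens the band on both sides of the boundary, which is one plausible way such a mask can emphasize manipulation boundaries during training.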

Thus, you can either organize your dataset in the `mani_dataset` layout or generate a JSON file for each dataset you want to train or test on. We have prepared a naive IML transforms class and an edge mask generator class. You can call them directly through `json_dataset` or `mani_dataset` in `./utils/datasets.py` to check whether your revised dataset is correct.
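As a sketch of the JSON option, the script below pairs files in `Tp` and `Gt` by sorted `os.listdir()` order (the same convention described for `mani_dataset`) and writes a list in the `json_dataset` protocol, adding authentic images with the `"Negative"` placeholder. The separate authentic-image folder and the helper names `build_json_list` and `write_json_list` are assumptions for illustration.

```python
import json
import os


def build_json_list(tp_dir, gt_dir, au_dir=None):
    """Pair images with ground truths by sorted filename order (hypothetical helper)."""
    tp_files = sorted(os.listdir(tp_dir))
    gt_files = sorted(os.listdir(gt_dir))
    if len(tp_files) != len(gt_files):
        raise ValueError(f"{len(tp_files)} images but {len(gt_files)} ground truths")
    pairs = [
        [os.path.join(tp_dir, tp), os.path.join(gt_dir, gt)]
        for tp, gt in zip(tp_files, gt_files)
    ]
    if au_dir is not None:
        # Authentic images have an all-black ground truth, written as "Negative".
        pairs += [[os.path.join(au_dir, au), "Negative"] for au in sorted(os.listdir(au_dir))]
    return pairs


def write_json_list(pairs, out_path):
    """Write the pairs in the [[image, ground_truth], ...] format."""
    with open(out_path, "w") as f:
        json.dump(pairs, f, indent=2)


# Example (adjust the paths):
# write_json_list(build_json_list("/Dataset/CASIAv2/Tp", "/Dataset/CASIAv2/Gt", "/Dataset/CASIAv2/Au"), "casiav2.json")
```

Pairing by sorted order only works if both folders sort identically and contain no stray files (e.g. `.DS_Store`); matching on filename stems is a safer choice when the naming schemes differ.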

You may follow the instructions to download the Masked Autoencoder pre-trained weights before training. Thanks for their impressive work and their open-source contributions!
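Before launching training, you may want to sanity-check the downloaded MAE file. The sketch below assumes the checkpoint is a dictionary that stores the ViT weights under a `"model"` key (as in the official MAE releases, to our knowledge) and otherwise treats the whole file as a state dict. `load_mae_state_dict` and `summarize` are hypothetical helpers; `main_train.py` performs its own loading from `--vit_pretrain_path`.

```python
import torch


def load_mae_state_dict(path: str) -> dict:
    """Load a checkpoint on CPU and return its state dict (hypothetical helper)."""
    checkpoint = torch.load(path, map_location="cpu")
    # Assumption: MAE releases wrap the weights as {"model": state_dict}.
    state_dict = checkpoint.get("model", checkpoint) if isinstance(checkpoint, dict) else checkpoint
    if not isinstance(state_dict, dict):
        raise TypeError(f"unexpected checkpoint type: {type(state_dict).__name__}")
    return state_dict


def summarize(state_dict: dict, limit: int = 5) -> None:
    """Print the parameter count and the first few parameter names and shapes."""
    total = sum(t.numel() for t in state_dict.values() if torch.is_tensor(t))
    print(f"{len(state_dict)} entries, {total / 1e6:.1f}M parameters")
    for name in list(state_dict)[:limit]:
        value = state_dict[name]
        print(name, tuple(value.shape) if torch.is_tensor(value) else type(value).__name__)


# Example (adjust the path):
# summarize(load_mae_state_dict("<Your path to pretrained weights>/mae_pretrain_vit_base.pth"))
```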

The main entry point is `main_train.py`. You may use the following script to launch training on Linux:

```shell
torchrun \
  --standalone \
  --nnodes=1 \
  --nproc_per_node=1 \
  main_train.py \
  --world_size 1 \
  --batch_size 1 \
  --data_path "<Your custom dataset path>/CASIA2.0" \
  --epochs 200 \
  --lr 1e-4 \
  --min_lr 5e-7 \
  --weight_decay 0.05 \
  --edge_lambda 20 \
  --predict_head_norm "BN" \
  --vit_pretrain_path "<Your path to pretrained weights>/mae_pretrain_vit_base.pth" \
  --test_data_path "<Your custom dataset path>/CASIA1.0" \
  --warmup_epochs 4 \
  --output_dir ./output_dir/ \
  --log_dir ./output_dir/ \
  --accum_iter 32 \
  --seed 42 \
  --test_period 4 \
  --num_workers 4 \
  2> train_error.log 1>train_log.log
```

- `data_path` is the training dataset.
- `test_data_path` is the dataset used for testing during the training process.
- `vit_pretrain_path` is the path to the MAE pre-trained ViT weights.

Replace the paths in `<>` with your own paths. The settings above are our generally recommended training parameters; if you have a more powerful device, you may increase the batch size and adjust the other parameters accordingly.
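With `--batch_size 1` and `--accum_iter 32`, gradients from several mini-batches are accumulated before each optimizer step, so each update covers `batch_size × accum_iter × nproc_per_node` = 1 × 32 × 1 = 32 images under the settings above. The sketch below shows plain gradient accumulation in PyTorch; we assume `--accum_iter` behaves this way (as in the MAE-style training code these arguments appear to follow), while the actual loop in `main_train.py` may also handle learning-rate scheduling and mixed precision. `train_with_accumulation` is a hypothetical name.

```python
import torch
import torch.nn as nn


def train_with_accumulation(model, optimizer, batches, accum_iter, loss_fn=None):
    """Run one pass over `batches`, stepping the optimizer every `accum_iter` batches.

    Returns the number of optimizer steps taken. Trailing batches that do not fill
    a complete group are not applied in this sketch.
    """
    loss_fn = loss_fn or nn.MSELoss()
    model.train()
    optimizer.zero_grad()
    steps = 0
    for i, (inputs, targets) in enumerate(batches):
        # Divide by accum_iter so the summed gradients equal the average over the group.
        loss = loss_fn(model(inputs), targets) / accum_iter
        loss.backward()  # gradients accumulate in .grad until zero_grad()
        if (i + 1) % accum_iter == 0:
            optimizer.step()
            optimizer.zero_grad()
            steps += 1
    return steps
```

Note that accumulation does not enlarge the batch seen by BatchNorm, which still normalizes over `batch_size` images per forward pass; this is relevant to the `predict_head_norm` discussion that follows.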

Note that we observed that the `predict_head_norm` parameter, i.e., the normalization type of the prediction head, can greatly influence the performance of the model. We tested three types of normalization in the decoder head; their results may vary with the dataset configuration and other factors. Some intuitive conclusions are as follows:

- "LN" (Layer Norm): The fastest convergence, but poor generalization performance.

- "BN" (Batch Norm): When authentic images are included during training, setting batch size = 2 may lead to poor performance. If you can train with a larger batch size (e.g., an NVIDIA A40 with 48 GB of memory can train with batch size = 4), it may perform better.

- "IN" (Instance Norm): A variant that we found to converge reliably, equivalent to BatchNorm with batch size = 1. If you observe abnormal behavior with BatchNorm, consider trying Instance Normalization. Note that in this case `nn.InstanceNorm2d` should be created with `track_running_stats=True` and `affine=True`, rather than PyTorch's defaults (both `False`).
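The three options can be sketched as a small factory that returns a normalization layer for `(N, C, H, W)` feature maps. `build_head_norm` and `LayerNorm2d` are hypothetical names, and the channel-wise LayerNorm is our assumption; the repository's head may implement "LN" differently. The "IN" branch applies the non-default settings recommended above.

```python
import torch
import torch.nn as nn


class LayerNorm2d(nn.Module):
    """Channel-wise LayerNorm for (N, C, H, W) tensors (hypothetical helper)."""

    def __init__(self, num_channels: int):
        super().__init__()
        self.norm = nn.LayerNorm(num_channels)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # Move channels last, normalize over C, then restore (N, C, H, W).
        return self.norm(x.permute(0, 2, 3, 1)).permute(0, 3, 1, 2)


def build_head_norm(norm_type: str, num_channels: int) -> nn.Module:
    """Hypothetical factory mirroring the --predict_head_norm choices."""
    if norm_type == "LN":
        return LayerNorm2d(num_channels)
    if norm_type == "BN":
        return nn.BatchNorm2d(num_channels)
    if norm_type == "IN":
        # Override PyTorch's defaults (affine=False, track_running_stats=False).
        return nn.InstanceNorm2d(num_channels, affine=True, track_running_stats=True)
    raise ValueError(f"unknown norm type: {norm_type!r}")


x = torch.randn(1, 8, 16, 16)
bn, inorm = build_head_norm("BN", 8), build_head_norm("IN", 8)
# With a single sample in training mode, BN and IN normalize over the same (H, W) values.
print(torch.allclose(bn(x), inorm(x), atol=1e-5))  # True
```

The final check illustrates the batch-size-1 equivalence mentioned above: in training mode, both layers compute per-channel statistics over the same spatial positions of the single image.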

We sincerely welcome reports of other strange or surprising findings about the parameter settings in the issues. They can contribute to a more comprehensive understanding of the inherent properties of IML-ViT in the research community.

For more information, run `python main_train.py -h` to see the full list of command-line arguments.

We recommend you monitor the training process with the following measures:

- Check the logging file:
  - We have redirected the standard output to the `train_log.log` file (standard error goes to `train_error.log`). If training proceeds correctly, you can check the latest status at the end of this file.

- Use TensorBoard:
  - For a more vivid and straightforward visualization, you can launch a TensorBoard process under `./output_dir` with the command `tensorboard --logdir ./`. Then you can view the statistics and graphs in your web browser.
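If you prefer to check the log from Python (for example, in a notebook), this minimal sketch mimics `tail -n`. `tail` is our own hypothetical helper; it streams the file line by line and keeps only the last `n` lines in memory.

```python
from collections import deque


def tail(path: str, n: int = 20) -> list:
    """Return the last n lines of a text file, keeping at most n lines in memory."""
    with open(path, "r", errors="replace") as f:
        return list(deque(f, maxlen=n))


# Example: print("".join(tail("train_log.log")))
```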

You can use our Colab demo or offline demo to check the performance of your trained IML-ViT model; the only change needed is to replace the default checkpoint with your own.

If you want to train this model on the CASIAv2 dataset, we provide a revised version of CASIAv2 that corrects several mistakes in the original dataset released by its authors. Details can be found at the link below:

If you find our work interesting or helpful, please don't hesitate to give us a star🌟 and cite our paper🥰! Your support truly encourages us!

```bibtex
@misc{ma2023imlvit,
      title={IML-ViT: Benchmarking Image Manipulation Localization by Vision Transformer},
      author={Xiaochen Ma and Bo Du and Zhuohang Jiang and Ahmed Y. Al Hammadi and Jizhe Zhou},
      year={2023},
      eprint={2307.14863},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}
```