Local Transcribe with Whisper is a user-friendly desktop application that allows you to transcribe audio and video files using the Whisper ASR system. This application provides a graphical user interface (GUI) built with Python and the Tkinter library, making it easy to use even for those not familiar with programming.

- Simpler usage:

- File type: You no longer need to specify the file type. The program will only transcribe eligible files.

- Language: Added an option to specify the language, which may improve results in some cases. Clear the default text to use automatic language detection.

- Model selection: Now a dropdown option that includes most models for typical use.

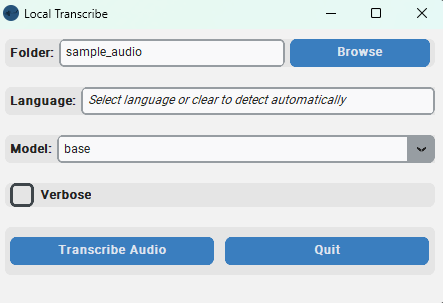

- New and improved GUI.

- Executable: On Windows and don't want to install Python? Try the executable (.exe) file! See below for instructions (experimental).
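Because the app picks eligible files on its own, here is a minimal sketch of how such filtering can work: keep only regular files whose extension is on an allow-list. The `eligible_files` helper and the `MEDIA_EXTENSIONS` set are hypothetical; the app's actual list of accepted formats may differ.

```python
from pathlib import Path
from typing import List

# Hypothetical allow-list of audio/video extensions; the app's real list may differ.
MEDIA_EXTENSIONS = {".mp3", ".m4a", ".wav", ".flac", ".ogg", ".mp4", ".mkv", ".mov", ".webm"}


def eligible_files(folder: str) -> List[Path]:
    """Return the audio/video files directly inside `folder`, sorted by name.

    Subfolders and files with other extensions are skipped; the extension
    check is case-insensitive, so "talk.M4A" counts as eligible.
    """
    return sorted(
        p for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() in MEDIA_EXTENSIONS
    )
```

Filtering by extension is cheap and predictable; a stricter approach would probe each file, but that needs ffmpeg and is slower.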

- Select the folder containing the audio or video files you want to transcribe. Tested with .m4a files.

- Choose the language of the files you are transcribing. You can either select a specific language or let the application automatically detect the language.

- Select the Whisper model to use for the transcription. Available models include "base.en", "base", "small.en", "small", "medium.en", "medium", and "large". Models ending in .en perform better if you're transcribing English, especially the base and small models.

- Enable the verbose mode to receive detailed information during the transcription process.

- Monitor the progress of the transcription with the progress bar and terminal.

- Confirmation dialog before starting the transcription to ensure you have selected the correct folder.

- View the transcribed text in a message box once the transcription is completed.
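The language and model rules above can be summarized in a few lines. This is a minimal sketch, not the app's code: `choose_model` and `SIZES_WITH_EN` are hypothetical names, and it assumes that an empty language field is passed to Whisper as `None` (which triggers automatic detection) and that English picks the .en variant whenever the chosen size has one ("large" does not).

```python
from typing import Optional, Tuple

# Sizes from the app's dropdown that also come in an English-only ".en" variant.
SIZES_WITH_EN = {"base", "small", "medium"}


def choose_model(size: str, language_field: str) -> Tuple[str, Optional[str]]:
    """Map a hypothetical GUI state to (model_name, language).

    A blank language field becomes None so Whisper detects the language itself.
    English selects the ".en" model when one exists for the chosen size.
    """
    language = language_field.strip() or None
    is_english = language is not None and language.lower() in {"en", "english"}
    if is_english and size in SIZES_WITH_EN:
        return f"{size}.en", language
    return size, language
```

For example, `choose_model("small", "English")` gives `("small.en", "English")`, while `choose_model("large", "en")` keeps `"large"` because there is no `large.en`.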

Download the zip folder and extract it to your preferred working folder.

Alternatively, clone the repository with:

git clone https://github.com/soderstromkr/transcribe.git

The executable version of Local Transcribe with Whisper is a standalone program and should work out of the box. This experimental version is available if you have Windows and do not have (or don't want to install) Python and the additional dependencies. However, it requires more disk space (around 1 GB), has no GPU acceleration, and has only been lightly tested for bugs. Let me know if you run into any issues!

- Download the project folder, as shown in the image above.

- Find and unzip build.zip (get a coffee or a tea, this might take a while depending on your computer)

- Run the executable (app.exe) file.

Installing with Python is recommended if you don't have Windows, if you have Windows and already use Python, or if you want GPU acceleration (PyTorch and CUDA) for faster transcriptions. I would generally recommend this method anyway, but I understand that not everyone wants to go through installing Python, Anaconda, and the other required packages.

- This script was made and tested in an Anaconda environment with Python 3.10. I recommend this method if you're not familiar with Python. See here for instructions. You might need administrator rights.

- Whisper requires some additional libraries. The setup page states: "The codebase also depends on a few Python packages, most notably HuggingFace Transformers for their fast tokenizer implementation and ffmpeg-python for reading audio files." You might not need to install Transformers separately. However, you may need a conda installation of ffmpeg (see footnote 1), which takes care of setting up the PATH variables. From the Anaconda Prompt, type or copy the following:

conda install -c conda-forge ffmpeg-python
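Whisper decodes audio by calling the ffmpeg command-line tool, so a quick way to confirm the PATH setup worked is to ask Python where `ffmpeg` resolves. A minimal sketch using the standard library's `shutil.which`; the `find_ffmpeg` name is hypothetical.

```python
import shutil
from typing import Optional


def find_ffmpeg() -> Optional[str]:
    """Return the full path of the ffmpeg executable found on PATH, or None."""
    return shutil.which("ffmpeg")


if __name__ == "__main__":
    location = find_ffmpeg()
    if location is None:
        # Without ffmpeg on PATH, Whisper will most likely fail when loading audio.
        print("ffmpeg was not found on PATH.")
    else:
        print(f"ffmpeg found at {location}")
```

Run it from the same environment (for example the Anaconda Prompt) that you will use to start the app, since PATH can differ between terminals.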

- The main functionality comes from openai-whisper. See their page for details. As of 2023-03-22 you can install via:

pip install -U openai-whisper

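- The app is built on Tkinter and CustomTkinter, so make sure both are available in your Python environment. Tkinter is part of the Python standard library and ships with most Python installations, so it cannot be installed with pip (on some Linux distributions it comes as a separate system package, such as python3-tk). CustomTkinter can be installed via pip:

pip install customtkinter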
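Before launching, you can check that the packages from the steps above are importable. This is a minimal sketch: `check_environment` and `REQUIRED_MODULES` are hypothetical names, and it assumes `whisper` is the import name of openai-whisper. `importlib.util.find_spec` only locates each module, so nothing heavy is actually imported.

```python
import importlib.util
import sys
from typing import Dict

# Import names of the packages this README asks you to install.
REQUIRED_MODULES = ("whisper", "tkinter", "customtkinter")


def check_environment() -> Dict[str, bool]:
    """Report which required modules can be found, without importing them."""
    return {name: importlib.util.find_spec(name) is not None for name in REQUIRED_MODULES}


if __name__ == "__main__":
    version = f"{sys.version_info.major}.{sys.version_info.minor}"
    print(f"Python {version} (the app was tested with 3.10)")
    for name, found in check_environment().items():
        print(f"{name:15} {'OK' if found else 'MISSING'}")
```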

- Run the app:

- For Windows: In the same folder as the app.py file, run the app from the terminal with

python app.py

or with the batch file run_Windows.bat, which assumes you have conda installed and are using the base environment. (This is for simplicity; users are usually advised to create a separate environment, see here for more info.) Just make sure the batch file uses the correct environment (right-click the file and choose Edit to make any changes). If you want to download a model first and then go offline for transcription, I recommend running the model once on the default sample folder, which downloads the model locally.

- For Mac: I haven't figured out a better way to do this yet; see the instructions here.
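If you prefer to pre-download a model without the GUI, here is a minimal sketch using openai-whisper's `whisper.load_model`, which downloads a checkpoint the first time it is requested. The cache path mirrors whisper's default `download_root` as far as I know (`$XDG_CACHE_HOME/whisper`, falling back to `~/.cache/whisper`); treat that as an assumption and check your installed version. The `default_whisper_cache` and `predownload` names are hypothetical.

```python
import os
from pathlib import Path


def default_whisper_cache() -> Path:
    """Assumed default folder where openai-whisper stores downloaded models."""
    base = os.getenv("XDG_CACHE_HOME", os.path.join(os.path.expanduser("~"), ".cache"))
    return Path(base) / "whisper"


def predownload(model_name: str = "base") -> None:
    """Load a model once while online so later runs can work offline."""
    import whisper  # openai-whisper; imported lazily so this file loads without it

    whisper.load_model(model_name)  # downloads the checkpoint on first use
    print(f"Model {model_name!r} should now be cached under {default_whisper_cache()}")
```

Calling `predownload("medium")` once while connected should let later transcriptions with that model run offline.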


- When launched, the app will also open a terminal that shows some additional information.

- Select the folder containing the audio or video files you want to transcribe by clicking the "Browse" button next to the "Folder" label. This will open a file dialog where you can navigate to the desired folder. Remember, you won't be choosing individual files but whole folders!

- Enter the desired language for the transcription in the "Language" field. You can either select a language or leave it blank to enable automatic language detection.

- Choose the Whisper model to use for the transcription from the dropdown list next to the "Model" label.

- Enable the verbose mode by checking the "Verbose" checkbox if you want to receive detailed information during the transcription process.

- Click the "Transcribe" button to start the transcription. The button will be disabled during the process to prevent multiple transcriptions at once.

- Monitor the progress of the transcription with the progress bar.

- Once the transcription is completed, a message box will appear displaying the transcribed text. Click "OK" to close the message box.

- You can run the application again or quit the application at any time by clicking the "Quit" button.

Don't want fancy EXEs or GUIs? Use the transcription function as is. See the example for an implementation in a Jupyter Notebook.
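For a rough idea of what scripted use looks like, here is a minimal sketch built on openai-whisper's `load_model` and `model.transcribe` calls. It is not the repository's own function: `transcribe_folder`, `MEDIA_EXTENSIONS`, and the `model` parameter are hypothetical. Passing `language=None` lets Whisper detect the language, and `verbose` is forwarded to `transcribe` to control console output.

```python
from pathlib import Path
from typing import Dict, Optional

# Hypothetical allow-list of extensions; adjust to your files.
MEDIA_EXTENSIONS = {".mp3", ".m4a", ".wav", ".flac", ".ogg", ".mp4", ".mkv", ".mov", ".webm"}


def transcribe_folder(
    folder: str,
    model_name: str = "base",
    language: Optional[str] = None,
    verbose: bool = False,
    model=None,
) -> Dict[str, str]:
    """Transcribe every eligible file in `folder` and return {file name: text}.

    `model` may be any object with a Whisper-style transcribe() method; when it
    is None, an openai-whisper model is loaded (downloaded on first use).
    """
    if model is None:
        import whisper  # openai-whisper, imported lazily

        model = whisper.load_model(model_name)
    texts: Dict[str, str] = {}
    for path in sorted(Path(folder).iterdir()):
        if not path.is_file() or path.suffix.lower() not in MEDIA_EXTENSIONS:
            continue  # skip subfolders and non-media files
        result = model.transcribe(str(path), language=language, verbose=verbose)
        texts[path.name] = result["text"].strip()
    return texts
```

For example, `transcribe_folder("my_recordings", model_name="small.en")` (folder name hypothetical) returns a dictionary you can print or save. Accepting a ready-made `model` also lets you test the loop without downloading anything.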

Footnotes

1. Advanced users can use pip install ffmpeg-python, but be ready to deal with some PATH issues, which I encountered in Windows 11.