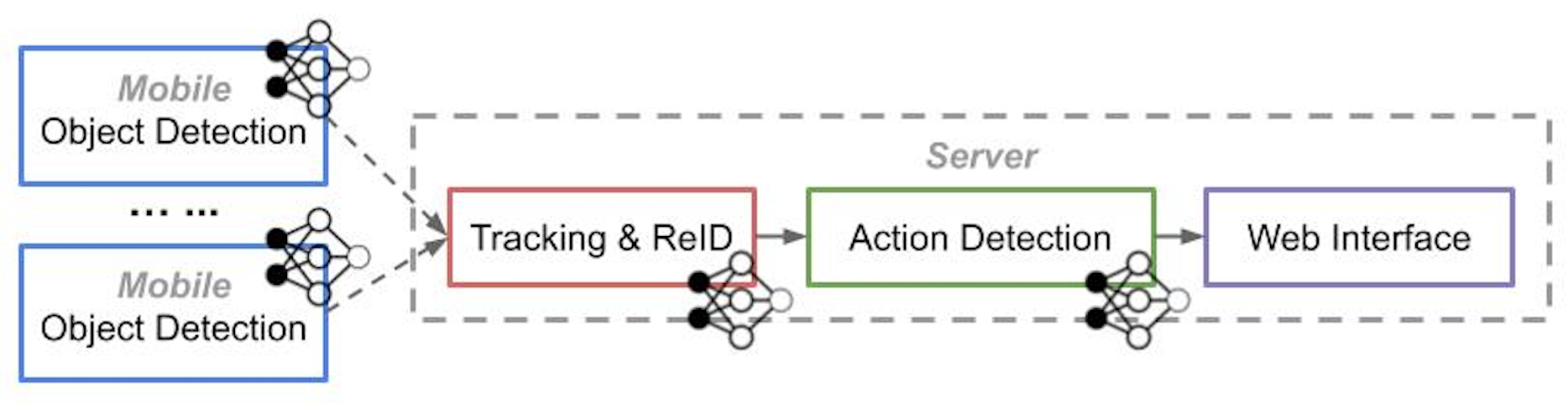

The image shows the workflow. You will run one of the scripts to turn the device into a mobile, tracker, action, or web node.

- `mobile/`: src code for object detection
- `tracker/`: src code for tracking and ReID
- `server/`: src code for action detection
- `web/`: src code for web interface
- `network/`: src code for I/O and RPC
- `config/`: config file for the system setup
- `main_xxx.py`: the main script to run the node
- `checkpoints/`: folder that contains the model files
- `data/`: a test video is here
- `misc/`: scripts for debugging/testing

- Python 3.X

- OpenCV 3.X

- TensorFlow 1.12+

- Install other Python packages using `pip install -r requirements.txt`

- Clone the repo with `git clone https://github.com/USC-NSL/Caesar.git`

- Prepare models (download the models to the `checkpoints/` folder)

- Object Detection (Option-1: MobileNet-V2, Option-2: MaskRCNN). The MaskRCNN model is slower but detects people better in drone and surveillance camera footage. It requires additional setup. Please check its repo for details and dependencies.

- ReID (DeepSort)

- Action Detection (ACAM). For ACAM-related setup, please look at its repo and make sure your environment can run its demo code.

- Update config files to your settings

All the files you need to change are in the `config/` folder.

- Modify `label_mapping.txt` to parse your object detector's output (key is the integer class id, value is the string object label). The default one is for SSD-MobileNetV2.
- Modify `camera_topology.txt` to indicate the camera connectivity (see the inline comments in the file for details).
- Modify `act_def.txt` to define your complex activity using the syntax (see the inline comments in the file for details).
- Modify `const_xxx.py` to specify the model path, threshold, machine IP, etc., for this node (mobile, tracker, action, web). Read the inline comments for details.
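As a rough illustration of the id-to-label mapping described above, here is a minimal parsing sketch. The line format (`<class id>: <label>` with `#` comments), the function name `parse_label_mapping`, and the sample contents are all assumptions made for this example; check the inline comments in the real `label_mapping.txt` for the actual syntax.

```python
def parse_label_mapping(text):
    """Parse lines of the assumed form '<class id>: <label>' into a dict."""
    mapping = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        class_id, label = line.split(":", 1)
        mapping[int(class_id.strip())] = label.strip()
    return mapping


# Hypothetical file contents, not copied from the repo.
sample = """
# class id: object label
1: person
2: bicycle
3: car
"""

labels = parse_label_mapping(sample)
print(labels)
```

If your detector uses different class ids, only the file changes; the integer keys must match whatever ids the detector emits.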

- Run the node

- For a mobile node, run `python main_mobile.py` (needs a GPU). If you are using a mobile GPU like the Nvidia TX2, please set the board to its best-performance mode for maximum FPS. Here is a tutorial for TX2.
- On a server, run one of `python main_tracker.py`, `python main_act.py`, or `python main_web.py` (the tracker and act nodes need a GPU).

- About the runtime

- All nodes periodically ping their next hop to reconnect, so the order in which you start or stop the nodes does not matter.
- Each node saves its logs to a `debug.log` text file in the home dir.
- To stop a node, press Ctrl-C. Some nodes may need a second Ctrl-C to exit fully.
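The periodic ping-and-reconnect behavior can be pictured with the sketch below. It is not the repo's networking code: `keep_connected`, its parameters, and the fake next hop are hypothetical names used only to show why start order does not matter when each node keeps retrying.

```python
import time


def keep_connected(connect, is_alive, interval_s=1.0, max_rounds=None, sleep=time.sleep):
    """Check the next hop every interval_s seconds and reconnect when it is down.

    connect() returns a connection object or raises OSError.
    is_alive(conn) returns True while the connection is usable.
    Returns the number of successful (re)connections, for illustration.
    """
    conn = None
    connects = 0
    rounds = 0
    while max_rounds is None or rounds < max_rounds:
        rounds += 1
        if conn is None or not is_alive(conn):
            try:
                conn = connect()
                connects += 1
            except OSError:
                conn = None  # next hop not up yet; retry on the next round
        sleep(interval_s)
    return connects


# Demo with a fake next hop that is down for the first two attempts.
attempts = {"n": 0}


def fake_connect():
    attempts["n"] += 1
    if attempts["n"] <= 2:
        raise ConnectionRefusedError("next hop not started yet")
    return object()


n_connects = keep_connected(fake_connect, lambda conn: True, interval_s=0.0, max_rounds=5)
print(n_connects, attempts["n"])
```

In the demo the upstream node starts first and simply retries until the downstream node appears, which mirrors the behavior described above.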

You can add more DNN-generated single-tube actions; check the FAQ for how to do it.

- Cross-tube actions: `close`, `near`, `far`, `approach`, `leave`, `cross`
- Single-tube actions: `start`, `end`, `move`, `stop`, `use_phone`, `carry`, `use_computer`, `give`, `talk`, `sit`, `with_bike`, `with_bag`
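To give a feel for what a cross-tube action relates, the sketch below labels a pair of tubes from the distance between their box centers. This is an illustrative guess, not Caesar's actual definition: the tube representation (a list of `(x, y)` centers per frame), the function names, and every threshold are assumptions.

```python
import math


def center_distance(a, b):
    """Euclidean distance between two (x, y) box centers."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def spatial_relation(a, b, close_thres=50.0, near_thres=200.0):
    """Label one frame as 'close', 'near', or 'far' (pixel thresholds are made up)."""
    d = center_distance(a, b)
    if d < close_thres:
        return "close"
    if d < near_thres:
        return "near"
    return "far"


def motion_relation(tube_a, tube_b, min_change=10.0):
    """Label a frame window as 'approach' or 'leave' from the change in distance."""
    start = center_distance(tube_a[0], tube_b[0])
    end = center_distance(tube_a[-1], tube_b[-1])
    if start - end > min_change:
        return "approach"
    if end - start > min_change:
        return "leave"
    return None  # distance roughly unchanged over the window


# Hypothetical tubes: A walks toward a stationary B.
tube_a = [(0, 0), (40, 0), (80, 0)]
tube_b = [(300, 0), (300, 0), (300, 0)]
print(spatial_relation(tube_a[0], tube_b[0]))
print(motion_relation(tube_a, tube_b))
```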

Instead of running all the nodes online, you may want to run just part of the workflow and check the intermediate results (like detection and tracking). To do so, follow these steps:

- First, turn on `SAVE_DATA` in `const_xxx.py` so a node saves its results to an npy file under `res/[main_node_name]`.
- Then, you can run `main_gt.py`. This script acts as a node that reads the raw videos and the npy data. It can render the data on frames (e.g. detections, track ids, actions) so you can see them without running the web server. It also uploads the data to the next running node. Parameters for `main_gt.py` can be changed in `config/const_gt.py`.

Example:

- Run only `main_mobile.py` on several videos and get the data saved. Then configure the npy data source for `main_gt.py` to be the mobile node's output (specify this in its config file `config/const_gt.py`). You should also set the tracker node's IP as the server address for `main_gt.py`.
- Then, run `main_tracker.py` and `main_gt.py`. Now `main_gt.py` displays the mobile node's detection results and also uploads the data to the tracker node for tracking.
- When finished, you can see the tracker's npy file in its own result folder. Set this file as the source data path in `main_gt.py` and change its server address to that of `main_act.py`.
- Now you can run `main_gt.py` again, this time as a tracker node, and use it to feed `main_act.py` for action results. You can repeat the process to debug `main_web.py` in the last step.

Q: Object detection on Nvidia TX2 is slow, how to make it faster?

- A: Instead of using the default MobileNet model, you should use its optimized TensorRT version for TX2. Nvidia has a toolchain to squeeze the model so it runs much faster. Here is the tutorial for generating the TensorRT model. You can download ours here. The usage of the TensorRT model is the same as that of the regular model. With TensorRT, we increased the detection FPS from 4 to 10.5.

Q: I want to enable more DNN-detected single-tube actions, how to do that?

- A: Go to `config/const_action.py` and add the action name and its detection threshold to `NN_ACT_THRES`. Make sure that the action name is already in `ACTION_STRINGS`.
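The check in the answer above can be automated with a small sanity test. The sketch assumes `NN_ACT_THRES` is a dict from action name to threshold and `ACTION_STRINGS` is a list of names; both shapes, the sample values, and the helper `unknown_actions` are assumptions, so compare against the real definitions in `config/const_action.py`.

```python
# Hypothetical excerpts; the real definitions live in config/const_action.py.
ACTION_STRINGS = ["use_phone", "carry", "talk", "sit"]
NN_ACT_THRES = {
    "use_phone": 0.3,
    "talk": 0.5,  # newly enabled action with an illustrative threshold
}


def unknown_actions(thresholds, known_actions):
    """Return the threshold keys that are missing from the known action list."""
    known = set(known_actions)
    return sorted(name for name in thresholds if name not in known)


missing = unknown_actions(NN_ACT_THRES, ACTION_STRINGS)
if missing:
    raise ValueError("actions not in ACTION_STRINGS: %s" % ", ".join(missing))
print("all thresholded actions are known")
```

Running a check like this after editing the config catches a misspelled action name before the action node starts.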

Q: How to run two mobile nodes on the same machine?

- A: Duplicate `config/const_mobile.py` with a different name (e.g. `const_mobile2.py`) and edit it to match the settings for the second camera. Then make a copy of `main_mobile.py` named `main_mobile2.py`. In the main file line ??, change `from config.const_mobile` to `from config.const_mobile2`.

Q: The end-to-end delay is long, how to optimize?

- A: (1) Disable the web node and show the visualized result directly at the act node. We will add this function later. (2) Start more action DNN instances. We will add this as an option so you can configure it easily.

If you have any other problems, please post your questions under "Issues".