- Competition Name: Generative-AI Navigation Information Competition for UAV Reconnaissance in Natural Environments I: Image Data Generation
- Team Name: TEAM_5101
- Final Competition Results:

  | Testing Dataset | FID Score | Rank |
  |---|---|---|
  | Private | 89.09644 | 4 |
  | Public | 88.878136 | 6 |
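FID (Fréchet Inception Distance) fits a Gaussian to feature embeddings of the real and the generated images and reports ||μ_r − μ_g||² + Tr(Σ_r + Σ_g − 2(Σ_r Σ_g)^{1/2}); lower is better. The sketch below computes that distance from two feature matrices using only NumPy. It assumes the features were already extracted (standard FID uses 2048-d Inception-v3 pool features). The organizers' exact extractor and implementation are not stated here, so this sketch is not guaranteed to reproduce the scores above.

```python
import numpy as np


def _sqrtm_psd(mat):
    """Square root of a symmetric positive semi-definite matrix via eigh."""
    vals, vecs = np.linalg.eigh(mat)
    vals = np.clip(vals, 0.0, None)  # clamp tiny negative round-off
    return (vecs * np.sqrt(vals)) @ vecs.T


def frechet_distance(mu1, sigma1, mu2, sigma2):
    """||mu1 - mu2||^2 + Tr(S1 + S2 - 2 (S1 S2)^{1/2}) for two Gaussians."""
    mu1, mu2 = np.atleast_1d(mu1), np.atleast_1d(mu2)
    sigma1, sigma2 = np.atleast_2d(sigma1), np.atleast_2d(sigma2)
    diff = mu1 - mu2
    # Tr((S1 S2)^{1/2}) equals Tr((S1^{1/2} S2 S1^{1/2})^{1/2}); the inner
    # matrix is symmetric PSD, so its eigenvalues are real and non-negative.
    s1_half = _sqrtm_psd(sigma1)
    inner = s1_half @ sigma2 @ s1_half
    inner = (inner + inner.T) / 2.0  # re-symmetrize against round-off
    tr_covmean = np.sqrt(np.clip(np.linalg.eigvalsh(inner), 0.0, None)).sum()
    return float(diff @ diff + np.trace(sigma1) + np.trace(sigma2) - 2.0 * tr_covmean)


def fid_from_features(feats_real, feats_fake):
    """FID between two (N, D) feature matrices extracted beforehand."""
    mu_r, mu_f = feats_real.mean(axis=0), feats_fake.mean(axis=0)
    # atleast_2d because np.cov returns a 0-d array when D == 1
    sig_r = np.atleast_2d(np.cov(feats_real, rowvar=False))
    sig_f = np.atleast_2d(np.cov(feats_fake, rowvar=False))
    return frechet_distance(mu_r, sig_r, mu_f, sig_f)
```

As a hand check, `frechet_distance(0.0, 1.0, 1.0, 4.0)` gives 2.0: the mean term contributes 1 and the covariance term 1 + 4 − 2·2 = 1.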

Key Technologies: PITI, *Pretraining is All You Need for Image-to-Image Translation*

Tengfei Wang, Ting Zhang, Bo Zhang, Hao Ouyang, Dong Chen, Qifeng Chen, Fang Wen (2022)

paper | project website | video | online demo

We present a simple and universal framework that brings the power of pretraining to various image-to-image translation tasks.

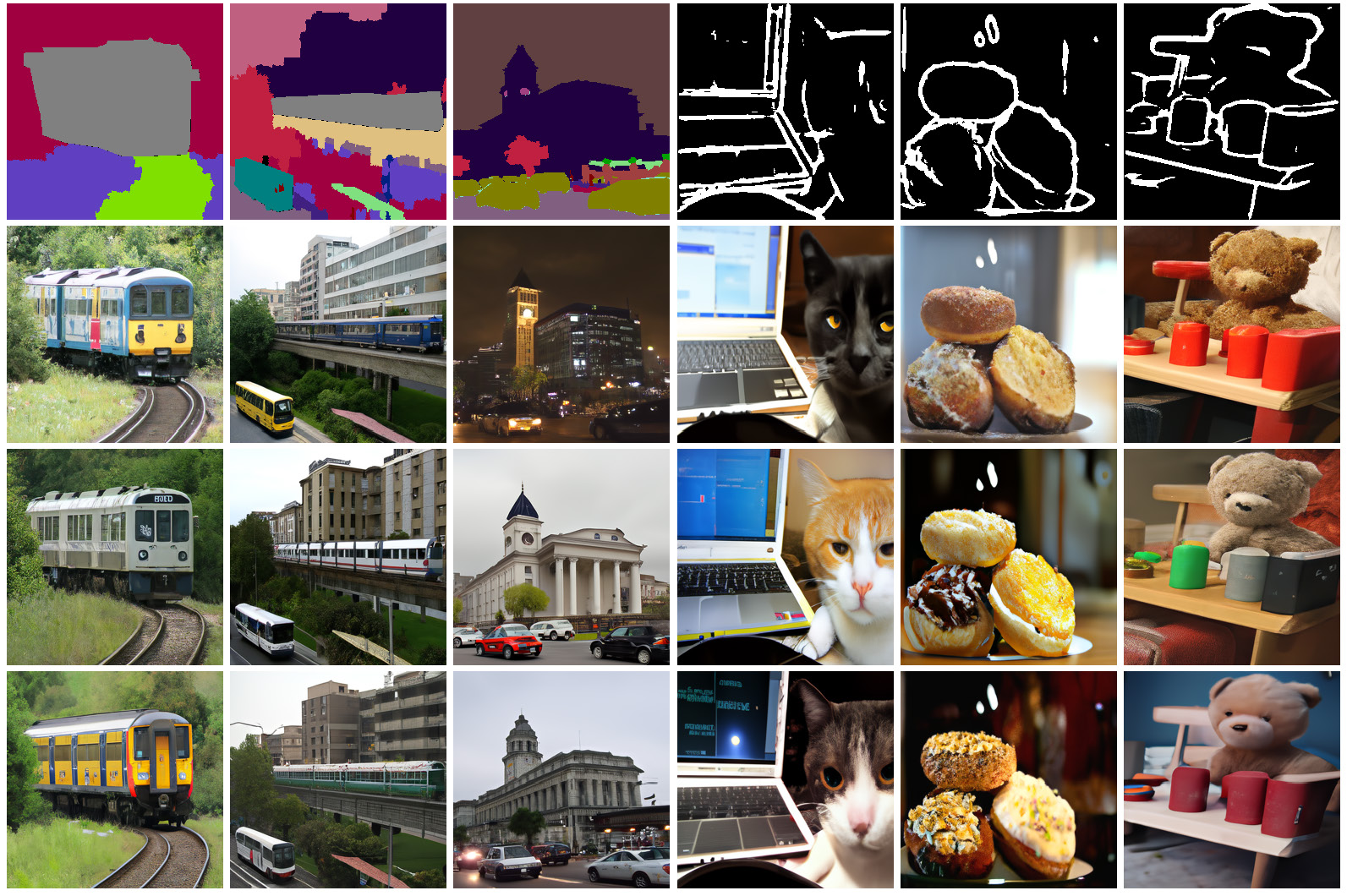

Diverse samples synthesized by our approach.

Set up the environment:

```bash
git clone https://github.com/PITI-Synthesis/PITI.git
cd PITI
sudo apt-get update
sudo apt-get install openmpi-bin libopenmpi-dev -y
conda env create -f environment.yml
conda activate PITI
conda install -c conda-forge openmpi -y
pip install mpi4py==3.0.3 dlib==19.22.1
pip install gradio
```
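After running the install commands above, one way to confirm that the pinned versions actually took effect is to query the installed distribution metadata. This is a hypothetical helper, not part of the repository; the package list simply mirrors the pip commands above (gradio is installed without a pin, so only its presence is checked).

```python
"""Hypothetical post-install check for the PITI environment (not part of the repo)."""
from importlib import metadata

# Pins copied from the pip commands above; None means "any version".
REQUIRED = {"mpi4py": "3.0.3", "dlib": "19.22.1", "gradio": None}


def check_env(required=REQUIRED):
    """Return a list of human-readable problems; an empty list means all pins match."""
    problems = []
    for name, wanted in required.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            problems.append(f"{name}: not installed")
            continue
        if wanted is not None and installed != wanted:
            problems.append(f"{name}: found {installed}, expected {wanted}")
    return problems


if __name__ == "__main__":
    issues = check_env()
    print("environment OK" if not issues else "\n".join(issues))
```

Run it inside the activated `PITI` conda environment; checking metadata rather than importing the modules avoids triggering MPI initialization just to read a version string.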

Please download the pretrained models for both the Base model and the Upsample model, and put them in ./ckpt.

| Model | Task | Dataset |
|---|---|---|
| Base-64x64 | Mask-to-Image | Trained on COCO. |
| Upsample-64-256 | Mask-to-Image | Trained on COCO. |
| Base-64x64 | Sketch-to-Image | Trained on COCO. |
| Upsample-64-256 | Sketch-to-Image | Trained on COCO. |

If you cannot access these links, you can alternatively find our pretrained models here.
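Judging by the model names in the table, each task uses a two-stage cascade: the Base model produces a 64×64 image and the Upsample model takes it to 256×256. The sketch below only illustrates that data flow and the shapes involved. `base_fn`, `upsample_fn`, `toy_base` and `toy_upsample` are hypothetical placeholders rather than PITI's API, and the assumption that the upsampler also receives the mask at output resolution is ours. Masks are resized with nearest-neighbour sampling so that class ids are never blended.

```python
import numpy as np


def resize_nearest(arr, size):
    """Nearest-neighbour resize of an (H, W, ...) array to (size, size, ...).
    Nearest-neighbour keeps mask label values intact (no interpolated class ids)."""
    h, w = arr.shape[:2]
    rows = (np.arange(size) * h) // size
    cols = (np.arange(size) * w) // size
    return arr[rows][:, cols]


def run_cascade(mask, base_fn, upsample_fn, base_res=64, out_res=256):
    """Hypothetical two-stage sampling: base model at 64x64, then a 64->256 upsampler."""
    low = base_fn(resize_nearest(mask, base_res))
    assert low.shape[:2] == (base_res, base_res)
    high = upsample_fn(low, resize_nearest(mask, out_res))
    assert high.shape[:2] == (out_res, out_res)
    return low, high


def toy_base(mask64):
    # Placeholder "generator": map each class id to a grey level, 3 channels.
    grey = mask64.astype(np.float32) / max(int(mask64.max()), 1)
    return np.repeat(grey[..., None], 3, axis=-1)


def toy_upsample(low, mask256):
    # Placeholder "upsampler": plain nearest-neighbour enlargement of the 64x64 image.
    return resize_nearest(low, mask256.shape[0])


if __name__ == "__main__":
    mask = np.random.default_rng(0).integers(0, 5, size=(512, 512))
    low, high = run_cascade(mask, toy_base, toy_upsample)
    print(low.shape, high.shape)
```

With the real checkpoints, the two placeholder callables would be replaced by sampling from the Base and Upsample models loaded from ./ckpt.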

Download the following pretrained models into ./ckpt/.

| Model | Task | Dataset |
|---|---|---|
| Base-64x64 | Mask-to-Image | Trained on COCO. |
| Upsample-64-256 | Mask-to-Image | Trained on COCO. |

Run the notebook preprocess.ipynb to preprocess the training dataset.
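The notebook's steps are not reproduced here. One step that preparing a paired image-to-image training set typically involves is matching each image with its mask (or sketch) and flagging files that have no partner. The sketch below does that by file stem; the folder layout and extensions are hypothetical assumptions, and this is not the code in preprocess.ipynb.

```python
from pathlib import Path

# Hypothetical set of extensions accepted for both images and label maps.
IMAGE_EXTS = {".jpg", ".jpeg", ".png"}


def pair_by_stem(image_dir, label_dir):
    """Match images to label maps that share a file stem (e.g. a.jpg <-> a.png).

    Returns (pairs, unmatched_stems): sorted (image_path, label_path) tuples and
    the sorted stems that appear in only one of the two folders.
    """
    images = {p.stem: p for p in Path(image_dir).iterdir() if p.suffix.lower() in IMAGE_EXTS}
    labels = {p.stem: p for p in Path(label_dir).iterdir() if p.suffix.lower() in IMAGE_EXTS}
    common = sorted(images.keys() & labels.keys())
    pairs = [(images[s], labels[s]) for s in common]
    unmatched = sorted(images.keys() ^ labels.keys())
    return pairs, unmatched
```

Reporting unmatched stems, rather than silently dropping them, makes it easier to spot missing or misnamed files before a long fine-tuning run.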

Taking mask-to-image synthesis as an example (sketch-to-image works the same way), modify mask_finetune_base.sh and run:

```bash
bash mask_finetune_base.sh
```

Run the notebook generate-example.ipynb to generate output images.

If you find this work useful for your research, please cite:

```bibtex
@misc{
  title  = {Utilizing PITI for Generating Autonomous UAV Images in Natural Environments},
  author = {Zhe-Yu Guo},
  url    = {https://github.com/Tianming8585/PITI},
  year   = {2024}
}
```

Thanks to the PITI authors for sharing their code and pretrained models.

```bibtex
@article{wang2022pretraining,
  title   = {Pretraining is All You Need for Image-to-Image Translation},
  author  = {Wang, Tengfei and Zhang, Ting and Zhang, Bo and Ouyang, Hao and Chen, Dong and Chen, Qifeng and Wen, Fang},
  journal = {arXiv:2205.12952},
  year    = {2022},
}

@misc{
  title  = {Apply DP-GAN on Generative-AI Navigation Information Competition for UAV Reconnaissance in Natural Environments},
  author = {Wei-Chun Tsao},
  url    = {https://github.com/Tsao666/DP_GAN},
  year   = {2024}
}
```