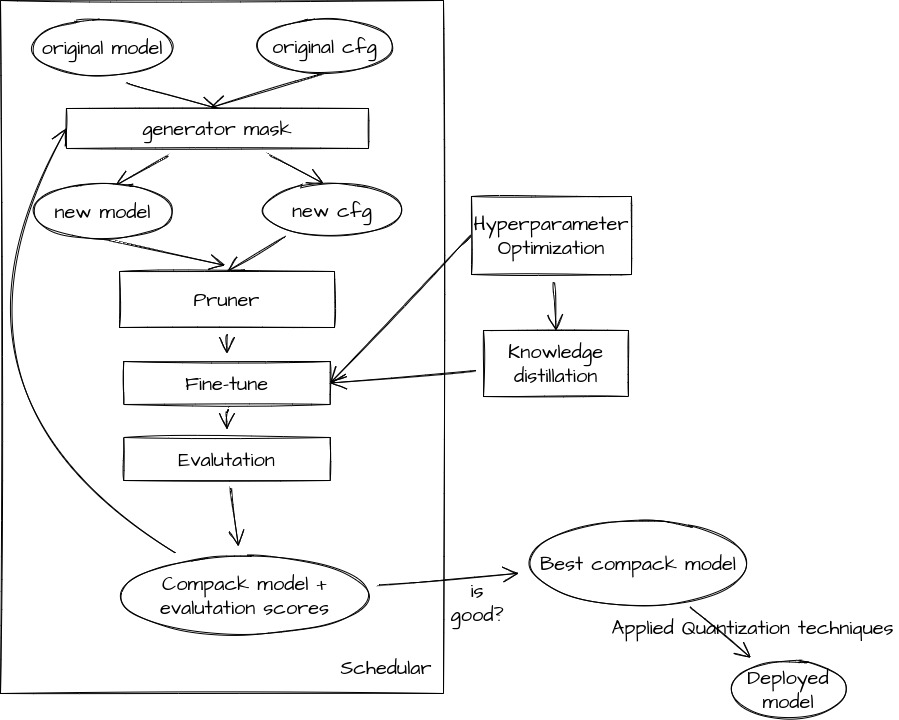

A PyTorch optimization library for benchmarking and extending work in knowledge distillation, pruning, and quantization.

- AGP Pruner (L-norm, APoZ)

- Slim Pruner (ChannelPruner, FilterPruner, GroupPruner, ...)

- FPGM Pruner

- ADMM Pruner

- Sensitivity Pruner

- Supports PTQ, QAT, DoReFa quantization, etc.
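As an illustration of the AGP (Automated Gradual Pruning) idea listed above, here is a minimal, framework-free sketch of the cubic sparsity schedule combined with magnitude-based masking. The function names `agp_sparsity` and `magnitude_mask` and their parameters are hypothetical and are not taken from this library's API; the library's own pruners may differ in detail.

```python
def agp_sparsity(step, initial_sparsity, final_sparsity, start_step, end_step):
    """Target sparsity at `step` under the cubic AGP schedule (hypothetical helper)."""
    if step <= start_step:
        return initial_sparsity
    if step >= end_step:
        return final_sparsity
    # Fraction of the pruning window that has elapsed, in (0, 1).
    progress = (step - start_step) / (end_step - start_step)
    # Sparsity rises quickly at first, then flattens as it nears the final value.
    return final_sparsity + (initial_sparsity - final_sparsity) * (1.0 - progress) ** 3


def magnitude_mask(weights, sparsity):
    """Return a 0/1 mask that zeroes the smallest-magnitude fraction of `weights`."""
    num_to_prune = int(round(sparsity * len(weights)))
    # Indices sorted by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:num_to_prune])
    return [0 if i in pruned else 1 for i in range(len(weights))]


if __name__ == "__main__":
    weights = [0.5, -0.1, 0.3, -0.9]
    for step in (0, 50, 100):
        target = agp_sparsity(step, 0.0, 0.5, start_step=0, end_step=100)
        print(step, round(target, 4), magnitude_mask(weights, target))
```

In a real training loop the mask would be recomputed every few steps from the current weights and applied to the layer's tensor, so the network can recover accuracy between pruning steps.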

Easy training with student and teacher models.

- Example of applying KD to train a pruned model.

- ReviewKD (Distilling Knowledge via Knowledge Review).
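To make the student/teacher setup concrete, the following is a minimal sketch of the classic temperature-scaled distillation loss for a single example, written in plain Python so it runs without any dependencies. The names `softmax` and `kd_loss`, and the default values of `temperature` and `alpha`, are assumptions for illustration and do not reflect this library's actual interface. ReviewKD uses a different, feature-based objective that is not shown here.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student_logits, teacher_logits, label, temperature=4.0, alpha=0.5):
    """Weighted sum of a soft (teacher) term and a hard (ground-truth) term."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    # Soft term: KL(teacher || student) at temperature T, scaled by T^2
    # so its gradient magnitude stays comparable to the hard term.
    soft = sum(
        pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student) if pt > 0
    ) * temperature ** 2
    # Hard term: cross-entropy of the student against the true label.
    hard = -math.log(softmax(student_logits)[label])
    return alpha * soft + (1.0 - alpha) * hard


if __name__ == "__main__":
    print(kd_loss([1.0, 0.5, -0.2], [3.0, 0.1, -1.0], label=0))
```

When training a pruned model with KD, the unpruned network would typically play the teacher and the pruned network the student, with `alpha` balancing how much the student follows the teacher versus the labels.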

`pip install -r requirments.txt`

Documentation: coming soon.


Get information: Link

- Add pruning methods for Transformer and RNN models.

- Add more quantization methods.

- Add more loss functions for knowledge distillation.

- Add the Spatial SVD method: a tensor decomposition technique that splits a large layer into two smaller ones.

- Add visualization in TensorBoard.