IP-Adapter: Text Compatible Image Prompt Adapter for Text-to-Image Diffusion Models

Introduction

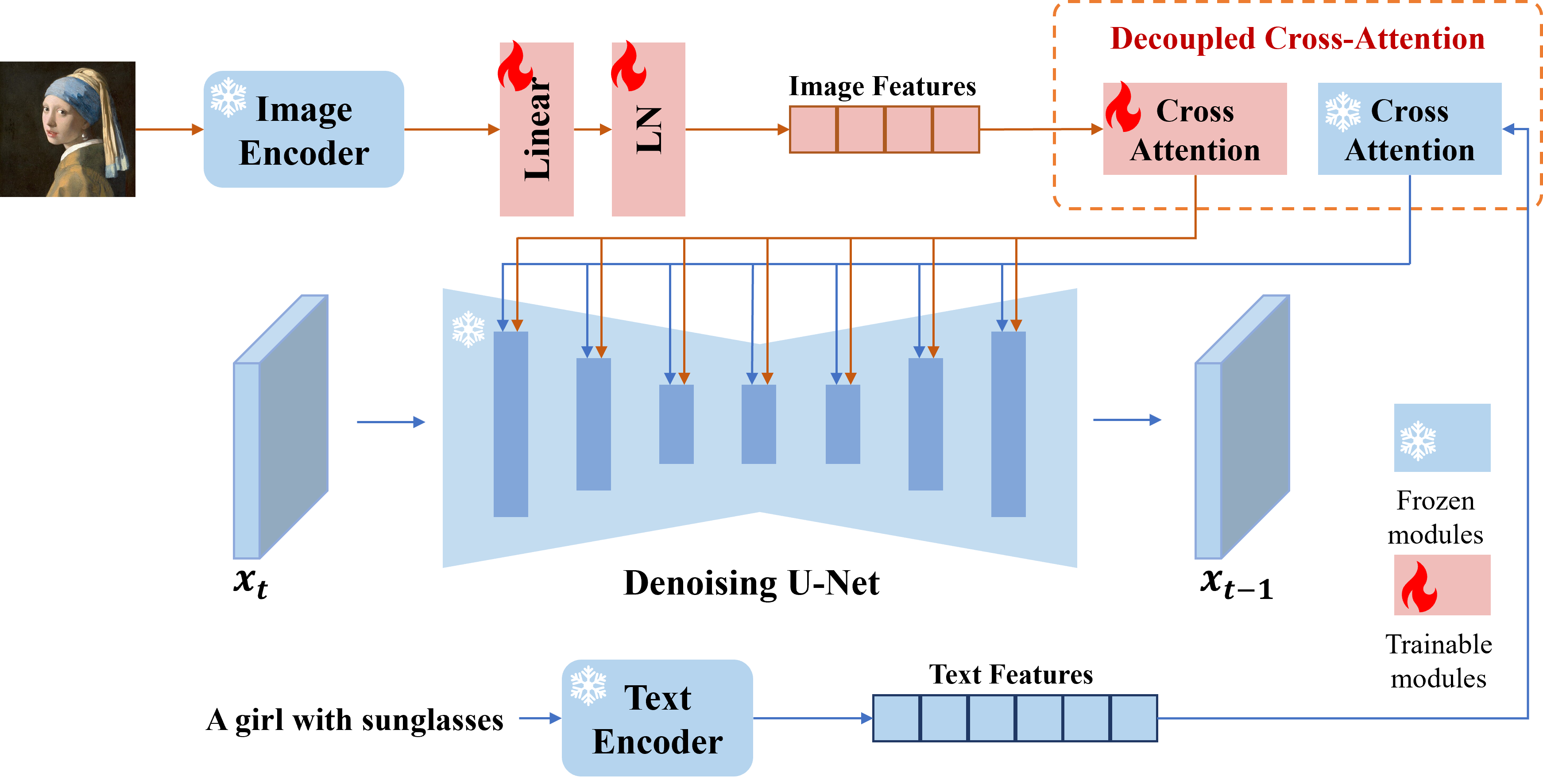

We present IP-Adapter, an effective and lightweight adapter that adds image prompt capability to pretrained text-to-image diffusion models. An IP-Adapter with only 22M parameters can achieve performance comparable to, or even better than, a fine-tuned image prompt model. IP-Adapter generalizes not only to other custom models fine-tuned from the same base model, but also to controllable generation with existing controllable tools. Moreover, the image prompt works well together with the text prompt to accomplish multimodal image generation.

Release

- [2023/8/18] 🔥 Add code and models for SDXL 1.0. Demo is here.

- [2023/8/16] 🔥 We release the code and models.

Dependencies

- diffusers >= 0.19.3
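A minimal sketch for checking the version requirement above before running the demos. The helper names `parse_version` and `diffusers_is_recent_enough` are hypothetical and not part of this repository; the parsing is deliberately simple and ignores pre-release suffixes.

```python
from importlib import metadata

MIN_DIFFUSERS = (0, 19, 3)  # minimum version listed under Dependencies


def parse_version(text: str) -> tuple:
    """Turn '0.19.3' or '0.21.0.dev0' into a tuple of its leading integer parts."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break  # stop at the first non-numeric component, e.g. 'dev0'
        parts.append(int(digits))
    return tuple(parts)


def diffusers_is_recent_enough() -> bool:
    """Return True if an installed diffusers meets the minimum version."""
    try:
        installed = metadata.version("diffusers")
    except metadata.PackageNotFoundError:
        return False  # diffusers is not installed at all
    return parse_version(installed) >= MIN_DIFFUSERS
```

Calling `diffusers_is_recent_enough()` at the top of a script gives an early, readable failure instead of an import error later on.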

Download Models

You can download models from here. To run the demo, you should also download the following models:

- runwayml/stable-diffusion-v1-5

- stabilityai/sd-vae-ft-mse

- SG161222/Realistic_Vision_V4.0_noVAE

- ControlNet models
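The three named repositories above can be fetched in one go. This is a sketch that assumes the identifiers are Hugging Face Hub repository IDs and that the `huggingface_hub` package is installed; the `models/` folder layout and both helper names are hypothetical. The ControlNet entry is left out because the list does not say which checkpoints are needed.

```python
# Repositories named in the list above (ControlNet models are not specified there).
DEMO_REPOS = [
    "runwayml/stable-diffusion-v1-5",
    "stabilityai/sd-vae-ft-mse",
    "SG161222/Realistic_Vision_V4.0_noVAE",
]


def local_dir_for(repo_id: str, root: str = "models") -> str:
    """Map 'owner/name' to a local folder such as 'models/name' (assumed layout)."""
    return f"{root}/{repo_id.split('/')[-1]}"


def download_demo_models(root: str = "models") -> list:
    """Download every repo in DEMO_REPOS and return the local paths."""
    # Imported lazily so this module loads even without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    return [
        snapshot_download(repo_id=repo, local_dir=local_dir_for(repo, root))
        for repo in DEMO_REPOS
    ]
```

Running `download_demo_models()` once should populate `models/` with one folder per repository; the demos may expect different paths, so adjust `root` accordingly.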

How to Use

- ip_adapter_demo: image variations, image-to-image, and inpainting with image prompt.

- ip_adapter_controlnet_demo: structural generation with image prompt.

- ip_adapter_multimodal_prompts_demo: generation with multimodal prompts.

Best Practice

- If you only use the image prompt, you can set `scale=1.0` and `text_prompt=""` (or a generic text prompt such as "best quality"; you can also use any negative text prompt). Lowering `scale` produces more diverse images, but they may be less consistent with the image prompt.

- For multimodal prompts, adjust `scale` to get the best results. In most cases, `scale=0.5` gives good results. For SD 1.5, we recommend using community models to generate good images.
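The guidance above can be captured as starting values. This is only an illustrative sketch: `PromptSettings` and `suggested_settings` are hypothetical names, not part of the IP-Adapter API, and the returned values are starting points to tune rather than fixed rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSettings:
    scale: float      # weight given to the image prompt
    text_prompt: str  # accompanying text prompt, may be empty


def suggested_settings(image_only: bool, text_prompt: str = "") -> PromptSettings:
    """Pick starting values following the best-practice notes above."""
    if image_only:
        # Image prompt only: full weight; text may be "" or generic, e.g. "best quality".
        return PromptSettings(scale=1.0, text_prompt=text_prompt)
    # Multimodal prompt: 0.5 is the suggested starting point; adjust from there.
    return PromptSettings(scale=0.5, text_prompt=text_prompt)
```

For example, `suggested_settings(image_only=False, text_prompt="wearing a hat")` yields a scale of 0.5, which can then be raised for closer adherence to the image prompt or lowered for more diversity.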

Citation

If you find IP-Adapter useful for your research and applications, please cite using this BibTeX:

@article{ye2023ip-adapter,
  title={IP-Adapter: Text Compatible Image Prompt Adapter for Text-to-Image Diffusion Models},
  author={Ye, Hu and Zhang, Jun and Liu, Sibo and Han, Xiao and Yang, Wei},
  journal={arXiv preprint arXiv:2308.06721},
  year={2023}
}