End-to-end Video Text Spotting with Transformer

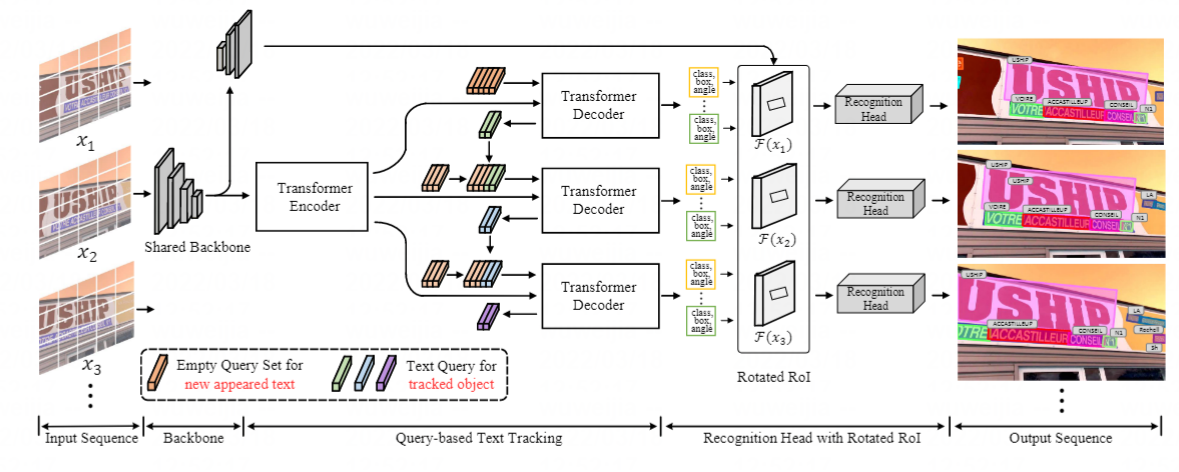

Video text spotting (VTS) is the task of simultaneously detecting, tracking, and recognizing text instances in video. Recent methods typically build sophisticated pipelines that rely on Intersection over Union (IoU) or appearance similarity between adjacent frames. In this paper, rooted in Transformer sequence modeling, we propose a novel video text DEtection, Tracking, and Recognition framework (TransDETR), which treats the VTS task as a direct long-sequence temporal modeling problem.

Link to our new benchmark BOVText: A Large-Scale, Bilingual Open World Dataset for Video Text Spotting

- (29/05/2022) Updated the unmatched pretrained and fine-tuned weights.
- (12/05/2022) Rotated_ROIAlign has been refined.
- (08/04/2022) Refactored the code.
- (1/1/2022) The complete code has been released.

| Methods | MOTA | MOTP | IDF1 | Mostly Matched | Partially Matched | Mostly Lost |
|---|---|---|---|---|---|---|
| TransDETR | 47.5 | 74.2 | 65.5 | 832 | 484 | 600 |

Models are also available in Google Drive.

| Methods | MOTA | MOTP | IDF1 | Mostly Matched | Partially Matched | Mostly Lost |
|---|---|---|---|---|---|---|
| TransDETR | 58.4 | 75.2 | 70.4 | 614 | 326 | 427 |
| TransDETR(aug) | 60.9 | 74.6 | 72.8 | 644 | 323 | 400 |

Models are also available in Google Drive.
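For readers unfamiliar with the tracking metrics in the tables above, MOTA follows the standard CLEAR MOT definition: one minus the ratio of misses, false positives, and identity switches to the total number of ground-truth objects. The sketch below only illustrates that definition. The helper name `mota` and the counts are made up for this example and are not taken from the TransDETR evaluation code or results.

```python
def mota(false_negatives: int, false_positives: int, id_switches: int, num_gt: int) -> float:
    """CLEAR MOT accuracy: 1 - (FN + FP + IDSW) / GT, with counts summed over all frames."""
    if num_gt <= 0:
        raise ValueError("num_gt must be positive")
    return 1.0 - (false_negatives + false_positives + id_switches) / num_gt


# Made-up counts, for illustration only.
score = mota(false_negatives=250, false_positives=120, id_switches=30, num_gt=1000)
print(f"MOTA = {100 * score:.1f}")
```

Because errors are divided by the ground-truth count rather than by the number of predictions, MOTA can become negative when a tracker makes more errors than there are ground-truth objects.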

- Training time is measured on 8 NVIDIA V100 GPUs with a batch size of 16.

- We use the models pre-trained on COCOTextV2.

- We do not release the recognition code due to the company's regulations.

The codebases are built on top of Deformable DETR and MOTR.

- Linux, CUDA>=9.2, GCC>=5.4

- Python>=3.7

We recommend using Anaconda to create a conda environment:

conda create -n TransDETR python=3.7 pip

Then, activate the environment:

conda activate TransDETR

- PyTorch>=1.5.1, torchvision>=0.6.1 (following instructions here)

For example, if your CUDA version is 9.2, you can install PyTorch and torchvision as follows:

conda install pytorch=1.5.1 torchvision=0.6.1 cudatoolkit=9.2 -c pytorch

- Other requirements

pip install -r requirements.txt

- Build MultiScaleDeformableAttention and Rotated ROIAlign

cd ./models/ops

sh ./make.sh

cd ../..

cd ./models/Rotated_ROIAlign

python setup.py build_ext --inplace
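After installation, a quick script can confirm that the environment meets the minimum versions listed above. This is a minimal sketch, not part of the repository: `version_tuple`, `meets_minimum`, and `MINIMUMS` are hypothetical names, and the check only compares the first three numeric components of each version string.

```python
import importlib
import re
import sys


def version_tuple(version: str) -> tuple:
    """Turn '1.5.1' or '1.10.0+cu113' into a comparable tuple such as (1, 5, 1)."""
    public_part = version.split("+")[0]  # drop local build tags like '+cu113'
    return tuple(int(part) for part in re.findall(r"\d+", public_part)[:3])


def meets_minimum(installed: str, minimum: str) -> bool:
    """Compare versions numerically, so that '1.10.0' counts as newer than '1.5.1'."""
    return version_tuple(installed) >= version_tuple(minimum)


# Minimum versions as listed in the requirements above.
MINIMUMS = {"torch": "1.5.1", "torchvision": "0.6.1"}

if __name__ == "__main__":
    python_ok = sys.version_info >= (3, 7)
    print("python", sys.version.split()[0], "ok" if python_ok else "needs >= 3.7")
    for name, minimum in MINIMUMS.items():
        try:
            module = importlib.import_module(name)
        except ImportError:
            print(name, "not installed")
            continue
        ok = meets_minimum(module.__version__, minimum)
        print(name, module.__version__, "ok" if ok else f"needs >= {minimum}")
```

Versions are compared as integer tuples rather than strings because a plain string comparison would rank "1.10.0" below "1.5.1".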

- Please download the ICDAR2015 and COCOTextV2 datasets and organize them in the same layout as FairMOT, as follows:

.
├── COCOText
│   ├── images
│   └── labels_with_ids
└── ICDAR15
    ├── images
    │   └── track
    │       ├── train
    │       └── val
    └── labels
        └── track
            ├── train
            └── val
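Before generating labels or training, it can help to confirm that the dataset root matches the layout above. The following is a minimal sketch rather than a script shipped with the repository: `EXPECTED_DIRS` and `missing_dirs` are hypothetical names, and the nesting (`train` and `val` under `track`, under both `images` and `labels`) is an assumption read from the tree.

```python
import sys
from pathlib import Path

# Directories expected under the dataset root (assumed nesting, see the tree above).
EXPECTED_DIRS = [
    "COCOText/images",
    "COCOText/labels_with_ids",
    "ICDAR15/images/track/train",
    "ICDAR15/images/track/val",
    "ICDAR15/labels/track/train",
    "ICDAR15/labels/track/val",
]


def missing_dirs(root):
    """Return the expected sub-directories that do not exist under root."""
    root = Path(root)
    return [rel for rel in EXPECTED_DIRS if not (root / rel).is_dir()]


if __name__ == "__main__":
    # Usage: python check_layout.py /path/to/dataset/root
    dataset_root = sys.argv[1] if len(sys.argv) > 1 else "."
    missing = missing_dirs(dataset_root)
    if missing:
        print("Missing directories:")
        for rel in missing:
            print("  " + rel)
    else:
        print("Dataset layout looks complete.")
```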

- You can also use the following scripts to generate the txt label files:

cd tools/gen_labels

python3 gen_labels_COCOTextV2.py

python3 gen_labels_15.py

python3 gen_labels_YVT.py

cd ../../

You can download the COCOTextV2 pretrained weights from Pretrained TransDETR Google Drive, or train them yourself:

sh configs/r50_TransDETR_pretrain_COCOText.sh

Then train on ICDAR2015 with 8 GPUs as follows:

sh configs/r50_TransDETR_train.sh

You can download the pretrained model of TransDETR (the link is in the "Main Results" section), then run the following command to evaluate it on the ICDAR2015 dataset:

sh configs/r50_TransDETR_eval.sh

Evaluate on ICDAR13:

python tools/Evaluation_ICDAR13/evaluation.py

Evaluate on ICDAR15:

cd exps/e2e_TransVTS_r50_ICDAR15

zip -r preds.zip ./preds/*

Then submit preds.zip to the ICDAR2015 online evaluation.
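If the `zip` command is unavailable (for example on Windows), the packaging step above can be reproduced with Python's standard `zipfile` module. This is a minimal sketch under the assumption that predictions live in a `preds` folder inside the experiment directory, as in the commands above; `zip_predictions` is a hypothetical helper, not part of the repository.

```python
import zipfile
from pathlib import Path


def zip_predictions(exp_dir, archive_name="preds.zip"):
    """Pack every file under <exp_dir>/preds into <exp_dir>/<archive_name>."""
    exp_dir = Path(exp_dir)
    preds_dir = exp_dir / "preds"
    archive_path = exp_dir / archive_name
    with zipfile.ZipFile(archive_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(preds_dir.rglob("*")):
            if path.is_file():
                # Store entries as preds/<file>, relative to the experiment directory.
                archive.write(path, path.relative_to(exp_dir).as_posix())
    return archive_path


if __name__ == "__main__":
    # Experiment directory taken from the commands above.
    exp_dir = Path("exps/e2e_TransVTS_r50_ICDAR15")
    if (exp_dir / "preds").is_dir():
        print("Wrote", zip_predictions(exp_dir))
    else:
        print("No preds folder found in", exp_dir)
```

The archive is written next to the `preds` folder rather than inside it, so it never ends up containing itself.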

To visualize the results as a demo video, you can enable 'vis=True' in eval.py, like:

--show

then run the script:

python tools/vis.py

TransDETR is released under MIT License.

If you use TransDETR in your research or wish to refer to the baseline results published here, please use the following BibTeX entry:

@article{wu2022transdetr,
  title={End-to-End Video Text Spotting with Transformer},
  author={Weijia Wu and Chunhua Shen and Yuanqiang Cai and Debing Zhang and Ying Fu and Ping Luo and Hong Zhou},
  journal={arxiv},
  year={2022}
}