
OpenNext takes the Next.js build output and converts it into a package that can be deployed to any functions as a service platform.

OpenNext aims to support all Next.js 13 features. Some features are still a work in progress. If something does not work as expected, please open a new issue to let us know!

- API routes

- Dynamic routes

- Static site generation (SSG)

- Server-side rendering (SSR)

- Incremental static regeneration (ISR)

- Middleware

- Image optimization

- Navigate to your Next.js app

      cd my-next-app

- Build the app

      npx open-next@latest build

This will generate an .open-next directory with the following bundles:

    my-next-app/
      .open-next/
        assets/                      -> Static files to upload to an S3 Bucket
        server-function/             -> Handler code for server Lambda Function
        middleware-function/         -> Handler code for middleware Lambda@Edge Function
        image-optimization-function/ -> Handler code for image optimization Lambda Function

If your Next.js app does not use middleware, middleware-function will not be generated.

When calling open-next build, OpenNext builds the Next.js app using the @vercel/next package. It then transforms the build output into a format that can be deployed to AWS.

OpenNext imports the @vercel/next package to do the build. The package internally calls next build with the minimalMode flag. This flag disables running middleware in the server code. Instead, it bundles the middleware code separately. This allows us to deploy middleware to edge locations. That is similar to how middleware is deployed on Vercel.

Then the build output gets transformed into a format that can be deployed to AWS. Files in assets/ are ready to be uploaded to AWS S3. Function code is wrapped inside Lambda handlers, ready to be deployed to AWS Lambda and Lambda@Edge.

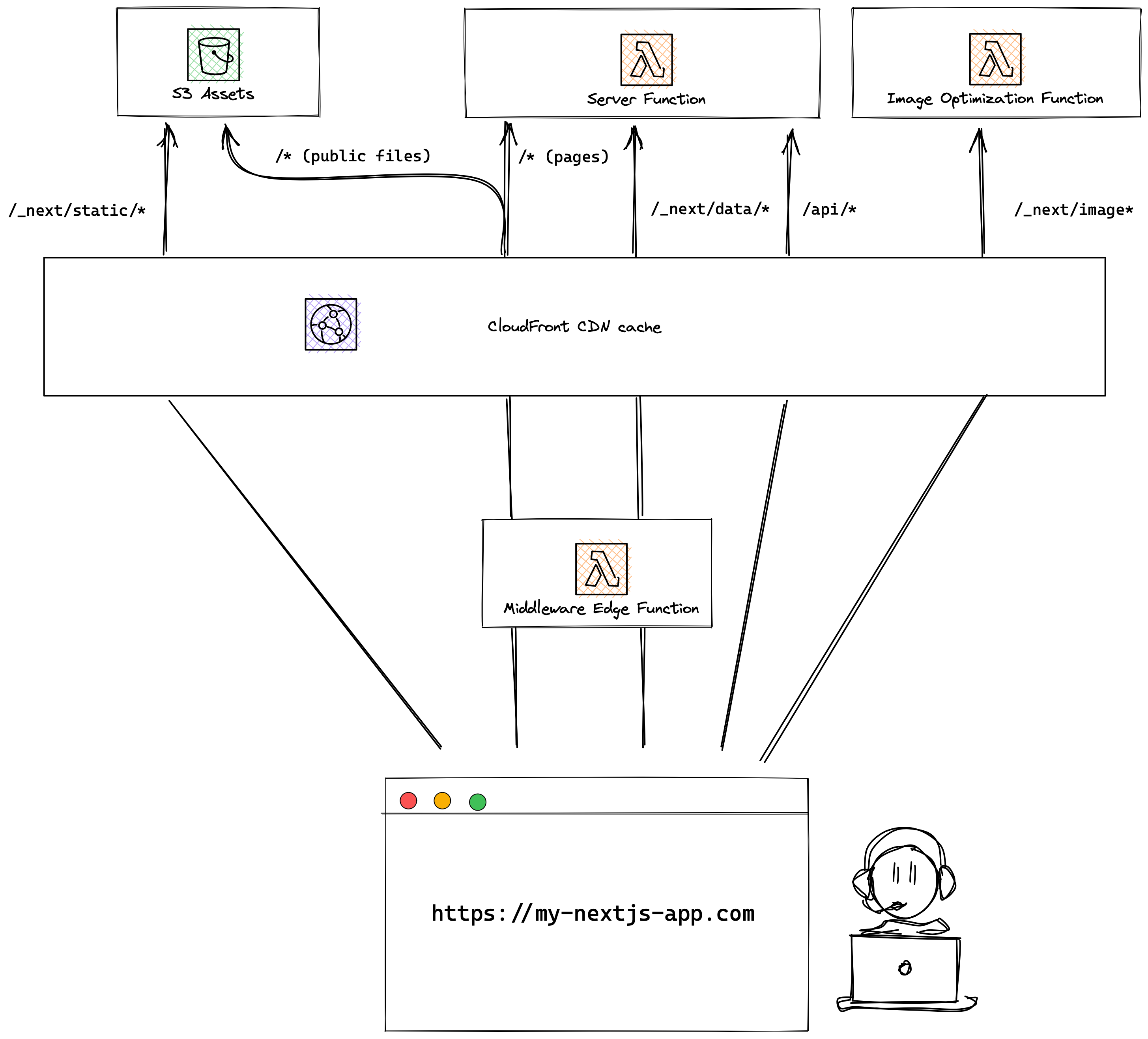

OpenNext does not create the underlying infrastructure. You can create the infrastructure for your app with your preferred tool — SST, CDK, Terraform, Serverless Framework, etc.

This is the recommended setup.

Let's dive into the recommended configuration for each AWS resource.

Create an S3 bucket and upload the content in .open-next/assets to the root of the bucket. For example, .open-next/assets/favicon.ico would get uploaded to /favicon.ico off the bucket root.

There are two types of files in .open-next/assets:

Hashed files

These are files with a hash component in the file name. Hashed files are located inside .open-next/assets/_next, e.g. .open-next/assets/_next/static/css/0275f6d90e7ad339.css. The hash values in the filenames are guaranteed to change when the content of the files changes, so hashed files should be cached both at the CDN level and at the browser level. When uploading the hashed files to S3, the recommended cache control setting is

public,max-age=31536000,immutable

Un-hashed files

Other files inside .open-next/assets are copied over from your app's public/ folder, e.g. .open-next/assets/favicon.ico. The file name of an un-hashed file can remain unchanged when its content changes. Un-hashed files should therefore be cached at the CDN level, but not at the browser level. When the content of un-hashed files changes, invalidate the CDN cache on deploy. When uploading the un-hashed files to S3, the recommended cache control setting is

public,max-age=0,s-maxage=31536000,must-revalidate
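The two cases above can be sketched as a small helper that maps a local file to its S3 key and Cache-Control value. This is only an illustration: `planUpload`, `UploadPlan`, and `ASSETS_DIR` are hypothetical names and are not part of OpenNext. It assumes that every key under `_next/` is a hashed file, as described above.

```typescript
// Cache-Control values quoted from the text above.
const HASHED_CACHE_CONTROL = "public,max-age=31536000,immutable";
const UNHASHED_CACHE_CONTROL = "public,max-age=0,s-maxage=31536000,must-revalidate";

// Hypothetical constant: the local prefix stripped to obtain the S3 key.
const ASSETS_DIR = ".open-next/assets/";

interface UploadPlan {
  key: string; // object key relative to the bucket root
  cacheControl: string; // value for the object's Cache-Control metadata
}

function planUpload(localPath: string): UploadPlan {
  if (!localPath.startsWith(ASSETS_DIR)) {
    throw new Error(`Not an asset path: ${localPath}`);
  }
  const key = localPath.slice(ASSETS_DIR.length);
  // Assumption: hashed files live under _next/; everything else comes from public/.
  const hashed = key.startsWith("_next/");
  return {
    key,
    cacheControl: hashed ? HASHED_CACHE_CONTROL : UNHASHED_CACHE_CONTROL,
  };
}
```

The returned plan can then be handed to whichever upload tool you use (SST, CDK, the AWS CLI, and so on).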

Create a Lambda function with the code from .open-next/image-optimization-function.

This function handles image optimization requests when the Next.js <Image> component is used. The sharp library is bundled with the function and is used to convert the image.

Note that the image optimization function responds with the Cache-Control header, so the image will be cached both at the CDN level and at the browser level.

Create a Lambda function with the code from .open-next/server-function.

This function handles all the other types of requests from the Next.js app, including Server-side Rendering (SSR) requests and API requests. OpenNext builds the Next.js app in the standalone mode. The standalone mode generates a NextServer class that does the request handling. And the server function wraps around the NextServer.

Create a CloudFront distribution, and dispatch requests to their corresponding handlers (behaviors). The following behaviors are configured:

| Behavior | Requests | Origin | Allowed Headers |
|---|---|---|---|
| /_next/static/* | Hashed static files | S3 bucket | |
| /_next/image | Image optimization | image optimization function | Accept |
| /_next/data/* | data requests | server function | x-op-middleware-request-headers, x-op-middleware-response-headers, x-nextjs-data, x-middleware-prefetch (explained below) |
| /api/* | API | server function | |
| /* | catch all | server function, fallback to S3 bucket (explained below) | x-op-middleware-request-headers, x-op-middleware-response-headers, x-nextjs-data, x-middleware-prefetch (explained below) |
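To make the dispatch concrete, here is a minimal sketch of how a request path resolves to an origin under the behaviors listed above. It mimics, in plain TypeScript, the way CloudFront evaluates path patterns in precedence order with the catch-all last. `resolveOrigin` and `patternToRegExp` are hypothetical helpers for illustration only; the real matching is done by CloudFront, and only the `*` wildcard is modeled here.

```typescript
type Origin = "S3 bucket" | "image optimization function" | "server function";

interface Behavior {
  pattern: string; // CloudFront-style path pattern; "*" matches any run of characters
  origin: Origin;
}

// Listed in precedence order, mirroring the behaviors above; the catch-all comes last.
const behaviors: Behavior[] = [
  { pattern: "/_next/static/*", origin: "S3 bucket" },
  { pattern: "/_next/image", origin: "image optimization function" },
  { pattern: "/_next/data/*", origin: "server function" },
  { pattern: "/api/*", origin: "server function" },
  { pattern: "/*", origin: "server function" },
];

// Convert a path pattern to a RegExp, treating "*" as a wildcard
// and escaping every other regex metacharacter.
function patternToRegExp(pattern: string): RegExp {
  const escaped = pattern
    .split("*")
    .map((part) => part.replace(/[.+?^${}()|[\]\\]/g, "\\$&"))
    .join(".*");
  return new RegExp(`^${escaped}$`);
}

// Return the origin of the first behavior whose pattern matches the path.
function resolveOrigin(path: string): Origin {
  for (const behavior of behaviors) {
    if (patternToRegExp(behavior.pattern).test(path)) {
      return behavior.origin;
    }
  }
  throw new Error(`No behavior matched ${path}`);
}
```

Note that a request such as /favicon.ico falls through to the catch-all behavior and therefore reaches the server function first.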

Create a Lambda function with the code from .open-next/middleware-function, and attach it to the /_next/data/* and /* behaviors as a viewer request edge function. This allows the function to run your Middleware code before the request hits your server function, and also before cached content is served.

The middleware function uses the Node.js 18 global fetch API, so it must run on the Node.js 18 runtime. The reason is explained below.

Note that if middleware is not used in the Next.js app, the middleware-function will not be generated. In this case, you don't have to create the Lambda@Edge function or configure it in the CloudFront distribution.

Recall from the S3 bucket section that files in your app's public/ folder are static and are uploaded to the S3 bucket. Ideally, requests to these files should be handled by the S3 bucket. For example:

https://my-nextjs-app.com/favicon.ico

This requires the CloudFront distribution to have the behavior /favicon.ico, and set the S3 bucket as the origin. However, CloudFront has a default limit of 25 behaviors per distribution. It is not a scalable solution to create 1 behavior per file.

To work around the issue, requests to public/ files are handled by the catch all behavior /*. The behavior sends the request to the server function first. If the server fails to handle the request, it will fall back to the S3 bucket.

This means on cache miss, the request will take slightly longer to process.
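The fallback can be pictured with the following sketch. It is an assumption-laden illustration, not OpenNext code: `handleCatchAll`, `OriginHandler`, and `OriginResponse` are hypothetical names, and treating a 404 from the server function as "failed to handle the request" is an assumption, since the text above does not say which responses trigger the fallback.

```typescript
interface OriginResponse {
  status: number;
  body: string;
}

// Hypothetical stand-in for an origin (server function or S3 bucket).
type OriginHandler = (path: string) => Promise<OriginResponse>;

async function handleCatchAll(
  path: string,
  serverFunction: OriginHandler,
  s3Bucket: OriginHandler,
  // Assumption: only these statuses count as "the server failed to handle the request".
  failoverStatuses: ReadonlySet<number> = new Set([404]),
): Promise<OriginResponse> {
  // The server function is always tried first.
  const primary = await serverFunction(path);
  if (!failoverStatuses.has(primary.status)) {
    return primary;
  }
  // Second round trip: this is why a cache miss for a public/ file takes slightly longer.
  return s3Bucket(path);
}
```

In a real deployment this logic lives in the CloudFront configuration rather than in application code.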

Recall from the Server function section that the server function uses the NextServer class from Next.js' build output to handle requests. NextServer does not seem to set the correct Cache-Control headers.

To work around the issue, the server function checks whether the request is for an HTML page and, if so, sets the Cache-Control header to:

public, max-age=0, s-maxage=31536000, must-revalidate
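A rough sketch of this workaround is shown below. It assumes the HTML check is done on the response's Content-Type header, which the text above does not specify; `withHtmlCacheControl` and `HeaderMap` are hypothetical names used only for illustration.

```typescript
// Header value quoted from the text above.
const HTML_CACHE_CONTROL = "public, max-age=0, s-maxage=31536000, must-revalidate";

type HeaderMap = Record<string, string>;

// Assumption: an HTML page is detected from the response Content-Type header.
function withHtmlCacheControl(headers: HeaderMap): HeaderMap {
  const contentTypeKey = Object.keys(headers).find(
    (name) => name.toLowerCase() === "content-type",
  );
  const contentType = contentTypeKey ? headers[contentTypeKey] : "";
  if (!contentType.toLowerCase().startsWith("text/html")) {
    return headers; // non-HTML responses are left untouched
  }
  // Drop any existing Cache-Control entry, whatever its casing, then set the override.
  const result: HeaderMap = {};
  for (const [name, value] of Object.entries(headers)) {
    if (name.toLowerCase() !== "cache-control") {
      result[name] = value;
    }
  }
  result["cache-control"] = HTML_CACHE_CONTROL;
  return result;
}
```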

Middleware allows you to modify the request and response headers. This requires the middleware function to be able to pass custom headers defined in your Next.js app's middleware code to the server function.

CloudFront lets you pass all headers to the server function. But by doing so, the Host header is also passed along to the server function, and API Gateway would reject the request. There is no way to configure CloudFront to pass all headers except Host.

To work around the issue, the middleware function JSON encodes all request headers into the x-op-middleware-request-headers header, and all response headers into the x-op-middleware-response-headers header. The server function then decodes the headers.

Note that the x-op-middleware-request-headers and x-op-middleware-response-headers headers need to be added to CloudFront distribution's cache policy allowed list.
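The encode and decode steps might look roughly like the sketch below. Only the two header names and the use of JSON come from the text above; `encodeMiddlewareHeaders`, `decodeMiddlewareHeaders`, and the flat string-to-string shape of `HeaderMap` are assumptions made for illustration, and the actual OpenNext wire format may differ.

```typescript
const REQUEST_HEADERS_KEY = "x-op-middleware-request-headers";
const RESPONSE_HEADERS_KEY = "x-op-middleware-response-headers";

type HeaderMap = Record<string, string>;

// Middleware side: pack both header sets into the two forwarded headers.
function encodeMiddlewareHeaders(
  requestHeaders: HeaderMap,
  responseHeaders: HeaderMap,
): HeaderMap {
  return {
    [REQUEST_HEADERS_KEY]: JSON.stringify(requestHeaders),
    [RESPONSE_HEADERS_KEY]: JSON.stringify(responseHeaders),
  };
}

// Server side: unpack them again; a missing header decodes to an empty map.
function decodeMiddlewareHeaders(forwarded: HeaderMap): {
  requestHeaders: HeaderMap;
  responseHeaders: HeaderMap;
} {
  const parse = (raw: string | undefined): HeaderMap =>
    raw ? (JSON.parse(raw) as HeaderMap) : {};
  return {
    requestHeaders: parse(forwarded[REQUEST_HEADERS_KEY]),
    responseHeaders: parse(forwarded[RESPONSE_HEADERS_KEY]),
  };
}
```

Because only two fixed header names cross CloudFront, the cache policy can allow-list them without forwarding Host.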

Vercel uses the Headers.getAll() function in the middleware code. This function is not part of the Node.js 18 global fetch API. We have two options:

- Inject the getAll() function into the global fetch API.

- Use the node-fetch package to polyfill the fetch API.

We decided to go with the first option. It does not require an additional dependency, and it is likely that Vercel will remove its usage of the getAll() function down the road.

In the example folder, you can find a Next.js feature test app. Here's a link deployed using SST's NextjsSite construct. It contains a handful of pages. Each page aims to test a single Next.js feature.

You can find the server log in the AWS CloudWatch console of the region you deployed to.

You can find the middleware log in the AWS CloudWatch console of the region you are physically close to. For example, if you deployed your app to us-east-1 and you are in London, it's likely you will find the logs in eu-west-2.

Create a PR that adds a new page reproducing the issue to the benchmark app in example.

To run OpenNext locally:

- Clone this repo

- Build open-next

      cd open-next
      yarn build

- Run open-next in watch mode

      yarn dev

- Make open-next linkable from your Next.js app

      yarn link

- Link open-next in your Next.js app

      cd path/to/my/nextjs/app
      yarn link open-next

Now you can make changes in open-next, and run yarn open-next build in your Next.js app to test the changes.

The next build command generates a server function that runs the middleware. With this setup, if you use middleware for static pages, these pages cannot be cached. If cached, CDN (CloudFront) will send back the cached response without calling the origin (server Lambda function). To ensure the middleware is invoked on every request, caching is always disabled.

Vercel deploys the middleware code to edge functions, which get invoked before the request reaches the CDN. This way, static pages can be cached. On each request, the middleware gets called first, and then the CDN can send back the cached response.

OpenNext is designed to adopt the same setup as Vercel. And building using @vercel/next allows us to separate the middleware code from the server code.

Maintained by SST. Join our community: Discord | YouTube | Twitter