This is our PyTorch implementation of ToDayGAN. The code was written by Asha Anoosheh and builds upon ComboGAN.

If you use this code for your research, please cite:

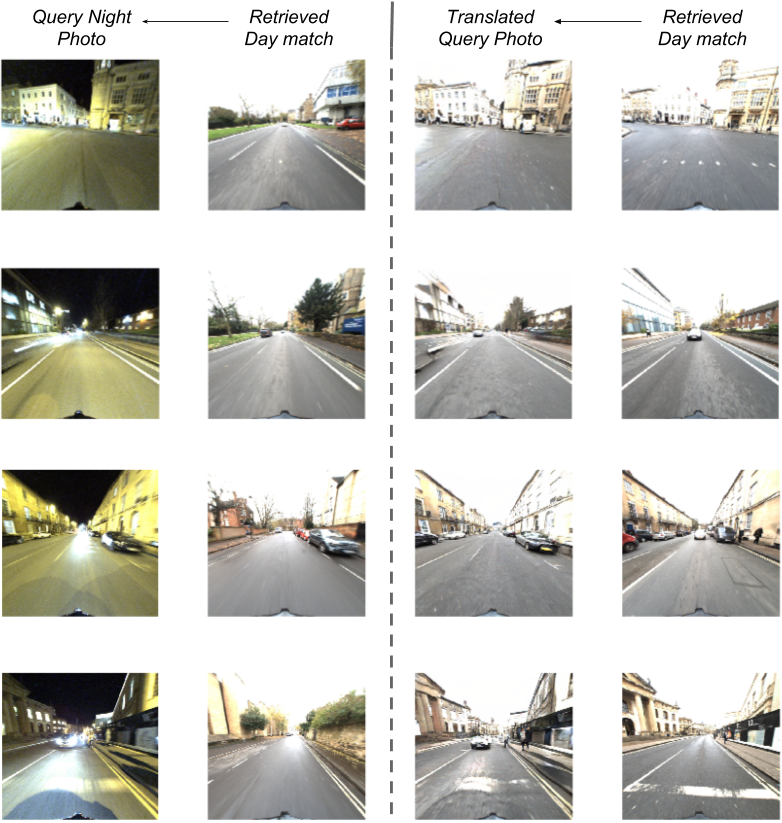

Night-to-Day Image Translation for Retrieval-based Localization. Asha Anoosheh, Torsten Sattler, Radu Timofte, Marc Pollefeys, Luc van Gool. In arXiv, 2018.

- Linux or macOS

- Python 3

- CPU or NVIDIA GPU + CUDA CuDNN

- Install requisite Python libraries.

pip install torch

pip install torchvision

pip install visdom

pip install dominate

- Clone this repo:

git clone https://github.com/AAnoosheh/ToDayGAN.git

Example running scripts can be found in the scripts directory.

One of our pretrained models for the Oxford RobotCar dataset can be found HERE. Place it under ./checkpoints/robotcar_2day and test using the instructions below, with the args --name robotcar_2day --dataroot ./datasets/<your_test_dir> --n_domains 2 --which_epoch 150 --loadSize 512

Because of sensitivity to intrinsic camera characteristics, testing should ideally be done on photos from the same Oxford dataset (and the same Grasshopper camera), which are available preprocessed and ready to use HERE.

If using this pretrained model, <your_test_dir> should contain two subfolders, test0 and test1, containing the Day and Night images to test, respectively (the model was trained with this ordering). test0 can be empty if you do not need Day images translated to Night, but it must exist so that the code does not break.
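The folder layout described above can be prepared with a few lines of Python. This is only a minimal sketch: the helper name `make_test_dirs` and the example path are hypothetical and not part of this repository.

```python
from pathlib import Path


def make_test_dirs(root):
    """Create test0 (Day) and test1 (Night) under root and return both paths.

    Hypothetical helper for illustration; it is not part of the ToDayGAN code.
    """
    root = Path(root)
    day = root / "test0"    # may stay empty, but the folder has to exist
    night = root / "test1"  # copy the night images to translate into here
    for folder in (day, night):
        folder.mkdir(parents=True, exist_ok=True)
    return day, night
```

For example, `make_test_dirs("./datasets/my_test_dir")` would create both subfolders, after which the night images can be copied into test1.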

- Train a model:

python train.py --name <experiment_name> --dataroot ./datasets/<your_dataset> --n_domains <N> --niter <num_epochs_constant_LR> --niter_decay <num_epochs_decaying_LR>

Checkpoints will be saved by default to ./checkpoints/<experiment_name>/
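To illustrate how --niter and --niter_decay divide training, here is a rough sketch of a two-phase schedule. The linear decay shape and the function name `lr_multiplier` are assumptions made for illustration; the text above only states that the learning rate is constant for the first phase and decays during the second, so check the training code for the actual rule.

```python
def lr_multiplier(epoch, niter, niter_decay):
    """Factor applied to the base learning rate at a 0-based epoch.

    Assumption: the rate stays constant for `niter` epochs and then
    decays linearly to zero over the following `niter_decay` epochs.
    """
    if epoch < niter or niter_decay == 0:
        return 1.0  # constant-LR phase (or no decay phase requested)
    return max(0.0, 1.0 - (epoch - niter) / niter_decay)
```

Under this assumed schedule, --niter 100 --niter_decay 100 would train for 200 epochs in total, with the multiplier at half its starting value 50 epochs into the decay phase.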

- Fine-tuning/Resume training:

python train.py --continue_train --which_epoch <checkpoint_number_to_load> --name <experiment_name> --dataroot ./datasets/<your_dataset> --n_domains <N> --niter <num_epochs_constant_LR> --niter_decay <num_epochs_decaying_LR>

- Test the model:

python test.py --phase test --serial_test --name <experiment_name> --dataroot ./datasets/<your_dataset> --n_domains <N> --which_epoch <checkpoint_number_to_load>

The test results will be saved to an HTML file here: ./results/<experiment_name>/<epoch_number>/index.html.

- Flags: see options/train_options.py for training-specific flags; see options/test_options.py for test-specific flags; and see options/base_options.py for all common flags.

- Dataset format: The desired data directory (provided by --dataroot) should contain subfolders of the form train*/ and test*/, and they are loaded in alphabetical order. (Note that a folder named train10 would be loaded before train2, and thus all checkpoints and results would be ordered accordingly.) Test directories should match the alphabetical ordering of the training ones.

- CPU/GPU (default --gpu_ids 0): set --gpu_ids -1 to use CPU mode; set --gpu_ids 0,1,2 for multi-GPU mode.

- Visualization: during training, the current results and loss plots can be viewed using two methods. First, if you set --display_id > 0, the results and loss plot will appear on a local graphics web server launched by visdom. To do this, you should have visdom installed and a server running by the command python -m visdom.server. The default server URL is http://localhost:8097. display_id corresponds to the window ID that is displayed on the visdom server. The visdom display functionality is turned on by default. To avoid the extra overhead of communicating with visdom, set --display_id 0. Secondly, the intermediate results are also saved to ./checkpoints/<experiment_name>/web/index.html. To avoid this, set the --no_html flag.

- Preprocessing: images can be resized and cropped in different ways using the --resize_or_crop option. The default option 'resize_and_crop' resizes the image such that the largest side becomes opt.loadSize and then does a random crop of size (opt.fineSize, opt.fineSize). Other options are either just resize or crop on their own.
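The alphabetical-ordering caveat in the dataset format note is easy to check in Python. The sketch below assumes the loader uses a plain string sort, which is what "alphabetical order" suggests; `load_order` and `zero_padded` are hypothetical helpers, not functions from this repository.

```python
def load_order(folder_names):
    """Order domain folders the way a plain string sort would."""
    return sorted(folder_names)


def zero_padded(prefix, count, width=2):
    """Hypothetical helper: build names such as train00, train01, ..."""
    return [f"{prefix}{i:0{width}d}" for i in range(count)]


# train10 sorts before train2 because "1" < "2" at the first differing character.
print(load_order(["train2", "train10", "train1"]))
# Zero-padded names keep numeric and alphabetical order in agreement.
print(load_order(zero_padded("train", 12)))
```

If you have ten or more domains, zero-padding the folder names (train00, train01, ...) is one way to keep domain indices in numeric order.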