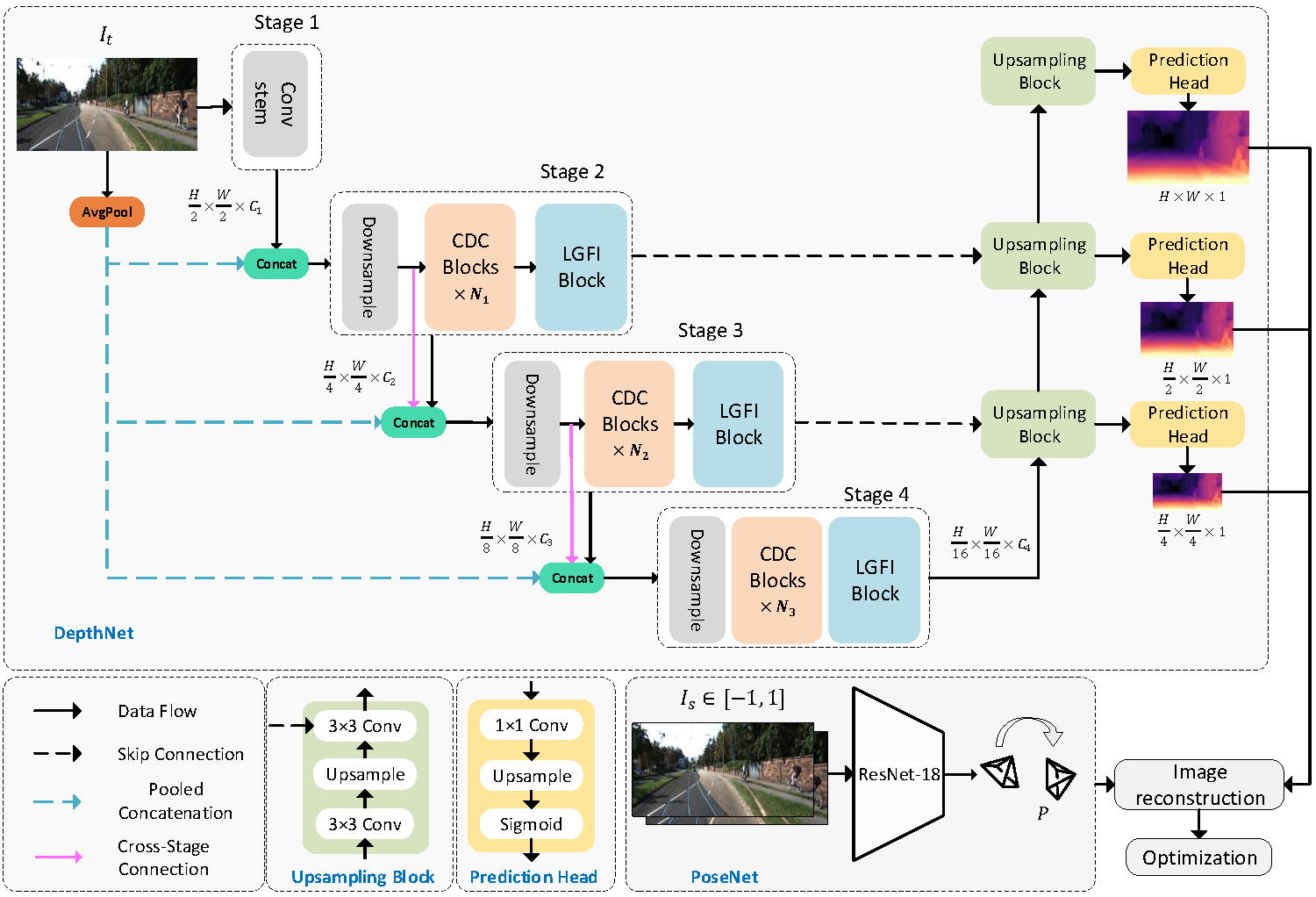

A Lightweight CNN and Transformer Architecture for Self-Supervised Monocular Depth Estimation [paper link]

Ning Zhang*, Francesco Nex, George Vosselman, Norman Kerle

(Lite-Mono-8m 1024x320)

You can download the trained models using the links below.

| --model | Params | ImageNet Pretrained | Input size | Abs Rel | Sq Rel | RMSE | RMSE log | delta < 1.25 | delta < 1.25^2 | delta < 1.25^3 |
|---|---|---|---|---|---|---|---|---|---|---|
| lite-mono | 3.1M | yes | 640x192 | 0.107 | 0.765 | 4.561 | 0.183 | 0.886 | 0.963 | 0.983 |
| lite-mono-small | 2.5M | yes | 640x192 | 0.110 | 0.802 | 4.671 | 0.186 | 0.879 | 0.961 | 0.982 |
| lite-mono-tiny | 2.2M | yes | 640x192 | 0.110 | 0.837 | 4.710 | 0.187 | 0.880 | 0.960 | 0.982 |
| lite-mono-8m | 8.7M | yes | 640x192 | 0.101 | 0.729 | 4.454 | 0.178 | 0.897 | 0.965 | 0.983 |
| lite-mono | 3.1M | yes | 1024x320 | 0.102 | 0.746 | 4.444 | 0.179 | 0.896 | 0.965 | 0.983 |
| lite-mono-small | 2.5M | yes | 1024x320 | 0.103 | 0.757 | 4.449 | 0.180 | 0.894 | 0.964 | 0.983 |
| lite-mono-tiny | 2.2M | yes | 1024x320 | 0.104 | 0.764 | 4.487 | 0.180 | 0.892 | 0.964 | 0.983 |
| lite-mono-8m | 8.7M | yes | 1024x320 | 0.097 | 0.710 | 4.309 | 0.174 | 0.905 | 0.967 | 0.984 |
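For readers unfamiliar with the column names, the sketch below computes the seven metrics using the definitions conventionally used for KITTI depth evaluation. It is an illustration only, not the repository's evaluation code: the function name `depth_metrics` is hypothetical, and the actual evaluation script may apply extra steps (cropping, depth capping, median scaling) that are not shown here. Lower is better for the first four metrics, higher is better for the three delta accuracies.

```python
import math


def depth_metrics(gt, pred):
    """Compute standard depth metrics for paired positive depth values.

    `gt` and `pred` are equal-length sequences of depths (e.g. in meters).
    This is a hypothetical helper for illustration, not part of the repo.
    """
    n = len(gt)
    pairs = list(zip(gt, pred))
    # Per-pixel ratio used by the delta accuracy thresholds.
    ratios = [max(g / p, p / g) for g, p in pairs]
    return {
        "abs_rel": sum(abs(g - p) / g for g, p in pairs) / n,
        "sq_rel": sum((g - p) ** 2 / g for g, p in pairs) / n,
        "rmse": math.sqrt(sum((g - p) ** 2 for g, p in pairs) / n),
        "rmse_log": math.sqrt(
            sum((math.log(g) - math.log(p)) ** 2 for g, p in pairs) / n
        ),
        # Fraction of pixels whose ratio falls under each threshold.
        "delta_1": sum(r < 1.25 for r in ratios) / n,
        "delta_2": sum(r < 1.25 ** 2 for r in ratios) / n,
        "delta_3": sum(r < 1.25 ** 3 for r in ratios) / n,
    }


if __name__ == "__main__":
    # Toy values, not real KITTI data.
    print(depth_metrics([10.0, 20.0, 30.0], [11.0, 19.0, 33.0]))
```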

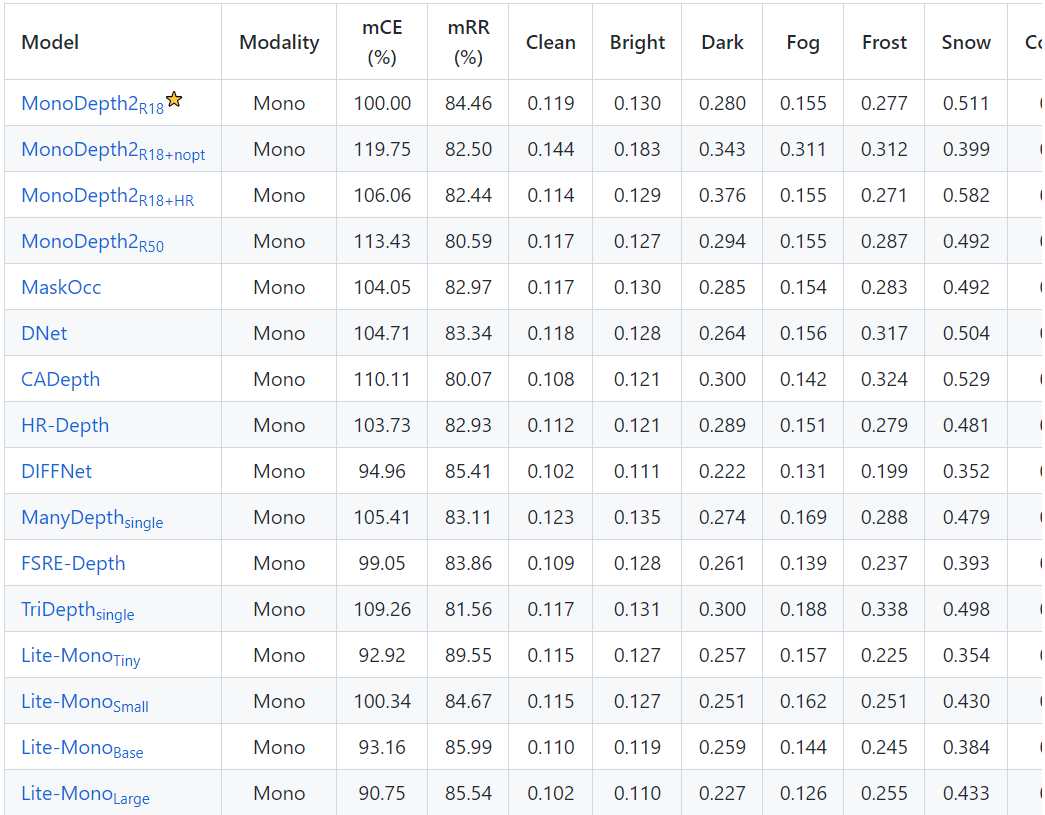

The RoboDepth Challenge Team is evaluating the robustness of different depth estimation algorithms. Lite-Mono has achieved the best robustness to date.

Please refer to Monodepth2 to prepare your KITTI data.

python test_simple.py --load_weights_folder path/to/your/weights/folder --image_path path/to/your/test/image

python evaluate_depth.py --load_weights_folder path/to/your/weights/folder --data_path path/to/kitti_data/ --model lite-mono

pip install 'git+https://github.com/saadnaeem-dev/pytorch-linear-warmup-cosine-annealing-warm-restarts-weight-decay'

python train.py --data_path path/to/your/data --model_name mytrain --num_epochs 30 --batch_size 12 --mypretrain path/to/your/pretrained/weights --lr 0.0001 5e-6 31 0.0001 1e-5 31
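The six values passed to `--lr` in the training command are not explained here. The sketch below assumes they form two triples of (initial learning rate, minimum learning rate, cycle length), the first for the depth network and the second for the pose network, and that each triple drives a cosine-annealing schedule with warm restarts. That reading is an assumption based on the flag layout and the name of the scheduler package installed above; the helper names `split_lr_args` and `cosine_lr` are hypothetical, warmup and weight decay are ignored, and the installed scheduler may behave differently.

```python
import math


def split_lr_args(values):
    """Split six --lr values into (depth, pose) settings.

    Assumed layout: initial lr, minimum lr, cycle length, twice.
    """
    if len(values) != 6:
        raise ValueError("expected six values: two (initial, min, cycle) triples")
    keys = ("initial_lr", "min_lr", "cycle_steps")
    depth = dict(zip(keys, values[:3]))
    pose = dict(zip(keys, values[3:]))
    return depth, pose


def cosine_lr(step, initial_lr, min_lr, cycle_steps):
    """Cosine-annealed learning rate that restarts every `cycle_steps` steps."""
    t = step % cycle_steps  # position inside the current cycle
    cosine = 0.5 * (1.0 + math.cos(math.pi * t / cycle_steps))
    return min_lr + (initial_lr - min_lr) * cosine


if __name__ == "__main__":
    depth_cfg, pose_cfg = split_lr_args([0.0001, 5e-6, 31, 0.0001, 1e-5, 31])
    for step in (0, 10, 20, 30):
        print(step, cosine_lr(step, **depth_cfg), cosine_lr(step, **pose_cfg))
```

Under this reading, a cycle length of 31 with `--num_epochs 30` would mean the rate decays over the whole run without restarting.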

tensorboard --logdir ./tmp/mytrain

@InProceedings{Zhang_2023_CVPR,
    author    = {Zhang, Ning and Nex, Francesco and Vosselman, George and Kerle, Norman},
    title     = {Lite-Mono: A Lightweight CNN and Transformer Architecture for Self-Supervised Monocular Depth Estimation},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
    month     = {June},
    year      = {2023},
    pages     = {18537-18546}
}