This repository stores data and code for the paper "ChatLog: Recording and Analysing ChatGPT Across Time".

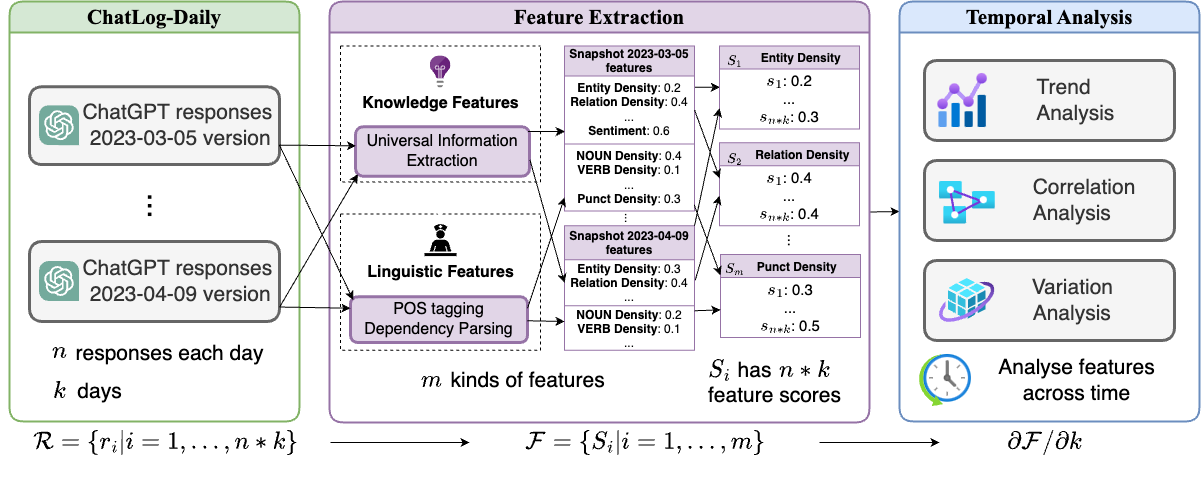

ChatGPT has achieved great success and can be considered to have acquired an infrastructural status. There are abundant works evaluating ChatGPT on benchmarks. However, existing benchmarks encounter two challenges: (1) disregard for periodical evaluation and (2) lack of fine-grained features. In this paper, we construct ChatLog, an ever-updating dataset with large-scale records of diverse long-form ChatGPT responses for 21 NLP benchmarks from March 2023 to now. We conduct a comprehensive performance evaluation and find that most capabilities of ChatGPT improve over time, with some exceptions, and that ChatGPT exhibits a step-wise evolving pattern. We further analyze the inherent characteristics of ChatGPT by extracting knowledge and linguistic features. We find some stable features that stay unchanged and apply them to the detection of ChatGPT-generated texts to improve the robustness of cross-version detection. We will continuously maintain our project on GitHub.

We release our data on the cloud.

If you have any questions about the data, please raise an issue.

The data is currently organized into the following categories, which you can download by clicking the links:

- ChatLog-Monthly
- ChatLog-Daily
  - api
    - everyday_20230305-20230409.zip
    - everyday_20230410-20230508.zip
    - everyday_20230509-20230610.zip
    - everyday_20230611-20230708.zip
    - everyday_20230709-20230813.zip
    - everyday_20230814-20230831.zip
    - everyday_20230901-20230930.zip
    - everyday_20231001-20231113.zip
    - everyday_20231114-20231228.zip
    - everyday_20240109-20240209.zip
    - everyday_20240210-20240313.zip
    - everyday_20240314-20240422.zip
    - everyday_20240423-20240527.zip
  - open
  - processed_csv
    - api

Each zip file contains several JSONL files, and each JSON object has the following format:

| column name | id | source_type | source_dataset | source_task | q | a | language | chat_date | time |
|---|---|---|---|---|---|---|---|---|---|
| description | id | type of the source: open-access dataset or API | dataset the question comes from | specific task name, such as sentiment analysis | question | ChatGPT's response | language | the date ChatGPT responded | the time the record was stored in our database |
| example | 'id': 60 | 'source_type': 'open' | 'source_dataset': 'ChatTrans' | 'source_task': 'translation' | 'q': 'translate this sentence into Chinese: Good morning' | 'a': '早上好' | 'language': 'zh' | 'chat_date': '2023-03-03' | 'time': '2023-03-04 09:58:09' |
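As a minimal sketch of how these records could be read, the snippet below iterates over every JSONL file inside one downloaded zip and yields each JSON object as a Python dict. It assumes the files are UTF-8 encoded, end in `.jsonl`, and hold one JSON object per line; the function name `iter_records` is hypothetical and not part of this repository.

```python
import io
import json
import zipfile
from typing import Iterator


def iter_records(zip_path: str) -> Iterator[dict]:
    """Yield every JSON object from every .jsonl member of a zip archive."""
    with zipfile.ZipFile(zip_path) as archive:
        for name in archive.namelist():
            if not name.endswith(".jsonl"):
                continue  # skip directories and non-JSONL members
            with archive.open(name) as raw:
                # Assumption: the files are UTF-8 text, one object per line.
                for line in io.TextIOWrapper(raw, encoding="utf-8"):
                    line = line.strip()
                    if line:  # tolerate blank lines
                        yield json.loads(line)
```

For example, `for record in iter_records("everyday_20230305-20230409.zip"): print(record["source_task"], record["q"], record["a"])` would print the task, question, and response of each record, provided the fields match the table above.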

ChatLog-Monthly and ChatLog-Daily will be continuously updated.

To process data from 20230305 to 20230409, please use the v1 shell scripts. To process data from 20230410 onward, please use the v2 shell scripts.
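The date rule above can be sketched as a small helper that maps a `YYYYMMDD` date to the script version. This is only an illustration: the name `shell_version` is hypothetical, 20230410 itself is assumed to belong to v2, and dates before 20230305 (which the text does not cover) are treated as v1.

```python
from datetime import date, datetime

# Boundary taken from the text: v1 covers 20230305-20230409, v2 covers later data.
# Assumption: 20230410 itself is handled by the v2 scripts.
V2_START = date(2023, 4, 10)


def shell_version(day: str) -> str:
    """Return "v1" or "v2" for a date given as YYYYMMDD."""
    parsed = datetime.strptime(day, "%Y%m%d").date()
    # Assumption: anything before the v2 boundary is treated as v1.
    return "v1" if parsed < V2_START else "v2"
```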

- To extract all the knowledge and linguistic features, run:

  `sh shells/process_new_data_v1.sh`

- To analyze features and calculate variation, run:

  `sh shells/analyse_var_and_classify_across_time_v1.sh`

- To train a robust ChatGPT detector with LightGBM, which ensembles the features with RoBERTa, run:

  `sh shells/lgb_train_v1.sh`

- For trend and correlation analysis, first dump the knowledge features into `avg_HC3_knowledge_pearson_corr_feats.csv`:

  `sh shells/draw_knowledge_feats_v1.sh`

- Then dump the other linguistic features into `avg_HC3_all_pearson_corr_feats.csv`:

  `sh shells/draw_eval_corr_v1.sh`


Finally, we can draw heatmaps and lineplots for trend and correlation analysis:

- Put the dumped `avg_HC3_knowledge_pearson_corr_feats.csv` and `avg_HC3_all_pearson_corr_feats.csv` under the `./shells` folder.

- Then use `./shells/knowledge_analysis.ipynb` and `./shells/temporal_analysis.ipynb` to draw every figure.
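The first step can be scripted. The sketch below copies the two dumped CSV files into the `./shells` folder and fails early if either is missing, so the notebooks do not start with incomplete inputs. The README does not say where the scripts write these files, so `dump_dir` is a hypothetical parameter, and `stage_csvs` is an illustrative name rather than a function in this repository.

```python
import shutil
from pathlib import Path

# File names taken from the steps above.
CSV_NAMES = (
    "avg_HC3_knowledge_pearson_corr_feats.csv",
    "avg_HC3_all_pearson_corr_feats.csv",
)


def stage_csvs(dump_dir: str, shells_dir: str = "./shells") -> list[Path]:
    """Copy the two dumped CSVs into shells_dir; raise if one is missing."""
    source, target = Path(dump_dir), Path(shells_dir)
    missing = [name for name in CSV_NAMES if not (source / name).is_file()]
    if missing:
        raise FileNotFoundError(f"missing in {source}: {', '.join(missing)}")
    target.mkdir(parents=True, exist_ok=True)
    # copy2 preserves file metadata and returns the destination path.
    return [Path(shutil.copy2(source / name, target / name)) for name in CSV_NAMES]
```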
