AudioInsight transcribes an uploaded audio file, summarizes it, generates a title for the content, and lets users ask questions about the audio.

This is an entry for the Cloudflare AI Challenge.

Live on: https://audioinsight.gabrielsena.dev/

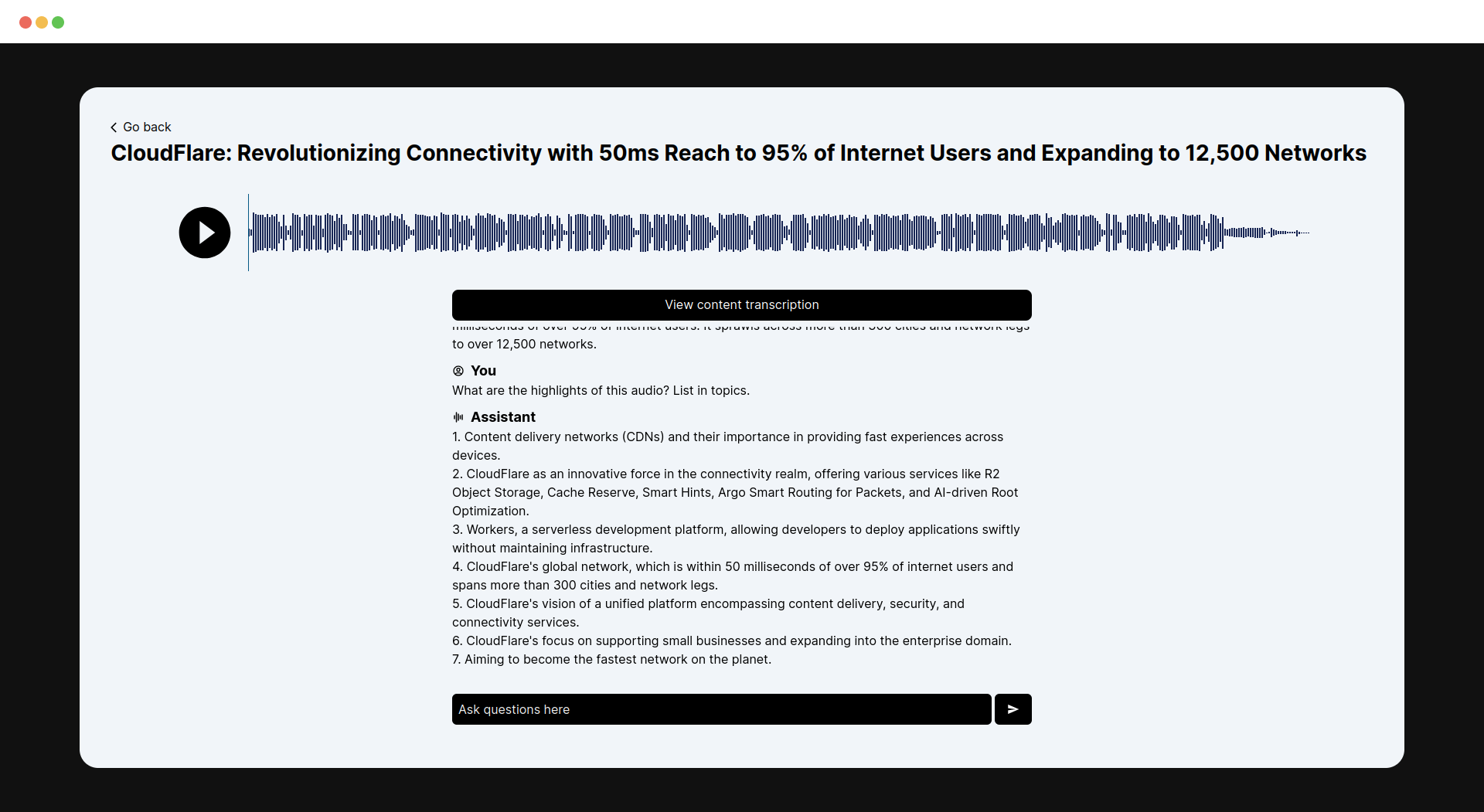

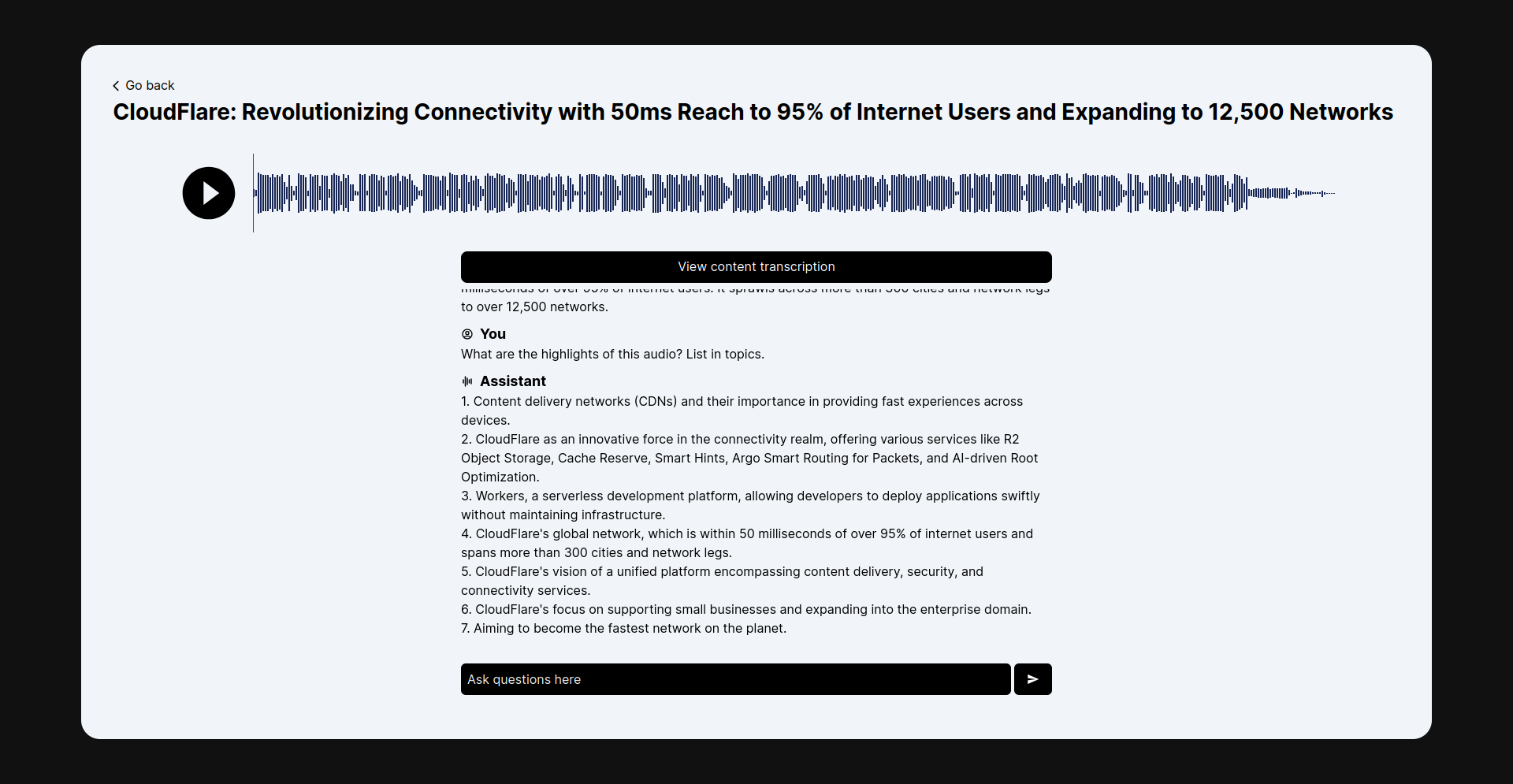

- On the application's homepage, the user uploads an audio file.

- We use the Whisper model to transcribe the audio into text.

- We use the neural-chat-7b-v3-1-awq model to generate a title based on the provided content.

- We summarize the content with the bart-large-cnn model.

- After that, the user can ask questions about the audio, and we use the neural-chat-7b-v3-1-awq model to answer them.

- Cloudflare D1 stores each chat and its history.

- Cloudflare R2 stores each chat's audio file.

- Cloudflare Pages hosts the entire Next.js application, which serves both the front end and the back end.

- Preserve conversation: Your chat and audio are stored remotely, so you can continue talking about the audio later.
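The transcription, title, summary, and question steps described above could be wired together roughly as follows. This is a sketch under assumptions, not the project's actual code: the `AiBinding` interface, the function names, and the prompts are hypothetical, and the full model identifiers and input/output shapes are assumed from the Workers AI model catalog and should be checked there.

```typescript
// Minimal structural stand-in for the Workers AI binding (assumption: only `run` is needed).
interface AiBinding {
  run(model: string, inputs: Record<string, unknown>): Promise<any>;
}

// Assumed catalog identifiers for the three models named above.
const WHISPER_MODEL = "@cf/openai/whisper";
const CHAT_MODEL = "@hf/thebloke/neural-chat-7b-v3-1-awq";
const SUMMARY_MODEL = "@cf/facebook/bart-large-cnn";

interface AudioInsightResult {
  transcript: string;
  title: string;
  summary: string;
}

// Transcribe first, then generate the title and the summary from the transcript.
async function processAudio(ai: AiBinding, audio: ArrayBuffer): Promise<AudioInsightResult> {
  // Whisper is assumed to take the audio as an array of byte values and return `{ text }`.
  const { text } = await ai.run(WHISPER_MODEL, {
    audio: Array.from(new Uint8Array(audio)),
  });

  // Title and summary only depend on the transcript, so they can run concurrently.
  const [titleResult, summaryResult] = await Promise.all([
    ai.run(CHAT_MODEL, {
      messages: [
        { role: "system", content: "Reply with a short title for the user's text and nothing else." },
        { role: "user", content: text },
      ],
    }),
    ai.run(SUMMARY_MODEL, { input_text: text }),
  ]);

  return {
    transcript: text,
    title: String(titleResult.response).trim(),
    summary: summaryResult.summary,
  };
}

// Answer a user question with the transcript supplied as context (hypothetical prompt).
async function answerQuestion(ai: AiBinding, transcript: string, question: string): Promise<string> {
  const result = await ai.run(CHAT_MODEL, {
    messages: [
      { role: "system", content: `Answer using only this transcript:\n${transcript}` },
      { role: "user", content: question },
    ],
  });
  return String(result.response).trim();
}
```

Typing the binding structurally keeps the sketch independent of any particular runtime package, and makes it easy to substitute a fake `run` implementation when testing.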

- Start by cloning this repository:

      git clone git@github.com:gabrielsenadev/audioinsight.git

- Install dependencies:

      npm ci

- Create D1 Database:

      npx wrangler d1 create db-d1-audioinsight

- Configure your database:

      npx wrangler d1 execute db-d1-audioinsight --remote --file=./src/database/schema.sql

- Create your R2 bucket:

      npx wrangler r2 bucket create r2-audios

- Update wrangler.toml to target your recently created database and bucket properly:

      [[d1_databases]]
      binding = "DB"
      database_name = "db-d1-audioinsight"
      database_id = "d485c019-8021-4d08-88e6-e5a6ea66ad4e"

      [[r2_buckets]]
      binding = 'R2'
      bucket_name = 'r2-audios'

- Run preview:

      npm run preview

- Deploy the application:

      npm run deploy

In the examples/ directory, there are some useful audios to try this application.
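
For orientation, the `DB` and `R2` bindings declared in wrangler.toml might be used along these lines. This is only a sketch: the `messages` table, its columns, the `audios/` key prefix, and all function names are hypothetical (the real schema lives in src/database/schema.sql), and `env` stands for whatever object exposes the bindings in your runtime. The `prepare`/`bind`/`run`/`all` and `put` calls mirror the D1 and R2 binding APIs, declared here as small structural interfaces so the example is self-contained.

```typescript
// Structural stand-ins for the D1 and R2 binding types; only the methods used here are declared.
interface D1Statement {
  bind(...values: unknown[]): D1Statement;
  run(): Promise<unknown>;
  all(): Promise<{ results: Record<string, unknown>[] }>;
}
interface D1Like {
  prepare(sql: string): D1Statement;
}
interface R2Like {
  put(key: string, value: ArrayBuffer): Promise<unknown>;
}

// Binding names match wrangler.toml: `DB` for the database, `R2` for the bucket.
interface Env {
  DB: D1Like;
  R2: R2Like;
}

// Hypothetical key layout: one audio object per chat.
const audioKey = (chatId: string): string => `audios/${chatId}`;

// Store the uploaded audio in R2 and return the key it was saved under.
async function saveAudio(env: Env, chatId: string, audio: ArrayBuffer): Promise<string> {
  const key = audioKey(chatId);
  await env.R2.put(key, audio);
  return key;
}

// Append one chat message to a hypothetical `messages` table.
async function appendMessage(
  env: Env,
  chatId: string,
  role: "user" | "assistant",
  content: string,
): Promise<void> {
  await env.DB
    .prepare("INSERT INTO messages (chat_id, role, content) VALUES (?, ?, ?)")
    .bind(chatId, role, content)
    .run();
}

// Load a chat's messages in insertion order (assumes an auto-incrementing `id` column).
async function loadHistory(env: Env, chatId: string): Promise<Record<string, unknown>[]> {
  const { results } = await env.DB
    .prepare("SELECT role, content FROM messages WHERE chat_id = ? ORDER BY id")
    .bind(chatId)
    .all();
  return results;
}
```

Bound parameters (`?` placeholders with `bind`) keep user-provided text out of the SQL string itself.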