
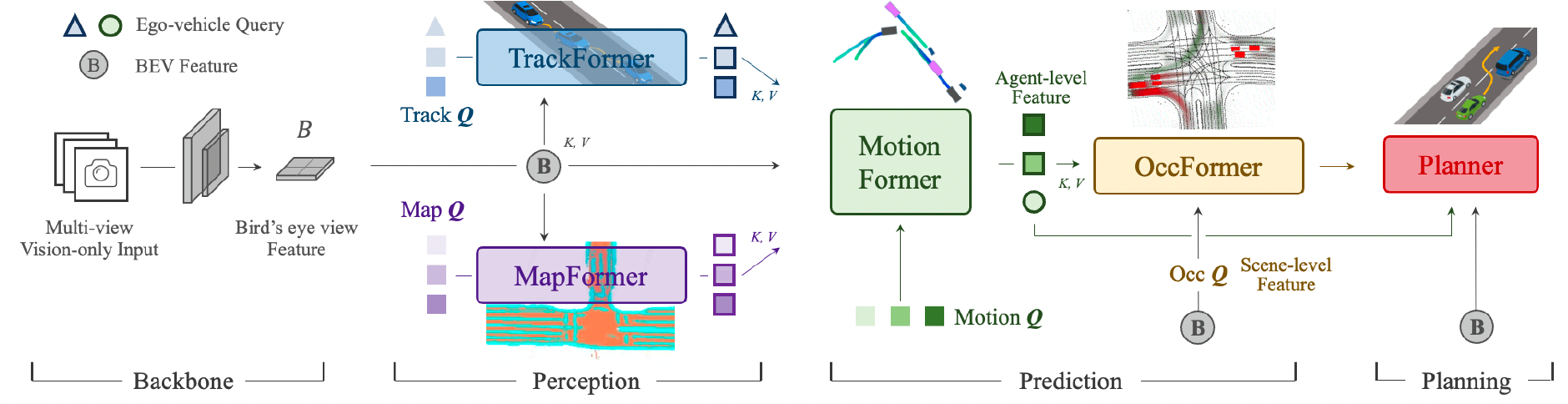

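- 🚘 Planning-oriented philosophy: UniAD is a unified autonomous driving algorithm framework that follows a planning-oriented philosophy. Instead of a standalone modular design or plain multi-task learning, it casts a series of tasks hierarchically, including perception, prediction, and planning.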

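UniAD is trained in two stages. Pretrained checkpoints for both stages will be released, and the results of each model are listed in the tables below.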

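We first train the perception modules (i.e., tracking and mapping) to obtain a stable weight initialization for the next stage. BEV features are aggregated with 5 frames (queue_length = 5).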

| Method | Encoder | Tracking AMOTA | Mapping IoU-lane | config | Download |
|---|---|---|---|---|---|
| UniAD-B | R101 | 0.390 | 0.297 | base-stage1 | base-stage1 |

We then optimize all task modules together, including tracking, mapping, motion, occupancy, and planning. BEV features are aggregated with 3 frames (queue_length = 3).

| Method | Encoder | Tracking AMOTA | Mapping IoU-lane | Motion minADE | Occupancy IoU-n. | Planning avg.Col. | config | Download |
|---|---|---|---|---|---|---|---|---|
| UniAD-B | R101 | 0.358 | 0.317 | 0.709 | 64.1 | 0.25 | base-stage2 | base-stage2 |
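The `queue_length` setting in both stages controls how many frames of BEV features are aggregated. As a rough illustration only, the sketch below assumes the queue is the current frame plus the frames immediately before it, clipped at the start of a sequence. The function name `frame_queue` and this sampling rule are assumptions made for illustration, not UniAD's actual data-loading logic.

```python
from typing import List


def frame_queue(current_index: int, queue_length: int) -> List[int]:
    """Return the frame indices aggregated for `current_index`.

    Assumption (not taken from the UniAD code): the queue holds the current
    frame and the `queue_length - 1` frames directly before it, and it is
    shortened when the sequence has not yet produced enough frames.
    """
    if queue_length < 1:
        raise ValueError("queue_length must be at least 1")
    start = max(0, current_index - queue_length + 1)
    return list(range(start, current_index + 1))


stage1_frames = frame_queue(current_index=10, queue_length=5)
stage2_frames = frame_queue(current_index=10, queue_length=3)
```

Under this assumed rule, frame 10 would draw on frames 6 to 10 in stage 1 and frames 8 to 10 in stage 2.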

- Download the checkpoints you need into the `UniAD/ckpts/` directory.
- You can evaluate these checkpoints to reproduce the results, following the `evaluation` section in TRAIN_EVAL.md.
- You can also initialize your own model with the provided weights. Change the `load_from` field to `path/of/ckpt` in the config and follow the `train` section in TRAIN_EVAL.md to start training.
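Before launching a training run that starts from the provided weights, it can help to confirm that the path given in `load_from` actually points to a file. The sketch below is one way to do that in plain Python. The helper `check_checkpoint` and its `repo_root` parameter are hypothetical names introduced here, and `path/of/ckpt` is the placeholder from the text above, not a real filename.

```python
from pathlib import Path

# Placeholder value from the README; replace it with the checkpoint you downloaded.
load_from = "path/of/ckpt"


def check_checkpoint(load_from: str, repo_root: str = ".") -> Path:
    """Return the checkpoint path, or raise if no file exists there.

    Hypothetical helper: it only checks that the file is present and does
    not load or validate the weights.
    """
    ckpt = Path(repo_root) / load_from
    if not ckpt.is_file():
        raise FileNotFoundError(f"Checkpoint not found: {ckpt}")
    return ckpt
```

Calling `check_checkpoint(load_from)` from the repository root fails early with a clear message if the file is missing, rather than partway through start-up.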

The overall pipeline of UniAD is controlled by uniad_e2e.py which coordinates all the task modules in UniAD/projects/mmdet3d_plugin/uniad/dense_heads. If you are interested in the implementation of a specific task module, please refer to its corresponding file, e.g., motion_head.
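To make the idea of one coordinator driving several task modules more concrete, here is a deliberately simplified sketch. It is not the code in uniad_e2e.py: the class `PipelineSketch`, the head signature, the task names in `TASK_ORDER`, and the dummy heads are all assumptions chosen for illustration. The only idea taken from the text is that a single entry point runs the task heads in sequence on shared BEV features.

```python
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical head signature: a head receives the shared BEV features and
# the outputs of every head that ran before it.
Head = Callable[[Any, Dict[str, Any]], Any]

# Assumed task order, following the order in which the README lists the tasks.
TASK_ORDER = ["track", "map", "motion", "occ", "planning"]


class PipelineSketch:
    """Runs task heads one after another and collects their outputs."""

    def __init__(self, heads: List[Tuple[str, Head]]) -> None:
        self.heads = heads

    def forward(self, bev_features: Any) -> Dict[str, Any]:
        outputs: Dict[str, Any] = {}
        for name, head in self.heads:
            # Each head can read the results of all upstream heads.
            outputs[name] = head(bev_features, outputs)
        return outputs


def make_dummy_head(name: str) -> Head:
    """Build a stand-in head that reports which upstream outputs it saw."""

    def head(bev_features: Any, previous: Dict[str, Any]) -> Dict[str, Any]:
        return {"task": name, "inputs_seen": list(previous)}

    return head


pipeline = PipelineSketch([(name, make_dummy_head(name)) for name in TASK_ORDER])
results = pipeline.forward(bev_features=None)
```

In this toy version the planning head sees the outputs of all four upstream heads, which mirrors the hierarchical arrangement described above. The real modules exchange learned queries and features rather than plain dictionaries.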

- Upgrade the implementation of MapFormer from Panoptic SegFormer to TopoNet, which features the vectorized map representations and topology reasoning.

- Support larger batch size [Est. 2023/04]

- [Long-term] Improve flexibility for future extensions

- All configs & checkpoints

- Visualization codes

- Separating BEV encoder and tracking module

- Base-model configs & checkpoints

- Code initialization

- Fix bug: Unable to reproduce the results of stage1 track-map model when training from scratch. [Ref: OpenDriveLab/UniAD#21]

All assets and code are under the Apache 2.0 license unless specified otherwise.

This work is based on the following paper:

@inproceedings{hu2023_uniad,
  title={Planning-oriented Autonomous Driving},
  author={Yihan Hu and Jiazhi Yang and Li Chen and Keyu Li and Chonghao Sima and Xizhou Zhu and Siqi Chai and Senyao Du and Tianwei Lin and Wenhai Wang and Lewei Lu and Xiaosong Jia and Qiang Liu and Jifeng Dai and Yu Qiao and Hongyang Li},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year={2023},
}