A machine learning meta-model with synthetic data (useful for MLOps and feature stores) that is independent of any specific machine learning solution (definitions in JSON, data in CSV/Parquet).

This meta-model is suitable for:

- comparing the capabilities and functions of machine learning solutions (as part of RFP/X and SWOT analysis)

- independent testing of new versions of machine learning solutions (to maintain quality over time)

- unit, sanity, smoke, system, regression, functional, acceptance, performance, shadow, ... tests

- external test coverage (when internal test coverage is unavailable or weak)

- etc.

Note: For real usage, see e.g. the project qgate-sln-mlrun, which tests the MLRun/Iguazio solution.

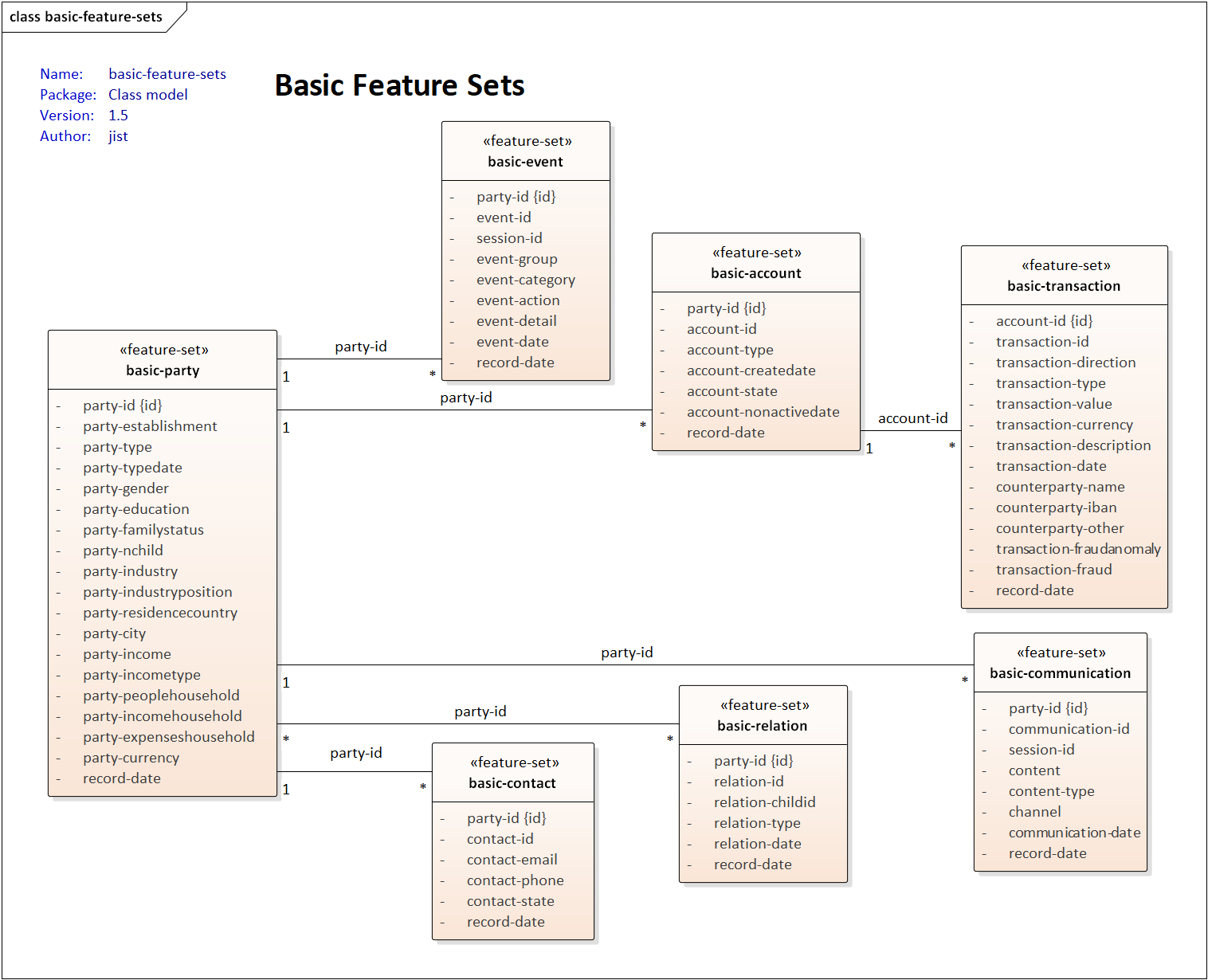

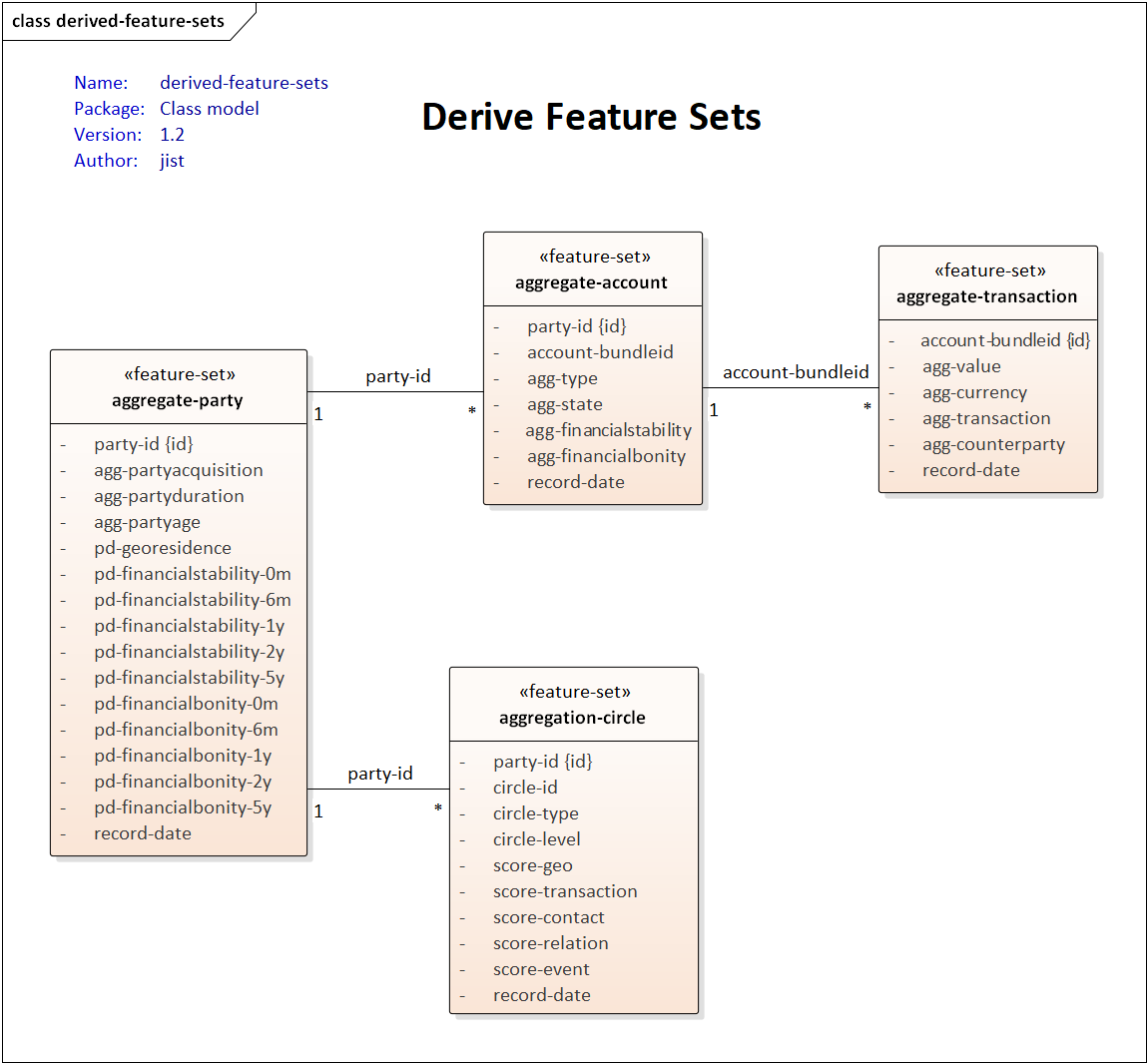

The solution has this simple structure:

- 00-high-level
  - A high-level view of the meta-model for better understanding

- 01-model
  - Definitions of 01-projects, 02-feature sets, 03-feature vectors, 04-ml models, etc. in JSON format

- 02-data
  - Data for the meta-model in CSV/GZ format (Parquet support planned) for party, account, transaction, event, communication, etc.
  - You can also generate your own dataset of the requested size (see the sample `./02-data/03-size-10k.sh` and the description from `python main.py generate --help`)

- 03-test
  - Information that simplifies testing, e.g. feature vectors vs. online/offline data, test/data hints, etc.
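To make the layout concrete, here is a minimal sketch (not part of the project) of how a test harness might load the JSON definitions from `01-model` and read a gzip-compressed CSV file from `02-data`. The helper names `load_definitions` and `read_csv_gz` are hypothetical, and the sketch assumes each definition is a standalone `*.json` file below a numbered subfolder and each data file is a UTF-8 CSV with a header row.

```python
import csv
import gzip
import json
from collections import defaultdict
from pathlib import Path


def load_definitions(model_dir):
    """Load every JSON definition under model_dir, grouped by top-level subfolder.

    Hypothetical helper. Assumption: each definition is a standalone *.json
    file below a numbered subfolder such as '01-projects'.
    """
    root = Path(model_dir)
    grouped = defaultdict(list)
    for path in sorted(root.rglob("*.json")):
        parts = path.relative_to(root).parts
        # The first folder below root identifies the definition type;
        # files directly in root are grouped under ".".
        group = parts[0] if len(parts) > 1 else "."
        with path.open(encoding="utf-8") as handle:
            grouped[group].append(json.load(handle))
    return dict(grouped)


def read_csv_gz(path):
    """Read a gzip-compressed CSV file (with header row) into a list of dicts."""
    with gzip.open(path, mode="rt", encoding="utf-8", newline="") as handle:
        return list(csv.DictReader(handle))
```

For example, `load_definitions("01-model")` would return a dictionary keyed by subfolder name, which keeps the per-type grouping of the original folder structure without hard-coding any folder names.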

For additional details, see

The supported sources/targets for realization (see the definition `/spec/targets/` in the JSON files):

- Redis, MySQL

- File system with formats
  - Parquet, CSV
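If a test needs to check which of these targets a definition uses, a minimal sketch could read the `spec.targets` entry of a parsed JSON definition and flag anything outside the list above. The exact JSON schema is an assumption here (a `spec` object holding a `targets` list of names), as are the helper names `get_targets` and `unsupported_targets`; adjust them to the real definitions in `01-model`.

```python
import json

# Target names from the list above, normalized to lowercase.
SUPPORTED_TARGETS = {"redis", "mysql", "parquet", "csv"}


def get_targets(definition):
    """Return the target names stored under spec.targets of a parsed definition.

    Hypothetical helper. Assumption: spec.targets is a list of strings;
    a missing spec or targets entry yields an empty list.
    """
    return list(definition.get("spec", {}).get("targets", []))


def unsupported_targets(definition):
    """Return targets not in SUPPORTED_TARGETS (case-insensitive), in order."""
    return [t for t in get_targets(definition) if t.lower() not in SUPPORTED_TARGETS]


# Example definition with hypothetical values, parsed from a JSON string.
example = json.loads(
    '{"name": "basic_party", "spec": {"targets": ["parquet", "Redis", "kafka"]}}'
)
print(unsupported_targets(example))  # prints ['kafka']
```

Comparing in lowercase keeps the check tolerant of spellings such as `Redis` vs. `redis`, which is a design choice of this sketch rather than a rule of the meta-model.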