EfficientViT is a new family of vision models for efficient high-resolution vision, especially segmentation. The core building block of EfficientViT is a new lightweight multi-scale attention module that achieves a global receptive field and multi-scale learning with only hardware-efficient operations.

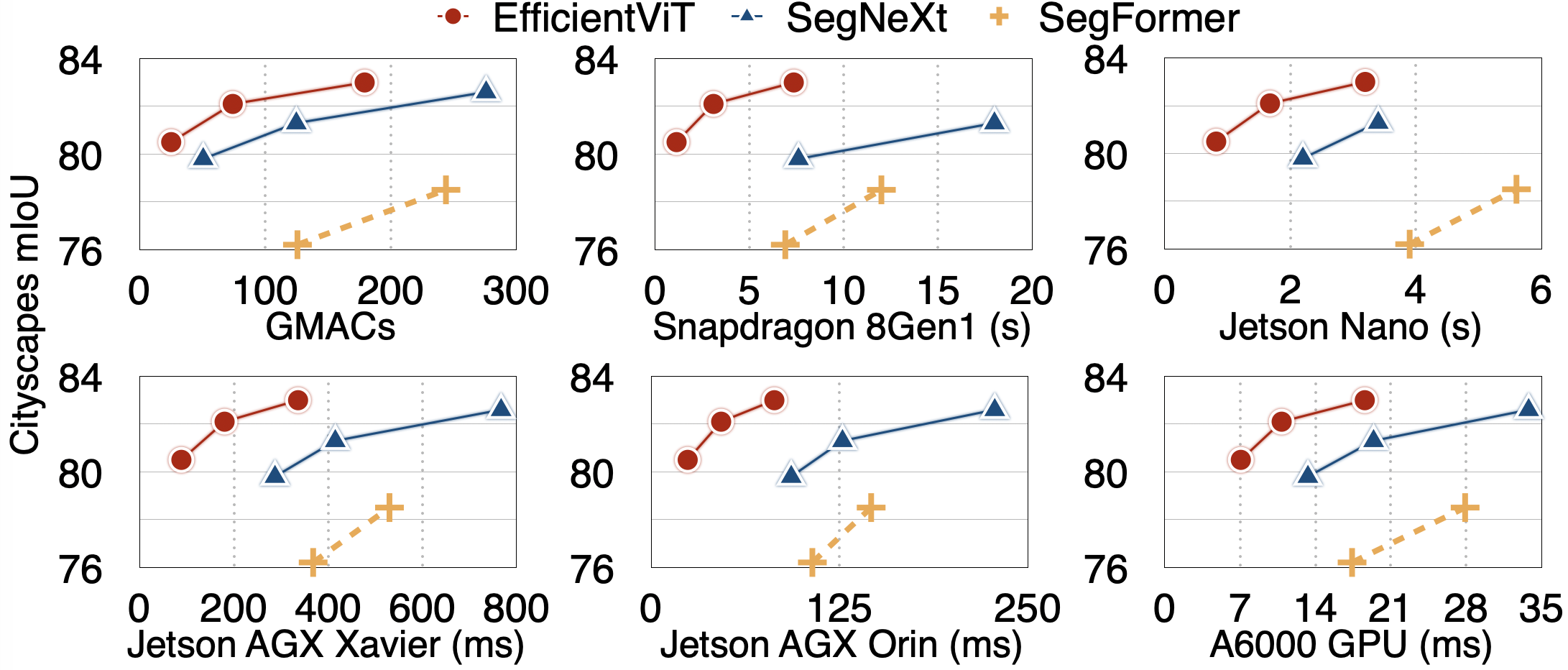

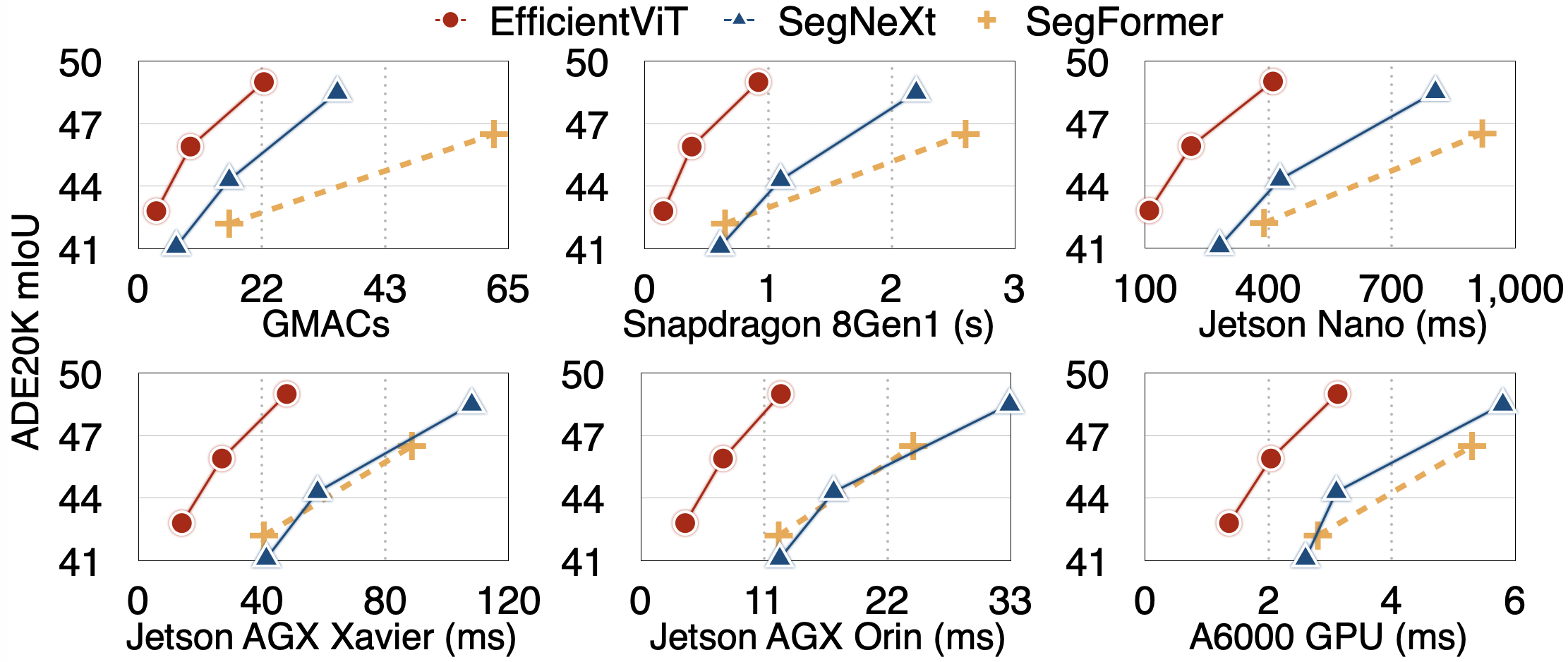

Here are comparisons with prior SOTA semantic segmentation models:

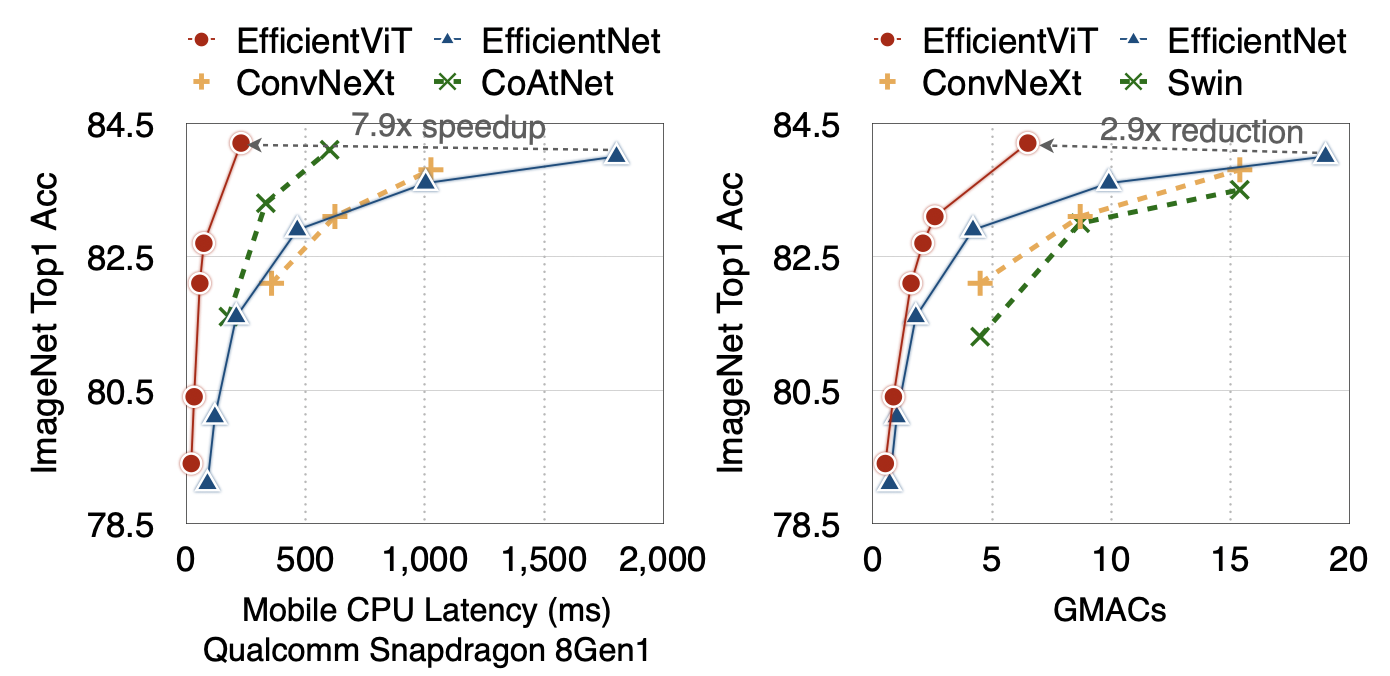

Here are the results of EfficientViT on image classification:

- [2023/07/18] EfficientViT is accepted by ICCV 2023.

    conda create -n efficientvit python=3.10
    conda activate efficientvit
    conda install -c conda-forge mpi4py openmpi
    pip install -r requirements.txt

- ImageNet: https://www.image-net.org/

- Cityscapes: https://www.cityscapes-dataset.com/

- ADE20K: https://groups.csail.mit.edu/vision/datasets/ADE20K/

Latency is measured on NVIDIA Jetson Nano and NVIDIA Jetson AGX Orin with TensorRT, fp16, batch size 1.

| Model | Resolution | ImageNet Top1 Acc | ImageNet Top5 Acc | Params | MACs | Jetson Nano | Jetson Orin | Checkpoint |
|---|---|---|---|---|---|---|---|---|
| EfficientViT-B1 | 224 | 79.4 | 94.3 | 9.1M | 0.52G | 24.8ms | 1.48ms | link |
| EfficientViT-B1 | 256 | 79.9 | 94.7 | 9.1M | 0.68G | 28.5ms | 1.57ms | link |
| EfficientViT-B1 | 288 | 80.4 | 95.0 | 9.1M | 0.86G | 34.5ms | 1.82ms | link |
| EfficientViT-B2 | 224 | 82.1 | 95.8 | 24M | 1.6G | 50.6ms | 2.63ms | link |
| EfficientViT-B2 | 256 | 82.7 | 96.1 | 24M | 2.1G | 58.5ms | 2.84ms | link |
| EfficientViT-B2 | 288 | 83.1 | 96.3 | 24M | 2.6G | 69.9ms | 3.30ms | link |
| EfficientViT-B3 | 224 | 83.5 | 96.4 | 49M | 4.0G | 101ms | 4.36ms | link |
| EfficientViT-B3 | 256 | 83.8 | 96.5 | 49M | 5.2G | 120ms | 4.74ms | link |
| EfficientViT-B3 | 288 | 84.2 | 96.7 | 49M | 6.5G | 141ms | 5.63ms | link |
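
The MACs column in the classification table is consistent with compute growing roughly in proportion to the number of input pixels. The following is a minimal sketch of that back-of-the-envelope estimate, not part of the EfficientViT codebase. The helper `estimate_macs_g` and the pixel-proportional scaling rule are assumptions made for illustration; only the 224-resolution MACs values come from the table.

```python
# Reference MACs (in G) at 224x224, copied from the classification table.
BASE_RESOLUTION = 224
BASE_MACS_G = {"b1": 0.52, "b2": 1.6, "b3": 4.0}


def estimate_macs_g(model: str, resolution: int) -> float:
    """Estimate MACs (G), assuming cost scales with the input pixel count.

    Hypothetical helper: the quadratic-in-side-length rule is an assumption
    that happens to match the table, not a formula from the authors.
    """
    scale = (resolution / BASE_RESOLUTION) ** 2
    return BASE_MACS_G[model] * scale


if __name__ == "__main__":
    for model in ("b1", "b2", "b3"):
        for resolution in (224, 256, 288):
            macs = estimate_macs_g(model, resolution)
            print(f"{model} @ {resolution}: ~{macs:.2f}G MACs")
```

For B1 the estimates at 256 and 288 round to the reported 0.68G and 0.86G. For B3 at 288 the estimate is about 6.6G against a reported 6.5G, which is within what rounding of the 224 baseline can explain.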

| Model | Resolution | Cityscapes mIoU | Params | MACs | Jetson Nano | Jetson Orin | Checkpoint |
|---|---|---|---|---|---|---|---|
| EfficientViT-B0 | 1024x2048 | 75.7 | 0.7M | 4.4G | 275ms | 9.9ms | link |
| EfficientViT-B1 | 1024x2048 | 80.5 | 4.8M | 25G | 819ms | 24.3ms | link |
| EfficientViT-B2 | 1024x2048 | 82.1 | 15M | 74G | 1676ms | 46.5ms | link |
| EfficientViT-B3 | 1024x2048 | 83.0 | 40M | 179G | 3192ms | 81.8ms | link |
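
Because latency is reported at batch size 1, each value converts directly into an approximate frame rate for sequential, one-image-at-a-time inference. The sketch below shows that conversion for the Jetson AGX Orin column of the Cityscapes table. The function name `latency_ms_to_fps` is hypothetical, and the result ignores pre- and post-processing time, so treat it as a rough upper bound rather than a measured throughput.

```python
def latency_ms_to_fps(latency_ms: float) -> float:
    """Convert a batch-size-1 latency in milliseconds to frames per second."""
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    return 1000.0 / latency_ms


# Jetson AGX Orin latencies (ms), copied from the Cityscapes table.
ORIN_LATENCY_MS = {"b0": 9.9, "b1": 24.3, "b2": 46.5, "b3": 81.8}

if __name__ == "__main__":
    for name, latency in ORIN_LATENCY_MS.items():
        print(f"EfficientViT-{name.upper()}: ~{latency_ms_to_fps(latency):.1f} FPS")
```

Under these assumptions, EfficientViT-B0 runs at roughly 101 FPS on Orin at 1024x2048, while EfficientViT-B3 runs at roughly 12 FPS.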

| Model | Resolution | ADE20K mIoU | Params | MACs | Jetson Nano | Jetson Orin | Checkpoint |
|---|---|---|---|---|---|---|---|
| EfficientViT-B1 | 512 | 42.8 | 4.8M | 3.1G | 110ms | 4.0ms | link |
| EfficientViT-B2 | 512 | 45.9 | 15M | 9.1G | 212ms | 7.3ms | link |
| EfficientViT-B3 | 512 | 49.0 | 39M | 22G | 411ms | 12.5ms | link |
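
The segmentation tables report mIoU (mean intersection over union). As a reminder of what the metric measures, here is a minimal pure-Python sketch over flattened label lists. It is not the evaluation code used by this repository: the function `mean_iou`, the `ignore_index=255` default, and the choice to skip classes that never appear are all assumptions for illustration. It returns a fraction, whereas the tables list percentages.

```python
from typing import Sequence


def mean_iou(
    pred: Sequence[int],
    target: Sequence[int],
    num_classes: int,
    ignore_index: int = 255,  # assumed "void" label; adjust to your dataset
) -> float:
    """Mean IoU over classes that occur in the prediction or the target."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")

    intersection = [0] * num_classes
    union = [0] * num_classes
    for p, t in zip(pred, target):
        if t == ignore_index:
            continue  # pixels without a valid label do not count
        if p == t:
            intersection[t] += 1
            union[t] += 1
        else:
            union[t] += 1  # missed pixel of class t
            if 0 <= p < num_classes:
                union[p] += 1  # false positive for class p

    ious = [i / u for i, u in zip(intersection, union) if u > 0]
    return sum(ious) / len(ious) if ious else 0.0


if __name__ == "__main__":
    pred = [0, 0, 1, 1, 2, 2]
    target = [0, 1, 1, 1, 2, 255]
    print(f"mIoU = {mean_iou(pred, target, num_classes=3):.4f}")
```

In practice the intersection and union counts are usually accumulated over the whole validation set before dividing, rather than averaged per image.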

    # ImageNet classification
    from efficientvit.cls_model_zoo import create_cls_model

    model = create_cls_model(
        name="b3",
        pretrained=True,
        weight_url="assets/checkpoints/cls/b3-r288.pt"
    )

<!-- -->

    # Cityscapes segmentation
    from efficientvit.seg_model_zoo import create_seg_model

    model = create_seg_model(
        name="b3",
        dataset="cityscapes",
        pretrained=True,
        weight_url="assets/checkpoints/seg/cityscapes/b3.pt"
    )

<!-- -->

    # ADE20K segmentation
    from efficientvit.seg_model_zoo import create_seg_model

    model = create_seg_model(
        name="b3",
        dataset="ade20k",
        pretrained=True,
        weight_url="assets/checkpoints/seg/ade20k/b3.pt"
    )

Please run eval_cls_model.py or eval_seg_model.py to evaluate our models.

Examples: classification, segmentation
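
For readers unfamiliar with the Top1 and Top5 columns reported for classification, the metric can be sketched in a few lines of plain Python. This is an illustrative assumption about how such a metric is typically computed, not the implementation inside eval_cls_model.py; the function `topk_accuracy` and the toy scores are hypothetical.

```python
from typing import Sequence


def topk_accuracy(
    scores: Sequence[Sequence[float]],
    labels: Sequence[int],
    k: int = 1,
) -> float:
    """Percentage of samples whose true label is among the k top-scoring classes."""
    if len(scores) != len(labels):
        raise ValueError("scores and labels must have the same length")
    if not labels:
        return 0.0

    correct = 0
    for row, label in zip(scores, labels):
        # Class indices sorted from highest to lowest score.
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        if label in ranked[:k]:
            correct += 1
    return 100.0 * correct / len(labels)


if __name__ == "__main__":
    # Three samples, three classes (toy values).
    scores = [[0.1, 0.7, 0.2], [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]]
    labels = [1, 1, 0]
    print(f"top-1: {topk_accuracy(scores, labels, k=1):.2f}%")
    print(f"top-2: {topk_accuracy(scores, labels, k=2):.2f}%")
```

With ImageNet's 1000 classes, `k=1` and `k=5` correspond to the two accuracy columns in the classification table.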

Please run eval_seg_model.py to visualize the outputs of our semantic segmentation models.

Example:

    python eval_seg_model.py --dataset cityscapes --crop_size 1024 --model b3 --save_path demo/cityscapes/b3/

To generate TFLite files, please refer to tflite_export.py. It requires the TinyNN package.

    pip install git+https://github.com/alibaba/TinyNeuralNetwork.git

Example:

    python tflite_export.py --export_path model.tflite --task seg --dataset ade20k --model b3 --resolution 512 512

To generate ONNX files, please refer to onnx_export.py.

Please see TRAINING.md for detailed training instructions.

Han Cai: hancai@mit.edu

- ImageNet Pretrained models

- Segmentation Pretrained models

- ImageNet training code

- Super resolution models

- Object detection models

- Segmentation training code

If EfficientViT is useful or relevant to your research, please kindly recognize our contributions by citing our paper:

    @article{cai2022efficientvit,
      title={Efficientvit: Enhanced linear attention for high-resolution low-computation visual recognition},
      author={Cai, Han and Gan, Chuang and Han, Song},
      journal={arXiv preprint arXiv:2205.14756},
      year={2022}
    }