This is homework III for the NCKU course WEB RESOURCE DISCOVERY AND EXPLOITATION. The goal is to build a crawler application that can crawl millions of webpages.

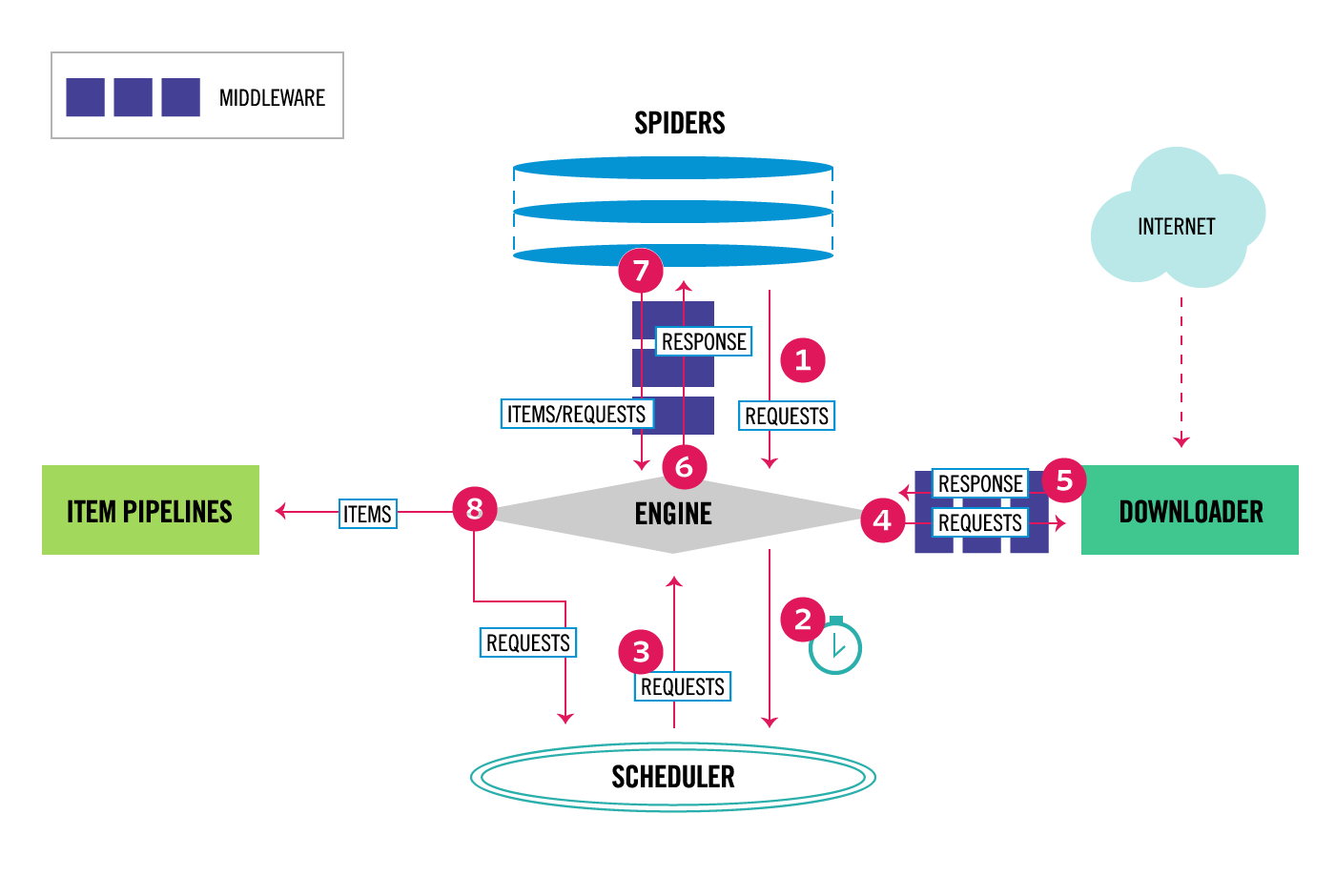

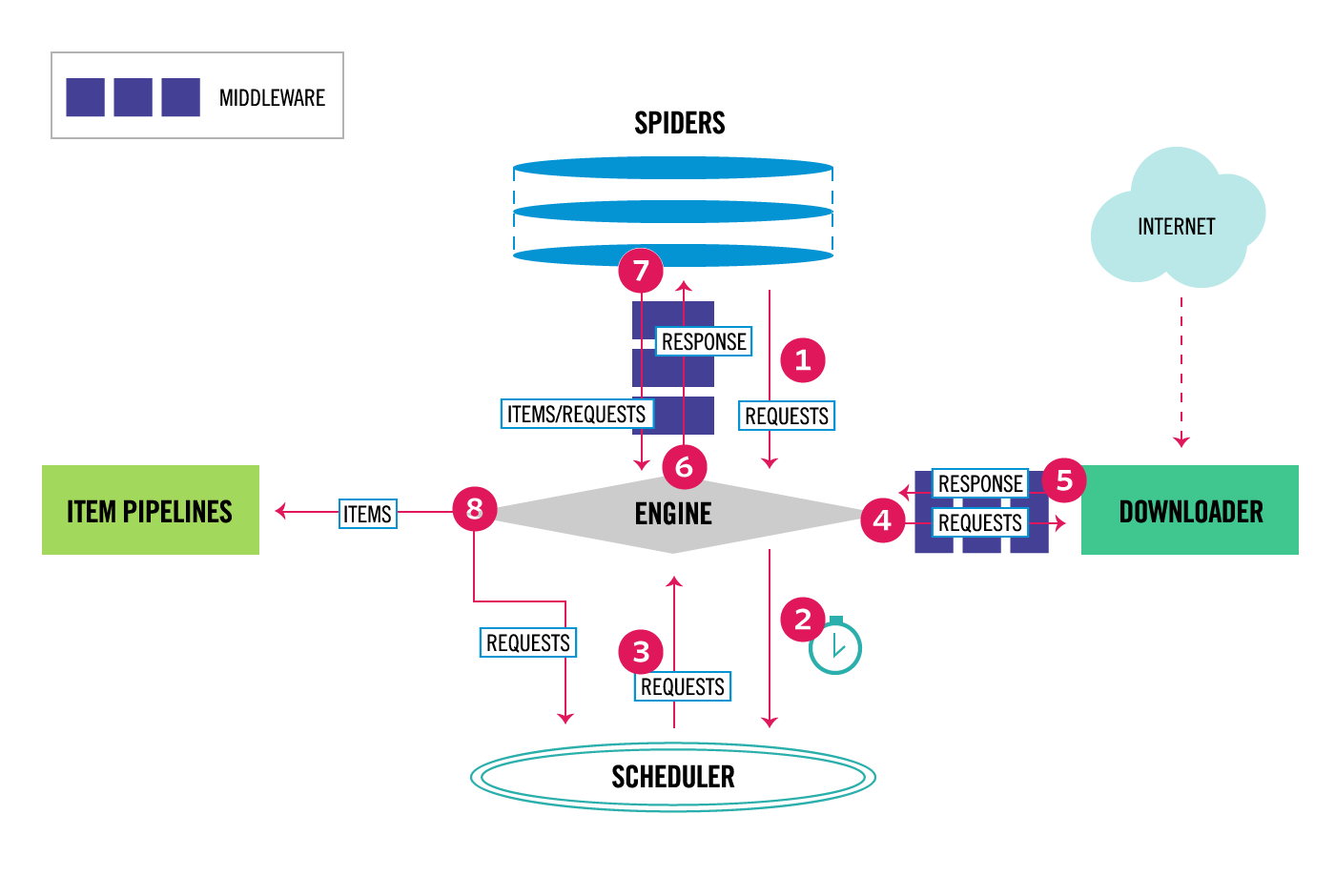

image source

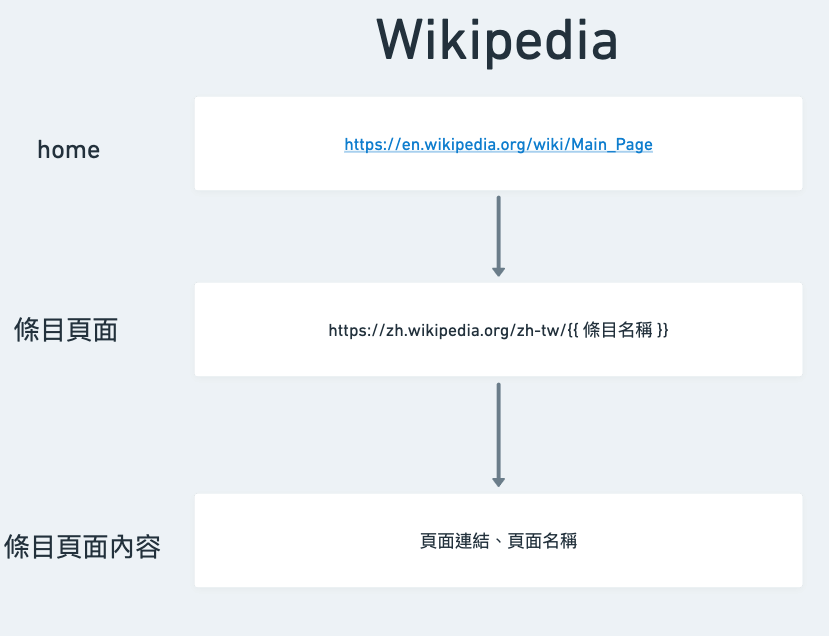

image source

Medium Article

- Crawl millions of webpages

- Remove non-HTML pages

- Performance optimization

- How many pages can be crawled per hour

- Total time to crawl millions of pages

- Skip robots.txt

```python
# edit settings.py
ROBOTSTXT_OBEY = False
```
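Setting `ROBOTSTXT_OBEY = False` makes Scrapy ignore a site's robots.txt rules. To show what those rules would otherwise block, the sketch below uses Python's standard-library `urllib.robotparser` as a stand-in for Scrapy's own robots.txt parser. The robots.txt content, the URLs, and the `allowed` helper are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def allowed(url: str, user_agent: str = "Scrapy") -> bool:
    """Return True if ROBOTS_TXT lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())  # parse rules from in-memory lines
    return parser.can_fetch(user_agent, url)


print(allowed("https://example.com/index.html"))      # True
print(allowed("https://example.com/private/a.html"))  # False
```

With `ROBOTSTXT_OBEY = True`, a request for the second URL would be dropped before download; with it set to `False`, both are fetched.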

- Use random user-agent

```shell
pip install fake-useragent
```

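```python
# edit middlewares.py
from fake_useragent import UserAgent
from scrapy.downloadermiddlewares.useragent import UserAgentMiddleware


class FakeUserAgentMiddleware(UserAgentMiddleware):
    """Give every outgoing request a randomly chosen User-Agent header."""

    def __init__(self, user_agent=''):
        self.user_agent = user_agent
        # Build the UserAgent object once instead of on every request.
        self.ua = UserAgent()

    def process_request(self, request, spider):
        request.headers['User-Agent'] = self.ua.random
```

```python
# edit settings.py
DOWNLOADER_MIDDLEWARES = {
    "millions_crawler.middlewares.FakeUserAgentMiddleware": 543,
}
```

Single spider, run on 2023/03/21: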

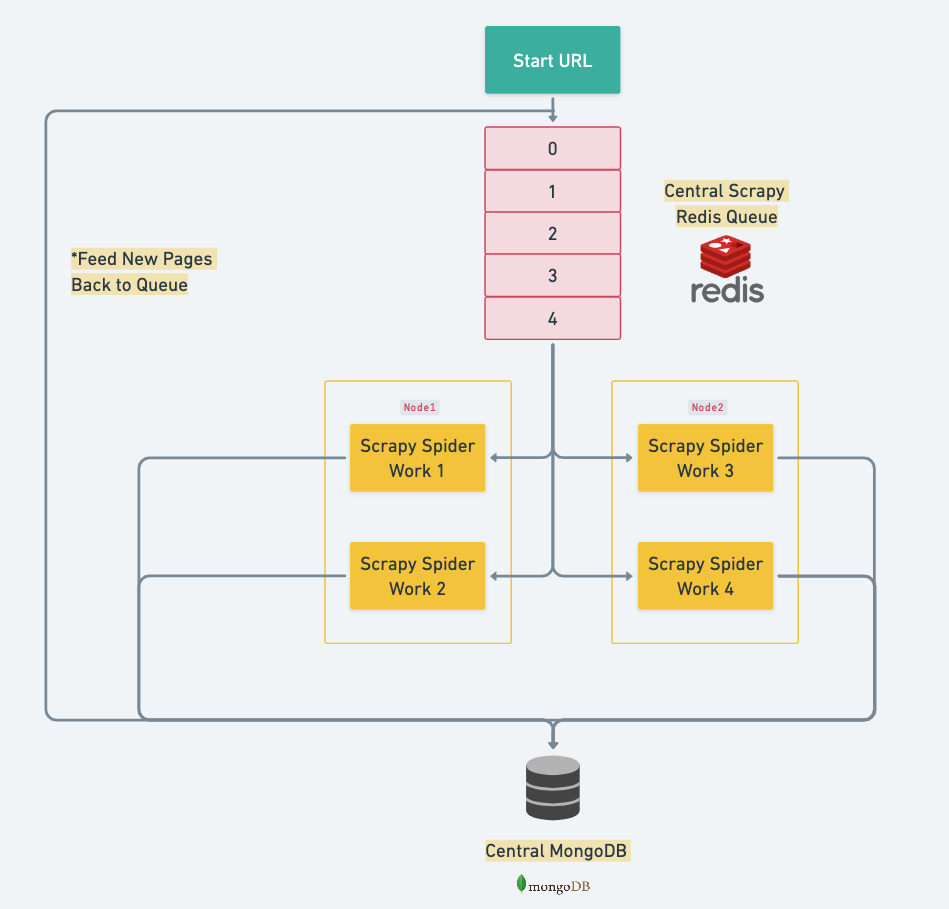

| Spider | Total Pages | Total Time (hrs) | Pages per Hour |
| ------ | ----------: | ---------------: | -------------: |
| tweh | 152,958 | 1.3 | 117,409 |
| w8h | 4,759 | 0.1 | 32,203 |
| wiki\* | 13,000,320 | 43 | 30,240 |
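The "pages per hour" and "total time" requirements come down to simple arithmetic. The sketch below assumes throughput is total pages divided by wall-clock hours; `pages_per_hour` and `hours_to_crawl` are hypothetical helper names. For the tweh row, 152,958 pages in 1.3 hours gives about 117,660 pages per hour, slightly above the reported 117,409, presumably because the run time in the table is rounded.

```python
def pages_per_hour(total_pages: int, hours: float) -> float:
    """Average crawl throughput over one run (hypothetical helper)."""
    return total_pages / hours


def hours_to_crawl(target_pages: int, rate_per_hour: float) -> float:
    """Projected wall-clock hours to reach target_pages at a constant rate."""
    return target_pages / rate_per_hour


# tweh row from the single-spider table
print(round(pages_per_hour(152_958, 1.3)))           # 117660
# projected time for one million pages at the reported tweh rate
print(round(hours_to_crawl(1_000_000, 117_409), 2))  # 8.52
```

The projection assumes the rate stays constant, while the table shows it varies widely between sites (30,240 pages per hour for wiki versus 117,409 for tweh).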

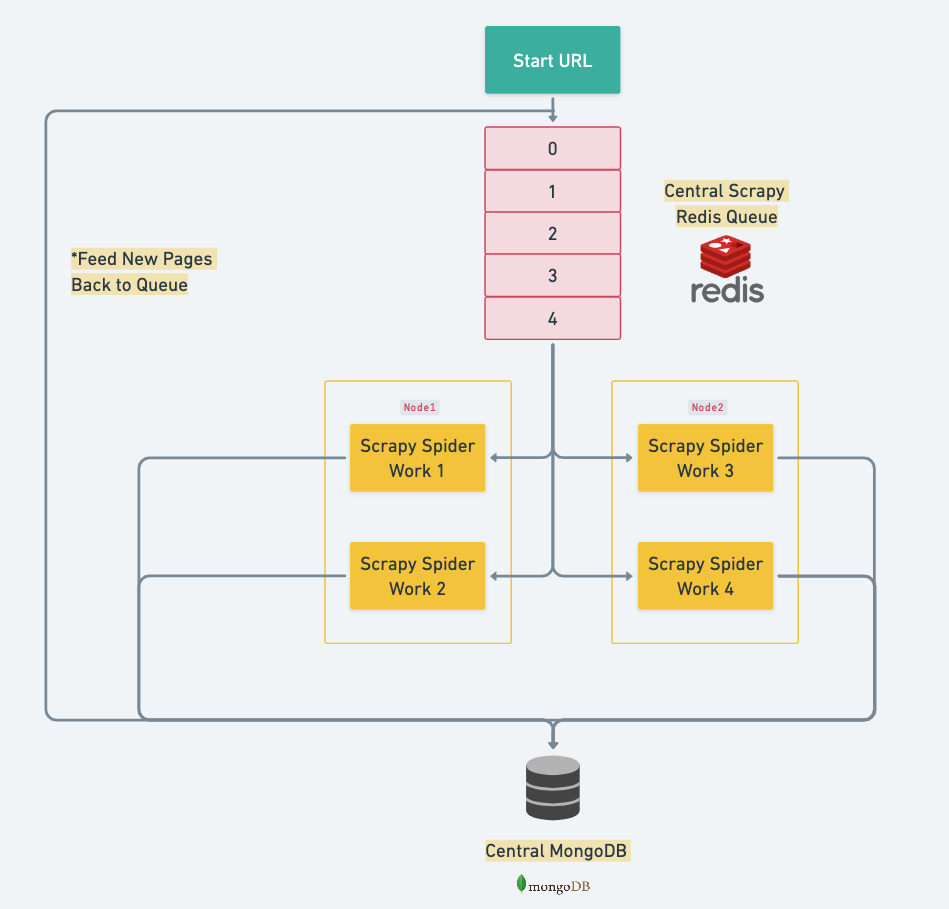

Distributed spiders (4 spiders), run on 2023/03/24:

| Spider | Total Pages | Total Time (hrs) | Pages per Hour |
| ------ | ----------: | ---------------: | -------------: |
| tweh | 153,288 | 0.52 | - |
| w8h | 4,921 | 0.16 | - |
| wiki\* | 4,731,249 | 43.2 | 109,492 |
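In the distributed run, 4 spiders share one crawl frontier so that a page found by one spider is not fetched again by another. Assuming a scrapy-redis style design (the Redis install step below suggests this, but the repository code is not shown here), the sketch below simulates the idea in pure Python: an in-memory `deque` and `set` stand in for the shared Redis queue and seen-set, and a SHA-1 hash of the URL stands in for Scrapy's request fingerprint. `SharedFrontier`, `crawl_round_robin`, and the toy link graph are hypothetical.

```python
import hashlib
from collections import deque


class SharedFrontier:
    """In-memory stand-in for a Redis-backed request queue plus seen-set."""

    def __init__(self):
        self.queue = deque()  # would be a shared Redis list in a real setup
        self.seen = set()     # would be a shared Redis set of fingerprints

    @staticmethod
    def fingerprint(url: str) -> str:
        return hashlib.sha1(url.encode("utf-8")).hexdigest()

    def push(self, url: str) -> bool:
        """Enqueue url unless any worker has already enqueued it."""
        fp = self.fingerprint(url)
        if fp in self.seen:
            return False
        self.seen.add(fp)
        self.queue.append(url)
        return True

    def pop(self):
        return self.queue.popleft() if self.queue else None


def crawl_round_robin(frontier, links, n_workers=4):
    """Simulate n_workers spiders taking turns pulling from one frontier.

    links maps a URL to the URLs found on that page (a fake web graph).
    Returns how many pages each worker fetched.
    """
    fetched = [0] * n_workers
    worker = 0
    while (url := frontier.pop()) is not None:
        fetched[worker] += 1
        for link in links.get(url, []):
            frontier.push(link)  # duplicates are filtered here
        worker = (worker + 1) % n_workers
    return fetched


links = {"/a": ["/b", "/c"], "/b": ["/a", "/c", "/d"], "/c": ["/d"], "/d": []}
frontier = SharedFrontier()
frontier.push("/a")
print(crawl_round_robin(frontier, links))  # [1, 1, 1, 1]
```

Each of the 4 pages is fetched exactly once even though `/a`, `/c`, and `/d` are linked from several pages.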

- Create a `.env` file

- Install Redis

```shell
sudo apt-get install redis-server
```

- Install MongoDB

```shell
sudo apt-get install mongodb
```

- Run Redis, e.g. `sudo service redis-server start`

- Run MongoDB

```shell
sudo service mongod start
```

- Install the Python dependencies and run a spider

```shell
cd millions-crawler
pip install -r requirements.txt
scrapy crawl <spider_name>  # <spider_name> is one of: tweh, w8h, wiki
```
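For the "Remove non-HTML pages" requirement, one common approach is to check each response's `Content-Type` header before parsing it. The helper below is a hypothetical sketch, not the repository's code; it accepts either a `str` or the `bytes` values that Scrapy stores in `response.headers`.

```python
from typing import Optional, Union


def is_html(content_type: Optional[Union[str, bytes]]) -> bool:
    """Return True if a Content-Type header value denotes an HTML page."""
    if not content_type:
        return False
    if isinstance(content_type, bytes):  # Scrapy header values are bytes
        content_type = content_type.decode("latin-1")
    # Drop parameters such as "; charset=utf-8" and compare the MIME type.
    mime = content_type.split(";", 1)[0].strip().lower()
    return mime in ("text/html", "application/xhtml+xml")


print(is_html(b"text/html; charset=utf-8"))  # True
print(is_html("application/pdf"))            # False
```

In a spider callback this could be used as `if not is_html(response.headers.get("Content-Type")): return`. Scrapy also builds an `HtmlResponse` for HTML content, so `isinstance(response, scrapy.http.HtmlResponse)` is an alternative check.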
