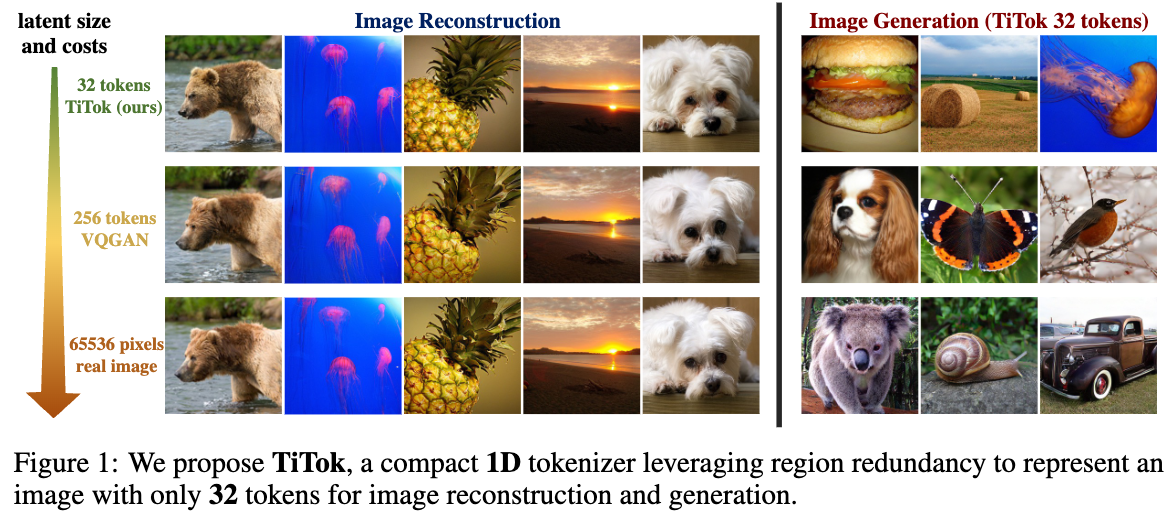

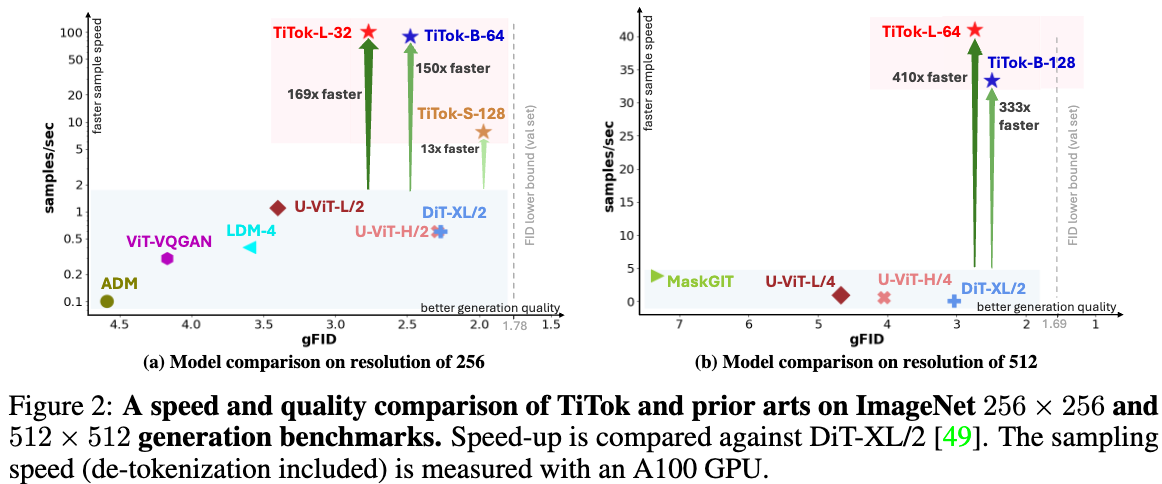

We present a compact 1D tokenizer which can represent an image with as few as 32 discrete tokens. As a result, it leads to a substantial speed-up on the sampling process (e.g., 410 × faster than DiT-XL/2) while obtaining a competitive generation quality.

We introduce a novel 1D image tokenization framework that breaks grid constraints existing in 2D tokenization methods, leading to a much more flexible and compact image latent representation.

The proposed 1D tokenizer can tokenize a 256 × 256 image into as few as 32 discrete tokens, leading to a signigicant speed-up (hundreds times faster than diffusion models) in generation process, while maintaining state-of-the-art generation quality.

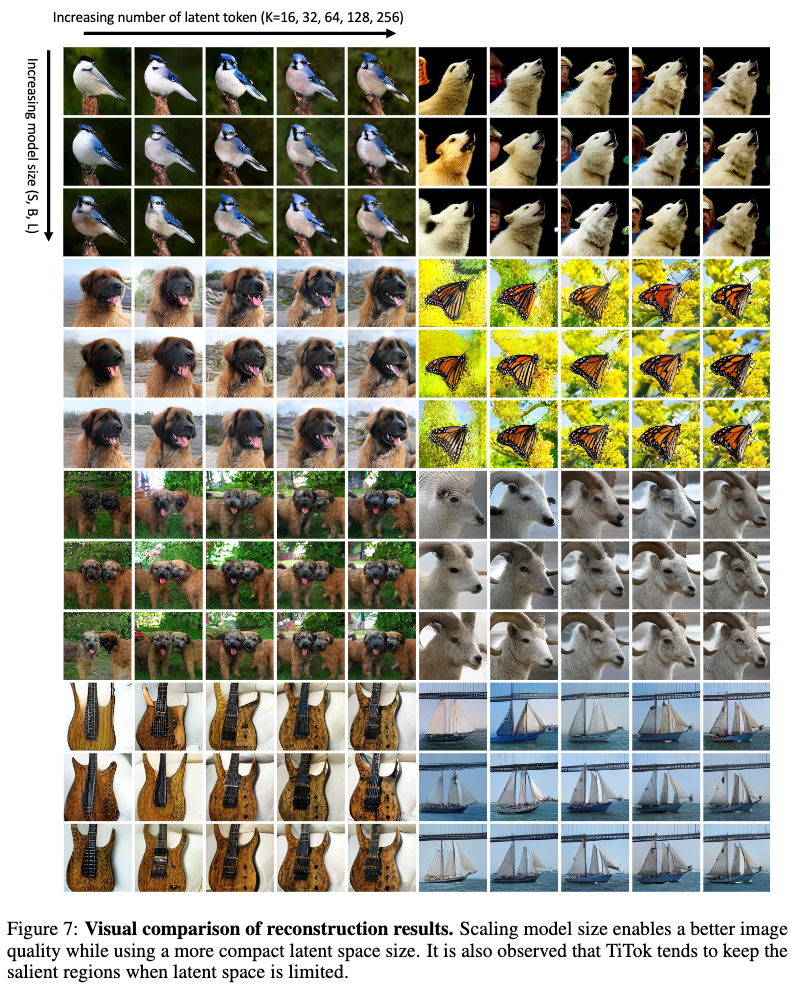

We conduct a series of experiments to probe the properties of rarely studied 1D image tokenization, paving the path towards compact latent space for efficient and effective image representation.

| Dataset | Model | Link | FID |

|---|---|---|---|

| ImageNet | TiTok-L-32 Tokenizer | checkpoint, hf model | 2.21 (reconstruction) |

| ImageNet | TiTok-B-64 Tokenizer | TODO | 1.70 (reconstruction) |

| ImageNet | TiTok-S-128 Tokenizer | TODO | 1.71 (reconstruction) |

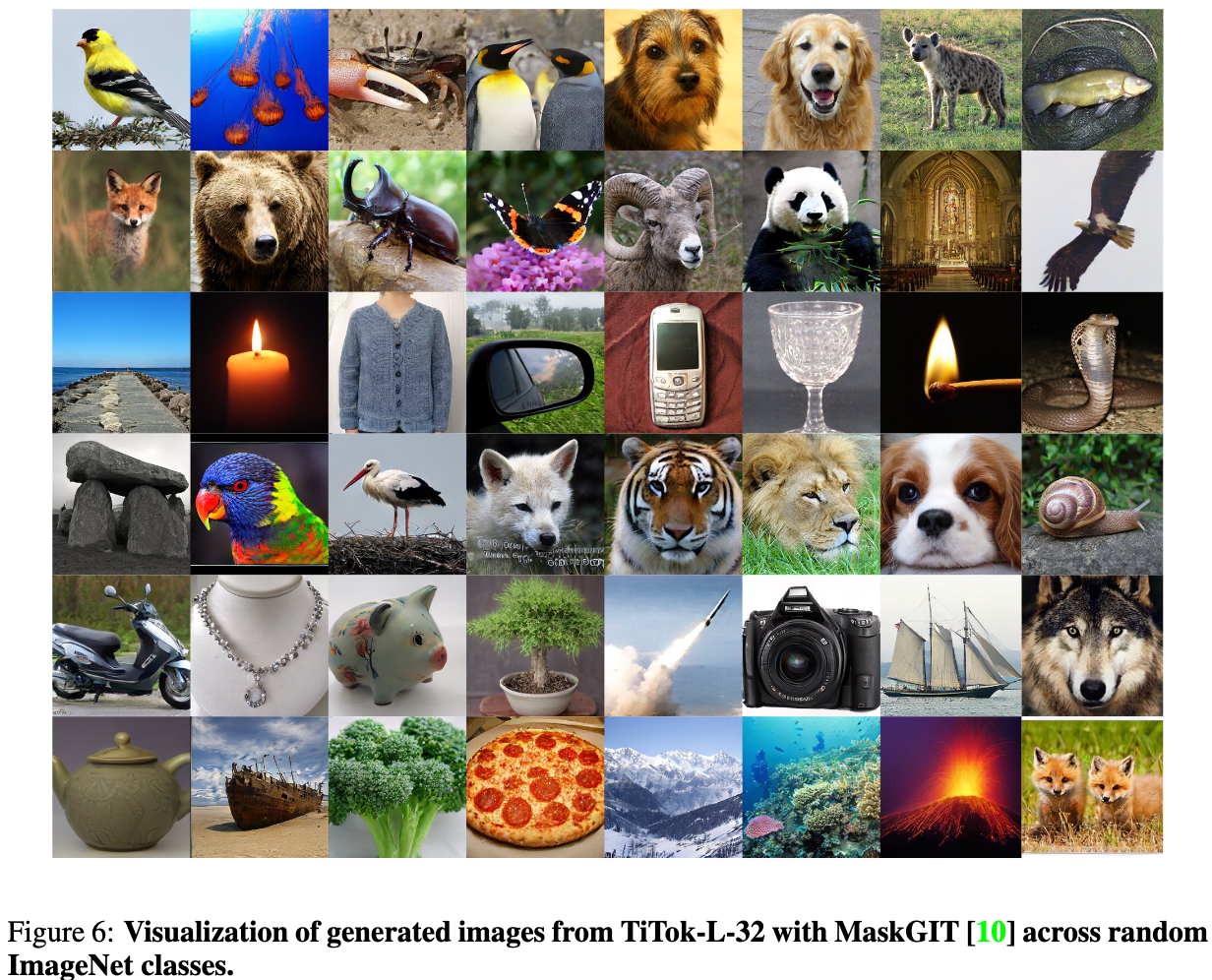

| ImageNet | TiTok-L-32 Generator | checkpoint, hf model | 2.77 (generation) |

| ImageNet | TiTok-B-64 Generator | TODO | 2.48 (generation) |

| ImageNet | TiTok-S-128 Generator | TODO | 1.97 (generation) |

Please note that these models are trained only on limited academic dataset ImageNet, and they are only for research purposes.

pip3 install -r requirements.txtWe provide a jupyter notebook for a quick tutorial on reconstructing and generating images with TiTok-L-32.

We also support TiTok with HuggingFace 🤗 Demo!

If you use our work in your research, please use the following BibTeX entry.

@article{yu2024an,

author = {Qihang Yu and Mark Weber and Xueqing Deng and Xiaohui Shen and Daniel Cremers and Liang-Chieh Chen},

title = {An Image is Worth 32 Tokens for Reconstruction and Generation},

journal = {arxiv: 2406.07550},

year = {2024}

}