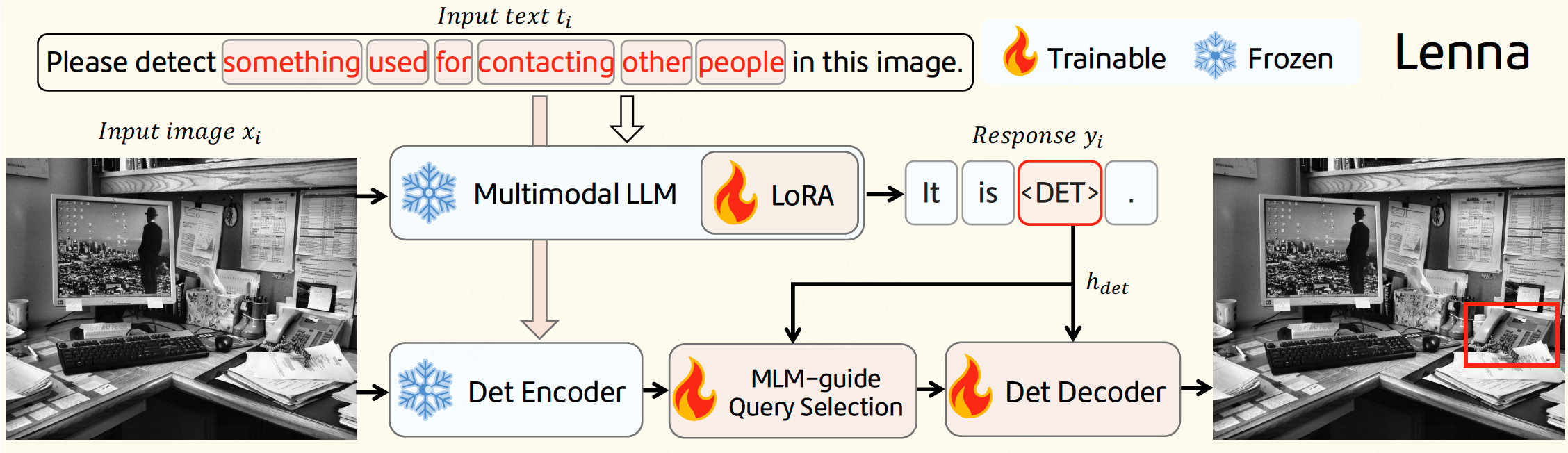

Lenna Architecture

1. Installation

- We use A100 GPUs for training and inference.

- Clone our repository and create a conda environment:

```bash
git clone https://github.com/Meituan-AutoML/Lenna.git
cd Lenna
conda create -n lenna python=3.10
conda activate lenna
pip install -r requirements.txt
```

- Follow mmdet/get_started to install the mmdetection series.
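Once the installation steps are done, a quick sanity check can confirm that the environment is usable before downloading the checkpoint. The snippet below is a minimal sketch, not part of the repository: the helper name `check_environment` is hypothetical, and the module list assumes that PyTorch is importable as `torch` and mmdetection as `mmdet`. Adjust both to match what `requirements.txt` actually installs.

```python
import importlib.util
import sys

# Assumption: PyTorch is importable as "torch" and mmdetection as "mmdet".
# Edit this tuple to reflect the packages your setup really needs.
ASSUMED_MODULES = ("torch", "mmdet")


def check_environment(modules=ASSUMED_MODULES, min_python=(3, 10)):
    """Return a dict reporting whether the interpreter and packages look ready.

    min_python defaults to 3.10 because the conda environment above is
    created with python=3.10; treating it as a minimum is an assumption.
    """
    report = {"python_ok": sys.version_info[:2] >= min_python}
    for name in modules:
        # find_spec returns None when a top-level module cannot be located.
        report[name] = importlib.util.find_spec(name) is not None
    return report


if __name__ == "__main__":
    for key, ok in check_environment().items():
        print(f"{key}: {'ok' if ok else 'missing'}")
```

Run it inside the activated `lenna` environment; any entry reported as `missing` points to an installation step that still needs attention.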

2. Prepare Lenna checkpoint

- Download the Lenna-7B checkpoint from HuggingFace.

4. Inference

- After preparing the checkpoint and conda environment, run the following script to perform single-image inference:

```bash
python chat.py \
    --ckpt-path path/to/Lenna-7B \
    --vis_save_path ./vis_output \
    --threshold 0.3
```

- When the model has finished loading, you will see the following prompt:

```
[Lenna] Please input your caption: {input your caption}
[Lenna] Input prompt: Please detect the {your caption} in this image.
[Lenna] Please input the image path: {input your image path}
```

- Replace `{input your caption}` with a description of the object you want to detect, and `{input your image path}` with the path to your image.
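For scripted use, the invocation and the prompt template shown above can be assembled programmatically. This is a minimal sketch under stated assumptions: `build_chat_command` and `build_prompt` are hypothetical helpers, not functions from the repository. The flags and the template text are copied from this README; stripping whitespace from the caption is an illustrative choice, and `chat.py` may format the caption differently.

```python
import shlex


def build_chat_command(ckpt_path, vis_save_path="./vis_output", threshold=0.3):
    """Assemble the chat.py invocation from this README as an argument list."""
    return [
        "python", "chat.py",
        "--ckpt-path", str(ckpt_path),
        "--vis_save_path", str(vis_save_path),
        "--threshold", str(threshold),
    ]


def build_prompt(caption):
    """Fill a caption into the prompt template that chat.py prints.

    Stripping surrounding whitespace is an assumption made for this sketch.
    """
    return f"Please detect the {caption.strip()} in this image."


if __name__ == "__main__":
    # shlex.join quotes each argument so the line can be pasted into a shell.
    print(shlex.join(build_chat_command("path/to/Lenna-7B")))
    print(build_prompt("dog on the left"))
```

Keeping the command as a list rather than a single string avoids quoting problems when the checkpoint or output path contains spaces, and the list can be passed directly to `subprocess.run`.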

- 2023-12-28 Inference code and the Lenna-7B model are released.

- 2023-12-05 Paper is released on arXiv.

```bibtex
@article{wei2023lenna,
  title={Lenna: Language enhanced reasoning detection assistant},
  author={Wei, Fei and Zhang, Xinyu and Zhang, Ailing and Zhang, Bo and Chu, Xiangxiang},
  journal={arXiv preprint arXiv:2312.02433},
  year={2023}
}
```

This repo benefits from LISA, GroundingDINO, LLaVA and Vicuna.

This repository is released under the Apache 2.0 license as found in the LICENSE file.