This repository contains the code for replicating the results of our paper:

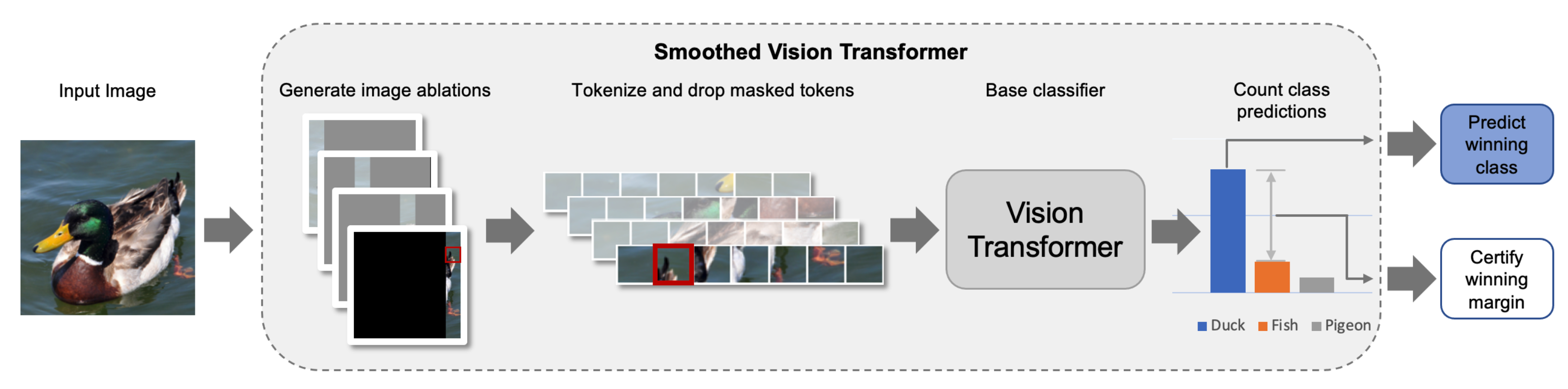

Certified Patch Robustness via Smoothed Vision Transformers

Hadi Salman*, Saachi Jain*, Eric Wong*, Aleksander Madry

Paper

Blog post Part I.

Blog post Part II.

    @article{salman2021certified,
      title={Certified Patch Robustness via Smoothed Vision Transformers},
      author={Hadi Salman and Saachi Jain and Eric Wong and Aleksander Madry},
      booktitle={ArXiv preprint arXiv:2110.07719},
      year={2021}
    }

Our code relies on the MadryLab public robustness library, which will be automatically installed when you follow the instructions below.

- Clone our repo:

      git clone https://github.mit.edu/hady/smoothed-vit

- Install dependencies:

      conda create -n smoothvit python=3.8
      conda activate smoothvit
      pip install -r requirements.txt

Now, we will walk you through the steps to create a smoothed ViT on the CIFAR-10 dataset. Similar steps can be followed for other datasets.

The entry point of our code is main.py (see the file for a full description of arguments).

First, we will train the base classifier with ablations as data augmentation. Then we will apply derandomized smoothing to build a smoothed version of the model that is certifiably robust.
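To make the idea of an ablation concrete, here is a minimal NumPy sketch of a column ablation: it keeps one vertical band of the image and masks everything else. This is an illustration only, not the code in this repository. The function name `column_ablate`, the zero mask value, and the wrap-around at the right edge are assumptions made for the example.

```python
import numpy as np


def column_ablate(image, start, width):
    """Keep a vertical band of `width` columns beginning at `start`
    and zero out the rest of the image.

    Hypothetical helper for illustration: the zero mask value and the
    wrap-around at the image border are assumptions, not repo behavior.
    """
    h, w, c = image.shape
    # Column indices of the band, wrapping past the right edge.
    cols = (start + np.arange(width)) % w
    ablated = np.zeros_like(image)
    ablated[:, cols, :] = image[:, cols, :]
    return ablated, cols


rng = np.random.default_rng(0)
image = rng.random((32, 32, 3))  # a CIFAR-sized dummy image (H, W, C)
ablated, cols = column_ablate(image, start=30, width=4)
print(cols.tolist())  # [30, 31, 0, 1]
```

During training, an ablation like this would be applied to each input as a form of data augmentation, so that the base classifier learns to predict from a narrow band alone.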

The first step is to train the base classifier (here a ViT-Tiny) with ablations.

    python src/main.py \
        --dataset cifar10 \
        --data /tmp \
        --arch deit_tiny_patch16_224 \
        --pytorch-pretrained \
        --out-dir OUTDIR \
        --exp-name demo \
        --epochs 30 \
        --lr 0.01 \
        --step-lr 10 \
        --batch-size 128 \
        --weight-decay 5e-4 \
        --adv-train 0 \
        --freeze-level -1 \
        --drop-tokens \
        --cifar-preprocess-type simple224 \
        --ablate-input \
        --ablation-type col \
        --ablation-size 4

Once training is done, the model is saved in OUTDIR/demo/.

Now we are ready to apply derandomized smoothing to obtain certificates for each datapoint against adversarial patches. To do so, simply run:

    python src/main.py \
        --dataset cifar10 \
        --data /tmp \
        --arch deit_tiny_patch16_224 \
        --out-dir OUTDIR \
        --exp-name demo \
        --batch-size 128 \
        --adv-train 0 \
        --freeze-level -1 \
        --drop-tokens \
        --cifar-preprocess-type simple224 \
        --resume \
        --eval-only 1 \
        --certify \
        --certify-out-dir OUTDIR_CERT \
        --certify-mode col \
        --certify-ablation-size 4 \
        --certify-patch-size 5

This will calculate the standard and certified accuracies of the smoothed model. The results will be dumped into OUTDIR_CERT/demo/.
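For intuition about how a certificate can follow from the per-ablation predictions, here is a small sketch of the voting rule commonly used in derandomized smoothing with column ablations. It is not the certification code in this repository. The function name `certify_from_votes` is hypothetical, and the sketch uses a simple strict-margin condition that ignores tie-breaking between classes. The key fact it relies on is that a patch of width `m` can overlap at most `m + b - 1` column bands of width `b`.

```python
import numpy as np


def certify_from_votes(votes, num_classes, ablation_size, patch_size):
    """Return (predicted_class, is_certified) for one input.

    votes: 1-D integer array holding the base classifier's predicted
    class for each column ablation of the input.

    Hypothetical helper for illustration; the strict-margin rule below
    is a simplification that ignores tie-breaking.
    """
    counts = np.bincount(votes, minlength=num_classes)
    # Maximum number of ablations a single patch can intersect.
    delta = patch_size + ablation_size - 1
    # Sort classes by vote count, largest first (stable, so ties go
    # to the lower class index).
    order = np.argsort(-counts, kind="stable")
    top, runner_up = order[0], order[1]
    margin = counts[top] - counts[runner_up]
    # The patch can flip at most `delta` votes away from the top class
    # and toward another class, changing the margin by at most 2 * delta.
    certified = margin > 2 * delta
    return int(top), bool(certified)


# 32 column ablations: 28 vote for class 3, 4 vote for class 5.
votes = np.array([3] * 28 + [5] * 4)
print(certify_from_votes(votes, num_classes=10, ablation_size=4, patch_size=5))
# (3, True)
```

With an ablation size of 4 and a patch size of 5, as in the command above, `delta` is 8, so the top class needs a margin of more than 16 votes. Under this rule, standard accuracy counts the inputs whose predicted class is correct, and certified accuracy counts those that are both correct and certified.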

That's it! Now you can replicate all the results of our paper.

If you find our pretrained models useful, please consider citing our work.

| Model | Ablation Size = 19 |
|---|---|
| ResNet-18 | LINK |
| ResNet-50 | LINK |
| WRN-101-2 | LINK |
| ViT-T | LINK |
| ViT-S | LINK |
| ViT-B | LINK |

| Model | Ablation Size = 75 |
|---|---|
| ViT-T | LINK |
| ViT-S | LINK |
| ViT-B | LINK |

We have uploaded the most important models. If you need any other model (for example, from the sweeps), please let us know and we will be happy to provide it!