Scalable and Transferable Medical Image Segmentation Models Empowered by Large-scale Supervised Pre-training

Our models are built on nnUNetV1, so your environment must first meet the requirements of nnUNet. Then copy the following files from this repo into your nnUNet repository:

copy /network_training/* nnunet/training/network_training/

copy /network_architecture/* nnunet/network_architecture/

copy run_finetuning.py nnunet/run/
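The three copy steps above can also be scripted. The following is a minimal sketch, not part of this repo: the function name `copy_stunet_files` and both path arguments are hypothetical, and it assumes the source folders sit at the root of the STU-Net checkout and that `nnunet_pkg` points at the `nnunet/` package directory of an nnUNetV1 checkout.

```python
import shutil
from pathlib import Path
from typing import List


def copy_stunet_files(stunet_repo: Path, nnunet_pkg: Path) -> List[Path]:
    """Copy the STU-Net files into an nnUNet v1 package and return the written paths.

    Both arguments are assumptions of this sketch:
      stunet_repo -- root of the STU-Net checkout
      nnunet_pkg  -- the `nnunet/` package directory of the nnUNet checkout
    """
    copied = []

    # (folder in this repo, destination folder inside nnunet/)
    dir_pairs = [
        ("network_training", "training/network_training"),
        ("network_architecture", "network_architecture"),
    ]
    for src_rel, dst_rel in dir_pairs:
        dst_dir = nnunet_pkg / dst_rel
        dst_dir.mkdir(parents=True, exist_ok=True)
        # Copy regular files only; subdirectories are skipped.
        for src in sorted((stunet_repo / src_rel).iterdir()):
            if src.is_file():
                copied.append(Path(shutil.copy2(src, dst_dir / src.name)))

    # The fine-tuning entry point goes into nnunet/run/.
    run_dir = nnunet_pkg / "run"
    run_dir.mkdir(parents=True, exist_ok=True)
    copied.append(
        Path(shutil.copy2(stunet_repo / "run_finetuning.py", run_dir / "run_finetuning.py"))
    )
    return copied


# Example call (paths are placeholders, adjust to your setup):
# copy_stunet_files(Path("STU-Net"), Path("nnUNet/nnunet"))
```

Files with the same name in the destination are overwritten, so it may be worth checking the returned list against your nnUNet working tree before committing anything.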

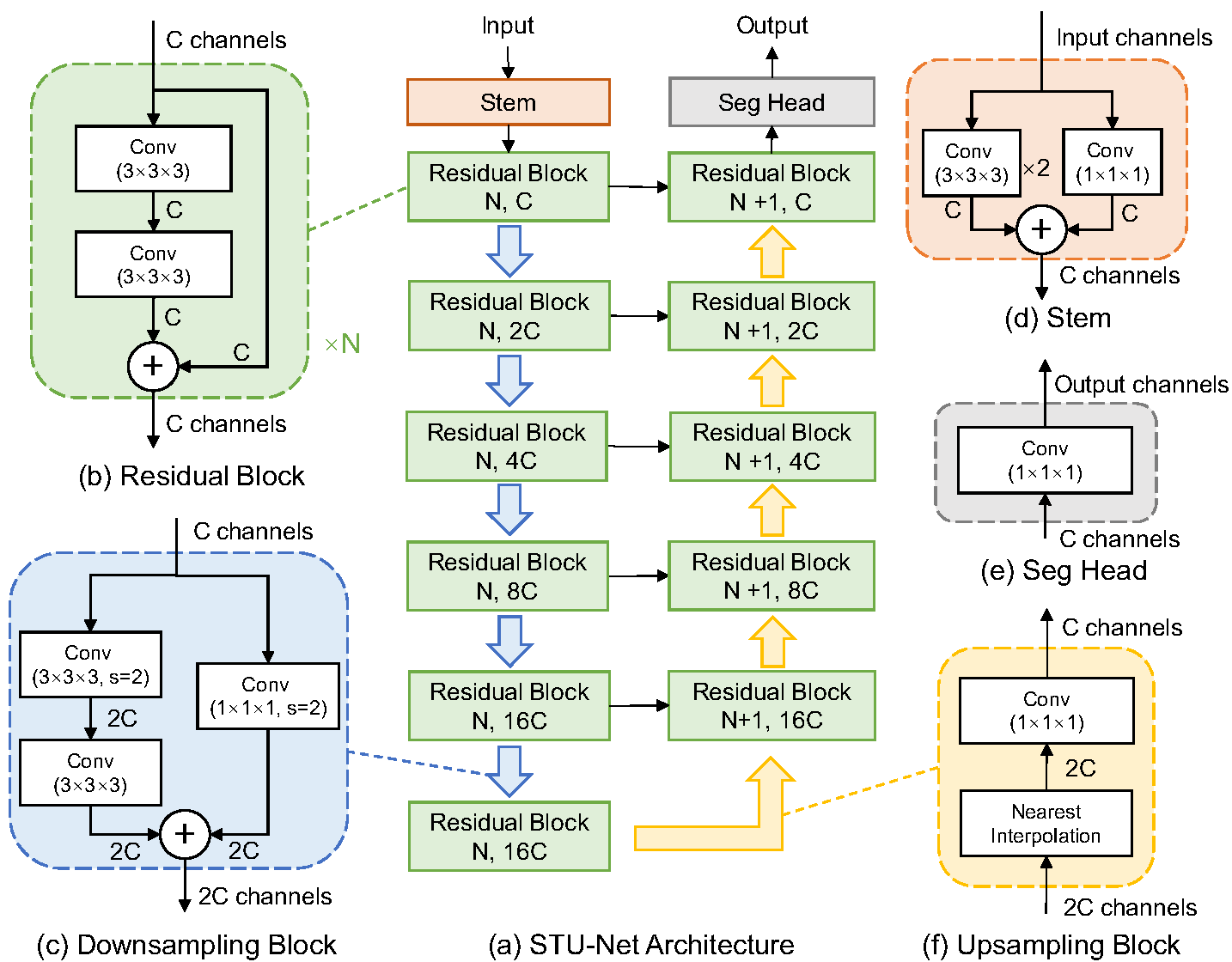

| name | crop size | #params | FLOPs | model |
|---|---|---|---|---|
| STU-Net-S | 128x128x128 | 14.6M | 0.13T | model |
| STU-Net-B | 128x128x128 | 58.26M | 0.51T | model |
| STU-Net-L | 128x128x128 | 440.30M | 3.81T | model |
| STU-Net-H | 128x128x128 | 1457.33M | 12.60T | model |

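To fine-tune on a downstream task, run the following command (the base model is used as an example), where TASKID is the downstream task ID, FOLD is the cross-validation fold, and MODEL is the path to the pre-trained weights: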

python run_finetuning.py 3d_fullres STUNetTrainer_base_ft TASKID FOLD -pretrained_weights MODEL
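When several folds need to be fine-tuned, the command above can be assembled programmatically. The sketch below only builds the argument lists and does not launch anything; the helper name `build_finetune_commands`, the example task ID, and the weights path are hypothetical, and it assumes the working directory is the one containing `run_finetuning.py`.

```python
from typing import List, Sequence


def build_finetune_commands(
    task_id: str,
    weights: str,
    folds: Sequence[int] = (0,),
    trainer: str = "STUNetTrainer_base_ft",
    network: str = "3d_fullres",
) -> List[List[str]]:
    """Return one argv list per fold, mirroring the fine-tuning command above."""
    return [
        [
            "python", "run_finetuning.py",
            network, trainer, str(task_id), str(fold),
            "-pretrained_weights", weights,
        ]
        for fold in folds
    ]


# Example: print the commands for folds 0 and 1 (task ID and path are placeholders).
for cmd in build_finetune_commands("TASKID", "MODEL", folds=(0, 1)):
    print(" ".join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` to start the run; keeping construction and execution separate makes it easy to inspect the commands first.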

Note that the learning rate may need to be adjusted for the specific downstream task. To do so, modify the learning rate in the corresponding Trainer (e.g., STUNetTrainer_base_ft).