This codebase provides the official PyTorch implementation of our NeurIPS 2023 paper:

FreeMask: Synthetic Images with Dense Annotations Make Stronger Segmentation Models

Lihe Yang, Xiaogang Xu, Bingyi Kang, Yinghuan Shi, Hengshuang Zhao

In Conference on Neural Information Processing Systems (NeurIPS), 2023


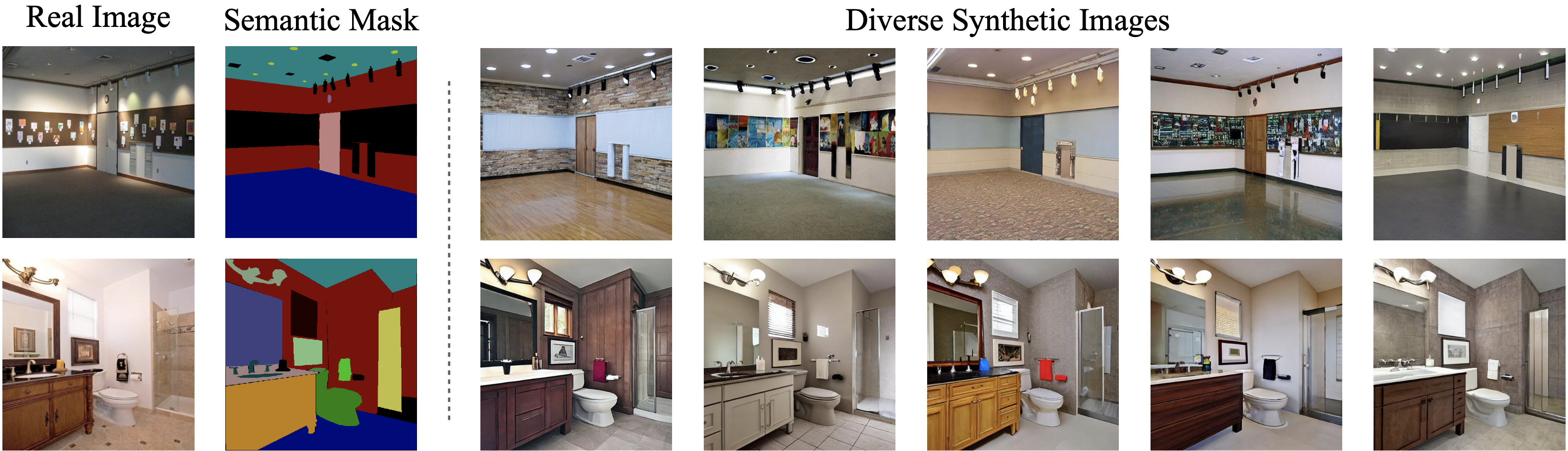

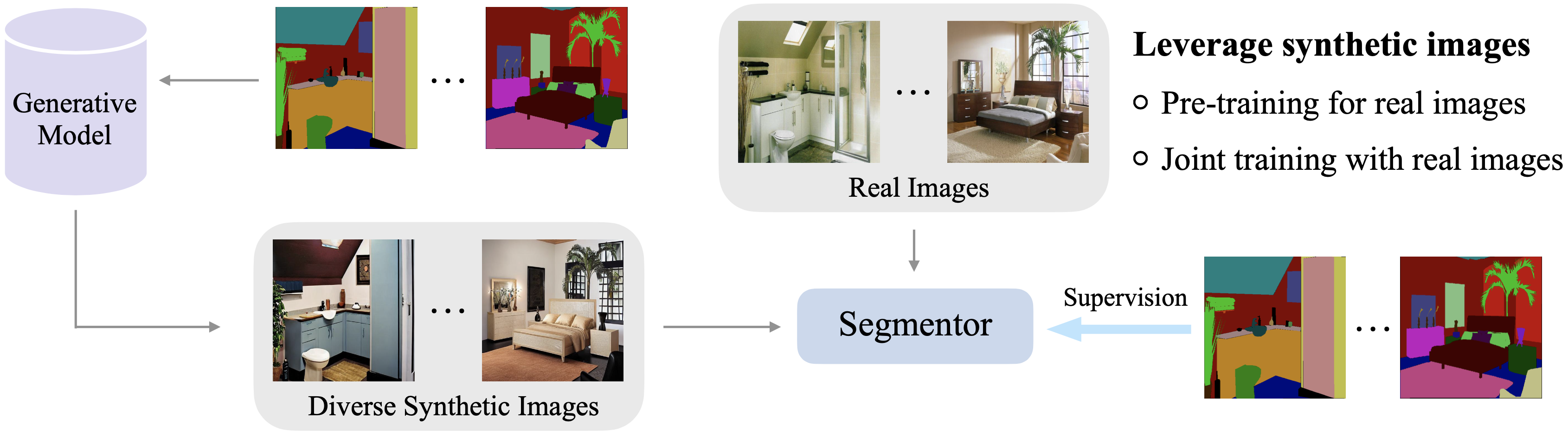

We generate diverse synthetic images from semantic masks and use the resulting synthetic image-mask pairs to boost fully-supervised semantic segmentation performance.

ADE20K results (mIoU):

| Model | Backbone | Real Images | + Synthetic Images | Gain | Download |
|---|---|---|---|---|---|
| Mask2Former | Swin-T | 48.7 | 52.0 | +3.3 | ckpt \| log |
| Mask2Former | Swin-S | 51.6 | 53.3 | +1.7 | ckpt \| log |
| Mask2Former | Swin-B | 52.4 | 53.7 | +1.3 | ckpt \| log |
| SegFormer | MiT-B2 | 45.6 | 47.9 | +2.3 | ckpt \| log |
| SegFormer | MiT-B4 | 48.5 | 50.6 | +2.1 | ckpt \| log |
| Segmenter | ViT-S | 46.2 | 47.9 | +1.7 | ckpt \| log |
| Segmenter | ViT-B | 49.6 | 51.1 | +1.5 | ckpt \| log |

COCO-Stuff-164K results (mIoU):

| Model | Backbone | Real Images | + Synthetic Images | Gain | Download |
|---|---|---|---|---|---|
| Mask2Former | Swin-T | 44.5 | 46.4 | +1.9 | ckpt \| log |
| Mask2Former | Swin-S | 46.8 | 47.6 | +0.8 | ckpt \| log |
| SegFormer | MiT-B2 | 43.5 | 44.2 | +0.7 | ckpt \| log |
| SegFormer | MiT-B4 | 45.8 | 46.6 | +0.8 | ckpt \| log |
| Segmenter | ViT-S | 43.5 | 44.8 | +1.3 | ckpt \| log |
| Segmenter | ViT-B | 46.0 | 47.5 | +1.5 | ckpt \| log |
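As a quick sanity check on the tables, each Gain entry is the "+ Synthetic Images" score minus the "Real Images" score. The sketch below recomputes that column from values copied out of the two tables (first table ADE20K, second COCO-Stuff-164K) and also prints a per-dataset mean gain. The mean is our own derived summary for illustration, not a figure reported by the authors, and the variable and function names are hypothetical.

```python
from decimal import Decimal

# (model, backbone, real-only score, real + synthetic score), copied from the tables above.
ADE20K = [
    ("Mask2Former", "Swin-T", "48.7", "52.0"),
    ("Mask2Former", "Swin-S", "51.6", "53.3"),
    ("Mask2Former", "Swin-B", "52.4", "53.7"),
    ("SegFormer", "MiT-B2", "45.6", "47.9"),
    ("SegFormer", "MiT-B4", "48.5", "50.6"),
    ("Segmenter", "ViT-S", "46.2", "47.9"),
    ("Segmenter", "ViT-B", "49.6", "51.1"),
]
COCO_STUFF_164K = [
    ("Mask2Former", "Swin-T", "44.5", "46.4"),
    ("Mask2Former", "Swin-S", "46.8", "47.6"),
    ("SegFormer", "MiT-B2", "43.5", "44.2"),
    ("SegFormer", "MiT-B4", "45.8", "46.6"),
    ("Segmenter", "ViT-S", "43.5", "44.8"),
    ("Segmenter", "ViT-B", "46.0", "47.5"),
]


def gains(rows):
    """Per-row gain. Decimal keeps results exact (binary floats give 52.0 - 48.7 != 3.3)."""
    return [Decimal(syn) - Decimal(real) for _, _, real, syn in rows]


def mean_gain(rows):
    """Average gain over all rows, rounded to two decimal places."""
    g = gains(rows)
    return (sum(g) / len(g)).quantize(Decimal("0.01"))


for name, rows in (("ADE20K", ADE20K), ("COCO-Stuff-164K", COCO_STUFF_164K)):
    print(name, [f"+{g}" for g in gains(rows)], "mean gain:", mean_gain(rows))
```

Using `Decimal` on the string values, rather than floats, is a deliberate choice so that the recomputed gains match the one-decimal entries in the tables exactly.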

We share our processed synthetic ADE20K and COCO-Stuff-164K datasets. ADE20K-Synthetic is 20× larger than its real counterpart, and COCO-Synthetic is 6× larger than its real counterpart.

Follow the download instructions to obtain them. Note that the COCO annotations must additionally be pre-processed as described in those instructions.
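To get a rough sense of scale (for example, when planning disk space), the size multipliers above can be applied to the official real training splits: 20,210 images for ADE20K and 118,287 for COCO-Stuff-164K. This is a back-of-the-envelope sketch that assumes the "20×" and "6×" figures refer to image counts of the training split; since the multipliers are rounded, the results are approximations, not the exact sizes of the released datasets. The names below are hypothetical.

```python
# Official training-split image counts of the real datasets.
REAL_TRAIN_IMAGES = {"ADE20K": 20_210, "COCO-Stuff-164K": 118_287}
# Synthetic-to-real size multipliers as stated in this README (rounded).
SYNTHETIC_FACTOR = {"ADE20K": 20, "COCO-Stuff-164K": 6}


def approx_synthetic_images(dataset: str) -> int:
    """Rough synthetic image count, assuming the factor applies to the training split."""
    return REAL_TRAIN_IMAGES[dataset] * SYNTHETIC_FACTOR[dataset]


for name in REAL_TRAIN_IMAGES:
    print(f"{name}: ~{approx_synthetic_images(name):,} synthetic images")
```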

Install MMSegmentation:

```bash
pip install -U openmim
mim install mmengine
mim install "mmcv>=2.0.0"
pip install "mmsegmentation>=1.0.0"
pip install "mmdet>=3.0.0rc4"
pip install ftfy
```

Note:

- Please modify the dataset paths `data_root` and `data_root_syn` in the config files.
- If you use SegFormer, please convert the pre-trained MiT backbones following the conversion instructions and put them under the `pretrain` directory.
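Editing the two paths by hand is the simplest route. If you need to repoint many configs at once, the following is a minimal sketch of a hypothetical helper (`update_data_roots` and `update_config_file` are our own names, not part of this repository or of MMSegmentation). It assumes `data_root` and `data_root_syn` are each assigned a quoted string literal at the start of a line, e.g. `data_root = 'data/ade'`; configs that set these paths differently (for instance in an inherited base file) will not be matched, so check the returned counts.

```python
import re
from pathlib import Path


def update_data_roots(config_text: str, real_root: str, syn_root: str) -> tuple[str, dict[str, int]]:
    """Rewrite top-level `data_root` / `data_root_syn` string assignments.

    Returns the updated text and the number of assignments rewritten per name.
    """
    counts = {}
    for name, value in (("data_root", real_root), ("data_root_syn", syn_root)):
        # `^data_root\s*=` cannot match `data_root_syn = ...`, because the
        # character right after the name must be whitespace or '='.
        pattern = rf"^{re.escape(name)}\s*=\s*(['\"]).*?\1"
        config_text, counts[name] = re.subn(
            pattern,
            # repr() emits a valid, properly escaped Python string literal.
            lambda _m, n=name, v=value: f"{n} = {v!r}",
            config_text,
            flags=re.MULTILINE,
        )
    return config_text, counts


def update_config_file(path: str, real_root: str, syn_root: str) -> dict[str, int]:
    """Apply update_data_roots to a config file in place."""
    p = Path(path)
    new_text, counts = update_data_roots(p.read_text(), real_root, syn_root)
    p.write_text(new_text)
    return counts
```

For example, `update_config_file("<config>", "/path/to/real/dataset", "/path/to/synthetic/dataset")` should return `{"data_root": 1, "data_root_syn": 1}` when both assignments are found; a count of `0` means that variable is defined elsewhere and must be edited manually.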

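To train a model, run the command below, where `<config>` is the path to a config file and the final argument (`8`) is the number of GPUs:

```bash
bash dist_train.sh <config> 8
```

We thank FreestyleNet for providing their mask-to-image synthesis models.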

If you find this project useful, please consider citing:

```bibtex
@inproceedings{freemask,
  title={FreeMask: Synthetic Images with Dense Annotations Make Stronger Segmentation Models},
  author={Yang, Lihe and Xu, Xiaogang and Kang, Bingyi and Shi, Yinghuan and Zhao, Hengshuang},
  booktitle={NeurIPS},
  year={2023}
}
```