Authors: Yihao Liu*, Liangbin Xie*, Li Siyao, Wenxiu Sun, Yu Qiao, Chao Dong [paper]

*equal contribution

If you find our work useful, please cite it.

@InProceedings{liu2020enhanced,

author = {Yihao Liu and Liangbin Xie and Li Siyao and Wenxiu Sun and Yu Qiao and Chao Dong},

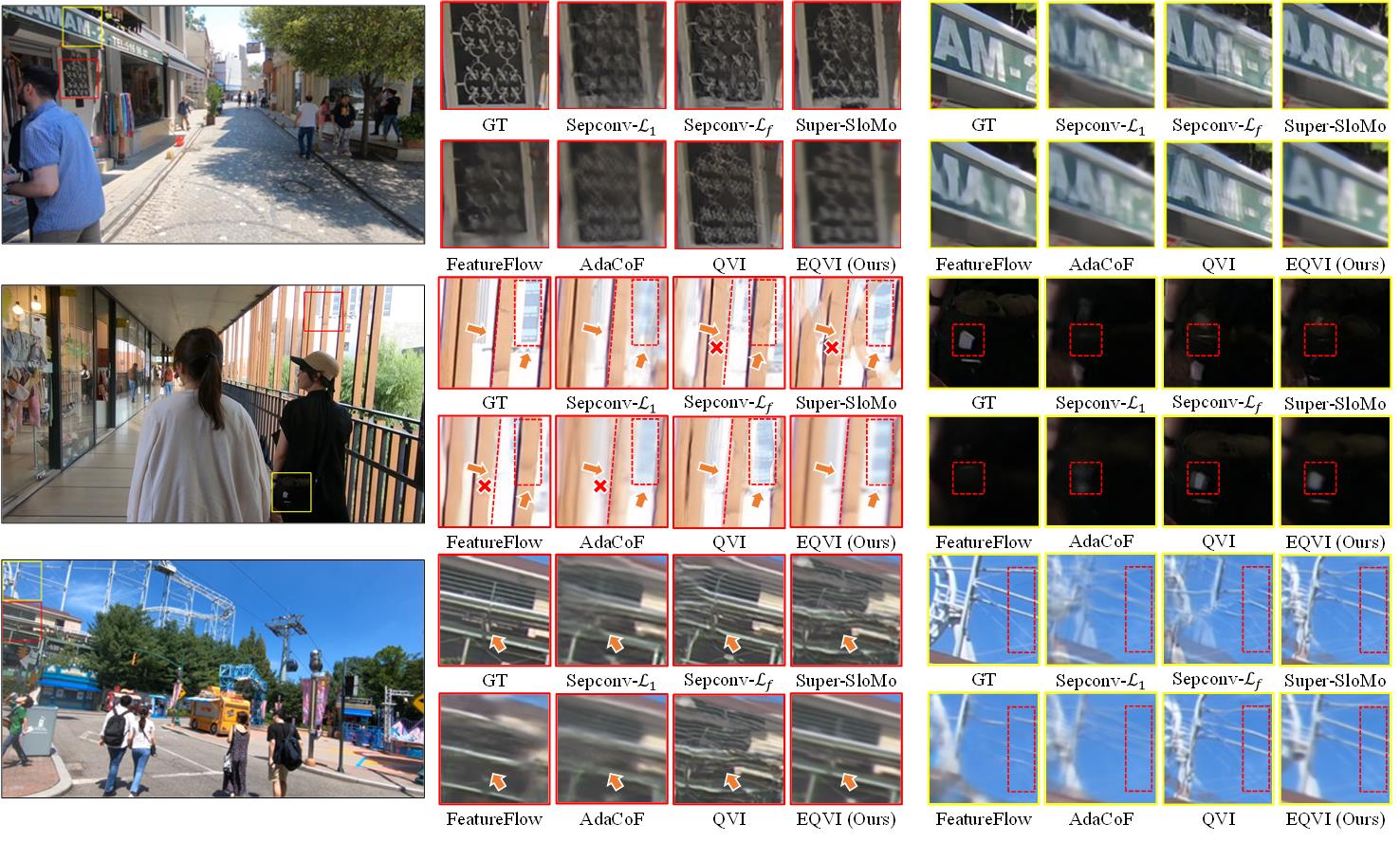

title = {Enhanced quadratic video interpolation},

booktitle = {European Conference on Computer Vision Workshops},

year = {2020},

}

- Three pretrained models trained on REDS_VTSR dataset.

- Inference script for REDS_VTSR validation and testing dataset.

- Upload pretrained models to [Baidu Drive].

- Provide a generic inference script for arbitrary dataset.

- Provide more pretrained models on other training datasets.

- Make a demo video.

- Summarize quantitative comparisons in a Table.

- Provide a script to help with synthesizing a video from given frames.

👷 The list goes on and on...

So many things to do, let me have a break... 🙈

- Python >= 3.6

- Tested on PyTorch==1.2.0 (may work for other versions)

- Tested on Ubuntu 18.04.1 LTS

- NVIDIA GPU + CUDA
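The requirements above can be checked before installing anything else. The snippet below is a minimal sketch, not part of the repository: the function name `check_environment` is hypothetical, and it only reports what it finds rather than enforcing the tested PyTorch version.

```python
import sys


def check_environment():
    """Return a dict describing whether the listed requirements appear to be met."""
    # Python >= 3.6 is the only hard version bound stated in the requirements.
    report = {"python_ok": sys.version_info >= (3, 6)}
    try:
        import torch  # imported lazily so the check itself never crashes
    except ImportError:
        report["torch_version"] = None
        report["cuda_available"] = False
    else:
        # The README was tested with PyTorch 1.2.0; other versions may work.
        report["torch_version"] = torch.__version__
        report["cuda_available"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```

If `cuda_available` is reported as false, the correlation package build and the inference scripts are unlikely to run as described.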

In our implementation, we use ScopeFlow as a pretrained flow estimation module.

Please follow the instructions to install the required correlation package:

cd models/scopeflow_models/correlation_package

python setup.py install

Note:

if you use CUDA>=9.0, just execute the above commands directly;

if you use CUDA==8.0, you need to rename the folder correlation_package_init to correlation_package, and then execute the above commands.

Please refer to ScopeFlow and irr for more information.

- ⚡ Currently we only provide EQVI models trained on the REDS_VTSR dataset.

- ⚡ We empirically find that the training dataset has a significant influence on performance; that is, there is a large dataset bias. When the distributions of the training and testing data do not match, model performance can drop dramatically. Thus, the generalizability of video interpolation methods is worth investigating.

- The pretrained models can be downloaded from Google Drive or Baidu Drive (Token: satj).

- Unzip the downloaded zip file.

unzip checkpoints.zip

There should be four models in the checkpoints folder:

checkpoints/scopeflow/Sintel_ft/checkpoint_best.ckpt # pretrained ScopeFlow model with Sintel finetuning (you can explore other released models of ScopeFlow)

checkpoints/Stage3_RCSN_RQFP/Stage3_checkpoint.ckpt # pretrained Stage3 EQVI model (RCSN + RQFP)

checkpoints/Stage4_MSFuion/Stage4_checkpoint.ckpt # pretrained Stage4 EQVI model (RCSN + RQFP + MS-Fusion)

checkpoints/Stage123_scratch/Stage123_scratch_checkpoint.ckpt # pretrained Stage123 EQVI model from scratch
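To confirm that the archive was unpacked as expected, the four paths listed above can be checked programmatically. This is a minimal sketch that is not part of the repository; the helper name `missing_checkpoints` is hypothetical, and the relative paths are copied verbatim from the listing.

```python
from pathlib import Path

# Paths relative to the checkpoints folder, as listed in this README.
EXPECTED_CHECKPOINTS = [
    "scopeflow/Sintel_ft/checkpoint_best.ckpt",
    "Stage3_RCSN_RQFP/Stage3_checkpoint.ckpt",
    "Stage4_MSFuion/Stage4_checkpoint.ckpt",
    "Stage123_scratch/Stage123_scratch_checkpoint.ckpt",
]


def missing_checkpoints(root="checkpoints"):
    """Return the expected checkpoint files that are absent under root."""
    root = Path(root)
    return [rel for rel in EXPECTED_CHECKPOINTS if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_checkpoints()
    if missing:
        print("Missing checkpoint files:")
        for rel in missing:
            print("  " + rel)
    else:
        print("All four checkpoint files were found.")
```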

| Model | baseline | RCSN | RQFP | MS-Fusion | REDS_VTSR val (30 clips)* | REDS_VTSR5 (5 clips) ** |
|---|---|---|---|---|---|---|
| Stage3 RCSN+RQFP | ✅ | ✅ | ✅ | ❌ | 24.0354 | 24.9633/0.7268 |
| Stage4 MS-Fusion | ✅ | ✅ | ✅ | ✅ | 24.0562 | 24.9706/0.7263 |
| Stage123 scratch | ✅ | ✅ | ✅ | ❌ | 24.0962 | 25.0699/0.7296 |

* The performance is evaluated by x2 interpolation (interpolate 1 frame between two given frames).

** Proposed in our [EQVI paper]. Clips 002, 005, 010, 017 and 025 of the REDS_VTSR validation set.

Clarification:

- We recommend using the Stage123 scratch model (checkpoints/Stage123_scratch/Stage123_scratch_checkpoint.ckpt), since it achieves the best quantitative performance on the REDS_VTSR validation set.

- The Stage3 RCSN+RQFP and Stage4 MS-Fusion models were obtained during the AIM2020 VTSR Challenge via the proposed stage-wise training strategy, which we adopted to accelerate the entire training procedure. Interestingly, after the competition, we trained a model equipped with RCSN and RQFP from scratch and found that it works well and even surpasses our previous models. (However, it takes much more training time.)

The REDS_VTSR training and validation dataset can be found here.

More datasets and models will be included soon.

- Specify the inference settings

Modify configs/config_xxx.py, including testset_root, test_size, test_crop_size, inter_frames, preserve_input, store_path, etc.
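For orientation, the settings named above might look like the following in a Python config file. This is only an illustrative sketch: the field names come from this README, but every value, type, and comment below is an assumption, so consult the config files shipped with the repository for the real format.

```python
# Hypothetical excerpt of configs/config_xxx.py.
# All values are placeholders; the types and meanings are assumptions.

testset_root = "/path/to/REDS_VTSR/val"  # folder holding the input frames (placeholder path)
test_size = (1280, 720)                  # assumed (width, height) used at test time
test_crop_size = (1280, 720)             # assumed (width, height) of the test crop
inter_frames = 3                         # number of frames to interpolate between two inputs
preserve_input = True                    # assumed to be a boolean switch
store_path = "outputs/eqvi_results"      # where the results are stored (placeholder path)
```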

- Execute the following command to start inference:

- For the REDS_VTSR dataset, use interpolate_REDS_VTSR.py to produce interpolated frames that follow the dataset's naming scheme. For example, given the input frames 00000000.png and 00000008.png, if we choose to interpolate 3 frames (inter_frames=3), then the output frames are automatically named 00000002.png, 00000004.png and 00000006.png.

CUDA_VISIBLE_DEVICES=0 python interpolate_REDS_VTSR.py configs/config_xxx.py

Note: interpolate_REDS_VTSR.py is written specifically for the REDS_VTSR dataset.
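The REDS_VTSR naming example above can be reproduced with a few lines of Python. This is a sketch that is not taken from interpolate_REDS_VTSR.py: the function name is hypothetical, and the even-spacing rule is inferred from the single example in this README, so the actual script may behave differently in other cases.

```python
def reds_vtsr_output_names(first_name, second_name, inter_frames):
    """Name the interpolated frames between two numbered input frames.

    Assumes the outputs are evenly spaced between the two frame indices,
    as in the README example (0 and 8 with 3 frames gives 2, 4, 6).
    """
    first_stem, ext = first_name.rsplit(".", 1)
    second_stem = second_name.rsplit(".", 1)[0]
    start, end = int(first_stem), int(second_stem)
    gap = end - start
    if gap % (inter_frames + 1) != 0:
        raise ValueError("frame gap is not divisible by inter_frames + 1")
    step = gap // (inter_frames + 1)
    width = len(first_stem)  # keep the zero padding of the input name
    return [f"{start + step * i:0{width}d}.{ext}" for i in range(1, inter_frames + 1)]


print(reds_vtsr_output_names("00000000.png", "00000008.png", 3))
# ['00000002.png', '00000004.png', '00000006.png']
```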

⚡ We now support testing on arbitrary datasets with the generic inference script interpolate_EQVI.py.

- For other datasets, run the following command. For example, given the input frames 001.png and 002.png, if we choose to interpolate 3 frames (inter_frames=3), then the output frames will be named 001_0.png, 001_1.png and 001_2.png.

CUDA_VISIBLE_DEVICES=0 python interpolate_EQVI.py configs/config_xxx.py

The output results will be stored in the path specified by store_path.
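The naming scheme of the generic script, as described in the example above, can be sketched as follows. The helper name is hypothetical and the code is not taken from interpolate_EQVI.py; it only mirrors the pattern shown for 001.png with inter_frames=3.

```python
def generic_output_names(first_name, inter_frames):
    """Name interpolated frames after the first input frame plus an index suffix."""
    stem, ext = first_name.rsplit(".", 1)
    return [f"{stem}_{i}.{ext}" for i in range(inter_frames)]


print(generic_output_names("001.png", 3))
# ['001_0.png', '001_1.png', '001_2.png']
```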